Introducing FineVision: A Groundbreaking Multimodal Dataset for Vision-Language Model Training

Hugging Face has unveiled FineVision, a comprehensive open-source multimodal dataset crafted to elevate the training of Vision-Language Models (VLMs). Boasting an impressive collection of 17.3 million images, 24.3 million annotated samples, 88.9 million question-answer interactions, and nearly 10 billion answer tokens, FineVision stands as one of the most extensive and meticulously organized datasets publicly accessible for VLM development.

What Sets FineVision Apart in the VLM Landscape?

Unlike many cutting-edge VLMs that depend on proprietary datasets, FineVision offers an open alternative that enhances reproducibility and accessibility for researchers and developers worldwide. Key attributes include:

- Massive Scale and Diverse Domains: Comprises 5 terabytes of curated data spanning nine categories, including General Visual Question Answering (VQA), Optical Character Recognition (OCR) QA, chart and table interpretation, scientific reasoning, image captioning, object grounding and counting, and graphical user interface (GUI) navigation.

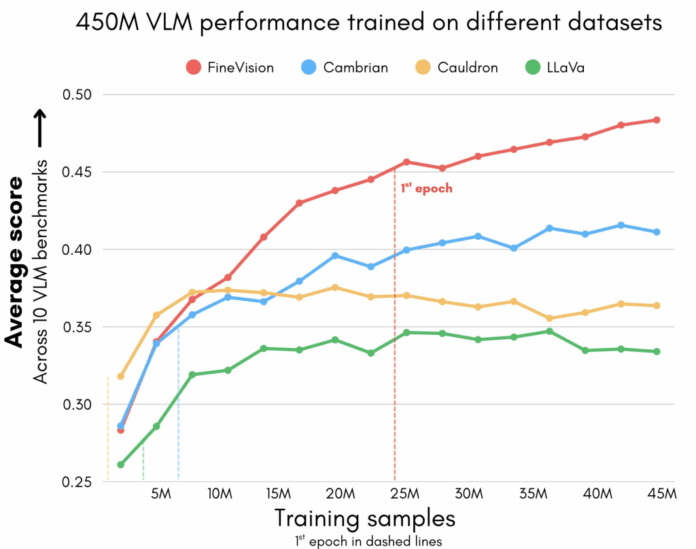

- Superior Benchmark Performance: Models trained on FineVision improve across 11 prominent benchmarks, including AI2D, ChartQA, DocVQA, ScienceQA, and OCRBench, outperforming models trained on other open datasets by up to 46.3% relative to LLaVA, 40.7% relative to Cauldron, and 12.1% relative to Cambrian.

- Expanded Skill Set: Incorporates novel task domains such as GUI navigation, pointing, and counting, broadening the functional capabilities of VLMs beyond traditional captioning and question answering.

Methodical Construction of FineVision

The creation of FineVision involved a rigorous three-phase pipeline:

- Data Aggregation and Enhancement: Over 200 publicly available image-text datasets were consolidated. Text-only datasets were transformed into question-answer pairs to ensure multimodal consistency. Additionally, underrepresented areas like GUI data were enriched through targeted data collection efforts.

- Data Refinement:

  - Removed QA pairs longer than 8,192 tokens.

  - Resized images so that the longest side is at most 2,048 pixels, preserving aspect ratio.

  - Removed corrupted or unusable samples to maintain dataset integrity.

- Quality Assessment: Leveraging advanced evaluators such as Qwen3-32B and Qwen2.5-VL-32B-Instruct, each QA pair was scored on four critical dimensions:

  - Text formatting accuracy

  - Relevance between question and answer

  - Dependence on visual content

  - Alignment between image and question
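The refinement rules above are simple enough to sketch in a few lines. This is a minimal illustration rather than the pipeline Hugging Face actually ran: the function names `keep_qa_pair` and `target_size` are hypothetical, token counts are assumed to come from whatever tokenizer is in use, and the sketch only computes the target dimensions instead of resizing a real image.

```python
from typing import Tuple

MAX_QA_TOKENS = 8_192   # length limit quoted in the article
MAX_IMAGE_SIDE = 2_048  # longest-side limit quoted in the article


def keep_qa_pair(token_count: int, max_tokens: int = MAX_QA_TOKENS) -> bool:
    """Return True if a QA pair does not exceed the token limit."""
    return token_count <= max_tokens


def target_size(width: int, height: int,
                max_side: int = MAX_IMAGE_SIDE) -> Tuple[int, int]:
    """Return (width, height) with the longest side capped at max_side.

    The aspect ratio is preserved; images already within the limit
    are returned unchanged (this sketch never upscales).
    """
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    scale = max_side / longest
    # Guard against a side collapsing to 0 for extremely thin images.
    return max(1, round(width * scale)), max(1, round(height * scale))
```

For example, `target_size(4096, 2048)` returns `(2048, 1024)`, while `target_size(800, 600)` is left at `(800, 600)`.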

FineVision Compared to Other Leading Open Datasets

| Dataset | Images | Samples | QA Turns | Answer Tokens | Data Leakage (%) | Performance Change After Deduplication (%) |
|---|---|---|---|---|---|---|
| Cauldron | 2.0M | 1.8M | 27.8M | 0.3B | 3.05 | -2.39 |
| LLaVA-Vision | 2.5M | 3.9M | 9.1M | 1.0B | 2.15 | -2.72 |
| Cambrian-7M | 5.4M | 7.0M | 12.2M | 0.8B | 2.29 | -2.78 |
| FineVision | 17.3M | 24.3M | 88.9M | 9.5B | 1.02 | -1.45 |

FineVision not only leads in dataset size but also exhibits the lowest rate of data contamination, with only about 1% overlap with benchmark test sets. This minimal leakage ensures more trustworthy evaluation outcomes and reduces the risk of inflated performance metrics.
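A few derived ratios make the table easier to read. The sketch below uses only the figures listed in the table; the helper names are hypothetical, and because the inputs are rounded, the results are rough approximations.

```python
# Figures copied from the comparison table:
# samples and QA turns in millions, answer tokens in billions.
TABLE = {
    "Cauldron":     {"samples_m": 1.8,  "turns_m": 27.8, "tokens_b": 0.3, "leak_pct": 3.05},
    "LLaVA-Vision": {"samples_m": 3.9,  "turns_m": 9.1,  "tokens_b": 1.0, "leak_pct": 2.15},
    "Cambrian-7M":  {"samples_m": 7.0,  "turns_m": 12.2, "tokens_b": 0.8, "leak_pct": 2.29},
    "FineVision":   {"samples_m": 24.3, "turns_m": 88.9, "tokens_b": 9.5, "leak_pct": 1.02},
}


def turns_per_sample(row: dict) -> float:
    """Average number of QA turns attached to one sample."""
    return row["turns_m"] / row["samples_m"]


def tokens_per_turn(row: dict) -> float:
    """Average number of answer tokens per QA turn."""
    return row["tokens_b"] * 1000 / row["turns_m"]


def leakage_ratio(name: str, reference: str = "FineVision") -> float:
    """How many times higher a dataset's leakage is than the reference's."""
    return TABLE[name]["leak_pct"] / TABLE[reference]["leak_pct"]


for name, row in TABLE.items():
    print(f"{name:13s} turns/sample={turns_per_sample(row):6.2f} "
          f"tokens/turn={tokens_per_turn(row):7.1f} "
          f"leakage vs FineVision={leakage_ratio(name):.2f}x")
```

By these figures, the other three datasets show roughly two to three times FineVision's measured overlap with benchmark test sets.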

Insights into FineVision’s Training Performance

- Experimental Setup: Evaluations utilized the nanoVLM architecture (460 million parameters), integrating SmolLM2-360M-Instruct as the language model and SigLIP2-Base-512 as the vision encoder.

- Training Efficiency: Running on 32 NVIDIA H100 GPUs, a complete epoch of 12,000 training steps requires approximately 20 hours.

- Performance Observations:

  - Models trained on FineVision consistently improve with increased exposure to its diverse data, surpassing baseline models after around 12,000 steps.

  - Deduplication tests confirm FineVision’s superior resistance to data leakage compared to Cauldron, LLaVA, and Cambrian datasets.

  - Inclusion of multilingual data subsets, even when paired with monolingual backbones, yields modest performance enhancements, highlighting the value of data diversity over strict language alignment.

  - Attempts at multi-phase training strategies (two or more stages) did not produce consistent gains, underscoring that dataset scale and variety are more influential than complex training schedules.
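The training-efficiency figures translate into a rough compute budget. The arithmetic below takes the quoted numbers (32 GPUs, about 20 hours, 12,000 steps per epoch) at face value; since the duration is approximate, so are the results, and the function names are only illustrative.

```python
GPUS = 32                 # NVIDIA H100 GPUs, as quoted
EPOCH_HOURS = 20          # approximate wall-clock time for one epoch
STEPS_PER_EPOCH = 12_000  # training steps in one epoch


def seconds_per_step(hours: float = EPOCH_HOURS,
                     steps: int = STEPS_PER_EPOCH) -> float:
    """Average wall-clock seconds spent on one training step."""
    return hours * 3600 / steps


def gpu_hours_per_epoch(gpus: int = GPUS, hours: float = EPOCH_HOURS) -> float:
    """Total GPU-hours consumed by one epoch."""
    return gpus * hours


print(seconds_per_step())     # about 6 seconds per step
print(gpu_hours_per_epoch())  # about 640 GPU-hours per epoch
```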

Why FineVision Establishes a New Benchmark for VLM Datasets

- Significant Performance Improvement: Delivers over 20% average gains across more than 10 evaluation benchmarks compared to existing open datasets.

- Unmatched Dataset Scale: Contains over 17 million images, 24 million samples, and nearly 10 billion answer tokens, enabling richer model training.

- Broadened Functional Scope: Supports advanced tasks such as GUI navigation, object counting, pointing, and document reasoning.

- Minimal Data Leakage: Shows about 1% contamination (1.02%), substantially lower than the roughly 2-3% measured for the other datasets compared.

- Open Accessibility: Fully open-source and readily available on the Hugging Face Hub, easily integrated via the `datasets` library.
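Loading a slice of the data with the `datasets` library might look like the sketch below. The article does not give a repository id or subset names, so the default `repo_id` here is an assumption to verify on the Hub, and the wrapper function is hypothetical. Streaming is used so that the 5 TB corpus is not downloaded up front.

```python
def stream_finevision_subset(subset: str,
                             repo_id: str = "HuggingFaceM4/FineVision",
                             split: str = "train"):
    """Return a streaming iterator over one FineVision subset.

    repo_id is an assumed Hub location and subset must be a configuration
    name that actually exists there; check both on the dataset page.
    """
    # Imported lazily so this module loads even without `datasets`
    # installed (pip install datasets).
    from datasets import load_dataset

    return load_dataset(repo_id, name=subset, split=split, streaming=True)
```

Calling `next(iter(stream_finevision_subset(subset_name)))`, with `subset_name` set to a configuration listed on the dataset page, should then yield a single sample without fetching the rest of the subset.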

Final Thoughts

FineVision represents a pivotal leap forward in the realm of open multimodal datasets. Its vast scale, meticulous curation, and transparent quality metrics provide a solid, reproducible foundation for training next-generation Vision-Language Models. By reducing reliance on closed proprietary data, FineVision empowers the research community to develop more capable, versatile models that advance fields such as document understanding, visual reasoning, and interactive multimodal AI applications.