Mastering Advanced Computer Vision with TorchVision v2 and Modern Deep Learning Techniques

This guide works through advanced computer vision techniques using TorchVision's v2 transforms, modern augmentation methods, and a hardened training setup. We build an augmentation pipeline, add MixUp and CutMix, define a convolutional neural network (CNN) with an attention gate, and implement a training loop with OneCycleLR scheduling and gradient clipping. Everything runs on Google Colab, so each technique can be tried hands-on.

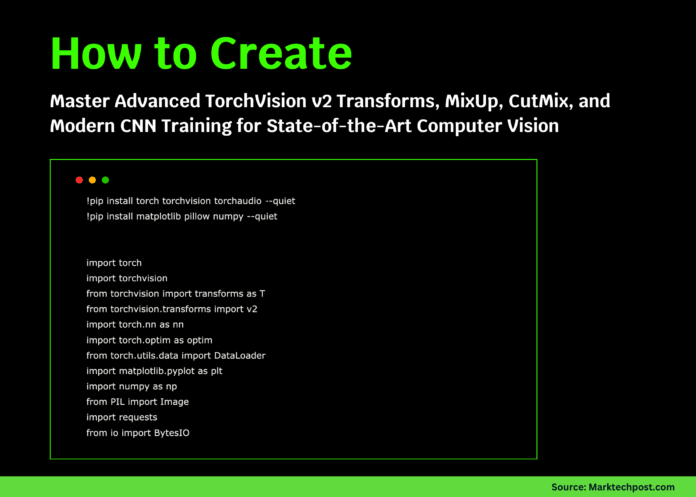

Setting Up the Environment and Essential Libraries

    # Notebook shell commands; run these in a Colab or Jupyter cell.
    !pip install torch torchvision torchaudio --quiet
    !pip install matplotlib pillow numpy --quiet

    import torch
    import torchvision
    from torchvision.transforms import v2
    import torch.nn as nn
    import torch.optim as optim
    from torch.utils.data import DataLoader
    import matplotlib.pyplot as plt
    import numpy as np
    from PIL import Image

    print(f"PyTorch version: {torch.__version__}")
    print(f"TorchVision version: {torchvision.__version__}")

We start by installing the necessary packages and importing the key modules: PyTorch, the TorchVision v2 transforms, NumPy, PIL, and Matplotlib. This setup prepares us to build and experiment with the pipelines that follow.

Designing a Dynamic Augmentation Pipeline for Robust Training

    class DynamicAugmentationPipeline:
        def __init__(self, target_size=224, is_training=True):
            self.target_size = target_size
            self.is_training = is_training

            # Shared first steps: convert the input to a uint8 image tensor.
            base_steps = [
                v2.ToImage(),
                v2.ToDtype(torch.uint8, scale=True),
            ]

            if is_training:
                self.pipeline = v2.Compose([
                    *base_steps,
                    # Resize slightly larger than the target, then crop back down.
                    v2.Resize((target_size + 30, target_size + 30)),
                    v2.RandomResizedCrop(target_size, scale=(0.75, 1.0), ratio=(0.85, 1.15)),
                    v2.RandomHorizontalFlip(p=0.5),
                    v2.RandomRotation(degrees=20),
                    v2.ColorJitter(brightness=0.5, contrast=0.5, saturation=0.5, hue=0.15),
                    v2.RandomGrayscale(p=0.15),
                    v2.GaussianBlur(kernel_size=5, sigma=(0.2, 2.5)),
                    v2.RandomPerspective(distortion_scale=0.15, p=0.35),
                    v2.RandomAffine(degrees=12, translate=(0.12, 0.12), scale=(0.85, 1.15)),
                    # Convert to float in [0, 1], then normalize with ImageNet statistics.
                    v2.ToDtype(torch.float32, scale=True),
                    v2.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
                ])
            else:
                # Validation: deterministic resize and normalization only.
                self.pipeline = v2.Compose([
                    *base_steps,
                    v2.Resize((target_size, target_size)),
                    v2.ToDtype(torch.float32, scale=True),
                    v2.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
                ])

        def __call__(self, img):
            return self.pipeline(img)

This augmentation pipeline adapts to the mode: training or validation. During training, it applies a rich set of transformations, including resizing, random cropping, flipping, rotation, color adjustments, blurring, perspective warping, and affine transformations, to diversify the dataset and improve model generalization. For validation, it reduces preprocessing to resizing and normalization so that evaluation is deterministic.
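To make the last two steps of both pipelines concrete, the sketch below reproduces their per-pixel arithmetic in plain Python: `v2.ToDtype(torch.float32, scale=True)` maps uint8 values from [0, 255] to [0, 1], and `v2.Normalize` then subtracts the channel mean and divides by the channel standard deviation. The helper name `normalize_pixel` is hypothetical and exists only for illustration.

```python
# ImageNet channel statistics used by the pipeline (R, G, B).
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize_pixel(value_uint8, channel):
    """Hypothetical helper: mimic ToDtype(float32, scale=True) followed by Normalize."""
    scaled = value_uint8 / 255.0  # uint8 [0, 255] -> float [0, 1]
    return (scaled - MEAN[channel]) / STD[channel]


# Darkest and brightest possible red-channel values after normalization.
red_min = normalize_pixel(0, channel=0)
red_max = normalize_pixel(255, channel=0)
print(f"red channel range: [{red_min:.3f}, {red_max:.3f}]")
```

The normalized values are roughly centered on zero and span a few units in each direction, which is the input range that models pretrained with these statistics expect.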

Implementing MixUp and CutMix for Enhanced Data Diversity

    class MixupCutmixAugmentor:
        def __init__(self, alpha_mixup=1.0, alpha_cutmix=1.0, apply_prob=0.6):
            self.alpha_mixup = alpha_mixup
            self.alpha_cutmix = alpha_cutmix
            self.apply_prob = apply_prob

        def _random_bbox(self, size, lam):
            # size is (N, C, H, W); the box targets (1 - lam) of the image area.
            height, width = size[2], size[3]
            cut_ratio = np.sqrt(1. - lam)
            cut_h = int(height * cut_ratio)
            cut_w = int(width * cut_ratio)
            center_y = np.random.randint(height)
            center_x = np.random.randint(width)
            # Clip so the box stays inside the image.
            y1 = int(np.clip(center_y - cut_h // 2, 0, height))
            x1 = int(np.clip(center_x - cut_w // 2, 0, width))
            y2 = int(np.clip(center_y + cut_h // 2, 0, height))
            x2 = int(np.clip(center_x + cut_w // 2, 0, width))
            return y1, x1, y2, x2

        def mixup(self, inputs, targets):
            batch_size = inputs.size(0)
            lam = float(np.random.beta(self.alpha_mixup, self.alpha_mixup)) if self.alpha_mixup > 0 else 1.0
            shuffled_indices = torch.randperm(batch_size, device=inputs.device)
            # Blend each image with a randomly chosen partner from the same batch.
            mixed_inputs = lam * inputs + (1 - lam) * inputs[shuffled_indices]
            target_a, target_b = targets, targets[shuffled_indices]
            return mixed_inputs, target_a, target_b, lam

        def cutmix(self, inputs, targets):
            batch_size = inputs.size(0)
            lam = float(np.random.beta(self.alpha_cutmix, self.alpha_cutmix)) if self.alpha_cutmix > 0 else 1.0
            shuffled_indices = torch.randperm(batch_size, device=inputs.device)
            target_a, target_b = targets, targets[shuffled_indices]
            y1, x1, y2, x2 = self._random_bbox(inputs.size(), lam)
            # Work on a copy so the caller's batch is not modified in place.
            inputs = inputs.clone()
            inputs[:, :, y1:y2, x1:x2] = inputs[shuffled_indices, :, y1:y2, x1:x2]
            # Recompute lambda from the area actually pasted (the box may have been clipped).
            lam_adjusted = 1.0 - ((y2 - y1) * (x2 - x1) / (inputs.size(2) * inputs.size(3)))
            return inputs, target_a, target_b, lam_adjusted

        def __call__(self, inputs, targets):
            # Skip augmentation entirely with probability (1 - apply_prob).
            if np.random.rand() > self.apply_prob:
                return inputs, targets, targets, 1.0
            if np.random.rand() < 0.5:
                return self.mixup(inputs, targets)
            else:
                return self.cutmix(inputs, targets)

This module probabilistically applies MixUp or CutMix, either blending whole images or swapping a rectangular patch between samples in the batch. For CutMix, it recomputes the label-mixing coefficient from the patch area that was actually pasted, because clipping at the image border can shrink the box. Training on mixed labels encourages smoother decision boundaries and improves robustness.
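The reason the CutMix coefficient is recomputed can be seen with a small worked example. The sketch below repeats the box arithmetic in plain Python with a fixed box center instead of a random one; the function names `cutmix_box` and `adjusted_lambda` are hypothetical and used only for illustration.

```python
import math


def cutmix_box(height, width, lam, center_y, center_x):
    """Return (y1, x1, y2, x2) for a box that targets (1 - lam) of the image area."""
    cut_ratio = math.sqrt(1.0 - lam)
    cut_h, cut_w = int(height * cut_ratio), int(width * cut_ratio)
    # Clip to the image, which can shrink the box when the center is near an edge.
    y1 = max(center_y - cut_h // 2, 0)
    y2 = min(center_y + cut_h // 2, height)
    x1 = max(center_x - cut_w // 2, 0)
    x2 = min(center_x + cut_w // 2, width)
    return y1, x1, y2, x2


def adjusted_lambda(box, height, width):
    """Fraction of pixels that still come from the original image."""
    y1, x1, y2, x2 = box
    return 1.0 - (y2 - y1) * (x2 - x1) / (height * width)


# A sampled lam of 0.75 asks for a box covering 25% of a 224x224 image.
centered = cutmix_box(224, 224, 0.75, center_y=112, center_x=112)
corner = cutmix_box(224, 224, 0.75, center_y=0, center_x=0)
print(adjusted_lambda(centered, 224, 224))  # 0.75: the box fits entirely
print(adjusted_lambda(corner, 224, 224))    # 0.9375: three quarters of the box was clipped
```

When the box is centered on a corner, only a quarter of it lands inside the image, so using the sampled value 0.75 for the labels would overstate how much of the second image is present.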

Constructing a Contemporary CNN with Attention Mechanisms

    class AttentionEnhancedCNN(nn.Module):
        def __init__(self, num_classes=10, dropout_rate=0.3):
            super().__init__()
            # Four conv stages; the last three halve the spatial resolution.
            self.layer1 = self._conv_block(3, 64)
            self.layer2 = self._conv_block(64, 128, downsample=True)
            self.layer3 = self._conv_block(128, 256, downsample=True)
            self.layer4 = self._conv_block(256, 512, downsample=True)
            self.global_pool = nn.AdaptiveAvgPool2d(1)
            # Channel gate: produces one weight in (0, 1) per pooled channel.
            self.attention_gate = nn.Sequential(
                nn.Linear(512, 256),
                nn.ReLU(),
                nn.Linear(256, 512),
                nn.Sigmoid()
            )
            self.classifier = nn.Sequential(
                nn.Dropout(dropout_rate),
                nn.Linear(512, 256),
                nn.BatchNorm1d(256),
                nn.ReLU(),
                nn.Dropout(dropout_rate / 2),
                nn.Linear(256, num_classes)
            )

        def _conv_block(self, in_channels, out_channels, downsample=False):
            # Two 3x3 conv + BatchNorm + ReLU layers; the first conv optionally strides by 2.
            stride = 2 if downsample else 1
            return nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1),
                nn.BatchNorm2d(out_channels),
                nn.ReLU(inplace=True),
                nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1),
                nn.BatchNorm2d(out_channels),
                nn.ReLU(inplace=True)
            )

        def forward(self, x):
            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)
            x = self.global_pool(x)
            x = torch.flatten(x, 1)
            # Reweight the pooled channel features before classification.
            attn_weights = self.attention_gate(x)
            x = x * attn_weights
            return self.classifier(x)

This CNN stacks four convolutional blocks, three of which downsample, followed by global average pooling. A learned attention gate then produces one weight per channel and rescales the 512 pooled features before classification, letting the network emphasize the most informative channels. Dropout and batch normalization layers are included to improve generalization and training stability.
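The gating step can be examined in isolation. The following is a minimal sketch, assuming a random stand-in feature map of shape (4, 512, 28, 28); 28x28 is the spatial size a 224x224 input reaches after three stride-2 blocks. It rebuilds the same gate layout and checks that each channel receives a weight strictly between 0 and 1.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Same layer layout as the attention gate described above, for 512 pooled channels.
gate = nn.Sequential(
    nn.Linear(512, 256),
    nn.ReLU(),
    nn.Linear(256, 512),
    nn.Sigmoid(),
)

# Stand-in for the output of the last conv block: (batch, channels, H, W).
feature_map = torch.randn(4, 512, 28, 28)
pooled = torch.flatten(nn.AdaptiveAvgPool2d(1)(feature_map), 1)  # shape (4, 512)

with torch.no_grad():
    weights = gate(pooled)    # one weight per channel, produced by the sigmoid
    gated = pooled * weights  # each channel is scaled down, never amplified

print(pooled.shape, weights.shape, gated.shape)
print(float(weights.min()), float(weights.max()))
```

Because the gate acts on the pooled vector, it reweights channels rather than spatial positions, which is similar in spirit to squeeze-and-excitation style channel attention.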

Advanced Training Loop with Adaptive Learning and Gradient Management

    class RobustTrainer:
        def __init__(self, model, device=None, epochs=10, steps_per_epoch=100):
            self.device = device or ('cuda' if torch.cuda.is_available() else 'cpu')
            self.model = model.to(self.device)
            self.augmentor = MixupCutmixAugmentor()
            self.optimizer = optim.AdamW(self.model.parameters(), lr=1e-3, weight_decay=1e-4)
            # OneCycleLR needs the total step budget up front; set epochs and
            # steps_per_epoch to match the real run (defaults keep the original 10 x 100).
            self.scheduler = optim.lr_scheduler.OneCycleLR(
                self.optimizer, max_lr=1e-2, epochs=epochs, steps_per_epoch=steps_per_epoch
            )
            self.loss_fn = nn.CrossEntropyLoss()

        def _compute_mixup_loss(self, predictions, targets_a, targets_b, lam):
            # Interpolate the loss with the same coefficient used to mix the inputs.
            return lam * self.loss_fn(predictions, targets_a) + (1 - lam) * self.loss_fn(predictions, targets_b)

        def train_one_epoch(self, dataloader):
            self.model.train()
            cumulative_loss = 0
            correct_preds = 0
            total_samples = 0

            for batch_idx, (inputs, labels) in enumerate(dataloader):
                inputs, labels = inputs.to(self.device), labels.to(self.device)
                inputs, targets_a, targets_b, lam = self.augmentor(inputs, labels)

                self.optimizer.zero_grad()
                outputs = self.model(inputs)

                # lam == 1.0 means the batch was left unmixed.
                if lam != 1.0:
                    loss = self._compute_mixup_loss(outputs, targets_a, targets_b, lam)
                else:
                    loss = self.loss_fn(outputs, labels)

                loss.backward()
                # Cap the global gradient norm at 1.0 before the optimizer step.
                torch.nn.utils.clip_grad_norm_(self.model.parameters(), max_norm=1.0)
                self.optimizer.step()
                self.scheduler.step()  # OneCycleLR is stepped once per batch

                cumulative_loss += loss.item()
                _, predicted = outputs.max(1)
                total_samples += labels.size(0)

                if lam != 1.0:
                    # Mixed batches: credit matches against both label sets, weighted by lam.
                    correct_preds += (lam * predicted.eq(targets_a).sum().item() + (1 - lam) * predicted.eq(targets_b).sum().item())
                else:
                    correct_preds += predicted.eq(labels).sum().item()

            avg_loss = cumulative_loss / len(dataloader)
            accuracy = 100. * correct_preds / total_samples
            return avg_loss, accuracy

This training routine pairs the AdamW optimizer with a OneCycleLR scheduler, which warms the learning rate up to a peak and then anneals it, stepping once per batch. It applies MixUp or CutMix to each batch, computes the correspondingly interpolated loss, and clips the gradient norm at 1.0 to guard against exploding gradients. The loop returns the average loss and a lambda-weighted accuracy for the epoch.
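To see what the scheduler does to the learning rate, the sketch below traces OneCycleLR over a short run. The tiny `nn.Linear` model and the 50-step budget are assumptions chosen to keep the example fast; the optimizer and `max_lr` settings mirror the trainer.

```python
import torch.nn as nn
import torch.optim as optim

model = nn.Linear(4, 2)  # tiny stand-in model, for illustration only
optimizer = optim.AdamW(model.parameters(), lr=1e-3, weight_decay=1e-4)

total_steps = 50  # assumed budget; in a real run this is epochs * steps_per_epoch
scheduler = optim.lr_scheduler.OneCycleLR(optimizer, max_lr=1e-2, total_steps=total_steps)

lrs = [scheduler.get_last_lr()[0]]
for _ in range(total_steps - 1):
    optimizer.step()   # no gradients here; called only to keep the step order valid
    scheduler.step()
    lrs.append(scheduler.get_last_lr()[0])

peak_step = lrs.index(max(lrs))
print(f"start={lrs[0]:.2e} peak={max(lrs):.2e} at step {peak_step} end={lrs[-1]:.2e}")
```

Two details are worth noting. With the default settings, the schedule starts at `max_lr / 25`, so the `lr=1e-3` passed to AdamW is overridden. The scheduler is also built for a fixed number of steps, and calling `step()` more times than that raises a `ValueError`, so the configured budget should match the number of batches actually trained.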

End-to-End Demonstration of Advanced Techniques

    def run_demo():
        batch_size = 16
        num_classes = 10

        # Random tensors stand in for a real dataset.
        dummy_inputs = torch.randn(batch_size, 3, 224, 224)
        dummy_labels = torch.randint(0, num_classes, (batch_size,))

        augmentation = DynamicAugmentationPipeline(is_training=True)
        model = AttentionEnhancedCNN(num_classes=num_classes)
        trainer = RobustTrainer(model)  # moves the model to trainer.device

        print("🚀 Advanced Deep Learning Pipeline Demo")
        print("=" * 50)

        print("\n1. Augmentation Pipeline Preview:")
        # Clip the random values to [0, 1] before converting to an 8-bit image.
        sample_array = dummy_inputs[0].permute(1, 2, 0).numpy().clip(0, 1)
        sample_img = Image.fromarray((sample_array * 255).astype(np.uint8))
        augmented_img = augmentation(sample_img)
        print(f" Original tensor shape: {dummy_inputs[0].shape}")
        print(f" Augmented tensor shape: {augmented_img.shape}")
        print(" Transformations include resizing, cropping, flipping, color jitter, blur, perspective, and affine transforms.")

        print("\n2. MixUp and CutMix Application:")
        mixcut = MixupCutmixAugmentor()
        mixed_inputs, target_a, target_b, lam = mixcut(dummy_inputs, dummy_labels)
        # The augmentor does not report which method ran; lam == 1.0 means it was skipped.
        technique = "none (skipped)" if lam == 1.0 else "MixUp or CutMix"
        print(f" Mixed batch shape: {mixed_inputs.shape}")
        print(f" Lambda coefficient: {lam:.3f}")
        print(f" Applied technique: {technique}")

        print("\n3. Model Architecture Overview:")
        model.eval()
        with torch.no_grad():
            # Inputs must live on the same device as the model.
            output = model(dummy_inputs.to(trainer.device))
        total_params = sum(p.numel() for p in model.parameters())
        print(f" Input shape: {dummy_inputs.shape}")
        print(f" Output shape: {output.shape}")
        print(" Features: Convolutional blocks, attention gating, global average pooling")
        print(f" Total parameters: {total_params:,}")

        print("\n4. Simulated Training Epoch:")
        # A one-batch list is enough to act as a dataloader here.
        dummy_loader = [(dummy_inputs, dummy_labels)]
        loss, accuracy = trainer.train_one_epoch(dummy_loader)
        print(f" Training loss: {loss:.4f}")
        print(f" Training accuracy: {accuracy:.2f}%")
        print(f" Current learning rate: {trainer.scheduler.get_last_lr()[0]:.6f}")

        print("\n✅ Demo completed successfully!")
        print("This demonstration highlights:")
        print("• Advanced data augmentation with TorchVision v2")
        print("• MixUp and CutMix for improved generalization")
        print("• Modern CNN with attention mechanisms")
        print("• Sophisticated training loop with OneCycleLR and gradient clipping")

    if __name__ == "__main__":
        run_demo()

In this short demonstration, we run one image through the augmentation pipeline, apply MixUp/CutMix to a batch, verify the model's forward pass, and simulate a single-batch training epoch. Because the inputs are random tensors, the reported loss and accuracy are not meaningful; the test only confirms that all components work together before scaling to a real dataset.

Summary

We have built a complete deep learning workflow that combines advanced augmentation, a modern CNN architecture with an attention gate, and a robust training strategy. TorchVision v2 transforms, MixUp, CutMix, and OneCycleLR scheduling are all techniques aimed at better generalization, and assembling them end to end gives practical experience with contemporary computer vision practice. The demo here uses random data, so measuring any accuracy gain requires training on a real dataset.