
Unveiling the True Expense of AI: The GPU Compute Challenge

Training advanced AI models demands enormous GPU resources, often costing millions of dollars. This expenditure restricts innovation, limits the scope of experimentation, and slows the pace of AI progress. For instance, training a contemporary language model, or a vision transformer on a dataset such as ImageNet-1K, can consume thousands of GPU-hours. That puts it out of reach for many startups and research labs, and makes it costly even for major technology firms.

Revolutionizing Optimization: Slash Your GPU Costs by 87% with a Simple Change

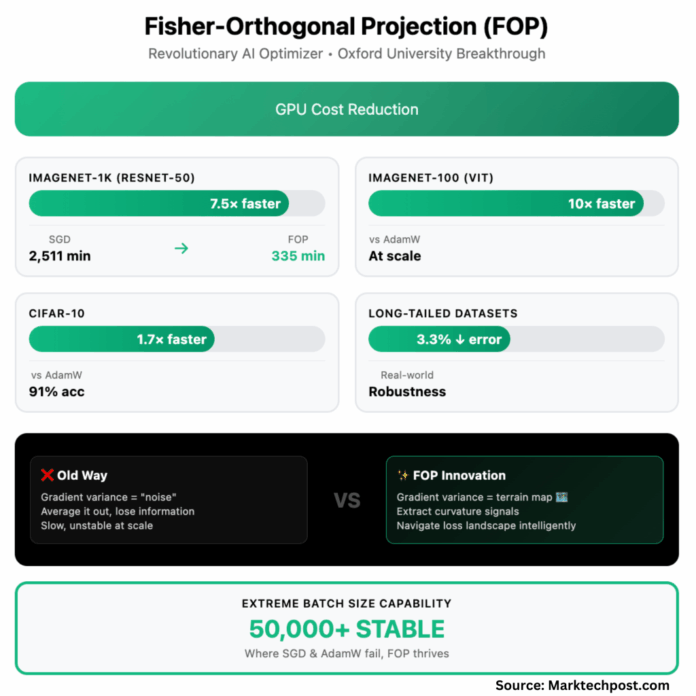

Fisher-Orthogonal Projection (FOP) is an optimizer developed by researchers at the University of Oxford. It rethinks how gradient information is used during training, and the reported results point to large reductions in GPU usage. This article explains why the traditional view of gradient noise is misleading, how FOP reads gradient variation as a map of the loss landscape, and what this means for AI development and deployment.

Rethinking Model Training: The Limitations of Conventional Gradient Descent

Most modern AI training relies on gradient descent, where model parameters are iteratively adjusted to minimize error. Training typically uses mini-batches (small subsets of the data) and estimates the gradient direction by averaging the gradients of the individual samples. However, each data point's gradient differs, and these differences are often dismissed as mere noise. In fact, this so-called noise carries directional cues about the geometry of the loss surface that optimization has to navigate.
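To make the averaging step concrete, here is a minimal sketch on a toy problem. The one-parameter model `y = w * x`, the squared-error loss, and the data values are all hypothetical choices for illustration and do not come from the FOP work. The sketch prints the per-sample gradients, the mean that a plain mini-batch step would use, and the spread across samples that averaging discards.

```python
from statistics import mean, pvariance

def per_sample_grads(w, xs, ys):
    """Gradient of 0.5 * (w*x - y)**2 with respect to w, one value per sample."""
    return [(w * x - y) * x for x, y in zip(xs, ys)]

# Hypothetical toy mini-batch for a one-parameter model y = w * x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.5, 5.5, 8.5]
w = 1.0

grads = per_sample_grads(w, xs, ys)
mean_grad = mean(grads)          # the direction a plain mini-batch step follows
grad_spread = pvariance(grads)   # the variation that averaging throws away

print("per-sample gradients:", grads)
print("mean gradient:", mean_grad)
print("variance across samples:", grad_spread)
```

All four samples agree on the sign of the update, but they disagree strongly on its size. That disagreement is the signal the rest of this article is about.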

FOP: Navigating the Loss Landscape with Precision

FOP innovates by treating the variability among gradients within a batch not as random noise but as a detailed topographical map. It combines the average gradient with an orthogonal projection of the gradient differences, creating a curvature-aware adjustment that guides the optimizer more intelligently. This approach helps the optimizer avoid pitfalls like steep cliffs or plateaus, enabling smoother and faster convergence.

Mechanics of FOP:

- The mean gradient indicates the primary direction for parameter updates.

- The gradient variance acts as a sensor, detecting whether the optimization path is flat or surrounded by sharp gradients.

- FOP integrates these signals by adding a curvature-sensitive step orthogonal to the main gradient, preventing conflicting updates and overshooting.

- The outcome is a more stable and accelerated training process, especially effective at very large batch sizes where traditional optimizers like SGD, AdamW, and even KFAC struggle.

In technical terms, FOP applies a Fisher-orthogonal correction atop natural gradient descent, preserving intra-batch gradient variance to capture local curvature information that standard averaging discards.
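The mechanics above can be sketched in a few lines. This is an illustrative reading of the description, not the authors' implementation. It assumes the batch is split into two sub-batches with gradients `g1` and `g2`, that the Fisher matrix is approximated by a positive diagonal `fisher_diag`, and that the mixing weight `alpha` is fixed. The function name and all parameters are hypothetical.

```python
def fisher_inner(u, v, fisher_diag):
    """Inner product u^T F v for a diagonal Fisher approximation F."""
    return sum(f * a * b for f, a, b in zip(fisher_diag, u, v))

def fop_direction(g1, g2, fisher_diag, alpha=0.5, eps=1e-12):
    """Sketch of a Fisher-orthogonal update direction.

    Assumptions (not from the article): two sub-batch gradients,
    a positive diagonal Fisher approximation, and a fixed alpha.
    """
    # Mean gradient: the primary direction.
    g_avg = [(a + b) / 2 for a, b in zip(g1, g2)]
    # Difference between sub-batches: the intra-batch variation.
    g_diff = [a - b for a, b in zip(g1, g2)]
    # Remove the part of g_diff that lies along g_avg in the Fisher metric.
    coef = fisher_inner(g_diff, g_avg, fisher_diag) / (
        fisher_inner(g_avg, g_avg, fisher_diag) + eps
    )
    g_perp = [d - coef * a for d, a in zip(g_diff, g_avg)]
    # Combine the two signals, then precondition with F^{-1}
    # (elementwise division because F is diagonal here).
    combined = [a + alpha * p for a, p in zip(g_avg, g_perp)]
    step = [c / f for c, f in zip(combined, fisher_diag)]
    return step, g_avg, g_perp

# Hypothetical two-parameter example.
fisher_diag = [2.0, 1.0]
step, g_avg, g_perp = fop_direction([1.0, 0.0], [0.0, 1.0], fisher_diag)
print("update direction:", step)
print("Fisher inner product of g_perp and g_avg:",
      fisher_inner(g_perp, g_avg, fisher_diag))
```

Because the correction term is orthogonal to the mean gradient in the Fisher metric, it adds information without pulling against the main direction. A real optimizer would use a richer Fisher approximation (KFAC-style factors, for example) and would likely choose `alpha` adaptively.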

Empirical Gains: FOP Accelerates Training by up to 7.5x on ImageNet-1K

The performance improvements with FOP are substantial and consistent across benchmarks:

- ImageNet-1K (ResNet-50): Achieves 75.9% validation accuracy in 40 epochs and 335 minutes, compared to 71 epochs and 2,511 minutes with SGD, a 7.5-fold reduction in training time.

- CIFAR-10: Outperforms AdamW by 1.7x and KFAC by 1.3x in speed. At massive batch sizes (up to 50,000), only FOP attains 91% accuracy, while others fail.

- ImageNet-100 (Vision Transformer): Delivers up to 10x faster training than AdamW and twice as fast as KFAC at large batch scales.

- Imbalanced Datasets: Cuts Top-1 error rates by 2.3-3.3% over strong baselines, a critical advantage for real-world, skewed data distributions.
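The headline figures follow directly from the ImageNet-1K wall-clock times quoted above. The short check below reproduces them. Reading the time saving as a cost saving assumes that GPU cost scales with wall-clock time on the same hardware, which is an assumption of this sketch.

```python
# Wall-clock minutes to reach 75.9% validation accuracy (ResNet-50, ImageNet-1K).
sgd_minutes = 2511
fop_minutes = 335

speedup = sgd_minutes / fop_minutes          # how many times faster
time_saved = 1 - fop_minutes / sgd_minutes   # fraction of training time removed

print(f"speedup: {speedup:.1f}x")       # speedup: 7.5x
print(f"time saved: {time_saved:.0%}")  # time saved: 87%
```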

Memory and Scalability: While FOP’s peak GPU memory usage is higher for small tasks, it aligns with KFAC when distributed across multiple GPUs. Importantly, FOP maintains near-linear scaling in training speed as batch sizes and GPU counts increase, a feat unmatched by existing optimizers.

Implications for Industry, Developers, and Researchers

- For Businesses: A reduction in training cost of up to 87% (the ImageNet-1K wall-clock saving, assuming cost scales with GPU time) changes the economics of AI projects. It frees budget for larger models and faster iteration cycles, which accelerates innovation and competitive advantage.

- For Practitioners: FOP integrates seamlessly into existing PyTorch pipelines with minimal code changes and no additional hyperparameter tuning, making it accessible and practical for immediate adoption.

- For Researchers: FOP challenges the traditional notion of gradient noise, highlighting the importance of intra-batch variance. Its robustness on imbalanced datasets also opens new avenues for deploying AI in complex, real-world scenarios.

Transforming Large-Batch Training: From Challenge to Opportunity

Historically, increasing batch sizes has destabilized optimizers like SGD and AdamW, and even advanced methods like KFAC have struggled. FOP flips this paradigm by harnessing the rich information contained in gradient variability, enabling stable and efficient training at unprecedented batch scales. This shift represents a fundamental change in optimization philosophy: what was once discarded as noise is now recognized as a valuable guide through the loss landscape.

Comparative Overview: FOP Versus Traditional Optimizers

| Performance Metric | SGD / AdamW | KFAC | FOP (This Work) |
|---|---|---|---|
| Training Speed | Baseline | 1.5-2x faster | Up to 7.5x faster |
| Stability with Large Batches | Unstable | Requires damping, often stalls | Stable at extreme batch sizes |
| Robustness on Imbalanced Data | Low | Moderate | Superior |
| Ease of Integration | Yes | Yes | Yes, pip-installable |
| GPU Memory Usage (Distributed) | Low | Moderate | Moderate |

Conclusion: FOP as a Catalyst for Scalable, Cost-Effective AI Training

Fisher-Orthogonal Projection represents a significant advancement in AI optimization, enabling up to 7.5 times faster training on challenging datasets like ImageNet-1K while improving model generalization and robustness. By leveraging gradient variance as a meaningful signal rather than dismissing it as noise, FOP unlocks new efficiencies and capabilities in large-scale machine learning. This innovation not only slashes GPU compute expenses by as much as 87% but also empowers researchers and enterprises to develop larger, more sophisticated models with greater speed and reliability. With its straightforward integration into existing frameworks and minimal tuning requirements, FOP paves the way for the next era of efficient, scalable AI development.