Developing a Meta-Cognitive Agent for Adaptive Reasoning Depth

This guide shows how to build a meta-cognitive agent that adjusts its own reasoning depth. Rather than treating problem-solving as a fixed process, we treat reasoning as a continuum: from rapid heuristics, through step-by-step chain-of-thought analysis, to exact, tool-assisted computation. A trained neural meta-controller lets the agent pick the reasoning mode best suited to each task.

Balancing Accuracy, Efficiency, and Reasoning Budget

The agent’s objective is to trade off solution accuracy against computational cost under a finite reasoning budget. To do this, it monitors its own internal state, such as the remaining budget and its recent errors, and adapts its problem-solving strategy from one task to the next. Throughout the tutorial, we experiment with the individual components, look for emergent patterns, and examine how this self-monitoring gives rise to meta-cognitive behavior.

Task Environment and Reasoning Modes

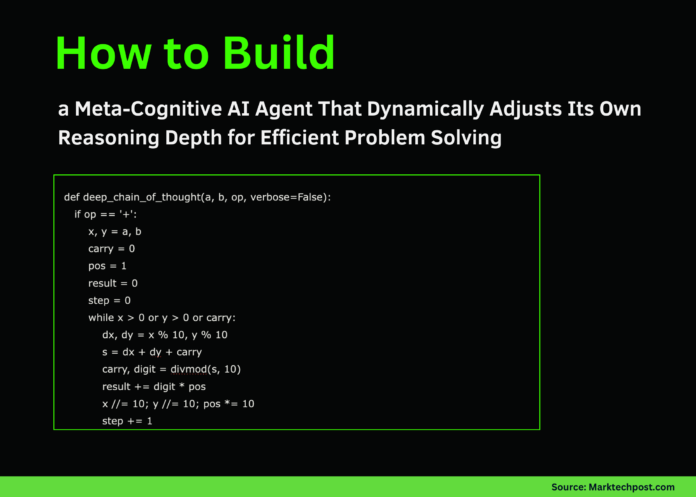

We begin by defining the environment in which the meta-agent operates. The tasks involve arithmetic operations, specifically addition and multiplication, with operands randomly generated within specified ranges. Each task is associated with a ground-truth answer and an estimated difficulty level. To solve these tasks, the agent can employ one of three reasoning strategies:

- Fast Heuristic: A quick, approximate method that trades some accuracy for speed.

- Deep Chain-of-Thought: A stepwise, detailed reasoning process that mimics human-like problem decomposition.

- Tool Solver: An exact computation method that guarantees correctness but at a higher computational cost.

Each solver exhibits distinct characteristics in terms of accuracy and resource consumption, forming the basis for the agent’s decision-making framework.
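
The setup above can be sketched in a few lines of plain Python. This is a minimal illustration, not the tutorial's actual implementation: the `Task` fields, the difficulty formula, the rounding heuristic, and the cost values in `COSTS` are all assumptions chosen for clarity. The chain-of-thought solver here is deterministic, whereas a real one could still make mistakes.

```python
import random
from dataclasses import dataclass

@dataclass
class Task:
    a: int
    b: int
    op: str            # "+" or "*"
    answer: int        # ground-truth result
    difficulty: float  # heuristic estimate in [0, 1]

def make_task(rng, max_operand=99):
    """Sample a random addition or multiplication task."""
    a = rng.randint(1, max_operand)
    b = rng.randint(1, max_operand)
    op = rng.choice(["+", "*"])
    answer = a + b if op == "+" else a * b
    # Assumed heuristic: larger operands and multiplication count as harder.
    size = (a + b) / (2 * max_operand)
    difficulty = min(1.0, 0.5 * size + (0.5 if op == "*" else 0.0))
    return Task(a, b, op, answer, difficulty)

def fast_heuristic(task):
    """Approximate: round both operands to the nearest ten first."""
    ra, rb = round(task.a, -1), round(task.b, -1)
    return ra + rb if task.op == "+" else ra * rb

def deep_chain_of_thought(task):
    """Stepwise: split the second operand into tens and ones."""
    if task.op == "+":
        return task.a + task.b
    tens, ones = divmod(task.b, 10)
    return task.a * tens * 10 + task.a * ones

def tool_solver(task):
    """Exact computation, standing in for an external calculator tool."""
    return task.a + task.b if task.op == "+" else task.a * task.b

SOLVERS = {"fast": fast_heuristic, "deep": deep_chain_of_thought, "tool": tool_solver}
COSTS = {"fast": 0.1, "deep": 0.5, "tool": 1.0}  # assumed relative costs

task = make_task(random.Random(0))
results = {name: solve(task) for name, solve in SOLVERS.items()}
```

Keeping the solvers behind a shared `SOLVERS` mapping means the controller only has to output a mode name; the cost table can then be tuned independently of the solver logic.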

Encoding Task States and Policy Network Architecture

To enable informed decision-making, each task is encoded into a structured state vector capturing:

- Normalized operands

- Operation type (addition or multiplication)

- Estimated difficulty based on heuristics

- Remaining reasoning budget

- Exponential moving average of recent errors

- One-hot encoding of the last action taken

This state representation feeds into a neural policy network, which outputs a probability distribution over the three reasoning modes. The network consists of fully connected layers with nonlinear activations, designed to learn complex mappings from task states to optimal action choices.
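
As a rough sketch of the encoding and the policy forward pass, the snippet below uses only the standard library so that it runs anywhere. The 9-dimensional layout, the normalization constants (`max_operand`, `max_budget`), the hidden size of 16, the `tanh` activation, and the EMA rate `alpha` are assumptions, not values taken from the original implementation. A practical version would more likely use a deep-learning framework.

```python
import math
import random

ACTIONS = ["fast", "deep", "tool"]

def encode_state(a, b, op, difficulty, budget_left, error_ema, last_action,
                 max_operand=99, max_budget=10.0):
    """Pack the task and agent features into a flat state vector."""
    last_one_hot = [1.0 if last_action == name else 0.0 for name in ACTIONS]
    return [
        a / max_operand,             # normalized operands
        b / max_operand,
        1.0 if op == "*" else 0.0,   # operation type
        difficulty,                  # heuristic difficulty estimate
        budget_left / max_budget,    # remaining reasoning budget
        error_ema,                   # moving average of recent errors
        *last_one_hot,               # last action (all zeros if none yet)
    ]

def update_error_ema(error_ema, was_wrong, alpha=0.2):
    """Exponential moving average over a 0/1 error signal."""
    return (1 - alpha) * error_ema + alpha * (1.0 if was_wrong else 0.0)

def init_layer(rng, n_in, n_out):
    """Small random weights and zero biases for one dense layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def linear(layer, x):
    weights, biases = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def policy_forward(params, state):
    """One hidden layer with tanh, then a softmax over the three modes."""
    hidden = [math.tanh(h) for h in linear(params["hidden"], state)]
    return softmax(linear(params["out"], hidden))

rng = random.Random(0)
params = {"hidden": init_layer(rng, 9, 16), "out": init_layer(rng, 16, 3)}
state = encode_state(47, 18, "*", 0.8, 5.0, 0.0, None)
probs = policy_forward(params, state)
action = ACTIONS[rng.choices(range(len(ACTIONS)), weights=probs, k=1)[0]]
```

Sampling from `probs` rather than taking the argmax keeps the policy stochastic, which is what a policy-gradient method needs for exploration during training.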

Learning via Reinforcement: The REINFORCE Algorithm

The core learning mechanism employs the REINFORCE policy gradient method. The agent interacts with the environment over multiple episodes, each containing several reasoning steps. During each step, the agent samples an action based on the policy, executes the corresponding reasoning mode, and receives a reward that balances correctness, computational cost, and budget adherence.
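
One plausible shape for such a reward is shown below. The function name `step_reward` and every coefficient (`correct_bonus`, `cost_weight`, `overrun_penalty`) are hypothetical; the original does not specify the exact weighting.

```python
def step_reward(correct, cost, budget_left,
                correct_bonus=1.0, cost_weight=0.1, overrun_penalty=0.5):
    """Reward for one reasoning step (all coefficients are assumed values)."""
    reward = correct_bonus if correct else 0.0  # correctness term
    reward -= cost_weight * cost                # pay for compute used
    if budget_left - cost < 0:                  # step exceeds the remaining budget
        reward -= overrun_penalty
    return reward

cheap_and_right = step_reward(correct=True, cost=0.1, budget_left=5.0)
costly_overrun = step_reward(correct=True, cost=1.0, budget_left=0.5)
```

With these weights a correct answer always beats a wrong one at the same cost, while an expensive mode only pays off when it changes the outcome.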

Rewards are accumulated and discounted over time to compute returns, which guide the policy updates. This reinforcement learning framework encourages the agent to favor strategies that maximize accuracy while minimizing resource expenditure.
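
The return computation and the REINFORCE objective can be sketched as follows. The discount factor `gamma` is an assumed value, and the loss is written in its simplest form, without a baseline or return normalization, which practical implementations often add to reduce variance.

```python
import math

def discounted_returns(rewards, gamma=0.99):
    """G_t = r_t + gamma * G_{t+1}, computed backwards over one episode."""
    returns = []
    running = 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    returns.reverse()
    return returns

def reinforce_loss(log_probs, returns):
    """Negative of sum_t log pi(a_t | s_t) * G_t; minimizing it raises the
    probability of actions that were followed by high returns."""
    return -sum(lp * g for lp, g in zip(log_probs, returns))

rewards = [1.0, 0.0, 1.0]
returns = discounted_returns(rewards, gamma=0.5)
loss = reinforce_loss([math.log(0.5)] * 3, returns)
```

In a framework with automatic differentiation, the gradient of this loss with respect to the network parameters is the policy-gradient estimate used for the update.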

Training and Evaluating the Meta-Cognitive Controller

Training proceeds over hundreds of episodes, during which the agent refines its policy to better allocate reasoning resources. Evaluation across tasks of varying difficulty reveals distinct behavioral patterns:

- For simple problems, the agent predominantly selects fast heuristics, conserving budget.

- Moderate-difficulty tasks often trigger the deep chain-of-thought mode, balancing accuracy and cost.

- Complex tasks prompt the agent to invoke the precise tool solver despite its higher computational expense.

This adaptive behavior highlights the agent’s ability to calibrate its cognitive effort based on task demands.
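
A breakdown like the one above can be produced by tallying which mode the policy picks in each difficulty band. In this sketch the band thresholds are assumptions, and `threshold_policy` is a hand-written stand-in for the trained network, used only so that the example runs on its own.

```python
from collections import Counter, defaultdict

def difficulty_bucket(difficulty):
    """Map a difficulty in [0, 1] to a coarse band (thresholds assumed)."""
    if difficulty < 1 / 3:
        return "easy"
    if difficulty < 2 / 3:
        return "medium"
    return "hard"

def mode_usage(choose_mode, difficulties):
    """Count how often each reasoning mode is chosen per difficulty band."""
    usage = defaultdict(Counter)
    for d in difficulties:
        usage[difficulty_bucket(d)][choose_mode(d)] += 1
    return usage

def threshold_policy(difficulty):
    # Stand-in only: a real evaluation would query the trained policy here.
    return ("fast", "deep", "tool")[min(2, int(difficulty * 3))]

usage = mode_usage(threshold_policy, [0.1, 0.2, 0.5, 0.9])
```

Swapping `threshold_policy` for a function that encodes the task and takes the most probable action from the trained network gives the per-band usage counts for the learned controller.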

Case Study: Meta-Selected Reasoning on a Challenging Task

Consider a multiplication problem with operands 47 and 18, whose exact product is 846. The trained policy evaluates the encoded task state and samples a reasoning mode. In this instance, the agent opts for the deep chain-of-thought approach, which breaks the multiplication into smaller partial products and sums them. This stepwise reasoning yields the correct result while keeping computational cost moderate.
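
To make the decomposition concrete, the sketch below records the intermediate steps for this example. The helper name `multiply_stepwise` is hypothetical, and splitting the second operand into tens and ones is one reasonable decomposition among several.

```python
def multiply_stepwise(a, b):
    """Multiply by splitting b into tens and ones, keeping a step trace."""
    tens, ones = divmod(b, 10)
    partial_tens = a * tens * 10
    partial_ones = a * ones
    total = partial_tens + partial_ones
    steps = [
        f"{a} x {tens * 10} = {partial_tens}",
        f"{a} x {ones} = {partial_ones}",
        f"{partial_tens} + {partial_ones} = {total}",
    ]
    return total, steps

total, steps = multiply_stepwise(47, 18)
for line in steps:
    print(line)
# 47 x 10 = 470
# 47 x 8 = 376
# 470 + 376 = 846
```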

Such examples illustrate the agent’s meta-cognitive capacity to introspect and choose reasoning pathways that optimize performance under constraints.

Summary: The Power of Meta-Cognitive Control in AI Reasoning

This walkthrough shows how a neural meta-controller can govern an agent’s reasoning depth, adapting to task complexity and resource limits. The agent learns when a quick approximation suffices, when step-by-step analysis is warranted, and when an exact solver justifies its cost. The same framework points toward problem-solving systems that spend computation only where it improves the answer.
