How do Diffusion-based Large Language Models (LLMs) compare in power to traditional autoregressive LLMs when we analyze generation as an algorithmic process defined by time and space complexity, rather than merely a decoding technique? A recent study conducted by researchers from the Toyota Technological Institute at Chicago and MIT provides a rigorous theoretical framework addressing this question. Their work contrasts Auto-Regressive Models (ARM), Masked Diffusion Models (MDM), and introduces a novel class termed Any-Process Masked Diffusion Models (AP-MDM), leveraging complexity theory and carefully designed reasoning benchmarks.

Comparing ARM and MDM: Equivalent Expressiveness, Divergent Parallel Efficiency

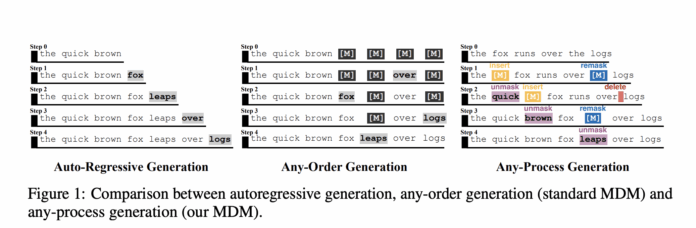

Autoregressive models generate sequences by predicting the next token strictly from left to right. Previous research has established that ARMs are Turing complete, meaning they can theoretically compute any function given sufficient context and computational resources.

In contrast, Masked Diffusion Models operate on sequences with masked tokens, starting from a fully masked input and progressively revealing tokens in multiple positions simultaneously and in arbitrary order. MDMs are conceptualized as encoder-only Transformers characterized by a context length S(n) and a number of decoding steps T(n) for an input of size n.

The study demonstrates that:

- MDMs can emulate any Parallel Random Access Machine (PRAM) algorithm with parallel time T(n) using O(T(n)) diffusion steps and context size S(n) proportional to the total computational work.

- This capability renders MDMs Turing complete and enables them to achieve optimal parallel runtimes on problems within the NC complexity class, such as graph connectivity and certain context-free language recognition tasks, where ARMs require time linear in sequence length.

Therefore, diffusion-based LLMs offer enhanced parallel efficiency on tasks amenable to parallelization, rather than fundamentally increasing computational expressiveness.

Does Any-Order Generation Confer Additional Computational Power?

A key inquiry is whether generating tokens in any order surpasses the capabilities of strict left-to-right generation.

To explore this, the researchers define an Any-Order MDM (AO-MDM) and a corresponding Masked ARM that share the same architecture and token budget but decode in a fixed left-to-right manner over sequences padded with mask tokens.

The principal finding is:

- Any computation performed by an AO-MDM with one token generated per step and context size S(n) can be transformed into a left-to-right decoding schedule and simulated by a Masked ARM with sequence length O(S(n)) plus a constant number of additional layers.

In essence, when controlling for parallelism and model architecture, any-order generation alone does not extend the class of solvable problems beyond what ARMs can achieve.

Both ARM and AO-MDM models share a space complexity constraint: with context length S(n), they cannot efficiently solve problems requiring more than approximately S(n)3 serial time. Consequently, with polynomially bounded context, they are effectively confined to problems in the complexity class P and cannot tackle general NP-hard problems solely by scaling at inference time.

Introducing Any-Process Generation: The AP-MDM Framework

To transcend these limitations, the authors propose Any-Process Generation, realized through the Any-Process Masked Diffusion Model (AP-MDM).

AP-MDM retains the masked diffusion paradigm but enriches the transition function with three novel operations beyond the standard unmasking:

- Remask: Reverts a previously decoded token back to the mask token

M, enabling backtracking and correction. - Insert: Adds a new mask token at a specified position, allowing dynamic sequence length expansion.

- Delete: Removes an existing mask token, permitting sequence length contraction.

These operations are governed by a 3-bit control vector per token position (ct,i = (ct,i[1], ct,i[2], ct,i[3])), predicted alongside content logits by the Transformer backbone.

- The first bit controls

remask, deciding whether to overwrite a token withM, facilitating iterative refinement. - The second and third bits manage

insertanddeleteoperations, enabling flexible sequence length adjustments during decoding.

Architecturally, AP-MDM requires only three additional lightweight linear heads atop an encoder-only Transformer, making it straightforward to integrate with existing diffusion LLM architectures.

Theoretical analysis reveals that:

- AP-MDM can simulate any PRAM algorithm with optimal parallel time and space complexity, using context proportional to the actual space S(n) rather than total computational work.

- With polynomially bounded context, AP-MDM attains computational power equivalent to PSPACE, surpassing the P-class limitations of standard MDM and ARM models under similar context constraints.

Moreover, the researchers provide evidence suggesting the existence of constant-depth AP-MDMs whose generation processes cannot be replicated by any constant-depth ARM or Masked ARM, assuming standard complexity-theoretic conjectures.

Experimental Validation: Challenging Reasoning Tasks

The empirical results align closely with theoretical predictions, highlighting the practical advantages of AP-MDM.

Sudoku Solving

Generalized Sudoku puzzles on n2 x n2 grids are known to be NP-complete.

- AP-MDM achieves an impressive 99.28% accuracy using approximately 1.2 million parameters and only 100 training examples.

- In contrast, an ARM baseline with fixed token ordering attains 87.18% accuracy but requires about 1.8 million training samples and nearly five times the parameter count.

- The best AO-MDM baseline reaches 89.49% accuracy under the same extensive data regime.

These findings underscore the critical role of editing operations, particularly remasking, in leveraging test-time scaling for complex reasoning challenges.

Dyck Languages and Syntax Constraints

The study also examines two-sided Dyck k languages, which abstractly represent matched parentheses and are fundamental to programming language syntax.

It is proven that fixed-order ARMs cannot guarantee valid generation for arbitrarily long sequences, whereas AP-MDMs employing insert and remask operations can precisely generate Dyck languages.

This reflects the necessity of structure-aware editing in code generation tasks, such as maintaining balanced brackets and consistent scoping.

Graph Generation and Structural Modifications

For graph editing tasks subject to global constraints, AP-MDM utilizes insert, delete, and remask operations to perform structured sequence edits on graph representations.

Accuracy remains near perfect as graph size increases, while ARM performance deteriorates with larger graphs.

Parity Computation and Length Generalization

On parity tasks, AP-MDM learns a local elimination strategy by iteratively deleting pairs of bits, guided by remask and delete operations.

Remarkably, trained only on sequences of length two, AP-MDM generalizes flawlessly to sequences of arbitrary length, achieving 100% accuracy.

ARM baselines struggle to generalize comparably, even when trained on much longer sequences.

Summary of Insights

- Any-order Masked Diffusion Models match the expressiveness of autoregressive models when architecture and parallelism are fixed, primarily offering gains in parallel efficiency rather than computational power.

- Masked Diffusion Models can simulate PRAM algorithms and deliver exponential speedups on parallelizable problems in NC, but with polynomial context they remain confined to the complexity class P, similar to ARMs.

- Any-Process MDM extends diffusion LLMs with remask, insert, and delete operations controlled by a three-bit vector per token, enabling simulation of PRAM algorithms with optimal parallel time and space, thus achieving PSPACE-level expressivity under polynomial context.

- On challenging reasoning benchmarks such as generalized Sudoku, Dyck languages, graph editing, and parity, AP-MDM demonstrates substantial empirical superiority, exemplified by near-perfect Sudoku accuracy with minimal training data and a smaller model size compared to ARM and AO-MDM baselines.

- For domains involving structured edits and revision histories-like programming, mathematics, and AI-driven scientific discovery-AP-MDM’s edit-based generation aligns more naturally with underlying processes than next-token prediction, and its editing operations are provably difficult to simulate with constant-depth autoregressive models.

Concluding Remarks

The introduction of Any-Process MDM marks a significant advancement by conceptualizing sequence generation as a comprehensive algorithmic procedure rather than a mere decoding order. While Masked Diffusion Models already achieve PRAM-level parallel time, they remain limited to P-class computations under polynomial context, akin to autoregressive models. By incorporating remask, insert, and delete operations, AP-MDM attains PSPACE-level computational expressiveness and exhibits strong empirical performance on complex reasoning tasks such as Sudoku, Dyck language generation, graph editing, and parity computation. This research strongly advocates for future state-of-the-art LLMs to embrace edit-based Any-Process Generation frameworks instead of relying solely on accelerated autoregressive decoding.