Introducing WeatherNext 2: A Breakthrough in AI-Powered Global Weather Forecasting

Google DeepMind has unveiled WeatherNext 2, an advanced artificial intelligence system designed for medium-range global weather prediction. This cutting-edge model now enhances weather forecasts across Google Search, Gemini, Pixel Weather, and the Google Maps Platform’s Weather API, with full Google Maps integration anticipated soon. By leveraging a novel Functional Generative Network (FGN) architecture combined with a large ensemble approach, WeatherNext 2 delivers probabilistic forecasts that are not only faster but also more precise and higher in resolution compared to its predecessor, WeatherNext. Additionally, this technology is accessible through data products on Earth Engine, BigQuery, and as an early access model on Vertex AI.

Revolutionizing Weather Forecasting: From Fixed Grids to Dynamic Functional Ensembles

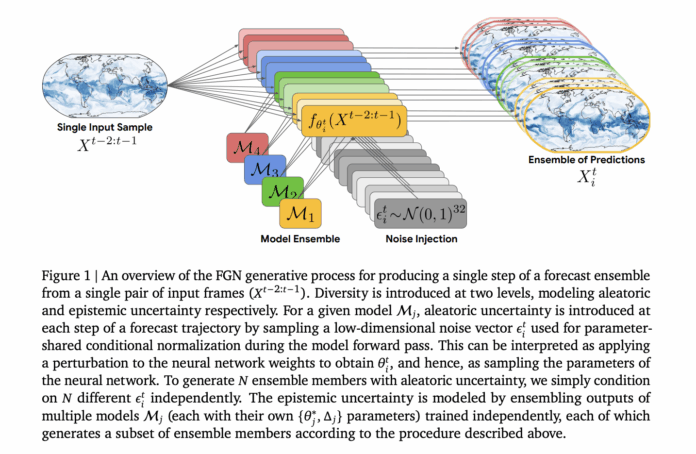

At the heart of WeatherNext 2 lies the innovative FGN model, which departs from traditional deterministic forecasting methods. Instead of producing a single forecast, it samples from the joint probability distribution of 15-day global weather trajectories. Each forecast state encompasses six atmospheric variables across 13 pressure levels, alongside six surface variables, all mapped on a 0.25-degree latitude-longitude grid with updates every six hours. The model is trained to approximate the conditional distribution of future weather states given past observations and generates ensemble trajectories autoregressively from two initial analysis frames.
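The sizes quoted above imply a large state at every forecast step. The arithmetic can be sketched as below. The 721 x 1440 grid shape is an assumption (an equiangular 0.25-degree grid that includes both poles); the text does not state the grid layout, and all constant names are illustrative.

```python
# Back-of-the-envelope sizes implied by the description above.
# Assumption (not stated in the text): the 0.25-degree grid includes both
# poles, giving 721 latitudes x 1440 longitudes.

ATMOS_VARS = 6        # atmospheric variables
PRESSURE_LEVELS = 13  # pressure levels per atmospheric variable
SURFACE_VARS = 6      # surface variables
GRID_DEG = 0.25       # grid spacing in degrees
STEP_HOURS = 6        # forecast timestep
HORIZON_DAYS = 15     # forecast horizon

channels = ATMOS_VARS * PRESSURE_LEVELS + SURFACE_VARS
n_lat = int(180 / GRID_DEG) + 1   # +1 because both poles are included (assumption)
n_lon = int(360 / GRID_DEG)
steps = HORIZON_DAYS * 24 // STEP_HOURS
values_per_state = channels * n_lat * n_lon

print("channels per grid point:", channels)
print("grid shape:", (n_lat, n_lon))
print("autoregressive steps per trajectory:", steps)
print("values per forecast state:", values_per_state)
```

Under these assumptions a single ensemble member is a sequence of 60 states, each holding roughly 87 million values.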

The architecture of each FGN instance resembles the GenCast denoiser but is significantly scaled up. It employs a graph neural network encoder and decoder to translate between the regular grid and a latent space defined on a spherical icosahedral mesh refined six times. A graph transformer then processes the mesh nodes. The production FGN model powering WeatherNext 2 contains approximately 180 million parameters per model seed, with a latent dimension of 768 and 24 transformer layers, substantially larger than GenCast’s 57 million parameters, latent dimension of 512, and 16 layers. Moreover, WeatherNext 2 operates on a finer six-hour timestep, doubling the temporal resolution of GenCast’s 12-hour steps.
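To get a feel for the size of the latent mesh, the sketch below counts vertices, edges, and faces of a subdivided icosahedron. It assumes "refined" means the usual subdivision in which every triangular face is split into four, applied six times; the text does not spell out the refinement scheme, and `icosphere_counts` is a hypothetical helper name.

```python
def icosphere_counts(refinements):
    """Return (vertices, edges, faces) of an icosahedron after repeated 4-way face splits."""
    vertices, edges, faces = 12, 30, 20  # regular icosahedron
    for _ in range(refinements):
        # Each split adds one vertex per edge, cuts every edge in two,
        # adds three interior edges per face, and turns each face into four.
        vertices, edges, faces = vertices + edges, 2 * edges + 3 * faces, 4 * faces
    return vertices, edges, faces


v, e, f = icosphere_counts(6)
print("mesh nodes:", v, "edges:", e, "faces:", f)

# Scale comparison using only the figures quoted in the text.
print("parameter ratio FGN / GenCast:", round(180 / 57, 2))
print("latent width ratio:", 768 / 512, "depth ratio:", 24 / 16)
```

Under that assumption the mesh has on the order of forty thousand nodes, far fewer than the roughly one million grid points, which is why the encoder and decoder are needed to move between the two representations.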

Separating and Capturing Uncertainty: Epistemic vs. Aleatoric in Functional Space

WeatherNext 2 models two types of uncertainty separately, both of which matter for reliable forecasting. Epistemic uncertainty, arising from limited data and model imperfections, is addressed by deploying a deep ensemble of four independently trained FGN models. Each model seed generates an equal number of ensemble members, so that together they capture uncertainty about the model parameters.

Aleatoric uncertainty, the inherent randomness in atmospheric processes, is modeled through functional perturbations. At every forecast step, the system samples a 32-dimensional Gaussian noise vector, which modulates conditional normalization layers within the network. This mechanism effectively alters the network’s weights dynamically, producing diverse yet physically coherent forecast trajectories. Unlike random noise applied independently at each grid point, these perturbations yield ensemble members that represent plausible alternative weather scenarios consistent with the initial conditions.
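The idea of a noise vector modulating normalization layers can be illustrated with a toy conditional layer norm. This is a minimal sketch, not the production layer: the linear map from noise to scale and shift, the feature width, and every name below are assumptions made for illustration. Only the 32-dimensional Gaussian noise vector comes from the text.

```python
import math
import random

NOISE_DIM = 32  # size of the noise vector (from the text)


def layer_norm(x, eps=1e-5):
    """Plain layer normalization of one feature vector."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def conditional_layer_norm(x, noise, w_scale, w_shift):
    """Normalize x, then apply a scale and shift that depend linearly on the noise."""
    out = []
    for k, v in enumerate(layer_norm(x)):
        scale = 1.0 + sum(w * z for w, z in zip(w_scale[k], noise))
        shift = sum(w * z for w, z in zip(w_shift[k], noise))
        out.append(scale * v + shift)
    return out


rng = random.Random(0)
FEATURES = 4  # toy feature width (hypothetical)
w_scale = [[rng.gauss(0, 0.1) for _ in range(NOISE_DIM)] for _ in range(FEATURES)]
w_shift = [[rng.gauss(0, 0.1) for _ in range(NOISE_DIM)] for _ in range(FEATURES)]
nodes = [[rng.gauss(0, 1) for _ in range(FEATURES)] for _ in range(3)]  # 3 toy mesh nodes


def forward(nodes, noise):
    # The same noise vector is used for every node, so the perturbation acts on
    # the function applied everywhere rather than on each point independently.
    return [conditional_layer_norm(x, noise, w_scale, w_shift) for x in nodes]


noise_a = [rng.gauss(0, 1) for _ in range(NOISE_DIM)]
noise_b = [rng.gauss(0, 1) for _ in range(NOISE_DIM)]
member_a = forward(nodes, noise_a)
member_b = forward(nodes, noise_b)
print(member_a[0])
print(member_b[0])
```

Two different noise draws give two different but internally consistent outputs, because every node sees the same modulation. With an all-zero noise vector the layer reduces to ordinary layer normalization.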

Training Strategy: Leveraging Marginal Distributions and CRPS for Joint Coherence

One of the key innovations in WeatherNext 2’s training is its focus on marginal distributions rather than explicit multivariate targets. The model optimizes the Continuous Ranked Probability Score (CRPS), a proper scoring rule that rewards sharp and well-calibrated probabilistic forecasts. The fair variant of CRPS, which corrects for the finite number of ensemble samples, is computed at each grid point and averaged across variables, pressure levels, and time steps.
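For a single grid point and variable, the fair ensemble CRPS can be written in a few lines. The sketch below uses the standard unbiased estimator for an M-member ensemble; it is a scalar illustration under that assumption, not the training loss as implemented, and `fair_crps` is a name chosen here.

```python
def fair_crps(members, observation):
    """Fair (finite-ensemble-corrected) CRPS estimate for one scalar target.

    members: list of ensemble values, at least two.
    observation: the verifying value.
    """
    m = len(members)
    if m < 2:
        raise ValueError("fair CRPS needs at least two ensemble members")
    # Mean absolute error of the members against the observation.
    skill = sum(abs(x - observation) for x in members) / m
    # Mean absolute difference between distinct member pairs, halved.
    # Dividing by m * (m - 1) instead of m * m is what makes the estimate fair.
    spread = sum(abs(xi - xj) for xi in members for xj in members) / (2 * m * (m - 1))
    return skill - spread


print(fair_crps([0.0, 0.0], 1.0))       # collapsed ensemble that misses the observation
print(fair_crps([1.0, 2.0, 3.0], 2.0))  # ensemble centred on the observation
```

Lower values are better. In a real pipeline the per-point scores would then be averaged over grid points, variables, levels, and lead times, typically with latitude weighting.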

During advanced training phases, short autoregressive rollouts of up to eight steps are introduced, with backpropagation through these sequences enhancing long-term forecast stability. Despite training solely on marginal distributions, the model’s low-dimensional noise input and shared functional perturbations compel it to learn realistic spatial and cross-variable dependencies. This approach ensures that ensemble forecasts capture coherent regional patterns and derived meteorological quantities effectively.
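The short training rollouts and the full 15-day forecasts share the same autoregressive loop: two frames go in, a fresh noise vector is drawn, one frame comes out, and the window slides forward. The sketch below shows that loop with a scalar stand-in for the model. `toy_step`, the bias that mimics differently trained seeds, and the number of members per seed are all hypothetical.

```python
import random

NUM_SEEDS = 4          # independently trained models (from the text)
NOISE_DIM = 32         # noise vector size per step (from the text)
FORECAST_STEPS = 60    # 15 days at 6-hour steps
TRAIN_STEPS = 8        # maximum rollout length during training (from the text)
MEMBERS_PER_SEED = 2   # hypothetical; the text only says "an equal number"


def toy_step(prev, curr, noise, model_bias):
    """Hypothetical stand-in for one model forward pass on a scalar 'state'."""
    perturbation = 0.01 * sum(noise) / len(noise)
    return curr + 0.45 * (curr - prev) + model_bias + perturbation


def rollout(frame_prev, frame_curr, model_bias, rng, num_steps):
    """Generate one trajectory autoregressively from two initial frames."""
    trajectory = []
    prev, curr = frame_prev, frame_curr
    for _ in range(num_steps):
        noise = [rng.gauss(0.0, 1.0) for _ in range(NOISE_DIM)]  # new draw each step
        nxt = toy_step(prev, curr, noise, model_bias)
        trajectory.append(nxt)
        prev, curr = curr, nxt  # slide the two-frame window forward
    return trajectory


def ensemble_forecast(frame_prev, frame_curr, num_steps=FORECAST_STEPS):
    """Every seed contributes the same number of members to the ensemble."""
    rng = random.Random(0)
    # A small per-seed bias stands in for the differences between trained models.
    model_biases = [rng.gauss(0.0, 0.01) for _ in range(NUM_SEEDS)]
    return [
        rollout(frame_prev, frame_curr, bias, rng, num_steps)
        for bias in model_biases
        for _ in range(MEMBERS_PER_SEED)
    ]


ensemble = ensemble_forecast(0.0, 0.1)
print(len(ensemble), "members,", len(ensemble[0]), "steps each")
```

Calling `rollout(..., num_steps=TRAIN_STEPS)` gives the shorter sequence length used during training, where gradients would flow back through every step of the loop.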

Performance Highlights: Outperforming Previous Models and Traditional Baselines

WeatherNext 2 demonstrates significant improvements over the earlier GenCast-based WeatherNext system. It achieves better CRPS in 99.9% of evaluated variable and lead-time combinations, with average improvements of around 6.5% and peak gains nearing 18% for certain variables at shorter forecast horizons. The root mean squared error (RMSE) of the ensemble mean is also reduced, while ensemble spread continues to track forecast error closely, indicating reliable uncertainty quantification up to 15 days ahead.
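One common way to check that ensemble spread matches forecast error is the spread-skill ratio, which should be close to 1 for a well-calibrated ensemble. The text does not say which diagnostic was used, so the sketch below is only an illustration of that standard metric on scalar targets, with a hypothetical function name.

```python
import math


def spread_skill_ratio(forecasts, observations):
    """Ratio of ensemble spread to ensemble-mean RMSE, with finite-size correction.

    forecasts: list over cases, each a list of M member values.
    observations: list of verifying values, one per case.
    """
    m = len(forecasts[0])
    n = len(observations)
    squared_error = 0.0
    variance = 0.0
    for members, obs in zip(forecasts, observations):
        mean = sum(members) / m
        squared_error += (mean - obs) ** 2
        variance += sum((x - mean) ** 2 for x in members) / (m - 1)  # unbiased
    rmse = math.sqrt(squared_error / n)
    spread = math.sqrt(variance / n)
    # The sqrt((M + 1) / M) factor accounts for the sampling error of the mean.
    return math.sqrt((m + 1) / m) * spread / rmse
```

A ratio below 1 points to an overconfident (under-dispersed) ensemble, and a ratio above 1 to an under-confident one.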

To assess the model’s ability to capture joint spatial and multivariate structures, researchers evaluated CRPS over spatially pooled regions at various scales and on derived variables such as 10-meter wind speed and geopotential height differences between 300 hPa and 500 hPa levels. WeatherNext 2 consistently outperformed GenCast, indicating enhanced modeling of regional aggregates and complex meteorological relationships.
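Pooled and derived quantities test joint structure because they have to be computed for each ensemble member before scoring; applying them to the ensemble mean gives a different answer. A minimal sketch follows, with hypothetical helper names and unweighted pooling (a real latitude-longitude grid would need area weights).

```python
import math


def wind_speed(u, v):
    """10-meter wind speed from its eastward (u) and northward (v) components."""
    return math.hypot(u, v)


def thickness(z300, z500):
    """Geopotential height difference between the 300 hPa and 500 hPa levels."""
    return z300 - z500


def average_pool(field, size):
    """Non-overlapping size x size mean pooling of a 2D list (rows x columns)."""
    rows, cols = len(field), len(field[0])
    pooled = []
    for r in range(0, rows - size + 1, size):
        pooled_row = []
        for c in range(0, cols - size + 1, size):
            block = [field[i][j] for i in range(r, r + size) for j in range(c, c + size)]
            pooled_row.append(sum(block) / len(block))
        pooled.append(pooled_row)
    return pooled


# Two members with opposite winds of equal strength.
members_uv = [(3.0, 4.0), (-3.0, -4.0)]
per_member_speed = [wind_speed(u, v) for u, v in members_uv]
mean_u = sum(u for u, _ in members_uv) / len(members_uv)
mean_v = sum(v for _, v in members_uv) / len(members_uv)

print("speed per member:", per_member_speed)
print("speed of the mean wind:", wind_speed(mean_u, mean_v))
print("pooled field:", average_pool([[1.0, 2.0], [3.0, 4.0]], 2))
```

The per-member speeds would then be scored with CRPS like any other variable. A model whose u and v components were each well calibrated but unrelated to one another would score poorly on wind speed.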

A critical application area is tropical cyclone tracking. Using an external tracking algorithm, WeatherNext 2 reduced ensemble mean track errors, effectively extending useful forecast lead times by approximately one day compared to GenCast. Even when constrained to a 12-hour timestep, WeatherNext 2 maintained superior performance beyond two days. Relative Economic Value analyses of track probability fields further confirmed its advantage, providing vital support for emergency planning and risk management decisions.
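Relative Economic Value is usually defined through a simple cost-loss decision model: a user pays a cost C to protect whenever a warning is issued and suffers a loss L if an unprotected event occurs. The sketch below computes it from a contingency table under that standard formulation. The text does not give the exact setup used for track probabilities, so the function name and inputs are assumptions.

```python
def relative_economic_value(hits, misses, false_alarms, correct_negatives, cost_loss_ratio):
    """Relative economic value of yes/no warnings in the static cost-loss model.

    Expenses are expressed per case, in units of the loss L.
    Returns 1 for perfect warnings and 0 for warnings no better than climatology.
    """
    n = hits + misses + false_alarms + correct_negatives
    base_rate = (hits + misses) / n
    a = cost_loss_ratio
    if not (0.0 < a < 1.0 and 0.0 < base_rate < 1.0):
        raise ValueError("cost/loss ratio and event base rate must lie strictly in (0, 1)")
    # Pay the cost whenever a warning is issued, take the loss on every miss.
    expense_forecast = ((hits + false_alarms) * a + misses) / n
    # Best fixed strategy without a forecast: always protect or never protect.
    expense_climate = min(a, base_rate)
    # A perfect forecast pays the cost only when the event actually occurs.
    expense_perfect = base_rate * a
    return (expense_climate - expense_forecast) / (expense_climate - expense_perfect)


print(relative_economic_value(hits=8, misses=2, false_alarms=5,
                              correct_negatives=85, cost_loss_ratio=0.2))
```

For a probabilistic product such as a track probability field, the contingency table would be built by warning whenever the forecast probability exceeds a threshold, and the value curve is obtained by repeating the calculation across cost-loss ratios.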

Summary of Key Innovations and Benefits

- Functional Generative Network Backbone: WeatherNext 2 employs a graph transformer ensemble that predicts comprehensive 15-day global weather trajectories on a 0.25° grid with six-hour intervals, covering multiple atmospheric and surface variables.

- Explicit Uncertainty Decomposition: The system integrates four independently trained FGN models to capture epistemic uncertainty and uses a shared 32-dimensional noise vector to model aleatoric uncertainty, ensuring each forecast sample is a coherent alternative scenario rather than random noise.

- Marginal-Based Training with Joint Structure Emergence: Despite training only on marginal distributions using CRPS, the model learns realistic spatial and cross-variable dependencies, improving joint forecast quality over previous diffusion-based models.

- Consistent Superior Accuracy: WeatherNext 2 surpasses GenCast and earlier WeatherNext versions in CRPS, RMSE, and economic value metrics, particularly excelling in extreme weather event prediction and tropical cyclone tracking.

Explore the latest advancements in AI-driven weather forecasting with WeatherNext 2, setting new standards for speed, accuracy, and reliability in global meteorological predictions.