As large language model (LLM) agents increasingly archive all of their interactions, a key question arises: can these agents improve their decision-making policies at test time by learning from past experience, rather than merely replaying stored context windows?

Introducing Evo-Memory: A New Paradigm for Test-Time Learning

To address this question, researchers from the University of Illinois Urbana-Champaign and Google DeepMind have developed Evo-Memory, a streaming benchmark and agent framework. Evo-Memory assesses test-time learning through a mechanism called self-evolving memory. Rather than relying on static conversational histories, it tests whether agents can accumulate, adapt, and apply strategies across continuous task sequences.

From Passive Recall to Active Experience Reuse

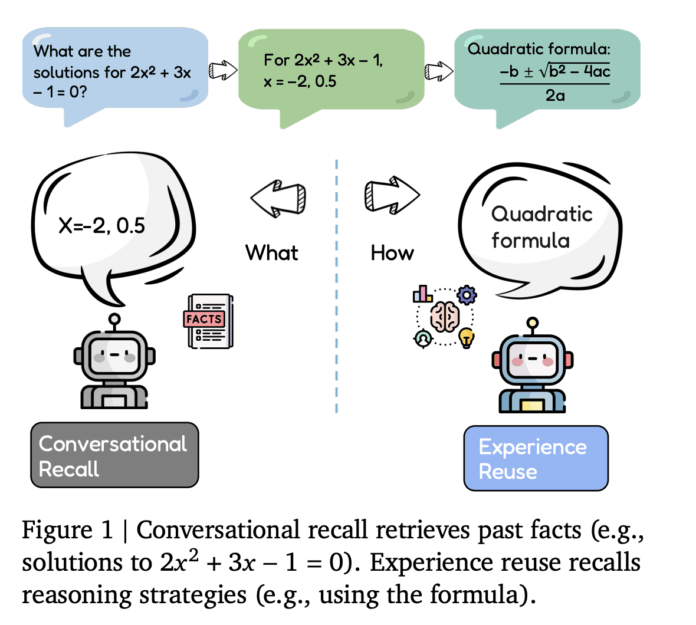

Current LLM agents predominantly utilize conversational recall, which involves storing dialogue logs, tool usage traces, and retrieved documents. These elements are then reintroduced into the context window to assist with subsequent queries. While effective for fact retrieval and step-by-step recall, this approach functions as a passive memory buffer and does not enable agents to actively refine their problem-solving strategies based on prior outcomes.

In contrast, Evo-Memory emphasizes experience reuse. Each interaction is encoded as a comprehensive experience record, capturing inputs, outputs, success indicators, and the effectiveness of applied strategies. The benchmark evaluates whether agents can retrieve these experiences in future tasks, utilize them as reusable procedural knowledge, and iteratively improve their memory representations over time.

Framework Architecture and Sequential Task Streams

The researchers formalize a memory-augmented agent as a four-component tuple: (F, U, R, C). Here, F is the base model that generates outputs; U updates the memory after each interaction by adding new experiences and evolving stored knowledge; R is the retrieval system that queries the memory store; and C constructs the working prompt by combining the current input with the retrieved memories.
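
As a rough illustration of how the four components might fit together, the sketch below wires F, U, R, and C into one step of an agent loop. Only the component letters come from the tuple above; the `MemoryAgent` class, the toy functions, and the list-of-strings memory are hypothetical stand-ins, not the authors' implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class MemoryAgent:
    # Field names mirror the (F, U, R, C) tuple; signatures are assumptions.
    F: Callable[[str], str]                        # base model: prompt -> output
    U: Callable[[List[str], str, str], List[str]]  # update: (memory, input, output) -> memory
    R: Callable[[List[str], str], List[str]]       # retrieve: (memory, input) -> entries
    C: Callable[[str, List[str]], str]             # context: (input, entries) -> prompt
    memory: List[str] = field(default_factory=list)

    def step(self, x: str) -> str:
        retrieved = self.R(self.memory, x)       # query the memory store
        prompt = self.C(x, retrieved)            # build the working prompt
        y = self.F(prompt)                       # generate an output
        self.memory = self.U(self.memory, x, y)  # evolve memory after the interaction
        return y


def toy_model(prompt: str) -> str:
    # Stand-in for an LLM call: reports how many memory lines it was shown.
    return f"seen={prompt.count('[mem]')}"


def retrieve_all(memory: List[str], x: str) -> List[str]:
    return list(memory)


def build_prompt(x: str, retrieved: List[str]) -> str:
    return "\n".join([f"[mem] {m}" for m in retrieved] + [f"[task] {x}"])


def append_experience(memory: List[str], x: str, y: str) -> List[str]:
    return memory + [f"{x} -> {y}"]


agent = MemoryAgent(F=toy_model, U=append_experience, R=retrieve_all, C=build_prompt)
outputs = [agent.step(task) for task in ["task A", "task B", "task C"]]
print(outputs)
```

Because the memory persists across calls to `step`, each later task in the stream sees the records left by earlier ones, which is the property the benchmark is designed to exercise.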

Evo-Memory transforms traditional benchmarks into ordered task streams, where earlier tasks embed strategies beneficial for subsequent ones. The benchmark suite encompasses diverse datasets such as AIME 24, AIME 25, GPQA Diamond, and MMLU-Pro subsets focused on economics, engineering, and philosophy. It also includes ToolBench for tool utilization and multi-turn interactive environments from AgentBoard, featuring domains like AlfWorld, BabyAI, ScienceWorld, Jericho, and PDDL planning tasks.

Performance is measured across four key dimensions: exact match or answer accuracy for single-turn tasks; success and progress rates in embodied environments; step efficiency, which tracks the average number of steps per successful task; and sequence robustness, assessing stability when task order is altered.
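
A minimal sketch of how the episode-level metrics could be aggregated is shown below. The `Episode` record and its field names are assumptions made for illustration, the sample episodes are invented, and sequence robustness is left out because the text does not specify how it is computed.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Episode:
    # Hypothetical per-task log entry.
    success: bool
    progress: float  # fraction of the task completed, in [0, 1]
    steps: int       # environment steps taken


def summarize(episodes: List[Episode]) -> Dict[str, float]:
    n = len(episodes)
    solved = [e for e in episodes if e.success]
    return {
        "success_rate": len(solved) / n,
        "progress_rate": sum(e.progress for e in episodes) / n,
        # Step efficiency: average steps over successful tasks only.
        "avg_steps_per_success": (
            sum(e.steps for e in solved) / len(solved) if solved else float("nan")
        ),
    }


# Invented episodes, used only to exercise the function.
summary = summarize([
    Episode(success=True, progress=1.0, steps=10),
    Episode(success=False, progress=0.5, steps=30),
    Episode(success=True, progress=1.0, steps=14),
])
print(summary)
```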

ExpRAG: A Baseline for Minimal Experience Reuse

To establish a baseline, the team introduced ExpRAG, a straightforward experience reuse method. Each interaction is stored as a structured text record ⟨x_i, ŷ_i, f_i⟩, where x_i is the input, ŷ_i is the model’s output, and f_i is feedback such as correctness. At each new step, the agent retrieves the most similar past experiences and adds them as in-context examples alongside the current input. The new experience is then added to memory.

ExpRAG maintains the original agent control loop as a single-shot call to the backbone model but enriches it with explicitly stored prior task data. This simplicity ensures that any performance improvements on Evo-Memory stem from effective task-level experience retrieval rather than complex planning or tool integration.
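
The store, retrieve, and append cycle described above can be sketched in a few lines. This is an illustrative approximation only: the bag-of-words cosine similarity stands in for whatever retriever the authors use, and `Experience`, `retrieve`, `build_prompt`, `exprag_step`, the `model` callable, and the `judge` callable are all hypothetical names.

```python
import math
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Experience:
    x: str      # task input
    y_hat: str  # model output
    f: str      # feedback, e.g. "correct"


def bow_cosine(a: str, b: str) -> float:
    # Assumption: word-count cosine similarity as a cheap stand-in for embeddings.
    ca, cb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(ca[w] * cb[w] for w in ca)
    norm = math.sqrt(sum(v * v for v in ca.values())) * math.sqrt(sum(v * v for v in cb.values()))
    return dot / norm if norm else 0.0


def retrieve(memory: List[Experience], x: str, k: int = 2) -> List[Experience]:
    return sorted(memory, key=lambda e: bow_cosine(e.x, x), reverse=True)[:k]


def build_prompt(x: str, examples: List[Experience]) -> str:
    shots = [f"Input: {e.x}\nOutput: {e.y_hat}\nFeedback: {e.f}" for e in examples]
    return "\n\n".join(shots + [f"Input: {x}\nOutput:"])


def exprag_step(memory: List[Experience], x: str,
                model: Callable[[str], str],
                judge: Callable[[str, str], str], k: int = 2) -> str:
    prompt = build_prompt(x, retrieve(memory, x, k))  # past experiences as in-context examples
    y_hat = model(prompt)                             # single call to the backbone model
    memory.append(Experience(x, y_hat, judge(x, y_hat)))  # store the new experience
    return y_hat


memory = [
    Experience("solve the quadratic equation", "use the quadratic formula", "correct"),
    Experience("heat water in the kitchen", "use the stove", "correct"),
    Experience("solve the linear equation", "isolate x", "correct"),
]
query = "solve this quadratic equation"
top = retrieve(memory, query, k=2)
answer = exprag_step(memory, query,
                     model=lambda prompt: "placeholder answer",
                     judge=lambda x, y: "unverified")
print([e.x for e in top], len(memory))
```

Swapping `bow_cosine` for an embedding-based similarity would leave the rest of the loop unchanged, since retrieval is the only step that depends on how similarity is scored.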

ReMem: The Action-Think-Refine Memory Cycle

The centerpiece of this research is ReMem, a pipeline that extends the ReAct framework with an explicit action-think-refine memory cycle. At each internal step, the agent considers the current input, memory state, and prior reasoning traces, then selects one of three operations:

- Think: Generate intermediate reasoning steps that break down the task.

- Act: Produce an environment action or final answer visible to the user.

- Refine: Engage in meta-reasoning by retrieving, pruning, and reorganizing memory entries.

This iterative loop forms a Markov decision process where the state encompasses the query, memory contents, and ongoing thoughts. Multiple Think and Refine operations can be interleaved before an Act operation concludes the step. Unlike conventional ReAct agents with static memory buffers, ReMem treats memory as a dynamic entity that the agent actively manipulates during inference.
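
The control flow of this loop can be sketched as follows. In ReMem the choice of operation is made by the language model; here a scripted `policy` function stands in for it, and the `State` class, the `[failed]` tag convention, and the specific pruning and reordering rule inside the refine branch are assumptions chosen only to make the example runnable.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class State:
    query: str
    memory: List[str]
    thoughts: List[str] = field(default_factory=list)


# A policy maps the current state to (operation, payload).
Policy = Callable[[State], Tuple[str, str]]


def remem_step(state: State, policy: Policy, max_internal_steps: int = 8) -> str:
    for _ in range(max_internal_steps):
        op, payload = policy(state)
        if op == "think":
            state.thoughts.append(payload)  # intermediate reasoning
        elif op == "refine":
            # Assumed rule: prune entries tagged as failed, then move entries
            # mentioning the payload keyword to the front (stable sort).
            kept = [m for m in state.memory if "[failed]" not in m]
            kept.sort(key=lambda m: payload not in m)
            state.memory = kept
        elif op == "act":
            return payload  # Act concludes the step
        else:
            raise ValueError(f"unknown operation: {op}")
    return "no-op"  # internal step budget exhausted


def scripted_policy(state: State) -> Tuple[str, str]:
    # Stand-in for the LLM's choice among Think, Act, and Refine.
    if any("[failed]" in m for m in state.memory):
        return ("refine", "mug")
    if not state.thoughts:
        return ("think", f"Most relevant memory: {state.memory[0]}")
    return ("act", "go to cabinet")


state = State(
    query="find a mug",
    memory=["[failed] looked in fridge for mug", "apple is on table", "mug found in cabinet"],
)
action = remem_step(state, scripted_policy)
print(action, state.memory, state.thoughts)
```

The point of the sketch is that memory is part of the mutable state: a Refine operation changes what later Think and Act operations see within the same step.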

Empirical Findings Across Reasoning, Tool Use, and Interactive Environments

Experiments were conducted using Gemini 2.5 Flash and Claude 3.7 Sonnet models under a unified search-predict-evolve protocol, isolating the impact of memory architecture by keeping prompting, search, and feedback constant.

On single-turn benchmarks, memory-evolving methods yielded consistent but moderate improvements. For instance, ReMem achieved an average exact match score of 0.65 across AIME 24, AIME 25, GPQA Diamond, and the MMLU-Pro subsets, along with scores of 0.85 (API) and 0.71 (accuracy) on ToolBench. ExpRAG also performed well, with an average of 0.60, surpassing several more complex memory designs such as Agent Workflow Memory and Dynamic Cheatsheet variants.

More pronounced benefits appeared in multi-turn environments. Using Claude 3.7 Sonnet, ReMem reached success and progress rates of 0.92 and 0.96 on AlfWorld, 0.73 and 0.83 on BabyAI, 0.83 and 0.95 on PDDL, and 0.62 and 0.89 on ScienceWorld, averaging 0.78 success and 0.91 progress across datasets. On Gemini 2.5 Flash, ReMem improved over history and ReAct baselines with average success and progress rates of 0.50 and 0.64.

Step efficiency also improved significantly. For example, in AlfWorld, the average number of steps to complete a task dropped from 22.6 with a history baseline to 11.5 using ReMem. Even lightweight approaches like ExpRecent and ExpRAG reduced step counts, indicating that simple task-level experience reuse can enhance efficiency without modifying the backbone architecture.

Further analysis revealed that performance gains correlate strongly with task similarity within datasets. By computing embedding distances from tasks to their cluster centers, the researchers found Pearson correlations of approximately 0.72 for Gemini 2.5 Flash and 0.56 for Claude 3.7 Sonnet between ReMem’s advantage and task similarity. Structured domains such as PDDL and AlfWorld exhibited larger improvements compared to more heterogeneous datasets like AIME 25 or GPQA Diamond.
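
One plausible way to run this kind of analysis is sketched below: measure how tightly each dataset's task embeddings cluster around their centroid, then correlate that score with the per-dataset gain. The function names are hypothetical, cosine similarity to the centroid is an assumed choice of distance measure, and all numbers are made-up placeholders rather than values from the paper.

```python
import math
from typing import List, Sequence


def centroid(vectors: Sequence[Sequence[float]]) -> List[float]:
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def within_dataset_similarity(vectors: Sequence[Sequence[float]]) -> float:
    # Mean cosine similarity between each task embedding and the cluster center.
    c = centroid(vectors)
    return sum(cosine(v, c) for v in vectors) / len(vectors)


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Toy 2-D "embeddings": one tightly clustered dataset, one spread out.
tight = within_dataset_similarity([[1.0, 0.0], [0.9, 0.1]])
spread = within_dataset_similarity([[1.0, 0.0], [0.0, 1.0]])

# Placeholder per-dataset values, NOT results from the paper.
toy_similarity = [0.9, 0.7, 0.5]
toy_gain = [0.30, 0.18, 0.05]
r = pearson(toy_similarity, toy_gain)
print(tight > spread, r)
```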

Summary of Insights and Future Directions

- Evo-Memory offers a comprehensive streaming benchmark that transforms static datasets into ordered task sequences, enabling agents to retrieve, integrate, and update memories dynamically rather than relying solely on static conversational recall.

- The framework conceptualizes memory-augmented agents as a tuple (F, U, R, C) and evaluates over 10 diverse memory modules (including retrieval-based, workflow, and hierarchical memories) across 10 single-turn and multi-turn datasets spanning reasoning, question answering, tool use, and embodied environments.

- ExpRAG establishes a minimal yet effective baseline for experience reuse by storing each task interaction as a structured record and retrieving similar experiences as in-context examples, yielding consistent improvements over pure history-based methods.

- ReMem extends the ReAct loop with an explicit Think, Act, Refine memory control cycle, allowing agents to actively manage and refine their memory during inference, resulting in higher accuracy, success rates, and efficiency in both single-turn and long-horizon interactive tasks.

- Across Gemini 2.5 Flash and Claude 3.7 Sonnet backbones, self-evolving memory approaches like ExpRAG and especially ReMem enable smaller models to perform comparably to stronger agents at test time, enhancing exact match, success, and progress metrics without any retraining of base model parameters.

Concluding Remarks

Evo-Memory offers a way to evaluate self-evolving memory in LLM agents. By requiring models to operate on sequential task streams rather than isolated prompts, it provides a rigorous testbed for memory architectures. The results for a simple method like ExpRAG and for the more sophisticated ReMem pipeline suggest that agents can improve their behavior at test time without retraining. This research points toward LLM agents that keep learning and adapting from their own experience in real time.