Introducing SIMA 2: Google DeepMind’s Advanced Multi-World Gaming Agent

Google DeepMind has unveiled SIMA 2, an innovative AI agent designed to navigate and solve challenges across diverse 3D virtual environments. This development marks a significant stride toward creating versatile agents capable of handling complex, real-world robotic tasks.

Building on Gemini: A Leap in AI Capability

Unlike its predecessor, SIMA 2 is built on Gemini, DeepMind’s large language model, which substantially improves its problem-solving and interaction skills. This integration enables SIMA 2 to carry out intricate tasks autonomously, converse naturally with users, and refine its abilities through trial and error.

Complex Problem-Solving in Virtual Worlds

According to Joe Marino, a research scientist at DeepMind, even seemingly simple in-game actions, such as lighting a lantern, require a sequence of coordinated steps, illustrating the complexity of the tasks SIMA 2 must master. The agent’s ability to break down and execute such multi-step processes is a foundational capability for future AI systems.

From Virtual Games to Real-World Robotics

The overarching goal for DeepMind is to develop AI agents that can follow open-ended instructions and operate in environments far more intricate than web browsers or traditional games. Skills such as spatial navigation, tool usage, and collaborative problem-solving that SIMA 2 demonstrates are critical for the next generation of robotic assistants designed to interact seamlessly with humans.

Distinct Approach Compared to Past Game-Playing AIs

Unlike earlier DeepMind systems such as AlphaZero, which mastered games with fixed objectives such as Go, or AlphaStar, which excelled at StarCraft II, SIMA 2 is trained to operate in open-ended scenarios without predefined goals. Instead, it learns to interpret and execute instructions provided by human users, making it more adaptable to varied tasks.

Multimodal Interaction and Perception

SIMA 2 receives commands through multiple channels: text chat, voice input, and direct screen annotations. It processes the game’s visual data frame by frame, enabling it to determine the necessary actions to fulfill its objectives. This multimodal interface enhances its flexibility and responsiveness in dynamic virtual settings.
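To make the frame-by-frame loop concrete, here is a minimal Python sketch of an agent that picks one keyboard-and-mouse action per frame, conditioned on an instruction. It is an illustration only, not DeepMind’s implementation: the `Action` type, `run_agent`, and `toy_policy` are hypothetical names, and the brightness rule stands in for whatever model actually maps pixels to inputs.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple

# Hypothetical stand-in for a game frame: a 2D grid of grayscale pixel values.
Frame = List[List[int]]


@dataclass
class Action:
    """Hypothetical low-level input: keys held down plus a mouse movement."""
    keys: Tuple[str, ...] = ()
    mouse_dx: int = 0
    mouse_dy: int = 0


# A policy maps (current frame, instruction text) to one action.
Policy = Callable[[Frame, str], Action]


def run_agent(frames: Iterable[Frame], instruction: str, policy: Policy) -> List[Action]:
    """Choose one action per incoming frame for the given instruction."""
    return [policy(frame, instruction) for frame in frames]


def toy_policy(frame: Frame, instruction: str) -> Action:
    """Toy rule (an assumption for illustration): walk forward when the
    frame is bright, otherwise turn right to look around."""
    pixel_count = len(frame) * len(frame[0])
    brightness = sum(sum(row) for row in frame) / pixel_count
    if brightness > 127:
        return Action(keys=("w",))
    return Action(mouse_dx=10)


frames = [
    [[200, 200], [200, 200]],  # bright frame
    [[10, 10], [10, 10]],      # dark frame
]
actions = run_agent(frames, "walk to the light", toy_policy)
```

In this sketch the instruction is passed to the policy on every frame, which reflects the article’s point that the agent acts on user commands rather than on a goal built into the game.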

Training Regimen and Performance Enhancements

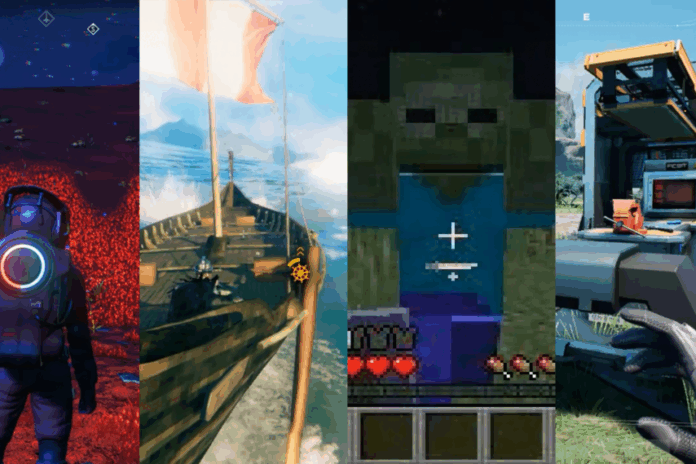

The agent was trained on gameplay footage from eight commercial titles, including No Man’s Sky and Goat Simulator 3, alongside three proprietary virtual worlds developed by DeepMind. By learning to associate keyboard and mouse inputs with in-game actions, SIMA 2 developed a robust understanding of diverse control schemes.

With Gemini’s support, SIMA 2 exhibits improved instruction-following capabilities, actively asking clarifying questions and providing progress updates. This feedback loop allows it to tackle increasingly complex challenges more effectively.

Adapting to Unseen Environments

In rigorous testing, SIMA 2 was placed in entirely new virtual worlds generated by Genie 3, DeepMind’s environment-creation system. Remarkably, the agent successfully navigated and executed tasks in these unfamiliar settings, demonstrating strong generalization skills.

Moreover, Gemini dynamically generated new tasks and offered strategic hints when SIMA 2 initially failed, enabling the agent to learn from repeated attempts and improve through trial and error, a process akin to human learning.
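The retry-with-hints pattern described here can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the actual training procedure: `practice`, `toy_attempt`, and `toy_hint` are hypothetical names, and the canned hints stand in for feedback that a language model would generate.

```python
from typing import Callable, List, Optional, Tuple


def practice(
    task: str,
    attempt: Callable[[str, List[str]], bool],
    give_hint: Callable[[str, int], str],
    max_tries: int = 3,
) -> Tuple[Optional[int], List[str]]:
    """Retry a task, collecting one new hint after each failed attempt.

    Returns (number of the successful try, hints given), or (None, hints)
    if every try failed.
    """
    hints: List[str] = []
    for try_number in range(1, max_tries + 1):
        if attempt(task, hints):
            return try_number, hints
        hints.append(give_hint(task, try_number))
    return None, hints


def toy_attempt(task: str, hints: List[str]) -> bool:
    """Toy agent (an assumption): succeeds only once it has the key hint."""
    return "find fuel first" in hints


def toy_hint(task: str, failed_tries: int) -> str:
    """Toy hint source (an assumption): returns canned hints in order."""
    canned = ["look for a lantern", "find fuel first"]
    return canned[min(failed_tries, len(canned)) - 1]


tries, hints = practice("light the lantern", toy_attempt, toy_hint, max_tries=5)
```

Here the toy agent fails twice, receives two hints, and succeeds on its third try. In a real system the accumulated experience would presumably feed back into training rather than being passed along as a list of strings.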

Current Limitations and Future Directions

Despite its advances, SIMA 2 struggles with tasks that require long sequences of actions and take more time to complete. To keep the agent responsive, the team limited its long-term memory, which currently restricts how much of its past interactions it can recall. Its proficiency with mouse and keyboard controls also remains below that of human players.

Expert Perspectives on SIMA 2’s Impact

Julian Togelius, an AI and game design expert at New York University, acknowledges the difficulty of training a single AI to master multiple games purely from visual input, calling it “hard mode.” He notes that previous systems like DeepMind’s Gato struggled to transfer skills across diverse virtual environments, which makes SIMA 2’s progress noteworthy.

Togelius is cautiously optimistic about the transition from virtual agents to real-world robots. He emphasizes that a physical robot benefits from consistent feedback from its own body, whereas an AI agent in games must cope with varied and sometimes unpredictable rules.

Conversely, Matthew Guzdial, an AI researcher at the University of Alberta, expresses skepticism regarding SIMA 2’s generalizability. He points out that many video games share similar control schemes, which may inflate the agent’s apparent versatility. Guzdial also stresses the complexity of interpreting real-world visual data compared to the simplified, human-friendly graphics in games, questioning how well SIMA 2’s skills will transfer to physical robotics.

Looking Ahead: Endless Virtual Training and Beyond

DeepMind plans to continue refining SIMA 2 within an evolving virtual training environment powered by Genie 3, where the agent can endlessly practice and learn from new challenges generated on the fly, guided by Gemini’s intelligent feedback. As Marino stated, this is just the beginning of exploring the vast potential of such adaptable AI agents.