Google DeepMind has unveiled SIMA 2, a generalist embodied agent designed to explore the limits of autonomous interaction within intricate 3D gaming environments. Where its predecessor, SIMA (Scalable Instructable Multiworld Agent), was limited to following instructions, SIMA 2 is built on Gemini and can also reason about objectives, articulate its strategies, and improve through self-directed learning across diverse virtual worlds.

Evolution from SIMA 1 to SIMA 2: A Leap in Embodied AI

Originally launched in early 2024, SIMA 1 learned over 600 language-based commands such as “turn left,” “climb the ladder,” and “open the map.” It operated solely through pixel inputs and a virtual keyboard and mouse interface, without any direct access to the underlying game mechanics. Under these constraints, SIMA 1 achieved a task success rate of approximately 31%, compared with around 71% for human players on the same challenges.

SIMA 2 retains the embodied interaction framework but replaces the core decision-making engine with the Gemini 2.5 Flash-Lite model. This upgrade turns the agent from a reactive instruction follower into a collaborator that formulates internal plans, reasons through language, and executes complex action sequences. DeepMind characterizes this shift as evolving from a simple command executor to an interactive gaming partner that cooperates with users.

Gemini-Powered Architecture: Integrating Reasoning and Control

At the heart of SIMA 2 lies the Gemini model, which processes visual inputs and user commands to infer high-level goals before generating corresponding actions via the virtual keyboard and mouse. Training combines human gameplay demonstrations annotated with language labels and self-generated annotations from Gemini, enabling the agent to align its reasoning with both human intent and its own behavioral descriptions.
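The perceive, reason, act cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Action` and `Decision` types, the `toy_reasoner` function, and the key bindings are hypothetical stand-ins, and the rule-based reasoner merely occupies the slot where the real system would query the Gemini model. It is not DeepMind's implementation.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Action:
    """One virtual keyboard-and-mouse command (hypothetical format)."""
    keys: Tuple[str, ...] = ()
    mouse_dx: int = 0
    mouse_dy: int = 0


@dataclass(frozen=True)
class Decision:
    goal: str          # high-level goal inferred from the instruction
    explanation: str   # language the agent could show to the user
    action: Action     # low-level command sent to the game


# A "reasoner" stands in for the multimodal model: (pixels, instruction) -> Decision.
Reasoner = Callable[[bytes, str], Decision]


def toy_reasoner(frame: bytes, instruction: str) -> Decision:
    """Rule-based stand-in; it ignores the frame, which a real model would not."""
    text = instruction.lower()
    if "left" in text:
        return Decision("face left", "Turning left as asked.", Action(mouse_dx=-90))
    if "map" in text:
        return Decision("open map", "Pressing the map key.", Action(keys=("m",)))
    return Decision("explore", "No known goal; moving forward.", Action(keys=("w",)))


def run_episode(reasoner: Reasoner, frames: List[bytes], instruction: str) -> List[Decision]:
    """Call the reasoner once per frame; only pixels and text go in."""
    return [reasoner(frame, instruction) for frame in frames]


decisions = run_episode(toy_reasoner, [b"frame0", b"frame1"], "Open the map")
for d in decisions:
    print(d.goal, "|", d.explanation, "|", d.action)
```

The point of the sketch is the interface: the agent receives only screen pixels and an instruction, and everything it does leaves through keyboard and mouse commands, with the inferred goal and explanation carried alongside each action.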

This training approach empowers SIMA 2 to transparently communicate its intentions, outline planned steps, and provide justifications for its decisions. Consequently, the agent can engage in meaningful dialogue about its objectives and reveal a clear, interpretable reasoning process grounded in the game environment.

Enhanced Generalization and Robust Performance

Performance evaluations show that SIMA 2 nearly doubles the task completion rate of its predecessor, achieving around 62% success on DeepMind’s primary benchmark suite, while human players reach approximately 70%. The gap to human performance thus shrinks from roughly 40 percentage points for SIMA 1 to under 10, a substantial gain on complex, language-driven missions.

When tested on unseen games like ASKA and MineDojo (titles excluded from its training data), SIMA 2 continues to outperform SIMA 1 by a wide margin. This improvement points to genuine generalization to new environments rather than memorization of the training games. The agent also transfers abstract concepts across games, such as applying its understanding of “mining” in one environment to “harvesting” in another, showcasing flexible knowledge reuse.

Multimodal Command Interpretation: Beyond Text

SIMA 2 expands its instruction comprehension beyond written language, adeptly responding to spoken commands, interpreting hand-drawn sketches, and even executing tasks prompted solely by emojis. For instance, when instructed to “go to the house that is the color of a ripe tomato,” the Gemini core deduces that ripe tomatoes are red and navigates accordingly to the red house.

Moreover, the system supports multiple natural languages and can process mixed-modal inputs combining text and visual cues. This multimodal integration offers a valuable framework for robotics and physical AI developers, providing a unified representation that links language, audio, imagery, and control actions to ground abstract symbols in concrete behaviors.

Autonomous Learning and Continuous Self-Enhancement

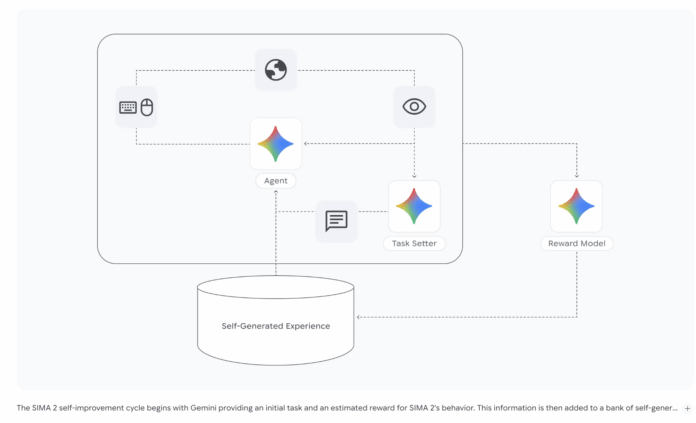

A standout feature of SIMA 2 is its self-improvement mechanism. After initial training on human gameplay data, the agent is deployed in new game environments where it learns exclusively from its own experiences. A dedicated Gemini-based model generates novel tasks, while a reward model evaluates the agent’s performance, creating a feedback loop that drives learning.

These self-generated gameplay trajectories are stored in a growing dataset, which subsequent versions of SIMA 2 utilize during training. This iterative process enables the agent to master tasks that earlier iterations could not, all without additional human input. This approach exemplifies a multitask, model-in-the-loop learning system where language models define objectives and provide feedback, and the embodied agent refines its policies accordingly.
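The loop described in this section (a task generator proposes tasks, the agent attempts them, a reward model scores the attempts, and later generations train on the accumulated data) can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the task list, the `score` function, and `ToyAgent` are hypothetical stand-ins for the Gemini-based task generator, the reward model, and the embodied policy, and the "training" step is a simple best-trajectory lookup rather than anything DeepMind has described.

```python
import random
from dataclasses import dataclass
from typing import Dict, List, Tuple

KEYS = "wasd"
STEPS = 4
# Hypothetical tasks, each solved by repeating one key.
TASKS = {"move forward": "w", "strafe left": "a", "move back": "s", "strafe right": "d"}


@dataclass(frozen=True)
class Trajectory:
    task: str
    actions: Tuple[str, ...]
    reward: float


def generate_task(rng: random.Random) -> str:
    """Stand-in for the model that proposes new tasks."""
    return rng.choice(sorted(TASKS))


def score(task: str, actions: Tuple[str, ...]) -> float:
    """Stand-in for the reward model: fraction of correct key presses."""
    return sum(a == TASKS[task] for a in actions) / len(actions)


class ToyAgent:
    """Keeps the best trajectory seen per task and perturbs it to explore."""

    def __init__(self) -> None:
        self.best: Dict[str, Trajectory] = {}

    def act(self, task: str, rng: random.Random) -> Tuple[str, ...]:
        known = self.best.get(task)
        if known is None:
            return tuple(rng.choice(KEYS) for _ in range(STEPS))
        actions = list(known.actions)
        actions[rng.randrange(STEPS)] = rng.choice(KEYS)  # small exploration step
        return tuple(actions)

    def train(self, dataset: List[Trajectory]) -> None:
        for t in dataset:
            prev = self.best.get(t.task)
            if prev is None or t.reward > prev.reward:
                self.best[t.task] = t


def self_improve(generations: int, episodes: int, seed: int = 0):
    rng = random.Random(seed)
    agent, dataset = ToyAgent(), []
    for _ in range(generations):
        for _ in range(episodes):
            task = generate_task(rng)
            actions = agent.act(task, rng)
            dataset.append(Trajectory(task, actions, score(task, actions)))
        agent.train(dataset)  # the next generation learns from all data so far
    return agent, dataset


agent, dataset = self_improve(generations=5, episodes=20)
for task, best in sorted(agent.best.items()):
    print(f"{task}: best reward {best.reward:.2f}")
```

No human demonstrations enter this loop after initialization: the only learning signals are the generated tasks and the scores, which mirrors the structure of the process described above even though the components are drastically simplified.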

Exploring New Frontiers with Genie 3 Worlds

To further challenge SIMA 2’s adaptability, DeepMind integrates it with Genie 3, a generative world model capable of creating interactive 3D environments from a single image or textual description. Within these procedurally generated worlds, the agent must orient itself, interpret instructions, and accomplish goals despite unfamiliar layouts and assets.

Results show that SIMA 2 can navigate these novel environments, recognize objects like benches and trees, and perform coherent actions. This suggests the agent can operate across both commercial games and dynamically generated virtual spaces using the same reasoning core and control interface.

Summary of Key Innovations

- Gemini-Centric Design: SIMA 2 employs Gemini 2.5 Flash-Lite as its central reasoning and planning engine, integrated with a visuomotor control system that translates pixel inputs into game actions via a virtual keyboard and mouse.

- Significant Performance Gains: The agent nearly doubles the task success rate of SIMA 1 on DeepMind’s benchmark (around 62% versus 31%), coming within about ten percentage points of human players, and it outperforms SIMA 1 in previously unseen game environments.

- Advanced Multimodal Instruction Handling: SIMA 2 processes complex, compositional commands delivered through speech, sketches, emojis, and multiple languages, grounding these inputs in a shared representation that links perception and action.

- Self-Directed Learning Loop: Utilizing a Gemini-based task generator and reward evaluator, the agent continuously improves by learning from its own gameplay data, reducing reliance on human demonstrations.

- Robust Generalization with Genie 3: Integration with the Genie 3 world model enables SIMA 2 to transfer skills to procedurally generated 3D environments, marking a step toward versatile embodied agents capable of real-world robotic applications.

Final Thoughts

SIMA 2 represents a pivotal advancement in embodied AI systems, showcasing how the fusion of a streamlined Gemini model with multimodal perception, language-driven planning, and a self-improving training loop can yield a highly capable agent. Validated across both commercial games and procedurally generated worlds, this architecture lays a promising foundation for the development of general-purpose robotic agents that can operate effectively in complex, dynamic environments.