Google has unveiled what it describes as its most advanced artificial intelligence infrastructure yet, introducing a seventh-generation TPU (Tensor Processing Unit) system designed to address the rapidly growing need for AI model deployment. This marks a pivotal industry shift from primarily focusing on training AI models to efficiently serving them to billions of users worldwide.

The centerpiece of this announcement is Google’s latest custom AI accelerator chip, Ironwood, which will be available to customers in the near future. Highlighting the chip’s significance, Anthropic, the AI safety company behind the Claude series of models, revealed plans to use up to one million of these chips, a deal valued in the tens of billions of dollars and one of the largest AI infrastructure commitments on record.

From Training to Serving: The New Era of AI Inference

Google executives emphasize that the AI industry is entering what they call “the age of inference,” where the primary focus shifts from developing and training cutting-edge models to deploying them at scale for real-world applications. This transition reflects the growing demand for AI-powered services that handle millions or even billions of user interactions daily.

Amin Vahdat, Google Cloud’s VP and GM of AI and Infrastructure, explained, “Leading models like Google’s Gemini, Veo, Imagen, and Anthropic’s Claude are trained and served on TPUs. However, many organizations are now prioritizing the deployment phase: delivering fast, reliable, and interactive AI experiences.”

Unlike training, which can tolerate batch processing and longer runtimes, inference requires ultra-low latency, high throughput, and consistent uptime. For example, an AI-powered virtual assistant that takes several seconds to respond or frequently times out quickly becomes impractical, regardless of the model’s sophistication.

Moreover, emerging agentic AI workflows, in which systems autonomously perform tasks rather than just responding to queries, introduce additional infrastructure complexity. These workflows demand seamless integration between specialized AI accelerators and general-purpose computing resources.

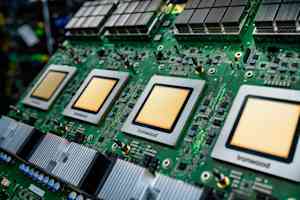

Ironwood Architecture: A Supercomputer Comprising 9,216 Chips

Ironwood represents a substantial leap beyond Google’s previous TPU generation, Trillium. According to Google’s technical disclosures, it delivers more than four times the per-chip performance of its predecessor for both training and inference workloads. Google attributes this improvement to holistic system-level co-design rather than merely increasing transistor density.

The most remarkable aspect of Ironwood is its scale. A single Ironwood pod integrates up to 9,216 TPU chips. They are tied together by Google’s proprietary Optical Circuit Switch (OCS) fabric and by an Inter-Chip Interconnect (ICI) network that runs at 9.6 terabits per second. By one comparison, that bandwidth is roughly equivalent to downloading the entire Library of Congress in under two seconds.
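Network rates are quoted in bits but storage is measured in bytes, so the 9.6 Tb/s figure is easier to compare with data sizes after a unit conversion. The sketch below is plain arithmetic. It assumes decimal SI units and treats 9.6 Tb/s as a single sustained rate, because the figure alone does not say whether it is per chip or aggregate. The function names are illustrative.

```python
# Unit conversion for the 9.6 Tb/s interconnect figure.
# Assumes decimal units (1 Tb = 10**12 bits, 1 TB = 10**12 bytes), the usual
# convention for quoting network bandwidth.

BITS_PER_BYTE = 8


def tbits_to_tbytes_per_s(tbits_per_s: float) -> float:
    """Convert a rate in terabits per second to terabytes per second."""
    return tbits_per_s / BITS_PER_BYTE


def terabytes_moved(tbits_per_s: float, seconds: float) -> float:
    """Terabytes transferred at a sustained rate over a time window."""
    return tbits_to_tbytes_per_s(tbits_per_s) * seconds


rate = tbits_to_tbytes_per_s(9.6)
window = terabytes_moved(9.6, seconds=2.0)
print(f"{rate:.1f} TB/s, about {window:.1f} TB in two seconds")
```

At 1.2 TB/s, a two-second window moves about 2.4 TB. That is the data volume the Library of Congress comparison implicitly assumes.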

This interconnect gives all the chips in a pod shared access to 1.77 petabytes of High Bandwidth Memory (HBM). That is roughly enough to store 40,000 full-length 4K movies, all directly accessible to the processing units. Google also claims Ironwood pods deliver 118 times more FP8 ExaFLOPS than the nearest competitor.
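Dividing the pod-level memory figure by the chip count shows what each chip contributes. The same division shows how large each "movie" in the 40,000-movie comparison would be. This is a back-of-the-envelope sketch assuming decimal units and an even split. The helper name `even_split_gb` is hypothetical.

```python
# Splitting the pod-level HBM figure evenly, assuming decimal units
# (1 PB = 10**15 bytes, 1 GB = 10**9 bytes).

POD_CHIPS = 9_216
POD_HBM_PB = 1.77


def even_split_gb(total_pb: float, parts: int) -> float:
    """Size of each share, in GB, when total_pb petabytes is divided evenly."""
    return total_pb * 1e15 / parts / 1e9


per_chip = even_split_gb(POD_HBM_PB, POD_CHIPS)   # memory attached to each chip
per_movie = even_split_gb(POD_HBM_PB, 40_000)     # implied size of one 4K movie
print(f"~{per_chip:.0f} GB per chip, ~{per_movie:.0f} GB per movie")
```

The result is about 192 GB per chip, which matches the per-chip HBM capacity Google lists for Ironwood. Each movie in the comparison comes out at about 44 GB.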

The OCS technology also provides dynamic, reconfigurable data routing. In the event of hardware failures or maintenance, the system automatically reroutes data traffic within milliseconds, ensuring uninterrupted operation without noticeable impact on users.
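Google has not published how its fabric chooses replacement routes. An optical circuit switch also reconfigures physical light paths rather than running a software search. Still, the general idea of routing around a failed link can be sketched with a breadth-first search. The topology here is a hypothetical six-chip ring, and `shortest_path` is an illustrative name rather than any Google API.

```python
from collections import deque


def shortest_path(links, src, dst, failed=frozenset()):
    """Breadth-first search over undirected links, skipping any that are down.

    `links` is an iterable of (a, b) pairs; `failed` holds links that are out
    of service, in either orientation. Returns the hop list or None.
    """
    adj = {}
    for a, b in links:
        if (a, b) in failed or (b, a) in failed:
            continue  # treat this link as unavailable
        adj.setdefault(a, []).append(b)
        adj.setdefault(b, []).append(a)

    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:  # walk back to the source
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in adj.get(node, []):
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None  # destination unreachable


# Hypothetical 6-chip ring: 0-1-2-3-4-5-0.
ring = [(i, (i + 1) % 6) for i in range(6)]
print(shortest_path(ring, 0, 2))                   # [0, 1, 2]
print(shortest_path(ring, 0, 2, failed={(1, 2)}))  # [0, 5, 4, 3, 2]
```

When the 1–2 link fails, traffic from chip 0 to chip 2 goes the long way around the ring. This is the kind of detour a reconfigurable fabric makes possible without taking the pod offline.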

Google’s experience running earlier TPU generations has informed a strong emphasis on reliability. Since 2020, its liquid-cooled TPU fleet has maintained 99.999% uptime, which equates to less than six minutes of downtime per year.
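The "less than six minutes" figure follows directly from the availability percentage. The sketch below shows that arithmetic, assuming a 365-day year. The function name is illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; ignores leap years


def annual_downtime_minutes(availability: float) -> float:
    """Maximum downtime per year permitted by an availability fraction."""
    return (1.0 - availability) * MINUTES_PER_YEAR


for availability in (0.999, 0.9999, 0.99999):
    print(f"{availability:.3%} uptime -> {annual_downtime_minutes(availability):.2f} min/year")
```

Each additional "nine" cuts allowed downtime by a factor of ten, from about 525.6 minutes at 99.9% to about 5.26 minutes at 99.999%.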

Anthropic’s Multi-Billion Dollar Commitment Validates Google’s Custom Silicon Approach

Anthropic’s decision to secure access to up to one million Ironwood chips underscores the growing confidence in Google’s custom silicon strategy. This scale of deployment is extraordinary, especially considering that clusters of 10,000 to 50,000 accelerators are typically regarded as large in the AI infrastructure space.

Krishna Rao, Anthropic’s CFO, stated, “Our longstanding partnership with Google and this expansion will enable us to meet the surging compute demands necessary to push AI frontiers. Our clients, from Fortune 500 firms to AI-native startups, rely on Claude for mission-critical tasks, and this capacity growth is essential to support their exponential usage.”

Anthropic expects to access over a gigawatt of power capacity by 2026, enough to power a small city. The company cited the TPUs’ superior price-performance and energy efficiency, along with its extensive experience training and serving models on Google’s hardware, as key factors in its commitment.

Industry analysts estimate that, once chips, networking, power, and cooling are factored in, this deal ranks among the largest cloud commitments ever made. James Bradbury, Anthropic’s head of compute, cited Ironwood’s faster inference and greater training scalability as critical to meeting customers’ expectations for speed and reliability.

Complementing AI Accelerators: Google’s Axion CPUs for General-Purpose Workloads

In addition to Ironwood, Google introduced expanded offerings in its Axion line of custom Arm-based CPUs. These processors are optimized for general-purpose tasks that support AI applications but do not require specialized accelerators.

The new N4A instance, currently in preview, targets the general-purpose workloads that keep AI applications running. These include microservices, containerized applications, open-source databases, batch processing, data analytics, development environments, and web serving. Google claims N4A delivers up to twice the price-performance of comparable x86-based virtual machines.

Google also launched its first bare-metal Arm instance, designed for specialized workloads including Android development, automotive systems, and software with strict licensing constraints.

This dual approach, pairing powerful AI accelerators like TPUs with efficient general-purpose CPUs, reflects a broader industry trend. While TPUs handle the heavy lifting of AI model computation, Axion processors manage data ingestion, preprocessing, application logic, API serving, and other critical tasks within AI stacks.

Early adopters report significant benefits: Vimeo observed a 30% performance boost in core transcoding workloads compared to x86 VMs, while ZoomInfo noted a 60% improvement in price-performance for Java-based data pipelines.
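Price-performance gains are easy to misread as cost savings of the same size. This sketch assumes price-performance means work done per dollar, a common definition that the announcement does not spell out. Under that assumption, it converts a gain into the cost change for a fixed workload. Vimeo’s 30% figure is a raw performance number, so it is left out. The function name is illustrative.

```python
def relative_cost(price_perf_gain: float) -> float:
    """Cost of a fixed amount of work after a price-performance gain.

    Price-performance is taken to mean work done per dollar, so a gain of
    0.6 (60%) means 1.6x the work per dollar. Returns new cost / old cost.
    """
    return 1.0 / (1.0 + price_perf_gain)


for label, gain in (("60% better (ZoomInfo)", 0.6), ("up to 2x (N4A claim)", 1.0)):
    saving = 1.0 - relative_cost(gain)
    print(f"{label}: same work costs {saving:.1%} less")
```

A 60% price-performance improvement means the same job costs 37.5% less, not 60% less. Doubling price-performance halves the cost.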

Maximizing Hardware Potential Through Advanced Software Ecosystems

Raw hardware power is only valuable if developers can effectively utilize it. Google integrates its TPU and Axion hardware into a unified platform called AI Hypercomputer, which combines compute, networking, storage, and software to optimize overall system performance and efficiency.

An October 2025 IDC study found that customers using AI Hypercomputer achieved an average three-year return on investment of 353%. The same customers reported 28% lower IT costs and 55% more efficient IT teams.
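A 353% ROI is a net figure: benefits exceed costs by 3.53 times the amount spent. This sketch uses the simple, undiscounted ROI formula, ROI = (benefits − costs) / costs. IDC’s exact methodology, including any discounting, is not described here, so treat this as an assumption. The spend value and function names are hypothetical.

```python
def simple_roi(benefits: float, costs: float) -> float:
    """Undiscounted return on investment: net gain divided by amount spent."""
    return (benefits - costs) / costs


def benefits_for_roi(roi: float, costs: float) -> float:
    """Total benefits implied by a given ROI on a given spend."""
    return costs * (1.0 + roi)


spend = 1.0  # hypothetical three-year spend, in any currency unit
benefits = benefits_for_roi(3.53, spend)
print(f"benefits ~ {benefits:.2f}x spend, ROI = {simple_roi(benefits, spend):.0%}")
```

Read this way, the reported average corresponds to total benefits of roughly 4.5 times the three-year spend.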

Google has introduced several software enhancements to take full advantage of Ironwood. The TPU management system now supports advanced maintenance and topology-aware scheduling, enabling resilient and efficient cluster operations. In addition, Google’s AI training framework supports post-training techniques such as Supervised Fine-Tuning (SFT) and Group Relative Policy Optimization (GRPO).
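GRPO stands for Group Relative Policy Optimization, a reinforcement-learning method introduced in DeepSeek’s DeepSeekMath work. Its distinguishing step is how it scores responses. It samples a group of responses to the same prompt and normalizes each reward against the group, instead of training a separate value network. The minimal sketch below covers only that advantage calculation. The full objective also includes a clipped policy-ratio term and a KL penalty. This is not Google’s implementation, and the function name is illustrative.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Score each sampled response relative to its own group.

    Advantage = (reward - group mean) / (group std + eps). Responses that beat
    their siblings get positive advantages; weaker ones get negative ones.
    `eps` avoids division by zero when every reward in the group is equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four hypothetical responses to one prompt, scored 1 (correct) or 0 (wrong).
adv = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print([round(a, 3) for a in adv])  # [1.0, -1.0, -1.0, 1.0]
```

Because the baseline comes from sibling samples, GRPO avoids the memory and compute cost of a learned critic. That is part of its appeal for post-training large models.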

For production environments, Google says its model serving platform balances inference requests across servers intelligently, reducing latency by up to 96% and cutting serving costs by as much as 30%. One technique behind this is prefix-cache-aware routing. It sends requests that share the same opening context, such as a system prompt or earlier conversation turns, to a server that has already processed that context, avoiding redundant computation. This is particularly beneficial for conversational AI applications.
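The core idea of prefix-cache-aware routing is simple. Send each request to the server most likely to have already computed its opening context, and fall back to the least-busy server otherwise. The sketch below is a simplified illustration, not Google’s router. It compares raw characters rather than tokens or KV-cache blocks, and it never decrements load. `Replica`, `route`, and `common_prefix_len` are hypothetical names.

```python
from dataclasses import dataclass, field


def common_prefix_len(a: str, b: str) -> int:
    """Count the leading characters two prompts share."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


@dataclass
class Replica:
    """Hypothetical model server that remembers prompts it has processed."""
    name: str
    load: int = 0
    cached_prompts: list = field(default_factory=list)

    def prefix_hit(self, prompt: str) -> int:
        """Longest prefix of `prompt` this replica has already seen."""
        return max((common_prefix_len(prompt, p) for p in self.cached_prompts), default=0)


def route(prompt: str, replicas: list) -> Replica:
    """Pick the longest cached prefix; break ties by lowest load."""
    chosen = max(replicas, key=lambda r: (r.prefix_hit(prompt), -r.load))
    chosen.load += 1                      # sketch only: load is never released
    chosen.cached_prompts.append(prompt)  # pretend its KV cache now holds this prompt
    return chosen


replicas = [Replica("a"), Replica("b")]
system = "You are a helpful assistant.\n"
turn1 = route(system + "User: hi", replicas)
turn2 = route(system + "User: hi\nAssistant: Hello!\nUser: more", replicas)
other = route("Translate to French: cat", replicas)
print(turn1.name, turn2.name, other.name)  # a a b
```

The follow-up turn lands on the replica that already holds the conversation’s prefix. The unrelated request goes to the idle replica.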

Overcoming Physical Infrastructure Challenges: Power and Cooling at Scale

Supporting such massive AI infrastructure requires addressing significant power and cooling demands. At a recent industry event, Google revealed plans to implement direct current (DC) power delivery systems capable of supplying up to one megawatt per server rack, approximately ten times the power density of typical data center racks.

Principal engineers Madhusudan Iyengar and Amber Huffman explained, “The AI era demands unprecedented power delivery capabilities. Machine learning workloads will require over 500 kW per rack by 2030.”

Google is collaborating with Meta and Microsoft to standardize high-voltage DC distribution interfaces, leveraging supply chains developed for electric vehicles to achieve economies of scale, improved manufacturing efficiency, and enhanced quality.
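Higher distribution voltage matters because, for a fixed power, current falls as voltage rises (I = P / V). Resistive losses scale with I²R. The sketch applies that relation to a 1 MW rack. The 48 V value is a common rack bus voltage today. The 400 V and 800 V values are illustrative examples of high-voltage DC, not a confirmed Google specification.

```python
def bus_current_amps(power_watts: float, volts: float) -> float:
    """Current needed to deliver a given power at a given DC voltage (I = P / V)."""
    return power_watts / volts


RACK_POWER_W = 1_000_000  # the 1 MW per-rack figure discussed above

for volts in (48, 400, 800):  # illustrative voltages, not a confirmed spec
    print(f"{volts:>4} V -> {bus_current_amps(RACK_POWER_W, volts):,.0f} A")
```

At 48 V, a 1 MW rack would draw roughly 20,800 A, versus 1,250 A at 800 V. With the same conductors, that 16.7x drop in current cuts conduction losses by a factor of about 278.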

On the cooling front, Google will contribute its fifth-generation liquid cooling design to the Open Compute Project. Over the past seven years, Google has deployed liquid cooling at gigawatt scale across more than 2,000 TPU pods while maintaining fleet-wide availability of 99.999%. Liquid cooling matters because water can carry roughly 4,000 times more heat per unit volume than air. That advantage becomes essential as individual AI chips dissipate upwards of 1,000 watts.
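The water-versus-air comparison comes from volumetric heat capacity, density times specific heat. The same quantity sets how much coolant must flow to carry away a chip’s heat: flow = P / (ρ · c_p · ΔT). This sketch assumes textbook room-temperature properties and a 10 K coolant temperature rise, both chosen for illustration. With these values the ratio comes out near 3,500, the same order of magnitude as the roughly 4,000x cited above. The exact figure depends on the properties assumed.

```python
# Approximate room-temperature properties (assumed textbook values).
WATER_DENSITY = 997.0  # kg/m^3
WATER_CP = 4186.0      # J/(kg*K)
AIR_DENSITY = 1.2      # kg/m^3
AIR_CP = 1005.0        # J/(kg*K)


def volumetric_heat_capacity(density: float, cp: float) -> float:
    """Heat absorbed per cubic metre per kelvin of temperature rise, J/(m^3*K)."""
    return density * cp


def flow_litres_per_min(power_w: float, density: float, cp: float, delta_t: float) -> float:
    """Coolant flow needed to remove power_w with a delta_t rise: P / (rho * cp * dT)."""
    m3_per_s = power_w / (volumetric_heat_capacity(density, cp) * delta_t)
    return m3_per_s * 1000 * 60  # m^3/s -> litres per minute


ratio = volumetric_heat_capacity(WATER_DENSITY, WATER_CP) / volumetric_heat_capacity(AIR_DENSITY, AIR_CP)
water = flow_litres_per_min(1000, WATER_DENSITY, WATER_CP, delta_t=10)
air = flow_litres_per_min(1000, AIR_DENSITY, AIR_CP, delta_t=10)
print(f"water/air ratio ~ {ratio:,.0f}")
print(f"1 kW chip, 10 K rise: water {water:.2f} L/min vs air {air:,.0f} L/min")
```

For a single 1 kW chip, about 1.4 litres of water per minute does the work of roughly 5,000 litres of air. Across thousands of chips per pod, that gap becomes decisive.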

Challenging Nvidia’s Dominance with Custom Silicon Innovation

Google’s latest announcements arrive at a turning point in the AI infrastructure market. Nvidia currently dominates the AI accelerator space, controlling an estimated 80-95% of the market. However, major cloud providers are increasingly investing in custom silicon to differentiate their offerings and improve cost efficiency.

Amazon Web Services pioneered this trend with its Inferentia and Trainium chips, while Microsoft has developed its own AI accelerators and is reportedly expanding its efforts. Google now boasts the most extensive custom silicon portfolio among leading cloud providers.

Developing custom chips entails significant upfront costs, often in the billions. It also faces challenges such as a less mature software ecosystem than Nvidia’s CUDA platform, which benefits from over 15 years of developer support. In addition, AI model architectures evolve rapidly, so hardware optimized for today’s models risks becoming less effective as new techniques emerge.

Nonetheless, Google argues that its vertically integrated approach, which combines model research, software, and hardware development, enables optimizations unattainable with off-the-shelf components. This strategy traces back to the original TPU, introduced a decade ago, which Google credits with helping enable the Transformer architecture that underpins much of modern AI.

Beyond Anthropic, early feedback from customers like Lightricks, a creative AI tools developer, has been positive. Yoav HaCohen, Lightricks’ research director, expressed enthusiasm about Ironwood’s potential to deliver more nuanced and higher-fidelity image and video generation for millions of users worldwide.

Looking Ahead: The Future of AI Infrastructure

Google’s bold investments raise critical questions for the AI industry: Can the current pace of infrastructure spending be sustained as AI companies collectively commit hundreds of billions of dollars? Will custom silicon prove more cost-effective than Nvidia GPUs in the long run? How will AI model architectures evolve to leverage or challenge existing hardware designs?

For now, Google remains committed to its longstanding philosophy of building custom infrastructure that enables applications beyond the reach of commodity hardware, while offering these capabilities to customers without requiring massive capital outlays.

As AI moves from experimental research to production systems serving billions of people, the underlying infrastructure (silicon, software, networking, power, and cooling) will be as crucial as the AI models themselves.

Anthropic’s willingness to commit to up to one million TPU chips signals that Google’s tailored silicon strategy for the inference era may be hitting its stride just as demand reaches a critical inflection point.