
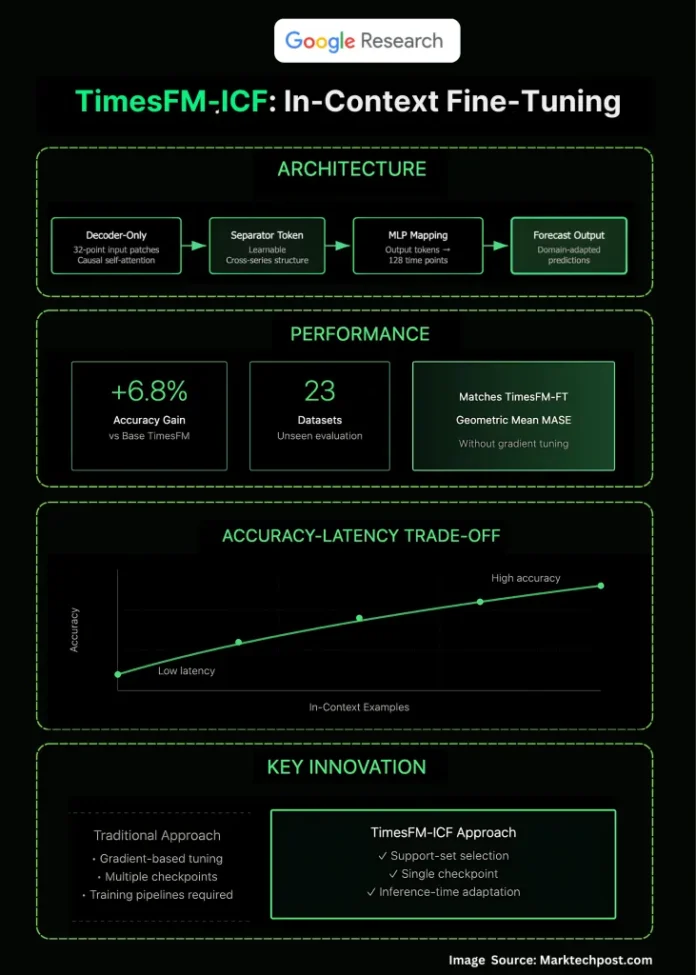

Google Research has introduced in-context fine-tuning (ICF), a method for improving time-series foundation models; applied to TimesFM, it produces TimesFM-ICF. ICF continues the pretraining of TimesFM so that the model can incorporate multiple related time series supplied directly in the inference prompt. The result is a few-shot forecasting model that matches per-dataset supervised fine-tuning in accuracy and improves on the original TimesFM by 6.8% on out-of-distribution (OOD) datasets, without any dataset-specific retraining.

Addressing Key Challenges in Time-Series Forecasting

In many real-world applications, forecasting workflows face a dilemma: either train a dedicated model per dataset through supervised fine-tuning, which yields high accuracy but demands complex MLOps, or rely on zero-shot foundation models that are easy to deploy but lack domain-specific adaptation. TimesFM-ICF offers a middle ground: a single pretrained TimesFM model that adapts at inference time, using a few related series as context. This removes the need to maintain a separate training pipeline for each tenant or dataset.

Mechanics Behind In-Context Fine-Tuning

The foundation is TimesFM, a decoder-only transformer that splits its input into 32-point patches (its tokens) and decodes each output token into a 128-point forecast through a shared multilayer perceptron (MLP). ICF continues its pretraining on sequences that interleave the target series with several related “support” series. A key addition is a learnable separator token placed between series, which lets the model’s causal attention draw patterns from the earlier examples without treating them as one continuous series. The training objective is still next-token (here, next-patch) prediction, but the model learns to reason over several related series at once, a capability it then uses at inference time.
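To make the input layout concrete, here is a minimal Python sketch of how such an interleaved sequence might be assembled: each series is cut into 32-point patches, and a separator sits between consecutive series. The left zero-padding, the padding-mask convention, and the `SEP` placeholder (standing in for the learned separator embedding) are illustrative assumptions, not TimesFM's actual preprocessing code.

```python
import math

PATCH_LEN = 32  # input patch length described for TimesFM
SEP = "SEP"     # hypothetical placeholder for the learnable separator token


def to_patches(series, patch_len=PATCH_LEN):
    """Split a series into fixed-length patches, left-padding with zeros if needed.

    Returns (patches, masks); a mask value of 1 marks a padded position (assumed convention).
    """
    n_patches = math.ceil(len(series) / patch_len)
    pad = n_patches * patch_len - len(series)
    values = [0.0] * pad + [float(x) for x in series]
    mask = [1] * pad + [0] * len(series)
    patches = [values[i:i + patch_len] for i in range(0, len(values), patch_len)]
    masks = [mask[i:i + patch_len] for i in range(0, len(mask), patch_len)]
    return patches, masks


def interleave(series_list, patch_len=PATCH_LEN):
    """Concatenate several series into one token stream, inserting SEP between consecutive series."""
    tokens = []
    for idx, series in enumerate(series_list):
        if idx > 0:
            tokens.append(SEP)
        patches, _ = to_patches(series, patch_len)
        tokens.extend(patches)
    return tokens


support = list(range(40))  # 40 points -> 2 patches (the first one left-padded)
target = list(range(64))   # 64 points -> 2 patches
stream = interleave([support, target])
print(len(stream), stream.index(SEP))  # 5 2
```

The stream holds two support patches, one separator, then two target patches; in the real model each patch would be embedded and the separator would be a trained vector rather than a string.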

Understanding Few-Shot Learning in This Context

During inference, users supply the target series history together with k related time-series snippets, such as sales data from similar products or readings from neighboring sensors, with the separator token between each one. Because the model’s attention layers were trained explicitly on this layout, they can use these in-context examples to improve the forecast. Adaptation thus moves from costly parameter updates to prompt design over structured numeric sequences, analogous to few-shot prompting in large language models but tailored to time-series data.
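The prompt-design step can be sketched as follows. The helper names, the choice to place support snippets before the target history, and the per-snippet truncation rule are assumptions made for illustration; the source specifies only that the target history and k related snippets are joined with separator tokens. The token count (32-point patches plus one separator between segments) gives a rough sense of how the prompt grows with k.

```python
import math

PATCH_LEN = 32  # TimesFM input patch length


def build_icf_prompt(target_history, supports, k, max_support_len):
    """Return prompt segments: up to k support snippets followed by the target history.

    Assumed conventions (hypothetical): supports are used in the given order, each is cut
    to its most recent max_support_len points, and the target comes last so the model
    forecasts its continuation.
    """
    if max_support_len <= 0:
        raise ValueError("max_support_len must be positive")
    chosen = [list(s)[-max_support_len:] for s in supports[:k]]
    return chosen + [list(target_history)]


def prompt_token_count(segments, patch_len=PATCH_LEN):
    """Patch tokens for every segment plus one separator between consecutive segments."""
    patch_tokens = sum(math.ceil(len(s) / patch_len) for s in segments)
    return patch_tokens + max(len(segments) - 1, 0)


target = [0.0] * 512                       # 512-point history -> 16 patches
related = [[1.0] * 300 for _ in range(5)]  # five toy snippets of 300 points each
for k in (0, 1, 3, 5):
    segments = build_icf_prompt(target, related, k, max_support_len=256)
    print(k, prompt_token_count(segments))
```

With these toy sizes the sequence grows from 16 tokens (k = 0) to 25, 43, and 61 tokens for k = 1, 3, and 5. Since standard self-attention cost grows roughly quadratically with sequence length, this count is a simple proxy for the latency cost of adding support series.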

Performance: Does It Rival Traditional Fine-Tuning?

On a 23-dataset OOD benchmark, TimesFM-ICF matches the accuracy of per-dataset supervised fine-tuning (TimesFM-FT) and outperforms the base TimesFM model by 6.8%, measured as the geometric mean of scaled mean absolute scaled error (MASE). The study also reports a trade-off between accuracy and inference latency: adding more in-context examples improves forecast quality but increases computation time. Simply lengthening the context window without structured support examples does not produce comparable gains, which underscores the value of well-chosen support series.
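The headline metric is easier to interpret with the arithmetic spelled out. Below is a minimal sketch, assuming the usual MASE definition (forecast MAE divided by the in-sample MAE of a lag-m naive forecast) and assuming that "scaled" means each dataset's MASE is divided by a baseline's MASE before a geometric mean is taken across datasets; the paper's exact normalization may differ. The per-dataset numbers are toy values, not benchmark results.

```python
import math


def mase(history, actual, forecast, m=1):
    """Mean absolute scaled error with a lag-m naive forecast as the in-sample scale."""
    if not actual or len(actual) != len(forecast):
        raise ValueError("actual and forecast must be non-empty and of equal length")
    if len(history) <= m:
        raise ValueError("history must be longer than the seasonal lag m")
    mae = sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)
    naive = [abs(history[t] - history[t - m]) for t in range(m, len(history))]
    scale = sum(naive) / len(naive)
    if scale == 0:
        raise ValueError("naive forecast has zero in-sample error; MASE is undefined")
    return mae / scale


def geometric_mean(values):
    """Geometric mean of positive values, computed in log space."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def relative_improvement(baseline_scores, new_scores):
    """Fractional error reduction of new_scores vs baseline_scores, via geometric means."""
    return 1.0 - geometric_mean(new_scores) / geometric_mean(baseline_scores)


print(mase([1, 2, 3, 4, 5], [6, 7], [6.5, 6.5]))  # 0.5
# Toy per-dataset scaled MASE values (illustrative only, not from the benchmark).
print(relative_improvement([0.9, 0.8, 1.1], [0.84, 0.75, 1.02]))
```

Under this convention, a `relative_improvement` of 0.068 would correspond to a 6.8% gain. The geometric mean keeps a single dataset with unusually large errors from dominating the aggregate.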

Distinguishing TimesFM-ICF from Other Approaches Like Chronos

Models like Chronos quantize scaled time-series values into a discrete token vocabulary and perform well at zero-shot forecasting, with faster variants such as Chronos-Bolt. TimesFM-ICF’s contribution is different: it lets a time-series foundation model perform few-shot learning by drawing on cross-series context at inference time. This bridges “train-time” adaptation and “prompt-time” adaptation, giving a flexible and efficient alternative for numeric forecasting tasks.
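The contrast with Chronos is clearest at the input layer. Chronos-style tokenization, as commonly described, scales each context window by its mean absolute value and maps the scaled values into uniformly spaced bins, so the model works over a finite vocabulary; TimesFM instead embeds real-valued patches. The sketch below assumes 4094 bins over the range [-15, 15] for illustration. These values and the helper names are assumptions; this is not the Chronos library's code.

```python
def mean_scale(context):
    """Mean absolute value of the context, falling back to 1.0 for an all-zero window."""
    s = sum(abs(x) for x in context) / len(context)
    return s if s > 0 else 1.0


def quantize(values, scale, n_bins=4094, low=-15.0, high=15.0):
    """Map scaled values to integer bin ids in [0, n_bins - 1], clipping out-of-range values."""
    width = (high - low) / n_bins
    ids = []
    for v in values:
        idx = int((v / scale - low) // width)
        ids.append(min(max(idx, 0), n_bins - 1))
    return ids


def dequantize(ids, scale, n_bins=4094, low=-15.0, high=15.0):
    """Map bin ids back to bin centers in the original units."""
    width = (high - low) / n_bins
    return [(low + (i + 0.5) * width) * scale for i in ids]


context = [10.0, 12.0, 9.0, 11.0, 13.0]
scale = mean_scale(context)  # 11.0
tokens = quantize(context, scale)
recovered = dequantize(tokens, scale)
print(tokens)
print(recovered)
```

The round trip loses at most half a bin width (times the scale) per value. That quantization step is exactly what a patch-based model like TimesFM avoids.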

Key Architectural Innovations

The architecture combines several elements: (1) separator tokens that mark the boundaries between series, (2) causal self-attention over mixed sequences of target and support series, (3) the original input patching and shared MLP output head, retained unchanged, and (4) continued pretraining that teaches the model to treat support series as informative exemplars rather than noise. Together, these let the model use related series as contextual guides during forecasting.
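To see why support series placed earlier in the sequence can inform the target, it helps to build the causal mask over a mixed sequence. The sketch below labels each token by the series it comes from, using hypothetical segment names and patch counts, and checks which series the final target token can attend to. It models only the attention pattern, not the learned separator embedding.

```python
def causal_mask(n):
    """Lower-triangular mask: mask[i][j] is True when token i may attend to token j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def layout(patch_counts, names):
    """Token labels for a mixed sequence, with a SEP token between consecutive series."""
    labels = []
    for idx, (count, name) in enumerate(zip(patch_counts, names)):
        if idx > 0:
            labels.append("SEP")
        labels.extend([name] * count)
    return labels


labels = layout([2, 2, 3], ["support_1", "support_2", "target"])
mask = causal_mask(len(labels))

last = len(labels) - 1
print(sorted({labels[j] for j in range(len(labels)) if mask[last][j]}))
# ['SEP', 'support_1', 'support_2', 'target']
print(sorted({labels[j] for j in range(len(labels)) if mask[0][j]}))
# ['support_1']
```

Because attention is causal, information flows only from earlier series to later ones. The final target token can draw on every support series, while no support token ever sees the target, so the position of the target in the sequence matters.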

Concluding Insights

TimesFM-ICF is a significant step for time-series forecasting because it turns a single pretrained model into a few-shot learner. It reaches fine-tuning-level accuracy without the operational burden of dataset-specific retraining, which makes it well suited to multi-tenant environments and latency-sensitive applications. The main control lever becomes the choice of support series: adaptation happens through prompt design rather than model updates.
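The source leaves the choice of support series to the user, so one hypothetical heuristic is sketched below: rank candidate series by the Pearson correlation between their recent window and the target's recent window, then keep the top k. Both the heuristic and the `window` default are assumptions for illustration. Domain knowledge, such as products in the same category or physically neighboring sensors, may be a better guide in practice.

```python
import math


def pearson(x, y):
    """Pearson correlation; returns 0.0 when either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)


def select_supports(target, candidates, k, window=64):
    """Hypothetical heuristic: top-k candidates by correlation over the most recent window."""
    t = target[-window:]
    scored = []
    for name, series in candidates.items():
        n = min(len(t), len(series), window)
        if n < 2:
            continue  # too short to correlate
        scored.append((pearson(t[-n:], series[-n:]), name))
    scored.sort(reverse=True)  # highest correlation first
    return [name for _, name in scored[:k]]


target = [float(i) for i in range(100)]
candidates = {
    "up": [2.0 * i + 1.0 for i in range(100)],
    "wave": [math.sin(i) for i in range(100)],
    "down": [-float(i) for i in range(100)],
}
print(select_supports(target, candidates, k=2))  # ['up', 'wave']
```

Ranking by signed correlation favors series that move with the target. Ranking by absolute correlation instead would also admit inversely related series, which may or may not help a given model.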

Frequently Asked Questions

1) What is in-context fine-tuning (ICF) for time-series forecasting?

ICF is a continued pretraining strategy that conditions the TimesFM model to utilize multiple related time series embedded within the inference prompt, enabling few-shot adaptation without requiring gradient updates on new datasets.

2) How does ICF differ from conventional fine-tuning and zero-shot methods?

Conventional fine-tuning updates model weights for each dataset, and zero-shot methods run a fixed model on the target series alone. ICF also keeps the parameters fixed at deployment, but the model has been trained to exploit additional in-context examples at inference time, which lets it reach accuracy comparable to supervised fine-tuning.

3) What architectural or training modifications enable ICF?

The model is further pretrained on sequences that interleave the target series with support series, separated by a learnable separator token. This lets the causal self-attention layers capture cross-series relationships, while the core decoder-only transformer architecture remains unchanged.

4) How does TimesFM-ICF perform compared to existing baselines?

On out-of-distribution datasets, TimesFM-ICF surpasses the base TimesFM and matches supervised fine-tuning performance. The comparison also includes strong time-series models such as PatchTST and earlier foundation models such as Chronos.