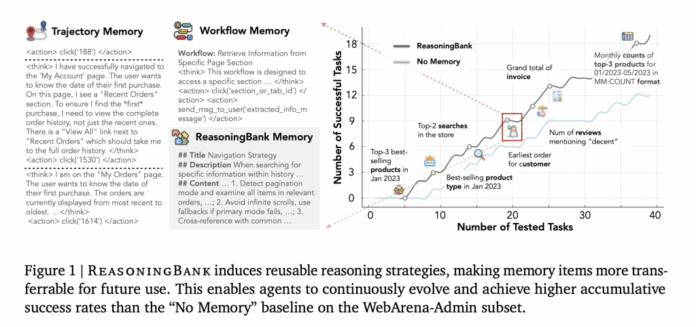

How can a large language model (LLM) agent learn from its own experience, both successes and setbacks, without retraining? Google Research introduces ReasoningBank, a memory framework for AI agents that distills interaction histories, including failures as well as successes, into reusable, high-level reasoning strategies. The agent retrieves these strategies to guide future decisions and adds new ones after each task, creating a feedback loop that lets it improve over time. Combined with memory-aware test-time scaling (MaTTS), the method achieves up to a 34.2% relative gain in effectiveness and 16% fewer interaction steps on web navigation and software engineering benchmarks, outperforming prior memory systems that rely on raw logs or success-only workflows.

Understanding the Challenge: Why Current LLM Agents Struggle to Learn

LLM agents are increasingly tasked with complex, multi-step operations such as web browsing, software debugging, and repository-level code fixes. However, a significant limitation is their inability to effectively accumulate and leverage past experiences. Traditional memory mechanisms often store raw interaction logs or rigid procedural workflows, which tend to be fragile when applied across different environments. Moreover, these systems typically overlook the valuable insights embedded in failure cases, where much of the actionable knowledge resides. ReasoningBank addresses this by reimagining memory as a collection of concise, human-readable strategy units that encapsulate transferable reasoning patterns, making them adaptable across diverse tasks and domains.

How ReasoningBank Works: From Experience to Strategy

Each interaction, whether successful or not, is distilled into memory items, each comprising a title, a brief description, and content that spells out practical guidance such as heuristics, validation checks, and operational constraints. Retrieval is embedding-based: for a new task, the system finds the most similar memory items and injects them into the agent's prompt as system-level guidance. After the task, the outcome is evaluated, new insights are distilled into additional memory items, and these are appended to the memory bank. This cycle (retrieve, inject, evaluate, distill, append) is deliberately simple, so that the gains can be attributed to strategy abstraction rather than to elaborate memory management.
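The memory-item structure and embedding-based retrieval described above can be sketched in a few lines of Python. This is a minimal illustration, not the ReasoningBank implementation: `MemoryItem`, `MemoryBank`, `embed`, and `build_system_prompt` are hypothetical names, and `embed` is a bag-of-words stand-in for a real embedding model so the example runs without external dependencies. It covers the retrieve, inject, and append steps; evaluation and distillation would produce the items passed to `append`.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class MemoryItem:
    title: str        # short strategy name
    description: str  # one-sentence summary
    content: str      # heuristics, validation checks, constraints


def embed(text: str) -> Counter:
    # Hypothetical stand-in for a real embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Missing keys in a Counter read as 0, so non-shared words add nothing.
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryBank:
    def __init__(self) -> None:
        self.items: list[MemoryItem] = []

    def retrieve(self, query: str, k: int = 1) -> list[MemoryItem]:
        # Rank every stored item by similarity to the new task description.
        q = embed(query)

        def score(m: MemoryItem) -> float:
            return cosine(q, embed(f"{m.title} {m.description} {m.content}"))

        return sorted(self.items, key=score, reverse=True)[:k]

    def append(self, new_items: list[MemoryItem]) -> None:
        # New distilled items are simply added; no merging or pruning.
        self.items.extend(new_items)


def build_system_prompt(base: str, memories: list[MemoryItem]) -> str:
    # "Inject" step: retrieved strategies become extra system-level guidance.
    lines = [base, "Relevant strategies from past experience:"]
    lines += [f"- {m.title}: {m.content}" for m in memories]
    return "\n".join(lines)


bank = MemoryBank()
bank.append([
    MemoryItem("Prefer account pages",
               "Find user-specific data via the account area.",
               "Open the account or profile page before searching for order history."),
    MemoryItem("Check pagination",
               "Lists may span multiple pages.",
               "Verify whether results are paginated before concluding an item is missing."),
])
top = bank.retrieve("Where is my order history for this user account?", k=1)
print(top[0].title)  # → Prefer account pages
print(build_system_prompt("You are a web agent.", top))
```

A production system would swap `embed` for a learned embedding model and a vector index; the loop structure stays the same.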

Why This Approach Enables Transferability

Unlike conventional methods that memorize specific website elements or procedural steps, ReasoningBank encodes reasoning patterns such as “prioritize account pages for user-specific information,” “verify pagination modes,” “avoid infinite scroll traps,” and “cross-reference state with task specifications.” Failures are transformed into negative constraints like “avoid relying on search when indexing is disabled” or “always confirm save state before navigation,” which help prevent the repetition of past mistakes. This strategic abstraction allows the agent to generalize knowledge across different contexts effectively.
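To make the success/failure asymmetry concrete, here is a hedged sketch of the distill step: the same trajectory is summarized with a different instruction depending on whether it succeeded, so failures yield preventive rules rather than recipes. The prompt wording, the `Title/Description/Content` reply format, `distill`, `parse_item`, and the `llm` callable are all assumptions for illustration; the paper's actual prompts are not reproduced here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class MemoryItem:
    title: str
    description: str
    content: str


# Hypothetical prompt templates (not the paper's wording).
SUCCESS_PROMPT = (
    "The trajectory below solved the task. Extract one transferable strategy "
    "that would help on similar tasks. Avoid site-specific details.\n"
    "Answer as:\nTitle: ...\nDescription: ...\nContent: ...\n\n{trajectory}"
)
FAILURE_PROMPT = (
    "The trajectory below failed. Identify what went wrong and state one "
    "preventive rule (what to avoid or verify) for similar tasks.\n"
    "Answer as:\nTitle: ...\nDescription: ...\nContent: ...\n\n{trajectory}"
)


def parse_item(text: str) -> MemoryItem:
    # Read "Key: value" lines; anything else in the reply is ignored.
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() in {"title", "description", "content"}:
            fields[key.strip().lower()] = value.strip()
    return MemoryItem(fields.get("title", ""),
                      fields.get("description", ""),
                      fields.get("content", ""))


def distill(trajectory: str, succeeded: bool, llm: Callable[[str], str]) -> MemoryItem:
    # Successes become strategies; failures become negative constraints.
    template = SUCCESS_PROMPT if succeeded else FAILURE_PROMPT
    return parse_item(llm(template.format(trajectory=trajectory)))


def fake_llm(prompt: str) -> str:
    # Toy stand-in for a model call; checks that the failure template was used.
    assert "failed" in prompt
    return ("Title: Confirm save state\n"
            "Description: Unsaved edits were lost on navigation.\n"
            "Content: Always confirm the save state before navigating away.")


item = distill("clicked Edit, typed text, clicked Home", succeeded=False, llm=fake_llm)
print(item.title)  # → Confirm save state
```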

Introducing Memory-Aware Test-Time Scaling (MaTTS)

Scaling test-time compute, by running more rollouts or more refinement passes per task, can improve performance, but the extra attempts add little lasting value if the agent cannot learn from them. The researchers therefore propose memory-aware test-time scaling (MaTTS), which couples scaling with ReasoningBank's memory framework:

- Parallel MaTTS: Executes multiple rollouts simultaneously and employs self-contrastive evaluation to refine the strategy memory.

- Sequential MaTTS: Iteratively improves a single trajectory by extracting intermediate insights as memory signals for ongoing refinement.
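The two scaling modes can be sketched as follows, assuming the agent, the success judge, the refinement step, and the LLM are passed in as plain callables (all hypothetical here, as are `parallel_matts` and `sequential_matts`). Self-contrast is approximated by a single LLM call that sees every labeled rollout side by side; sequential scaling records a note after each refinement pass and feeds the accumulated notes into the next pass. Real systems would run browser or repository environments where the toy functions below stand.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Tuple

# Hypothetical interfaces; real agents would wrap a browser or repo environment.
Agent = Callable[[str, int], str]               # (task, seed) -> trajectory text
Judge = Callable[[str], bool]                   # trajectory -> judged success?
LLM = Callable[[str], str]                      # prompt -> completion
Refine = Callable[[str, str, List[str]], str]   # (task, previous, notes) -> new trajectory


def parallel_matts(task: str, agent: Agent, judge: Judge, llm: LLM,
                   k: int = 3) -> Tuple[str, List[Tuple[str, bool]]]:
    # Run k independent rollouts of the same task concurrently.
    with ThreadPoolExecutor(max_workers=k) as pool:
        trajectories = list(pool.map(lambda seed: agent(task, seed), range(k)))
    labeled = [(t, judge(t)) for t in trajectories]
    # Self-contrast: show all labeled attempts together so the LLM can separate
    # patterns shared by successes from the mistakes behind failures.
    report = "\n\n".join(f"[{'SUCCESS' if ok else 'FAILURE'}]\n{t}" for t, ok in labeled)
    prompt = ("Compare these attempts at the same task. List strategies shared by "
              "successful attempts and mistakes that explain failed ones.\n\n" + report)
    return llm(prompt), labeled


def sequential_matts(task: str, refine: Refine, llm: LLM,
                     rounds: int = 3) -> Tuple[str, List[str]]:
    trajectory, notes = "", []
    for _ in range(rounds):
        # Each pass sees the notes gathered so far as intermediate memory signals.
        trajectory = refine(task, trajectory, notes)
        notes.append(llm("What did this attempt reveal about the task?\n" + trajectory))
    return trajectory, notes


# Toy stand-ins: even seeds "use the account page" and are judged successful.
def toy_agent(task: str, seed: int) -> str:
    return f"attempt {seed}: " + ("used account page" if seed % 2 == 0 else "used search")


def toy_judge(trajectory: str) -> bool:
    return "account page" in trajectory


def counting_llm(prompt: str) -> str:
    return f"{prompt.count('[SUCCESS]')} successes, {prompt.count('[FAILURE]')} failures"


summary, labeled = parallel_matts("find order history", toy_agent, toy_judge, counting_llm, k=3)
print(summary)  # → 2 successes, 1 failures
```

The contrast between rollouts is the point of the parallel variant: a plain best-of-N loop would keep only the winning trajectory and discard what the failed ones reveal.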

This synergy creates a virtuous cycle: richer exploration produces more informative memory, and better memory steers exploration toward more promising solution paths. In the reported experiments, MaTTS yields larger and more consistent gains than vanilla best-of-N scaling without memory.

Performance Highlights: How Effective Is This Framework?

- Improved Success Rates: The combination of ReasoningBank and MaTTS achieves up to a 34.2% relative increase in task success compared to agents without memory, surpassing previous memory designs that only reuse raw interaction traces or success-based routines.

- Greater Efficiency: The approach reduces the number of interaction steps by 16%, with the most significant reductions observed in successful trials, indicating a decrease in redundant actions rather than premature task termination.
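Because the headline gain is relative, it is easy to misread as percentage points. The worked example below uses hypothetical baseline values, not figures from the paper, chosen only so the arithmetic lands on the reported ratios; it also assumes the 16% step reduction is measured against the memory-free baseline in the same way. Here $\mathrm{SR}$ is the success rate and $\bar{s}$ the mean number of interaction steps.

```latex
\begin{aligned}
\Delta_{\text{rel}} &= \frac{\mathrm{SR}_{\text{mem}} - \mathrm{SR}_{\text{base}}}{\mathrm{SR}_{\text{base}}},
  &\quad \text{e.g. } \frac{53.68 - 40}{40} &= 0.342 = 34.2\% \\
\Delta_{\text{steps}} &= \frac{\bar{s}_{\text{base}} - \bar{s}_{\text{mem}}}{\bar{s}_{\text{base}}},
  &\quad \text{e.g. } \frac{10 - 8.4}{10} &= 0.16 = 16\%
\end{aligned}
```

Under these illustrative numbers, a 34.2% relative gain corresponds to an absolute gain of 13.68 percentage points; the absolute figure shrinks or grows with the baseline success rate.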

Positioning ReasoningBank Within the AI Agent Ecosystem

ReasoningBank functions as a modular memory layer for interactive agents that already use ReAct-style decision loops or best-of-N test-time scaling. It does not replace existing verifiers or planners; instead, it supplies them with distilled strategic knowledge injected at the prompt or system level. In practice, it fits alongside web-agent environments and benchmarks such as BrowserGym, WebArena, and Mind2Web, and software engineering setups built on SWE-Bench-Verified.
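Because ReasoningBank sits in front of the policy rather than inside it, integration can be as thin as a wrapper that rewrites the system prompt before an existing ReAct-style loop runs. The sketch below assumes a hypothetical `policy(system_prompt, observation) -> action` interface, a `retrieve(task, k)` function returning rendered memory strings, and an `env_step(action) -> (observation, done)` environment function; `MemoryAugmentedAgent` is likewise an illustrative name, and none of these correspond to the BrowserGym or SWE-Bench APIs.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Policy = Callable[[str, str], str]               # (system_prompt, observation) -> action
Retriever = Callable[[str, int], List[str]]      # (task, k) -> rendered memory items
EnvStep = Callable[[str], Tuple[str, bool]]      # action -> (next observation, done)


@dataclass
class MemoryAugmentedAgent:
    policy: Policy        # existing ReAct-style step function, left unchanged
    retrieve: Retriever   # memory lookup, e.g. embedding search over the bank
    base_prompt: str
    k: int = 3

    def system_prompt(self, task: str) -> str:
        header = f"{self.base_prompt}\nTask: {task}"
        memories = self.retrieve(task, self.k)
        if not memories:
            return header
        bullets = "\n".join(f"- {m}" for m in memories)
        return f"{header}\n\nStrategies from past experience:\n{bullets}"

    def run(self, task: str, env_step: EnvStep, first_obs: str,
            max_steps: int = 10) -> List[str]:
        prompt = self.system_prompt(task)  # memory is injected once per task
        obs, actions = first_obs, []
        for _ in range(max_steps):
            action = self.policy(prompt, obs)
            actions.append(action)
            obs, done = env_step(action)
            if done:
                break
        return actions


def toy_policy(system_prompt: str, observation: str) -> str:
    # Toy policy: goes straight to the answer if memory mentions the account page.
    return "stop" if "account page" in system_prompt else "search"


def toy_env_step(action: str) -> Tuple[str, bool]:
    return f"after {action}", action == "stop"


agent = MemoryAugmentedAgent(toy_policy,
                             lambda task, k: ["Check the account page first."][:k],
                             base_prompt="You are a web agent.")
print(agent.run("find my orders", toy_env_step, first_obs="home"))  # → ['stop']
```

The policy and any verifiers it calls are untouched; memory changes only what the model is told. Injecting once per task keeps the prompt stable across steps, while re-retrieving on each new observation is an equally plausible variant.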