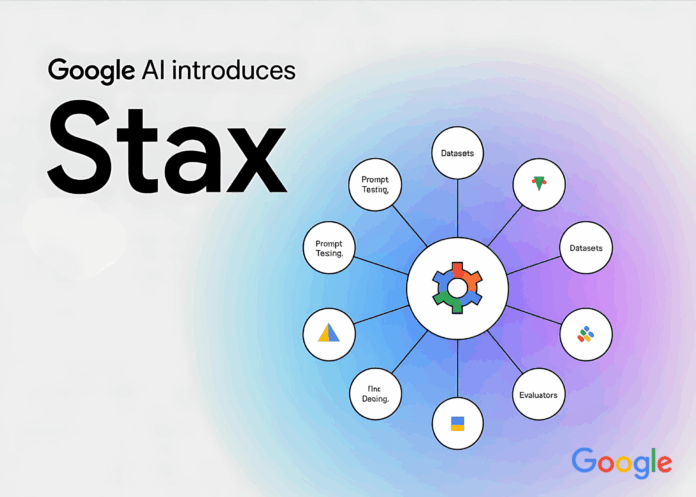

Evaluating large language models (LLMs) differs from conventional software testing. Because LLMs generate output probabilistically, the same input can produce different responses, which makes consistency and reproducibility harder to verify. Stax is a developer tool that addresses this with a structured framework for evaluating and benchmarking LLMs using both custom and pre-built autoraters.

Stax is aimed at developers who want to know how a particular model or prompt performs in their own use case, rather than on generic benchmarks and leaderboards.
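
To make the reproducibility problem concrete, here is a minimal sketch that samples the same prompt repeatedly and measures how often the answers agree. It does not use Stax; `fake_model` is a hypothetical stand-in for a sampled LLM call, and `agreement_rate` is just one simple consistency measure chosen for illustration.

```python
import random
from collections import Counter


def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a sampled LLM call; not a real API."""
    return rng.choice(["Paris", "Paris", "Paris, France", "paris"])


def agreement_rate(outputs: list[str]) -> float:
    """Fraction of outputs equal to the most common output after normalization."""
    normalized = [o.strip().lower() for o in outputs]
    most_common_count = Counter(normalized).most_common(1)[0][1]
    return most_common_count / len(normalized)


rng = random.Random(0)  # fixed seed so the sketch itself is repeatable
samples = [fake_model("What is the capital of France?", rng) for _ in range(20)]
print(f"agreement rate: {agreement_rate(samples):.2f}")
```

A rate below 1.0 on a prompt that should have one answer is the kind of variation that a one-off manual check can miss.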

Limitations of Conventional Evaluation Methods

Leaderboards and broad benchmarks show overall model progress, but they often miss the requirements of specialized applications. For instance, a model that excels at general reasoning may still struggle with domain-specific work such as compliance-sensitive summarization, legal document interpretation, or enterprise question answering.

Stax lets developers define evaluation criteria that match their own use case, so that a single aggregate score gives way to metrics tied to that context.

Core Features of Stax

Side-by-Side Prompt Evaluation

The Quick Compare feature lets developers test multiple prompts across different models side by side. Seeing the outputs together makes it easier to tell how a prompt change or model choice affects output quality, and it reduces trial and error.
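
The idea behind a side-by-side comparison can be sketched as a grid of every prompt crossed with every model. This is an illustration only, not Stax's interface: `model_a`, `model_b`, `compare`, and the prompt templates are all hypothetical, and the stub models would be replaced by real LLM calls.

```python
from itertools import product
from typing import Callable


# Hypothetical model stubs: each maps a prompt string to a response string.
def model_a(prompt: str) -> str:
    return f"[model-a] {prompt.upper()}"


def model_b(prompt: str) -> str:
    return f"[model-b] {prompt[::-1]}"


def compare(
    prompts: dict[str, str],
    models: dict[str, Callable[[str], str]],
    user_input: str,
) -> dict[tuple[str, str], str]:
    """Run every prompt template against every model on the same input."""
    grid = {}
    for (p_name, template), (m_name, model) in product(prompts.items(), models.items()):
        grid[(p_name, m_name)] = model(template.format(input=user_input))
    return grid


prompts = {
    "terse": "Summarize: {input}",
    "guided": "Summarize in one sentence for a lawyer: {input}",
}
models = {"model-a": model_a, "model-b": model_b}

grid = compare(prompts, models, "The lease ends in May.")
for (p_name, m_name), output in grid.items():
    print(f"{p_name:>6} x {m_name}: {output}")
```

Holding the input fixed while varying only the prompt or the model is what lets a difference in output be attributed to one of them.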

Comprehensive Project and Dataset Management

For evaluations that go beyond individual prompts, the Projects & Datasets module supports testing at scale. Developers can build structured test sets and apply the same evaluation criteria to every example, which improves reproducibility and better reflects real-world usage.
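
As a rough sketch of applying one criterion uniformly across a test set, the snippet below scores every example the same way and reports a pass rate. The names `Example`, `exact_match`, `evaluate`, and `toy_model` are assumptions made for illustration and do not reflect how Stax stores datasets or runs evaluations.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Example:
    """One test case: an input plus the reference answer to check against."""
    input: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Illustrative criterion: 1.0 if the normalized strings match, else 0.0."""
    return float(output.strip().lower() == expected.strip().lower())


def evaluate(
    model: Callable[[str], str],
    dataset: list[Example],
    criterion: Callable[[str, str], float],
) -> list[float]:
    """Apply the same criterion to the model's output for every example."""
    return [criterion(model(ex.input), ex.expected) for ex in dataset]


def toy_model(text: str) -> str:
    """Hypothetical stand-in for an LLM call: answers simple additions."""
    a, b = text.split("+")
    return str(int(a) + int(b))


# The third reference answer is deliberately wrong to show a failing case.
dataset = [Example("1+1", "2"), Example("2+3", "5"), Example("10+5", "16")]
scores = evaluate(toy_model, dataset, exact_match)
print(f"pass rate: {sum(scores) / len(scores):.2f}")
```

Because the dataset and the criterion are fixed, rerunning the evaluation after a prompt or model change gives numbers that can be compared directly.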

Flexible Autoraters: Custom and Pre-Configured

Autoraters, the automated evaluators that score model outputs, are central to Stax. Users can build custom evaluators for their own requirements or use pre-configured evaluators that cover common assessment dimensions, including:

- Fluency – assessing grammatical accuracy and overall readability.

- Groundedness – verifying factual alignment with source materials.

- Safety – ensuring outputs are free from harmful or inappropriate content.

This flexibility keeps evaluations tied to practical, real-world standards rather than generic, one-size-fits-all metrics.
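
To illustrate what a custom evaluator might look like in the abstract, here is a small rule-based sketch. It is not Stax's autorater API, and the heuristics are deliberately crude: `groundedness_rater` uses token overlap as a lexical proxy for factual alignment, and `safety_rater` checks against a hypothetical `BLOCKLIST`. A production rater would need something much stronger.

```python
import re
from dataclasses import dataclass


@dataclass
class Rating:
    name: str
    score: float  # 0.0 (worst) to 1.0 (best)
    rationale: str


def _tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens, with duplicates removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness_rater(response: str, source: str) -> Rating:
    """Crude lexical proxy: share of response tokens that also occur in the source."""
    resp, src = _tokens(response), _tokens(source)
    if not resp:
        return Rating("groundedness", 0.0, "empty response")
    shared = len(resp & src)
    return Rating("groundedness", shared / len(resp),
                  f"{shared}/{len(resp)} response tokens found in source")


BLOCKLIST = {"password", "ssn"}  # hypothetical policy terms, for illustration only


def safety_rater(response: str) -> Rating:
    """Flag responses that contain any blocklisted token."""
    hits = sorted(_tokens(response) & BLOCKLIST)
    if hits:
        return Rating("safety", 0.0, f"blocked terms: {hits}")
    return Rating("safety", 1.0, "no blocked terms")


source = "The contract renews annually on 1 March."
response = "The contract renews on 1 March every year."
for rating in (groundedness_rater(response, source), safety_rater(response)):
    print(rating)
```

Returning a rationale alongside the score is a design choice in this sketch: it makes a low score easier to debug than a bare number would.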

Insightful Analytics for Deeper Understanding

The Analytics Dashboard in Stax visualizes evaluation results to help developers interpret them. It highlights performance trends, supports comparisons across evaluators, and shows how different models behave on the same dataset. The emphasis is on understanding model behavior rather than reducing it to a single score.
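
The kind of aggregation that sits behind such a view can be sketched in a few lines: group per-example scores by model and evaluator, then average each group. The `results` rows and the `summarize` helper below are made up for illustration and do not come from Stax.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-example results: (model, evaluator, score in [0, 1]).
results = [
    ("model-a", "fluency", 0.9), ("model-a", "fluency", 0.7),
    ("model-a", "groundedness", 0.6), ("model-a", "groundedness", 0.8),
    ("model-b", "fluency", 0.8), ("model-b", "fluency", 0.8),
    ("model-b", "groundedness", 0.9), ("model-b", "groundedness", 0.5),
]


def summarize(rows: list[tuple[str, str, float]]) -> dict[tuple[str, str], float]:
    """Mean score for each (model, evaluator) pair."""
    buckets = defaultdict(list)
    for model, evaluator, score in rows:
        buckets[(model, evaluator)].append(score)
    return {key: mean(scores) for key, scores in buckets.items()}


summary = summarize(results)
models = sorted({m for m, _ in summary})
evaluators = sorted({e for _, e in summary})

# Print a small model-by-evaluator table.
print("model".ljust(10) + "".join(e.ljust(14) for e in evaluators))
for m in models:
    print(m.ljust(10) + "".join(f"{summary[(m, e)]:.2f}".ljust(14) for e in evaluators))
```

Keeping one column per evaluator, instead of collapsing everything into a single number, is what makes trade-offs between models visible.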

Real-World Applications

- Prompt Refinement – iteratively improving prompts to enhance response consistency.

- Model Evaluation and Selection – systematically comparing multiple LLMs to identify the best fit for deployment.

- Domain-Specific Compliance Testing – validating outputs against industry regulations or organizational standards.

- Continuous Performance Monitoring – conducting ongoing assessments as data and requirements evolve over time.
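
For the monitoring use case, one simple pattern is to compare each new evaluation run against a stored baseline and flag any metric that drops by more than a tolerance. The sketch below assumes per-metric mean scores are already available; `find_regressions`, the metric values, and the 0.02 tolerance are illustrative assumptions, not Stax features.

```python
def find_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, float]:
    """Return the change for each metric that dropped by more than `tolerance`."""
    return {
        name: round(current[name] - baseline[name], 4)
        for name in baseline
        if name in current and baseline[name] - current[name] > tolerance
    }


# Hypothetical mean scores from a previous run and from the latest run.
baseline = {"fluency": 0.92, "groundedness": 0.85, "safety": 1.00}
current = {"fluency": 0.93, "groundedness": 0.78, "safety": 0.99}

regressions = find_regressions(baseline, current)
print(regressions or "no regressions")
```

A check like this can run on a schedule or in CI so that a drop in one dimension is caught even when the others improve.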

Conclusion

Stax offers a structured approach to evaluating generative language models against criteria that reflect actual application demands. By combining quick prompt comparisons, dataset-level evaluations, customizable autoraters, and analytics, it gives developers the tools to move from informal testing to structured assessment.

For organizations deploying LLMs in production, Stax provides visibility into how models behave under specific conditions, helping teams check that generated outputs meet the standards their applications require.