Developing a Unified Model to Master Physical Skills from Complex Real-World Robot Data Without Simulations

Generalist AI has introduced GEN-θ, a new class of embodied foundation model designed to learn directly from high-fidelity, raw physical interaction data collected on real robots rather than from simulated environments or internet videos. The approach aims to establish scaling principles for robotics like those that transformed natural language processing with large language models, but grounded in continuous sensorimotor data streams from robots operating in diverse settings such as homes, warehouses, and industrial workplaces.

Simultaneous Perception and Action: The Principle of Harmonic Reasoning

GEN-θ is an embodied foundation model that builds on the advances of vision and language models while adding native capabilities for human-like reflexes and physical intuition. Central to its design is Harmonic Reasoning: the model processes sensory inputs and generates actions concurrently and in real time, handling asynchronous, continuous streams of perception and motor commands.

This approach addresses a fundamental challenge unique to robotics: unlike language models that can afford to pause and deliberate before responding, robots must continuously act as the physical environment evolves dynamically. Harmonic Reasoning enables a seamless, synchronized interaction between sensing and acting, allowing GEN-θ to scale to very large model sizes without the need for computationally expensive inference-time controllers or guidance systems.
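The article does not describe how GEN-θ implements Harmonic Reasoning internally. As a toy illustration of the scheduling property only (acting at its own rate on the newest available perception instead of pausing to deliberate), the sketch below merges two asynchronous timestamp streams. The function `interleave` and the example timings are hypothetical and are not part of any published GEN-θ interface.

```python
import heapq


def interleave(obs_times, act_times):
    """Merge asynchronous observation and action timestamps into one stream.

    Both inputs must be sorted. Each action is tagged with the timestamp of
    the newest observation that arrived at or before it, so the action stream
    never blocks while waiting for perception. (Hypothetical toy, not GEN-θ.)
    """
    events = heapq.merge(
        ((t, 0, "obs") for t in obs_times),  # 0 sorts an obs before an act at the same time
        ((t, 1, "act") for t in act_times),
    )
    latest_obs = None  # no perception has arrived yet
    stream = []
    for t, _, kind in events:
        if kind == "obs":
            latest_obs = t
            stream.append(("obs", t, None))
        else:
            # Act immediately on whatever perception is currently available.
            stream.append(("act", t, latest_obs))
    return stream


# A slow sensor (every 3 ticks) and a fast controller (every tick).
for event in interleave([0, 3, 6], range(8)):
    print(event)
```

The design point being illustrated is that the controller's rate is decoupled from the sensor's: actions keep flowing between frames, each conditioned on the most recent observation.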

Moreover, GEN-θ is designed to be embodiment-agnostic, successfully operating across various robotic platforms including 6DoF, 7DoF, and complex 16+DoF semi-humanoid systems. This versatility allows a single pre-training process to serve heterogeneous robot fleets, enhancing adaptability and efficiency.
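The article does not say how GEN-θ represents actions for robots with different numbers of joints. One common way to let a single model serve 6-DoF, 7-DoF, and 16+-DoF platforms is to pad every action vector to a shared width and carry a mask marking which entries are real; the sketch below shows that technique under the assumption that it applies here. `MAX_DOF = 24` and the helper names are hypothetical.

```python
MAX_DOF = 24  # hypothetical shared action width, large enough for a 16+-DoF robot


def pad_action(action, max_dof):
    """Pad a robot-specific action vector to a shared width plus a validity mask."""
    if len(action) > max_dof:
        raise ValueError(f"action has {len(action)} DoF, exceeds max_dof={max_dof}")
    padding = max_dof - len(action)
    padded = list(action) + [0.0] * padding
    mask = [True] * len(action) + [False] * padding  # True = joint exists on this robot
    return padded, mask


def masked_mse(pred, target, mask):
    """Mean squared error over only the joints that exist on this robot."""
    errs = [(p - t) ** 2 for p, t, m in zip(pred, target, mask) if m]
    return sum(errs) / len(errs)


for name, dof in [("6-DoF arm", 6), ("7-DoF arm", 7), ("16-DoF semi-humanoid", 16)]:
    padded, mask = pad_action([0.0] * dof, MAX_DOF)
    print(name, len(padded), sum(mask))
```

Masking the loss keeps padded slots from contributing gradient, so one output head can be shared across heterogeneous fleets.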

Crossing the Intelligence Barrier in Robotics

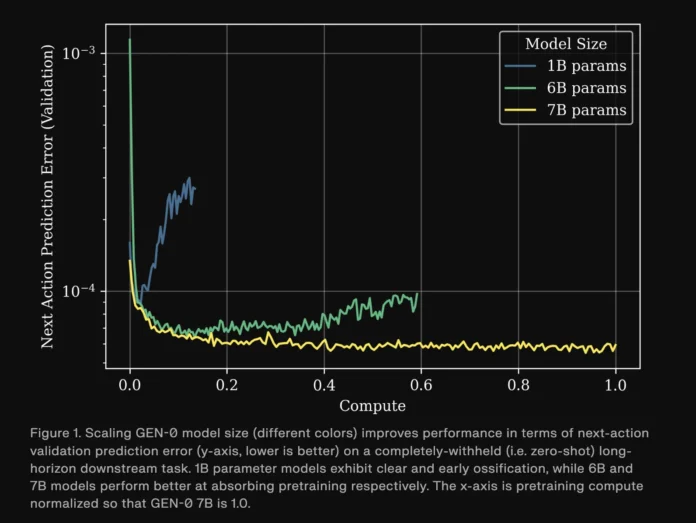

Research from the Generalist AI team reports a distinct phase transition in robotic capabilities as GEN-θ is scaled in a high-data regime. Their experiments show that model size strongly determines how much extensive physical interaction data a model can absorb.

- Models with approximately 1 billion parameters tend to plateau early during pretraining, showing limited capacity to integrate complex sensorimotor information, a phenomenon described as ossification.

- At around 6 billion parameters, models begin to benefit substantially from pretraining and show robust multitasking abilities.

- Models exceeding 7 billion parameters internalize large-scale robotic experience so effectively that only a few thousand fine-tuning steps on specific downstream tasks are needed for successful transfer learning.

These findings are illustrated by validation metrics tracking next-action prediction error on held-out long-horizon tasks: smaller models stagnate, while larger ones keep improving with more pretraining. This is consistent with Moravec's Paradox, which suggests that mastering physical commonsense and dexterity demands substantially more computation than abstract language reasoning. GEN-θ surpasses this critical threshold, marking a new milestone in embodied AI.
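The article reports the stagnating curves but not a numerical criterion for ossification. One hypothetical way to flag it from a sequence of validation-loss checkpoints is to measure the fractional improvement over a trailing window; the window size, tolerance, and loss values below are illustrative assumptions, not Generalist AI's method or data.

```python
def relative_improvement(losses, window):
    """Fractional drop in validation loss over the last `window` checkpoints."""
    if len(losses) <= window:
        raise ValueError("need more checkpoints than the window size")
    old, new = losses[-window - 1], losses[-1]
    return (old - new) / old


def has_ossified(losses, window=3, tol=0.01):
    """Flag a run whose loss improved by less than `tol` over the window (hypothetical rule)."""
    return relative_improvement(losses, window) < tol


# Made-up curves shaped like the qualitative description above.
small_model = [1.0, 0.8, 0.7, 0.695, 0.694, 0.694]  # flattens early
large_model = [1.0, 0.8, 0.65, 0.55, 0.47, 0.41]    # keeps improving
print(has_ossified(small_model), has_ossified(large_model))
```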

The team has scaled GEN-θ beyond 10 billion parameters, observing that larger variants require progressively less post-training to adapt to novel tasks, highlighting the efficiency gains from scale.

Establishing Scaling Laws for Robotic Learning

A key contribution of this research is a set of scaling laws that connect the volume of pre-training data and compute to downstream task performance. The team sampled checkpoints from GEN-θ training runs on varying subsets of the pre-training dataset, then applied supervised fine-tuning on 16 diverse task sets: dexterous manipulation such as assembling complex mechanical parts, industrial workflows such as automated packaging, and generalization tasks involving flexible instruction interpretation. Comparing the fine-tuned checkpoints quantified how performance improves with pre-training.

Results show that increased pre-training data consistently reduces validation loss and next-action prediction errors during fine-tuning. At sufficient model scale, the relationship between dataset size (D) and validation error (L(D)) adheres to a power law:

$$L(D) = \left(\frac{D_c}{D}\right)^{\alpha_D}$$

Here, $D$ is the number of action trajectories in the pre-training set, $L(D)$ is the downstream validation error, and $D_c$ and $\alpha_D$ are constants fitted to the observed results. The formula lets robotics researchers estimate how much pre-training data is needed to reach a target error, or weigh pre-training data against labeled downstream data.
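Because $\log L = \alpha_D \log D_c - \alpha_D \log D$, the power law is a straight line in log-log space, so $D_c$ and $\alpha_D$ can be estimated by ordinary least squares, and the fit can be inverted as $D = D_c / L^{1/\alpha_D}$ to estimate the data needed for a target error. The sketch below does both; the synthetic values $D_c = 10^4$ and $\alpha_D = 0.3$ are illustrative assumptions, not GEN-θ's fitted constants.

```python
import math


def fit_power_law(ds, losses):
    """Fit L(D) = (D_c / D) ** alpha_D by least squares in log-log space.

    Taking logs gives log L = alpha_D * log D_c - alpha_D * log D,
    a line in log D with slope -alpha_D and intercept alpha_D * log D_c.
    """
    xs = [math.log(d) for d in ds]
    ys = [math.log(l) for l in losses]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    alpha = -slope
    d_c = math.exp(intercept / alpha)
    return d_c, alpha


def required_trajectories(target_loss, d_c, alpha):
    """Invert the power law: D = D_c / L ** (1 / alpha_D)."""
    return d_c / target_loss ** (1.0 / alpha)


# Synthetic data generated from the law itself (not GEN-θ results).
ds = [1e4, 1e5, 1e6, 1e7]
losses = [(1e4 / d) ** 0.3 for d in ds]
d_c, alpha = fit_power_law(ds, losses)
print(round(d_c), round(alpha, 3))  # prints: 10000 0.3
print(f"{required_trajectories(0.2, d_c, alpha):.3g} trajectories for L = 0.2")
```

With real checkpoints the points will scatter around the line, so the fit is only as trustworthy as the range of $D$ it was measured over.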

Robust Data Infrastructure Supporting Robotics at Scale

GEN-θ is trained on an extensive proprietary dataset comprising over 270,000 hours of real-world robotic manipulation trajectories, gathered from thousands of locations worldwide, including residential, commercial, and industrial environments. This dataset expands by approximately 10,000 hours weekly, making it one of the largest collections of real-world robotic interaction data to date.

To manage this massive data influx, the team developed specialized hardware, optimized data loaders, and high-bandwidth network infrastructure, including dedicated internet connections for distributed data acquisition sites. Their multi-cloud architecture, combined with custom upload systems and roughly 10,000 compute cores, supports continuous multimodal data processing. Advanced compression techniques and data-loading strategies adapted from state-of-the-art video foundation models enable the system to process the equivalent of nearly seven years of real-world robotic experience per day of training.
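The quoted figures support some quick arithmetic. Treating "nearly seven years" as 7 years of 8,760 hours (an approximation that slightly overstates throughput), training consumes about 61,320 hours of experience per day, so one pass over 270,000 hours takes roughly 4.4 days or slightly more, while collection adds about 1,429 hours per day. The helper name below is hypothetical.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760; ignores leap days


def days_per_pass(dataset_hours, years_per_training_day):
    """Training days needed to stream the dataset once at the stated rate."""
    return dataset_hours / (years_per_training_day * HOURS_PER_YEAR)


# Approximate figures quoted in the article.
DATASET_HOURS = 270_000
WEEKLY_GROWTH_HOURS = 10_000
YEARS_PER_DAY = 7  # "nearly seven years", so this is an upper bound on throughput

print(f"{YEARS_PER_DAY * HOURS_PER_YEAR:,} hours of experience per training day")
print(f"{days_per_pass(DATASET_HOURS, YEARS_PER_DAY):.1f} days per pass over the dataset")
print(f"{WEEKLY_GROWTH_HOURS / 7:,.0f} new hours collected per day")
```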

The Critical Role of Pre-Training Data Composition

Extensive ablation studies conducted by the Generalist AI team across eight distinct pre-training datasets and ten long-horizon task suites reveal that the quality and mixture of training data are as crucial as sheer volume. Different data combinations yield models with varying strengths across three primary task categories: dexterity, practical real-world applications, and generalization.

Model performance was evaluated using metrics such as validation mean squared error (MSE) for next-action predictions and reverse Kullback-Leibler (KL) divergence between the model’s policy and a Gaussian distribution centered on ground truth actions. Models exhibiting both low MSE and low reverse KL divergence are ideal candidates for supervised fine-tuning, while those with higher MSE but low reverse KL tend to have more diverse action distributions, making them better suited for reinforcement learning approaches.
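The article does not specify how GEN-θ's policy density is represented, which argument order its "reverse" KL uses, or how it is estimated. The sketch below is a hypothetical illustration only: it assumes a 1-D Gaussian-mixture policy given as `(weight, mu, sigma)` tuples and a target Gaussian of hypothetical width `sigma` around the ground-truth action `a_star`, and it computes KL(target ‖ policy) by Monte Carlo. That direction is chosen because it reproduces the pattern described above: a policy that keeps one mode on the ground truth but places mass elsewhere gets high MSE yet bounded divergence, while a unimodal policy that misses scores badly on both.

```python
import math
import random


def gaussian_logpdf(x, mu, sigma):
    return -0.5 * math.log(2 * math.pi * sigma**2) - (x - mu) ** 2 / (2 * sigma**2)


def mixture_logpdf(x, components):
    """Log density of a 1-D Gaussian mixture given (weight, mu, sigma) tuples."""
    logs = [math.log(w) + gaussian_logpdf(x, mu, s) for w, mu, s in components]
    m = max(logs)
    return m + math.log(sum(math.exp(l - m) for l in logs))  # log-sum-exp for stability


def action_mse(components, a_star):
    """Expected squared error of actions sampled from the mixture policy."""
    return sum(w * ((mu - a_star) ** 2 + s**2) for w, mu, s in components)


def kl_target_to_policy(components, a_star, sigma, n=20_000, seed=0):
    """Monte Carlo estimate of KL(N(a_star, sigma^2) || policy), sampling from the target."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        a = rng.gauss(a_star, sigma)
        total += gaussian_logpdf(a, a_star, sigma) - mixture_logpdf(a, components)
    return total / n


A_STAR, SIGMA = 0.0, 0.1  # hypothetical ground-truth action and target width
policies = {
    "matched": [(1.0, 0.0, 0.1)],
    "multimodal": [(0.5, 0.0, 0.1), (0.5, 2.0, 0.1)],
    "off-target": [(1.0, 2.0, 0.1)],
}
for name, comps in policies.items():
    print(name, round(action_mse(comps, A_STAR), 3),
          round(kl_target_to_policy(comps, A_STAR, SIGMA), 3))
```

Here the multimodal policy has an MSE of about 2.01 but a divergence near log 2 (about 0.69), whereas the off-target policy's divergence is about 200.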

Summary of Insights

- GEN-θ is a pioneering embodied foundation model trained exclusively on high-fidelity raw physical interaction data, employing Harmonic Reasoning to enable simultaneous perception and action in dynamic environments.

- Scaling experiments identify a critical intelligence threshold near 7 billion parameters, beyond which models continue to improve with additional pretraining, while smaller models experience stagnation.

- Clear scaling laws link pre-training dataset size to downstream task performance, providing a predictive framework for data and compute requirements in robotic learning.

- The model is trained on an unprecedented volume of real-world manipulation data (over 270,000 hours and growing weekly), supported by a custom-built, scalable multi-cloud infrastructure capable of processing years of robotic experience daily.

- Data quality and mixture design significantly influence model behavior, with different compositions favoring supervised fine-tuning or reinforcement learning, underscoring the importance of curated datasets alongside scale.

Concluding Perspective

GEN-θ represents a significant leap forward in embodied AI, demonstrating that scaling laws can be effectively applied to robotics through innovative concepts like Harmonic Reasoning and large-scale multimodal pretraining. By surpassing the intelligence threshold with models exceeding 7 billion parameters and leveraging vast real-world datasets, GEN-θ sets a new standard for robotic dexterity, adaptability, and generalization across a wide range of tasks and environments.