Since the mid-20th century, the quest to unravel the essence of intelligence has united the fields of artificial intelligence and neuroscience. Pioneers such as von Neumann and Turing laid the groundwork for understanding cognition, inspiring contemporary scientists to connect artificial language models with the intricate workings of biological neural networks. Despite remarkable advances, current AI models like GPT still struggle to extend chain-of-thought reasoning beyond the confines of their training data.

This limitation stems from fundamental differences in architecture. The human brain is a massively distributed system, comprising approximately 80 billion neurons interconnected by over 100 trillion synapses, relying on localized interactions and synaptic plasticity. In contrast, modern transformer models depend heavily on dense matrix multiplications and global attention mechanisms. This divergence results in artificial systems that, while powerful, lack the flexibility and interpretability inherent to biological intelligence.

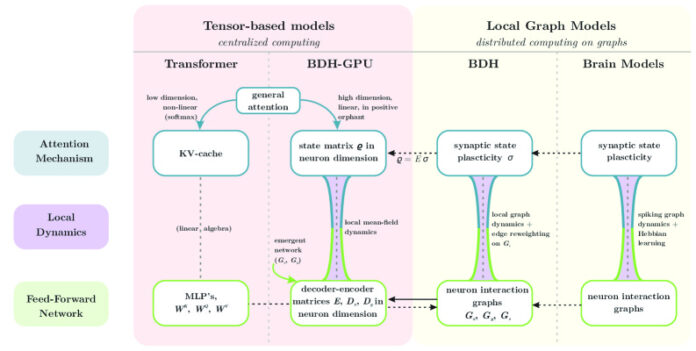

Addressing this gap, researchers have developed the Dragon Hatchling (BDH), an innovative framework that harmonizes theoretical rigor with biological plausibility, all while achieving performance on par with Transformer architectures. Unlike conventional neural networks, BDH models a scale-free network of neuron-like particles interacting locally, inspired by biological principles.

BDH’s unique strength lies in its dual capability: it functions as a GPU-efficient language model and simultaneously embodies a biologically realistic brain model. Its working memory is entirely driven by synaptic plasticity governed by Hebbian learning rules implemented through spiking neurons. Synaptic weights dynamically strengthen as the system processes specific concepts, establishing a direct analogy between artificial and biological learning mechanisms.

Integrating Logical Reasoning with Adaptive Learning

At the core of Dragon Hatchling is the fusion of logical inference with biologically grounded learning. The system operationalizes modus ponens: if a fact i holds true and a rule σ implies that i leads to j, then j is inferred. In practice, this is modeled as weighted beliefs where the strength of implication σ(i,j) modulates how belief in i influences belief in j.
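To make the weighted version concrete, here is a minimal Python sketch of one inference step. It assumes beliefs are non-negative scores keyed by fact and that σ is stored as a dictionary of implication strengths. The names `infer_step` and `beliefs`, and the example facts, are hypothetical and not taken from the BDH code.

```python
def infer_step(beliefs, sigma):
    """One round of weighted modus ponens.

    beliefs: dict mapping a fact to a non-negative belief strength.
    sigma:   dict mapping (i, j) to the strength of the implication i -> j.
    Each j gains sigma[(i, j)] * beliefs[i]; reads use the old beliefs only.
    """
    new_beliefs = dict(beliefs)
    for (i, j), strength in sigma.items():
        # Belief in i, scaled by how strongly i implies j, flows into j.
        new_beliefs[j] = new_beliefs.get(j, 0.0) + strength * beliefs.get(i, 0.0)
    return new_beliefs


rules = {("rain", "wet_ground"): 0.9, ("wet_ground", "slippery"): 0.7}
print(infer_step({"rain": 1.0}, rules))
# {'rain': 1.0, 'wet_ground': 0.9, 'slippery': 0.0}
```

Because each step reads only the previous beliefs, `slippery` is still 0.0 after one call. A second call moves belief one more hop along the chain.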

Hebbian learning introduces adaptability by reinforcing synaptic connections when neurons activate concurrently, embodying the principle “neurons that fire together wire together.” The system incrementally increases the weight σ(i,j) whenever fact i contributes evidence for j during inference.

This results in a hybrid reasoning framework with two categories of rules: fixed parameters G learned during training, akin to traditional model weights, and rapidly evolving rules σ that adapt during inference (fast weights). Keeping trainable parameters and state variables in roughly a one-to-one ratio is pivotal to this design, and the same balance helps explain the success of both Transformers and state-space models.
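The split between slow rules G and fast rules σ can be sketched as a toy loop. This is an illustration under stated assumptions, not BDH's actual update: `G` stands in for trained weights and is never modified, `sigma` starts at zero for each sequence, and the generic Hebbian rule σ(i,j) += η·x(i)·y(j) (learning rate `eta`) with a ReLU read-out is a choice made for the example.

```python
def matvec(M, v):
    """Dense matrix-vector product on nested lists."""
    return [sum(M[r][c] * v[c] for c in range(len(v))) for r in range(len(M))]


def run_sequence(G, inputs, eta=0.1):
    """Process a sequence with fixed slow weights G and Hebbian fast weights sigma.

    G:      n x n trained matrix (never modified here).
    inputs: list of length-n activation vectors x.
    Returns the fast-weight matrix sigma after the whole sequence.
    """
    n = len(G)
    sigma = [[0.0] * n for _ in range(n)]  # fast rules start empty for each sequence
    for x in inputs:
        y = [max(0.0, v) for v in matvec(G, x)]  # slow rule: y = ReLU(G x)
        for i in range(n):
            for j in range(n):
                # Hebbian update: co-active x(i) and y(j) strengthen sigma(i, j).
                sigma[i][j] += eta * x[i] * y[j]
    return sigma


G = [[0.0, 1.0], [1.0, 0.0]]  # toy "trained" rule: fact 0 supports fact 1 and vice versa
sigma = run_sequence(G, [[1.0, 0.0], [1.0, 0.0]])
print(sigma)  # [[0.0, 0.2], [0.0, 0.0]]
```

Here `G` and `sigma` are both n × n, so trainable parameters and state variables come in a one-to-one ratio.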

The system naturally forms a graph structure: with n facts there are up to n² possible connections, and sparsity keeps the number of actual connections m in the range n ≤ m ≤ n². The result is a graph with n nodes and m edges, where each edge carries both state and trainable parameters and serves as a communication channel between nodes.
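The graph view can be captured by a small data structure in which every edge stores one trainable weight and one state value. The class and method names (`Edge`, `FactGraph`, `in_sparse_regime`) are hypothetical, chosen only to mirror the description.

```python
from dataclasses import dataclass, field


@dataclass
class Edge:
    weight: float       # trainable parameter, learned during training
    state: float = 0.0  # fast state sigma(i, j), rewritten during inference


@dataclass
class FactGraph:
    n: int                                     # number of facts (nodes)
    edges: dict = field(default_factory=dict)  # (i, j) -> Edge

    def add_edge(self, i, j, weight):
        if not (0 <= i < self.n and 0 <= j < self.n):
            raise ValueError("node index out of range")
        self.edges[(i, j)] = Edge(weight)

    @property
    def m(self):
        return len(self.edges)

    def in_sparse_regime(self):
        # Sparsity regime from the text: n <= m <= n^2.
        return self.n <= self.m <= self.n ** 2


ring = FactGraph(4)
for i in range(4):
    ring.add_edge(i, (i + 1) % 4, weight=0.5)
print(ring.m, ring.in_sparse_regime())  # 4 True
```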

Key Innovations Bridging AI and Neuroscience

The Dragon Hatchling introduces three groundbreaking contributions that unify artificial and biological intelligence. First, it encodes all model parameters as the topology and weights of communication graphs, with inference states represented by edge reweighting. This constructs a programmable interacting particle system where particles correspond to graph nodes and scalar state variables reside on edges.

Second, BDH’s local kernel maps seamlessly onto graph-based spiking neural networks with Hebbian learning and with excitatory and inhibitory circuits. This is not a superficial analogy but a faithful representation of the computational mechanisms essential for language understanding and reasoning.

Third, BDH-GPU offers a tensor-optimized implementation via mean-field approximation. Instead of explicit graph communication, particles interact through a “radio broadcast” mechanism, enabling efficient GPU training while preserving mathematical equivalence to the graph model. The system scales primarily along a single neuronal dimension n, with three parameter matrices E, Dₓ, Dᵧ containing approximately (3 + o(1)) n d parameters.
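A quick way to check the (3 + o(1)) n d figure is to count the entries of the three matrices. The sizes below (n = 32,768 neurons, d = 256) are illustrative assumptions, not reported BDH configurations, and the function ignores the lower-order o(1) · n d term.

```python
def bdh_gpu_param_count(n, d):
    """Leading-order parameter count: E, D_x and D_y each hold n * d entries."""
    encoder = n * d      # E: maps the n-dim neuron space down to d dimensions
    decoder_x = n * d    # D_x: maps d dimensions back up to n
    decoder_y = n * d    # D_y: maps d dimensions back up to n
    return encoder + decoder_x + decoder_y  # ignores the o(1) * n * d remainder


print(bdh_gpu_param_count(n=32768, d=256))  # 25165824
```

Doubling n doubles the count, which is what "scales primarily along a single neuronal dimension n" means at fixed d.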

Empirical evaluations show that BDH matches GPT-2’s performance on language modeling and translation tasks at model sizes from 10 million to 1 billion parameters, trained on identical datasets. The architecture follows the expected scaling laws and offers unprecedented interpretability through its biological grounding.

From Distributed Graph Dynamics to Neural Computation

Unlike traditional models relying on global matrix operations, BDH operates through localized, distributed graph dynamics. The system comprises n neuron particles communicating via weighted graph edges, with inference governed by edge reweighting processes termed the “equations of reasoning.”

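Mathematically, the model employs interaction kernels with programmable rulesets. Consider a system of z species with state vector (q₁, …, q_z), where d_j is the decay rate of species j and r_{i,j} is the rate at which species i interacts with species j. One step of the kernel updates each species as: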

q_j′ := (1 − d_j) q_j + Σ_i r_{i,j} q_i q_j

BDH restricts this general form to edge-reweighting kernels, which suit distributed implementation yet remain expressive enough to model attention-based language processing.
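One synchronous step of the kernel formula can be simulated directly. This sketch treats d_j as a per-species decay and r_{i,j} as an interaction rate, as in the formula. The variable names `decay` and `rate` are hypothetical, and the example does not impose the edge-reweighting restriction.

```python
def kernel_step(q, decay, rate):
    """One synchronous step of q_j' := (1 - d_j) q_j + sum_i r_{i,j} q_i q_j.

    q:     concentrations (q_1, ..., q_z) of the z species
    decay: per-species decay d_j
    rate:  z x z matrix with rate[i][j] = r_{i,j}
    """
    z = len(q)
    return [
        (1.0 - decay[j]) * q[j] + sum(rate[i][j] * q[i] * q[j] for i in range(z))
        for j in range(z)
    ]


q = [1.0, 0.5]
decay = [0.1, 0.2]
rate = [[0.0, 0.4],   # species 0 boosts species 1
        [0.0, 0.0]]
print(kernel_step(q, decay, rate))  # approximately [0.9, 0.6]
```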

A scheduler executes these kernels in a round-robin manner, alternating between local neuron computations and communication over synaptic connections. State variables X(i), Y(i), A(i) represent rapid neuronal pulse dynamics, while σ(i,j) encodes synaptic plasticity between connected neuron pairs.
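The alternation between local computation and communication can be illustrated with a toy round-robin scheduler. The firing rule (a neuron sets Y(i) = 1 when X(i) + A(i) reaches 1), the use of A(i) as an input accumulator, and all function names are hypothetical simplifications, not BDH's actual kernels.

```python
def local_neuron_step(state):
    """Local phase: each neuron fires (Y = 1) if its pulse plus accumulated input reaches 1."""
    for i in range(len(state["X"])):
        state["Y"][i] = 1.0 if state["X"][i] + state["A"][i] >= 1.0 else 0.0
        state["X"][i] = 0.0  # pulses are short-lived
        state["A"][i] = 0.0


def synapse_step(state):
    """Communication phase: each edge (i, j) carries sigma(i, j) * Y(i) into A(j)."""
    for (i, j), weight in state["sigma"].items():
        state["A"][j] += weight * state["Y"][i]


def round_robin(kernels, state, rounds):
    """Execute the kernels in a fixed cyclic order and record the schedule."""
    schedule = []
    for _ in range(rounds):
        for kernel in kernels:
            kernel(state)
            schedule.append(kernel.__name__)
    return schedule


state = {
    "X": [1.0, 0.0, 0.0],                  # initial pulse at neuron 0
    "Y": [0.0, 0.0, 0.0],
    "A": [0.0, 0.0, 0.0],
    "sigma": {(0, 1): 1.0, (1, 2): 1.0},  # chain of synapses 0 -> 1 -> 2
}
round_robin([local_neuron_step, synapse_step], state, rounds=3)
print(state["Y"])  # [0.0, 0.0, 1.0]: the pulse has travelled 0 -> 1 -> 2
```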

Decoding Attention as Logical Micro-Inference

Conventional attention mechanisms operate on vector transformations involving keys, queries, and values. BDH uncovers a finer-grained interpretation: attention emerges as logical inference between individual neurons. Each attention weight σ(i,j) embodies an inductive bias, quantifying the likelihood that implication i → j guides the system’s next inference step.

This aligns with logical axioms such as:

(X → (i → j)) → ((X → i) → (X → j))

Here, the weight σ(i,j) does not represent absolute truth but a utility-based bias that steers inference from known facts toward intermediate concepts, effectively serving as a logical shortcut.
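The axiom itself is a tautology of propositional logic. A one-line Lean 4 proof spells out the reasoning step: from a proof of X, the first hypothesis yields i → j, the second yields i, and applying one to the other gives j. The theorem name is arbitrary.

```lean
theorem distribute_implication (X i j : Prop) :
    (X → (i → j)) → ((X → i) → (X → j)) :=
  fun h hi hx => h hx (hi hx)
```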

Chains of implications activate pathways through the system’s graph, with attention selectively enabling synapses to participate in reasoning. This heuristic evaluation mirrors informal human reasoning, dynamically prioritizing the most plausible facts for subsequent consideration.
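Following implication chains while prioritizing the most plausible facts can be sketched as belief propagation with top-k pruning at each hop. The pruning rule, the parameter `k`, and the function name `reason` are assumptions made for illustration; the description does not specify how BDH selects which facts stay active.

```python
import heapq


def reason(seed, sigma, hops, k):
    """Follow implication chains, keeping only the k most plausible facts per hop.

    seed:  dict fact -> belief for the initially known facts
    sigma: dict (i, j) -> strength of the implication i -> j
    """
    frontier = dict(seed)
    reached = dict(seed)
    for _ in range(hops):
        scores = {}
        for (i, j), w in sigma.items():
            if i in frontier:
                scores[j] = scores.get(j, 0.0) + w * frontier[i]
        # Heuristic pruning: only the k best-supported conclusions stay active.
        frontier = dict(heapq.nlargest(k, scores.items(), key=lambda kv: kv[1]))
        for fact, s in frontier.items():
            reached[fact] = max(reached.get(fact, 0.0), s)
    return reached


sigma = {("a", "b"): 0.9, ("a", "c"): 0.2, ("b", "d"): 0.8, ("c", "e"): 0.9}
print(reason({"a": 1.0}, sigma, hops=2, k=1))
```

With `k=1`, the weak link a → c is pruned at the first hop, so e is never reached even though c → e is strong. With `k=2`, both chains survive.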

Neurons Modeled as Oscillatory Particles

BDH can be conceptualized as a physical dynamical system of interacting oscillatory particles. Imagine n particles arranged in a ring, connected by elastic links representing synaptic weights σ(i,j). The system exhibits dual timescales: slow evolution of tension along connectors and rapid pulse activations at nodes.

Initially, the elastic connectors have zero displacement. When a pulse x(i) occurs at node i, tension accumulates in its adjacent connectors σ(i,·), and the resulting perturbations spread through the connections Gᵧ to neighboring nodes. If a perturbation exceeds a threshold, it triggers an activation y(j) at node j, which propagates through the connections Gₓ to modify pulses at other nodes.

A key Hebbian mechanism emerges: a pulse y(j′) followed by a pulse x(i′) increases the tension σ(i′, j′) on the connector between them, even in the absence of direct causality. This models synaptic strengthening through coincident activity.

From the connectors’ perspective, existing tension propagates through a three-hop pathway i → j → i′, enabling complex state evolution that supports reasoning and memory formation.
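The Hebbian tension rule, in which a pulse y(j′) followed by a pulse x(i′) raises σ(i′, j′), can be simulated over discrete time steps. The binary pulse trains, the learning rate `eta`, and the function name are hypothetical. The point is that the update depends only on timing, not on whether y(j′) actually caused x(i′).

```python
def hebbian_tension(x_train, y_train, eta=0.1):
    """Accumulate connector tension from lagged pulse coincidences.

    x_train[t], y_train[t]: 0/1 pulse vectors of length n at time step t.
    sigma[i][j] grows whenever y(j) fires at step t and x(i) fires at step t + 1.
    """
    n = len(x_train[0])
    sigma = [[0.0] * n for _ in range(n)]  # connectors start with zero tension
    for t in range(len(y_train) - 1):
        y_now, x_next = y_train[t], x_train[t + 1]
        for i in range(n):
            for j in range(n):
                sigma[i][j] += eta * x_next[i] * y_now[j]
    return sigma


y_train = [[1, 0], [0, 0], [1, 0], [0, 0]]  # y(0) fires at steps 0 and 2
x_train = [[0, 0], [0, 1], [0, 0], [0, 1]]  # x(1) fires at steps 1 and 3
print(hebbian_tension(x_train, y_train))  # [[0.0, 0.0], [0.2, 0.0]]
```

Reversing the order (x firing before y) leaves σ(1, 0) at zero, so the rule is sensitive to which pulse comes first.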

Bridging Biological Models and GPU-Efficient Implementations

Transitioning from BDH’s biologically inspired formulation to the practical BDH-GPU implementation preserves mathematical fidelity while enabling scalable training. BDH-GPU models the n-particle system via mean-field interactions, avoiding explicit graph communication overhead.

Each particle i maintains a state vector ρ_i(t) in ℝ^d for each layer. Particle interactions depend on a tuple Z_i comprising current state, encoder E(i,·), and decoders Dₓ(·,i), Dᵧ(·,i). The system scales uniformly with neuron count n, grouping neurons into k-tuples when employing block-diagonal matrices such as RoPE (k=2) or ALiBi (k=1).
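For the k = 2 case, the widely used RoPE formulation rotates each consecutive pair of coordinates by a position-dependent angle, so each 2 × 2 block couples exactly two neurons. The sketch uses the common base of 10000; whether BDH-GPU uses these exact frequencies is an assumption.

```python
import math


def rope(v, pos, base=10000.0):
    """Rotary position embedding: rotate pair (v[2p], v[2p+1]) by pos * base**(-2p / n)."""
    n = len(v)
    if n % 2:
        raise ValueError("RoPE groups coordinates into pairs, so n must be even")
    out = list(v)
    for p in range(n // 2):
        theta = pos * base ** (-2.0 * p / n)
        c, s = math.cos(theta), math.sin(theta)
        a, b = v[2 * p], v[2 * p + 1]
        # 2x2 rotation block: only neurons 2p and 2p + 1 are mixed.
        out[2 * p] = a * c - b * s
        out[2 * p + 1] = a * s + b * c
    return out


def dot(u, w):
    return sum(a * b for a, b in zip(u, w))


q, k = [0.3, 1.0, 0.5, 0.2], [1.0, 0.1, 0.4, 0.7]
# The score depends only on the relative offset (5 - 3 == 2 - 0).
print(dot(rope(q, 5), rope(k, 3)), dot(rope(q, 2), rope(k, 0)))
```

Because each block is a rotation, the dot product between a rotated query at position s and a rotated key at position t depends only on the offset s − t, and vector norms are preserved.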

Communication follows a broadcast paradigm: each particle computes a local message m_i ∈ ℝ^d, broadcasts it, receives the mean-field message m̄ = Σ m_i, and updates its activation and state accordingly. This design eliminates communication bottlenecks while preserving essential particle dynamics.
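One broadcast round can be written so that each particle only ever computes locally and reads a single shared aggregate. The message function (a ReLU of the state) and the update rule (averaging the state with the aggregate) are placeholders chosen for the example, and the aggregate is a plain sum as in the formula for m̄.

```python
def broadcast_round(states, make_message, update):
    """One broadcast round: local messages, one shared aggregate, local updates.

    states:       list of per-particle state vectors rho_i (length d)
    make_message: rho_i -> m_i (length d), computed locally
    update:       (rho_i, m_bar) -> new rho_i, computed locally
    """
    messages = [make_message(rho) for rho in states]
    d = len(messages[0])
    # The "radio broadcast": every particle hears the same aggregate m_bar = sum_i m_i.
    m_bar = [sum(m[c] for m in messages) for c in range(d)]
    return [update(rho, m_bar) for rho in states], m_bar


def relu_message(rho):
    return [max(0.0, r) for r in rho]


def blend(rho, m_bar):
    return [0.5 * r + 0.5 * mb for r, mb in zip(rho, m_bar)]


new_states, m_bar = broadcast_round([[1.0, -1.0], [2.0, 0.5]], relu_message, blend)
print(m_bar)       # [3.0, 0.5]
print(new_states)  # [[2.0, -0.25], [2.5, 0.5]]
```

Each round costs O(n·d) work in total, because every particle sends one length-d message and reads one aggregate instead of exchanging messages with every other particle.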

From an engineering standpoint, transformations between length-n vectors pass through intermediate dimension d representations. The encoder E compresses n-dimensional inputs to d dimensions, while decoders Dₓ, Dᵧ reconstruct back to n dimensions. This low-rank factorization maintains parameter efficiency at O(nd) while supporting high-dimensional reasoning.

Performance Benchmarks: Interpretable Models Rivaling GPT-2

BDH-GPU achieves competitive results on language modeling and translation benchmarks, retaining the parallel training capabilities, attention mechanisms, and scaling behaviors characteristic of Transformers. Crucially, it adds a layer of biological interpretability and novel reasoning capabilities.

Compared to GPT-2, BDH employs fewer parameter matrices, enabling more compact interpretation. It scales predominantly with neuron count n, aligns key-value state and parameter matrix dimensions, imposes no context length restrictions, supports linear attention in high dimensions, and utilizes positive sparse activation vectors.

Scaling experiments demonstrate Transformer-like loss reductions as parameter counts increase. BDH-GPU often achieves faster convergence per data token, excelling on both natural language tasks and synthetic reasoning challenges.

Inference FLOPs scale as O(ndL) per token, with each parameter accessed approximately O(L) times per token, where L is the number of layers (typically fewer than in Transformers). State access takes constant time per token with small overhead. Current implementations do not exploit activation sparsity, which leaves room for further efficiency gains.
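A back-of-envelope estimate helps make O(ndL) concrete. It assumes each layer touches each of the roughly 3nd entries of E, Dₓ, Dᵧ once per token, counts 2 FLOPs per multiply-accumulate, and ignores attention-state access. The sizes n = 32,768, d = 256, and L = 6 are illustrative, not reported BDH settings.

```python
def inference_flops_per_token(n, d, layers, flops_per_mac=2):
    """Back-of-envelope FLOPs per token for the parameter matmuls only.

    Assumes every layer touches each of the ~3 * n * d entries of E, D_x, D_y once
    (one multiply-accumulate each); attention-state reads/writes are not counted.
    """
    macs_per_layer = 3 * n * d
    return flops_per_mac * macs_per_layer * layers


print(inference_flops_per_token(n=32768, d=256, layers=6))  # 301989888
```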

Emergence of Hierarchical Modular Structures

Large-scale reasoning systems benefit from hierarchical modularity. BDH shows that such modularity can emerge organically from local graph dynamics during training rather than by explicit design.

Information propagation graphs naturally develop modular structures optimizing the tradeoff between efficiency and accuracy. This emergent modularity offers advantages over rigid partitions: nodes can belong to multiple overlapping communities, act as bridges, and dynamically adjust scales and inter-community relationships as task demands evolve.

Historical parallels include the evolution of the World Wide Web, from early directory-based systems like DMOZ and Craigslist to organically grown knowledge networks such as Wikipedia and Reddit’s interlinked communities, and to link-analysis methods such as Google’s PageRank algorithm. Theoretical frameworks like Newman modularity and stochastic block models formalize these phenomena.
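Newman modularity compares the fraction of edges inside each community with what a random graph with the same degrees would give. The sketch below computes it for a hand-made example (two triangles joined by a bridge); it is the standard textbook formula, not code from the BDH analysis.

```python
def modularity(edges, community):
    """Newman modularity of a partition of an undirected simple graph.

    Q = sum over communities c of  L_c / m - (deg_c / (2 m))**2,
    where L_c counts edges inside c and deg_c sums the degrees of c's nodes.
    """
    m = len(edges)
    inside, degree = {}, {}
    for u, v in edges:
        for node in (u, v):
            c = community[node]
            degree[c] = degree.get(c, 0) + 1
        if community[u] == community[v]:
            inside[community[u]] = inside.get(community[u], 0) + 1
    return sum(inside.get(c, 0) / m - (degree[c] / (2 * m)) ** 2 for c in degree)


# Two triangles joined by a single bridge edge (2, 3).
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
split = {0: "A", 1: "A", 2: "A", 3: "B", 4: "B", 5: "B"}
print(modularity(edges, split))  # 5/14, about 0.357
```

Putting every node in one community gives Q = 0, so the positive score reflects the two-community structure, with nodes 2 and 3 acting as the bridge.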

BDH exhibits scale-free properties indicative of operation near criticality, balancing stability for short-term information retrieval with adaptability to sudden shifts when new knowledge invalidates prior reasoning. This criticality shows up as a power-law distribution in the likelihood that new information affects n′ nodes, consistent with empirical observations.

ReLU-Lowrank Blocks: Enhancing Noise Reduction and Affinity Representation

BDH-GPU introduces ReLU-lowrank feedforward blocks that differ from conventional low-rank approximations in machine learning. These blocks effectively reduce noise and faithfully represent affinity functions on sparse positive vectors, making them well-suited for linear attention mechanisms.

The ReLU-lowrank operation maps an input vector z ∈ ℝ^n to f_{DE}(z) := ReLU(D E z), where encoder E compresses n-dimensional inputs to d dimensions, decoder D reconstructs back to n dimensions, and ReLU ensures non-negativity. This contrasts with standard multilayer perceptrons but maintains comparable expressiveness within the positive orthant.
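The map f_{DE} can be written in a few lines. The tiny matrices below are hand-picked for illustration, with E of shape d × n and D of shape n × d to match the description of E compressing to d dimensions and D reconstructing n.

```python
def relu_lowrank(z, E, D):
    """f_DE(z) = ReLU(D E z) with E of shape d x n and D of shape n x d."""
    h = [sum(E[k][i] * z[i] for i in range(len(z))) for k in range(len(E))]   # n -> d
    out = [sum(D[i][k] * h[k] for k in range(len(h))) for i in range(len(D))]  # d -> n
    return [max(0.0, v) for v in out]  # clip to the positive orthant


E = [[1.0, 0.0, 1.0],
     [0.0, 1.0, -1.0]]   # d = 2 rows, n = 3 columns
D = [[1.0, 0.0],
     [0.0, 1.0],
     [1.0, -1.0]]        # n = 3 rows, d = 2 columns
print(relu_lowrank([1.0, 0.0, 2.0], E, D))  # [3.0, 0.0, 5.0]
```

The middle coordinate of D E z is −2, which ReLU clips to 0, keeping the output in the positive orthant.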

Error analysis shows that the low-rank approximation G ≈ D E achieves pointwise error of O(√(log n / d)) for matrices with bounded norms. Incorporating ReLU suppresses noise further, enabling closer approximations of positive transformations such as Markov chain propagations z ↦ G′ z for stochastic matrices G′.
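The effect of ReLU on noise can be checked numerically. This sketch uses a random-projection construction (E with Gaussian entries of variance 1/d and D = Eᵀ, so D E ≈ I) as an assumed stand-in for a learned factorization, takes G to be the identity, and applies it to a sparse positive vector. It is not the construction from the BDH error analysis.

```python
import math
import random


def matvec(M, v):
    return [sum(row[c] * v[c] for c in range(len(v))) for row in M]


def random_lowrank_identity(n, d, seed=0):
    """E (d x n) with i.i.d. N(0, 1/d) entries and D = E^T, so that D E ~ I_n."""
    rng = random.Random(seed)
    E = [[rng.gauss(0.0, 1.0 / math.sqrt(d)) for _ in range(n)] for _ in range(d)]
    D = [[E[k][i] for k in range(d)] for i in range(n)]
    return E, D


n, d = 400, 64
E, D = random_lowrank_identity(n, d)
z = [0.0] * n
for i in (3, 50, 123):  # sparse, positive input vector
    z[i] = 1.0

approx = matvec(D, matvec(E, z))  # noisy estimate of G z with G = I
relu_approx = [max(0.0, v) for v in approx]

err_plain = max(abs(a - t) for a, t in zip(approx, z))
err_relu = max(abs(a - t) for a, t in zip(relu_approx, z))
print(f"max error without ReLU: {err_plain:.3f}")
print(f"max error with ReLU:    {err_relu:.3f}")
print(f"sqrt(log n / d):        {math.sqrt(math.log(n) / d):.3f}")
```

Because the target z is non-negative, clipping a negative estimate to zero can only move it closer to its target, so the ReLU error never exceeds the plain error for any seed.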