Introducing DeepSeek-V3.2 and DeepSeek-V3.2-Speciale: Advanced Reasoning Models for Extended Contexts and Agent Workflows

How can a model deliver GPT-5-level reasoning on long-context, tool-heavy workloads without paying the quadratic attention cost and GPU bill that usually come with them? DeepSeek's answer is a pair of new models, DeepSeek-V3.2 and DeepSeek-V3.2-Speciale. Both put reasoning quality first, support long context windows, and are tuned for agent workflows. Both come with open weights and production APIs, with reported performance on par with GPT-5. DeepSeek-V3.2-Speciale goes further, reaching reasoning proficiency comparable to Gemini 3.0 Pro on public benchmarks and competitions.

Revolutionizing Attention: Sparse Attention with Near-Linear Complexity

Both DeepSeek-V3.2 and DeepSeek-V3.2-Speciale use the DeepSeek-V3 Mixture of Experts (MoE) transformer architecture, with approximately 671 billion total parameters, of which 37 billion are active per token. The models build on V3.1 Terminus and add one key change: DeepSeek Sparse Attention (DSA), introduced through continued pre-training.

DSA splits the attention mechanism into two stages. First, a lightning indexer uses a small number of low-precision attention heads to compute relevance scores across all token pairs quickly. Then a fine-grained selector keeps the top-k key-value entries per query, so the main attention path runs Multi-Query Attention and Multi-Head Latent Attention over this sparse subset only.
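The two-stage flow described above can be sketched in a few lines of plain Python. This is a minimal illustration, not DeepSeek's implementation: it assumes a single attention head, uses a plain dot product as the indexer score, and ignores low-precision arithmetic and the latent-attention layout. The function and parameter names (`sparse_attention`, `idx_queries`, `idx_keys`) are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def sparse_attention(queries, keys, values, idx_queries, idx_keys, top_k):
    """Causal attention in which each query attends only to its top_k indexed keys.

    Assumption: the indexer score is a simple dot product between small
    "indexer" query/key vectors; the real scoring function may differ.
    """
    outputs, selections = [], []
    for t, q in enumerate(queries):
        # Stage 1 (indexer): cheap relevance score for every visible position s <= t.
        scores = [dot(idx_queries[t], idx_keys[s]) for s in range(t + 1)]
        # Stage 2 (selector): keep the top_k highest-scoring positions.
        ranked = sorted(range(t + 1), key=lambda s: scores[s], reverse=True)
        chosen = sorted(ranked[:top_k])
        # Main attention: softmax over the selected keys only.
        scale = 1.0 / math.sqrt(len(q))
        weights = softmax([dot(q, keys[s]) * scale for s in chosen])
        dim = len(values[0])
        out = [sum(w * values[s][d] for w, s in zip(weights, chosen)) for d in range(dim)]
        outputs.append(out)
        selections.append(chosen)
    return outputs, selections
```

When `top_k` is at least the sequence length, every visible position is selected and the function reduces to ordinary causal dense attention, which is a convenient sanity check for a sketch like this.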

This reduces the cost of the main attention path from quadratic, O(L²), to near-linear, O(kL), where L is the sequence length and k (much smaller than L) is the number of tokens selected per query. The indexer still scores all token pairs, but because it uses few heads at low precision, that pass is much cheaper than full dense attention. Reported benchmarks show DeepSeek-V3.2 matching the accuracy of the dense Terminus baseline while roughly halving inference cost on long-context tasks. It also achieves higher throughput and lower memory consumption on hardware such as the NVIDIA H800 and on serving backends such as vLLM and SGLang.
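To make the O(L²) versus O(kL) gap concrete, the following sketch counts the query-key pairs the main attention path has to process under causal masking. It uses the 128,000-token context and the 2048 selected entries per query mentioned in this article; the helper names are hypothetical. The count covers the main attention path only and leaves out the indexer pass, so the resulting ratio is an upper bound on attention savings, not a prediction of end-to-end cost.

```python
def dense_pairs(seq_len):
    """Query-key pairs scored by causal dense attention: 1 + 2 + ... + L."""
    return seq_len * (seq_len + 1) // 2

def sparse_pairs(seq_len, top_k):
    """Pairs scored by the main attention when each query keeps at most top_k keys."""
    return sum(min(t, top_k) for t in range(1, seq_len + 1))

seq_len, top_k = 128_000, 2048
dense = dense_pairs(seq_len)
sparse = sparse_pairs(seq_len, top_k)
print(f"dense: {dense:,}  sparse: {sparse:,}  ratio: {dense / sparse:.1f}x")
```

The gap grows with sequence length: for short prompts (L at or below k) the two counts are identical, which is why the savings show up mainly on long-context workloads.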

Progressive Pre-Training Strategy for Sparse Attention

DeepSeek Sparse Attention is introduced through a two-phase continued pre-training regimen on top of the DeepSeek-V3.1 Terminus model. In the first phase, the dense warm-up, dense attention remains active and all backbone parameters are frozen. Only the lightning indexer is trained, using a Kullback-Leibler divergence loss that aligns its output with the dense attention distribution on sequences of up to 128,000 tokens. This phase uses a relatively modest 2 billion tokens and a small number of training steps, which is enough for the indexer to learn effective relevance scoring.
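The warm-up objective can be sketched for a single query position as follows. This is an illustration under stated assumptions: the target distribution is formed by summing the dense attention weights over heads and renormalizing, and the indexer output is turned into a distribution with a softmax. Both choices, and the function names, are assumptions made for this example and are not confirmed by the text above.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions over the same positions."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q) if pi > 0)

def indexer_alignment_loss(dense_attn_heads, indexer_scores):
    """Alignment loss for one query position.

    dense_attn_heads: per-head attention distributions over the visible keys
    indexer_scores:   raw indexer scores over the same keys
    """
    n_keys = len(indexer_scores)
    # Assumed target: sum attention over heads, then renormalize to sum to 1.
    summed = [sum(head[s] for head in dense_attn_heads) for s in range(n_keys)]
    total = sum(summed)
    target = [x / total for x in summed]
    predicted = softmax(indexer_scores)
    return kl_divergence(target, predicted)

heads = [[0.7, 0.2, 0.1], [0.5, 0.4, 0.1]]
print(indexer_alignment_loss(heads, [2.0, 1.0, 0.0]))   # indexer roughly agrees
print(indexer_alignment_loss(heads, [0.0, 1.0, 2.0]))   # indexer ranks keys backwards
```

Because the backbone is frozen in this phase, a loss of this kind would update only the indexer's parameters; the second call yields a larger loss than the first because the indexer's ranking is reversed relative to the dense attention target.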

In the second phase, sparse training, the selector keeps 2048 key-value entries per query, the backbone is unfrozen, and training continues on approximately 944 billion tokens. The indexer’s gradients still come solely from the alignment loss with dense attention, now computed on the selected positions. This schedule lets DSA act as a drop-in replacement for dense attention, with comparable quality and significantly lower compute on long sequences.

Enhancing Performance with Group Relative Policy Optimization (GRPO)

On top of the sparse attention architecture, DeepSeek-V3.2 uses Group Relative Policy Optimization (GRPO) as its primary reinforcement learning (RL) method. The RL compute budget exceeds 10% of the original pre-training compute, which signals a heavy emphasis on post-training.

RL training is organized by domain: mathematics, competitive programming, logical reasoning, web browsing, agentic tasks, and safety. Dedicated specialist runs for each domain are distilled into the shared base model that powers both DeepSeek-V3.2 and DeepSeek-V3.2-Speciale. That base is listed at 685 billion parameters, more than the 671 billion main-model figure cited above, presumably because the published checkpoint counts additional weights such as the multi-token prediction module. For stable and efficient learning, the GRPO setup uses an unbiased Kullback-Leibler estimator, off-policy sequence masking, and mechanisms that keep Mixture of Experts routing and sampling masks consistent between training and inference.
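The "group relative" part of GRPO can be illustrated with a short sketch. In the commonly described form of the algorithm, several responses are sampled for the same prompt, each is scored, and each response's advantage is its reward standardized against the group's mean and standard deviation, so no separate value network is needed. The code below shows only that step; it is a simplified sketch, the function name is hypothetical, and the KL estimator, sequence masking, and routing consistency mechanisms mentioned above are not modeled.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-8):
    """Standardize each reward against the other samples for the same prompt."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    # eps keeps the division defined when every sample gets the same reward.
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled answers to one math problem, scored 1.0 if verified correct.
rewards = [1.0, 0.0, 0.0, 1.0]
print(group_relative_advantages(rewards))
```

Responses that beat the group average receive a positive advantage and are reinforced, while below-average responses are pushed down. When all samples in a group earn the same reward, every advantage is zero and the group contributes no learning signal.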

Agent-Centric Dataset, Thinking Modes, and Tool Integration Protocol

The DeepSeek team has curated an extensive synthetic agent dataset, encompassing over 1,800 distinct environments and more than 85,000 challenging tasks. These span code agents, search agents, general-purpose tools, and code interpreter configurations. Tasks are designed to be difficult to solve yet straightforward to verify, serving as robust RL targets alongside real-world coding and search interaction traces.

At inference time, DeepSeek-V3.2 offers explicit thinking and non-thinking modes. By default, the deepseek-reasoner endpoint operates in thinking mode, where the model generates an internal chain of thought before delivering the final response. The reasoning persists across tool invocations within a turn and is reset only when a new user message arrives. Tool calls and their results stay in the context even when reasoning text is trimmed to fit the context budget.

The chat template has been updated to reflect this behavior. The DeepSeek-V3.2-Speciale package provides Python-based encoder and decoder utilities instead of traditional Jinja templates. Messages can include a reasoning_content field alongside the main content, controlled via a thinking parameter. A dedicated developer role is reserved exclusively for search agents and is blocked in general chat flows through the official API, which prevents accidental misuse.
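The context rule described above (reasoning is kept across tool calls within a turn, dropped once a new user message arrives, while tool calls and results are always kept) can be sketched as a small history-cleaning function. Only the `reasoning_content` field name comes from the text; the message layout with `role`, `content`, and `tool_calls` follows common chat API conventions and is an assumption here, as are the function name `prepare_context` and the `get_weather` tool. This is not the official encoder.

```python
def prepare_context(messages):
    """Return a copy of the history with stale reasoning removed.

    Reasoning attached to assistant messages is kept only for the current
    turn, meaning messages that come after the latest user message.
    """
    last_user = max(
        (i for i, m in enumerate(messages) if m["role"] == "user"), default=-1
    )
    cleaned = []
    for i, msg in enumerate(messages):
        msg = dict(msg)  # shallow copy so the caller's history is untouched
        if i < last_user and msg["role"] == "assistant":
            msg.pop("reasoning_content", None)
        cleaned.append(msg)
    return cleaned

history = [
    {"role": "user", "content": "Weather in Paris?"},
    {"role": "assistant", "content": "",
     "reasoning_content": "I need the weather tool.",
     "tool_calls": [{"name": "get_weather", "arguments": {"city": "Paris"}}]},
    {"role": "tool", "content": "18C, cloudy"},
]
# Mid-turn: the tool result just came back, so the reasoning is still present.
print(prepare_context(history)[1].get("reasoning_content"))

history.append({"role": "assistant", "content": "It is 18C and cloudy.",
                "reasoning_content": "The tool returned 18C."})
history.append({"role": "user", "content": "And Berlin?"})
# New user message: earlier reasoning is dropped, tool call and result remain.
print([sorted(m.keys()) for m in prepare_context(history)])
```

Keeping tool calls and results while discarding old reasoning preserves the facts the agent gathered without spending context on chains of thought that no longer matter for the next turn.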

Benchmark Achievements and Competitive Excellence

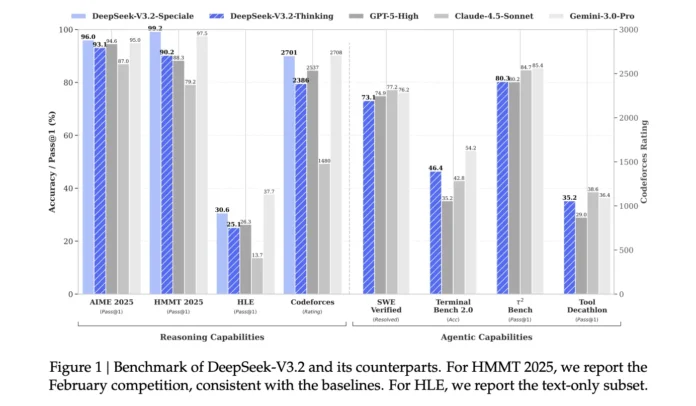

On a variety of standard reasoning and coding benchmarks, DeepSeek-V3.2 and particularly DeepSeek-V3.2-Speciale demonstrate performance comparable to GPT-5 and approach the capabilities of Gemini 3.0 Pro. They excel on test suites such as AIME 2025, HMMT 2025, GPQA, and LiveCodeBench, all while offering enhanced cost efficiency for long-context workloads.

In formal competitions, DeepSeek-V3.2-Speciale has achieved gold medal-level results at prestigious events including the International Mathematical Olympiad 2025, the Chinese Mathematical Olympiad 2025, and the International Olympiad in Informatics 2025. It also delivers competitive gold medal-level performance at the ICPC World Finals 2025, underscoring its prowess in complex problem-solving scenarios.

Summary of Innovations and Benefits

- DeepSeek Sparse Attention introduces a near-linear attention mechanism with O(kL) complexity, reducing long-context API costs by approximately 50% compared to previous dense models, while maintaining accuracy on par with DeepSeek-V3.1 Terminus.

- The model family retains a massive 671 billion parameter MoE backbone with 37 billion active parameters per token and supports a full 128,000-token context window in production APIs, enabling practical handling of lengthy documents, multi-step reasoning chains, and extensive tool interaction histories.

- Post-training leverages Group Relative Policy Optimization (GRPO) with a reinforcement learning compute budget exceeding 10% of pre-training, focusing on specialized domains such as mathematics, coding, reasoning, browsing, agent tasks, and safety, with contest-style specialist datasets released for external validation.

- DeepSeek-V3.2 integrates explicit thinking modes into tool use, supporting tool calls in both thinking and non-thinking modes. Its protocol carries internal reasoning across tool calls and resets it only on a new user message, which keeps agentic workflows coherent.