How can an AI system solve olympiad-level mathematics problems in clear natural language while also checking that its own reasoning is correct? DeepSeek AI has released DeepSeekMath-V2, an open-weights large language model optimized for natural language theorem proving with self-verification. The model is built on DeepSeek-V3.2-Exp-Base, is a 685-billion-parameter mixture-of-experts model, and is available on Hugging Face under the Apache 2.0 license.

In evaluations, DeepSeekMath-V2 reaches gold-medal-level scores on IMO 2025 and CMO 2024, and scores 118 out of 120 points on Putnam 2024 when given scaled test-time compute.

Limitations of Rewarding Only Final Answers

Most recent mathematical reasoning models are trained with reinforcement learning that rewards only the final answer on benchmarks such as AIME and HMMT. Within about a year, this approach took models from weak baselines to near-saturation on short-answer contests.

Nonetheless, the DeepSeek team identifies two fundamental shortcomings in this approach:

- A correct numerical result does not necessarily reflect valid reasoning; errors in intermediate steps can cancel out, leading to a right answer for the wrong reasons.

- Many tasks, such as olympiad proofs and theorem proving, require a complete, logically coherent argument in natural language rather than a single numeric answer, so there is no final answer for the reward to check.

To overcome these challenges, DeepSeekMath-V2 prioritizes proof integrity and logical soundness over mere answer correctness. The system assesses whether a proof is thorough and logically consistent, using this evaluation as the primary feedback signal during training.

Building a Verifier Before the Proof Generator

The foundational concept behind DeepSeekMath-V2 is a “verifier-first” training paradigm. The team developed a large language model verifier capable of analyzing a given problem alongside a candidate proof, producing both a detailed natural language critique and a discrete quality score from the set {0, 0.5, 1}.

Initial reinforcement learning data was sourced from 17,503 proof-centric problems collected from olympiads, team selection tests, and post-2010 contests that explicitly require proofs. Candidate solutions were generated by the DeepSeek-V3.2 reasoning model, which was prompted to iteratively refine its own proofs; this added detail but also produced many imperfect proofs. Expert mathematicians then annotated these proofs on the 0, 0.5, 1 rubric according to rigor and completeness.

The verifier was trained using Group Relative Policy Optimization (GRPO), with a reward function comprising two elements:

- Format reward: Ensures the verifier’s output adheres to a strict template, including a comprehensive analysis section and a final score enclosed in a box.

- Score reward: Penalizes discrepancies between the verifier’s predicted score and the expert-assigned score.

This process yielded a verifier capable of consistently grading olympiad-style proofs with high reliability.
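The two verifier reward terms described above can be sketched as follows. This is a minimal illustration, not the team's implementation: the `\boxed{...}` output convention, the product of the two terms, and the `1 - |predicted - expert|` form of the score reward are assumptions chosen to match the description, and all function names are hypothetical.

```python
import re

# Assumed output convention: free-text analysis followed by \boxed{score}.
BOXED = re.compile(r"\\boxed\{\s*(0(?:\.5|\.0)?|1(?:\.0)?)\s*\}")

def parse_score(output: str):
    """Return the last boxed score (0, 0.5 or 1) in the output, or None."""
    matches = BOXED.findall(output)
    return float(matches[-1]) if matches else None

def format_reward(output: str) -> float:
    """1.0 if a non-empty analysis precedes a valid boxed score, else 0.0."""
    has_score = parse_score(output) is not None
    has_analysis = bool(output.split("\\boxed")[0].strip())
    return 1.0 if has_score and has_analysis else 0.0

def score_reward(predicted: float, expert: float) -> float:
    """Assumed form: reward shrinks with distance from the expert label."""
    return 1.0 - abs(predicted - expert)

def verifier_reward(output: str, expert: float) -> float:
    """Combine both terms (multiplication is an assumption of this sketch)."""
    predicted = parse_score(output)
    if predicted is None:
        return 0.0
    return format_reward(output) * score_reward(predicted, expert)
```

With this shape, an output that matches the expert label but omits the analysis earns nothing, and a well-formatted output loses reward in proportion to how far its score is from the expert's.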

Introducing Meta Verification to Mitigate Fabricated Critiques

A verifier trained this way can still game the reward: it can output the correct final score while fabricating issues in its analysis, which makes its explanations untrustworthy.

To counteract this, DeepSeek introduced a meta verifier that evaluates the fidelity of the verifier’s critique. It examines the original problem, the proof, and the verifier’s analysis to determine whether the critique accurately reflects the proof’s quality. The meta verifier assesses factors such as the restatement of proof steps, identification of genuine flaws, and consistency between the narrative and the final score.

The meta verifier is also trained with GRPO, using its own format and score rewards. Its output, a meta quality score, is added as an extra reward term for the base verifier. Analyses that invent problems receive low meta scores, even if the proof score is accurate. Experimental results show this approach raises the average meta-evaluated quality of analyses from approximately 0.85 to 0.96 on validation data, while proof scoring accuracy stays stable.
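To make the effect of the extra term concrete, here is a small sketch of a verifier reward that includes the meta quality score. The article only says the meta score is an additional reward; combining it multiplicatively, and the function name, are assumptions of this example.

```python
def verifier_reward_with_meta(format_ok: bool,
                              predicted: float,
                              expert: float,
                              meta_quality: float) -> float:
    """Hypothetical verifier reward once meta verification is added.

    predicted / expert: proof scores in {0, 0.5, 1}.
    meta_quality: meta verifier's judgment of the critique, in [0, 1].
    """
    if not format_ok:
        return 0.0
    score_term = 1.0 - abs(predicted - expert)  # assumed score reward
    return score_term * meta_quality            # assumed combination

# Right score, faithful critique vs. right score, fabricated critique.
faithful = verifier_reward_with_meta(True, 0.5, 0.5, meta_quality=1.0)
fabricated = verifier_reward_with_meta(True, 0.5, 0.5, meta_quality=0.0)
```

Under this assumed form, a critique that invents flaws earns no reward even when the boxed score agrees with the expert, which is the behavior the meta verifier is meant to enforce.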

Self-Verification in Proof Generation and Iterative Refinement

With a robust verifier in place, the DeepSeek team proceeded to train the proof generator. This generator produces both a solution and a self-assessment following the same rubric as the verifier.

The generator’s reward function integrates three components:

- The verifier’s score on the generated proof.

- The alignment between the generator’s self-reported score and the verifier’s evaluation.

- The meta verifier’s assessment of the generator’s self-analysis.

Mathematically, the reward combines these signals with weights α = 0.76 for the proof score and β = 0.24 for the self-analysis score, multiplied by a format compliance term. This incentivizes the generator to produce proofs that the verifier accepts and to honestly acknowledge any remaining flaws. Overstating the quality of a flawed proof results in penalties due to score disagreement and low meta scores.
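A short sketch of this weighted reward may help. The weights 0.76 and 0.24 and the format multiplier come from the description above; how the self-analysis score is built from score agreement and the meta score (here, their product) is an assumption, as are the function and parameter names.

```python
ALPHA = 0.76  # weight on the verifier's proof score
BETA = 0.24   # weight on the self-analysis score

def generator_reward(proof_score: float,
                     self_score: float,
                     meta_quality: float,
                     format_ok: bool = True) -> float:
    """Hypothetical generator reward.

    proof_score:  verifier's score for the proof, in {0, 0.5, 1}.
    self_score:   score the generator assigned to its own proof.
    meta_quality: meta verifier's rating of the self-analysis, in [0, 1].
    """
    agreement = 1.0 - abs(self_score - proof_score)  # assumed form
    self_analysis = agreement * meta_quality         # assumed combination
    return float(format_ok) * (ALPHA * proof_score + BETA * self_analysis)

# A flawed proof (verifier score 0.5), reported honestly vs. overstated.
honest = generator_reward(0.5, self_score=0.5, meta_quality=1.0)
overstated = generator_reward(0.5, self_score=1.0, meta_quality=0.5)
```

In this sketch the honest self-assessment earns about 0.62 and the overstated one about 0.44, so for the same proof the generator is better off admitting the remaining flaw.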

DeepSeekMath-V2 leverages the model’s extensive 128,000 token context window to handle complex problems. Since a single pass may not suffice to correct all issues without exceeding context limits, the system employs sequential refinement. It iteratively generates a proof and self-analysis, feeds them back as context, and prompts the model to improve the proof by addressing previously identified problems. This loop continues until the context budget is exhausted.
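The refinement loop can be sketched as below. Everything here is illustrative: `generate` is a hypothetical callable standing in for the model, token counts are approximated by whitespace-separated words rather than a real tokenizer, and `max_rounds` is a safety cap added for this example.

```python
from typing import Callable, List, Tuple

Attempt = Tuple[str, str]  # (proof, self_analysis)

def count_tokens(text: str) -> int:
    # Crude stand-in for a tokenizer: count whitespace-separated words.
    return len(text.split())

def refine(problem: str,
           generate: Callable[[str, List[Attempt]], Attempt],
           context_budget: int = 128_000,
           max_rounds: int = 64) -> List[Attempt]:
    """Generate, self-analyze, and feed attempts back until the budget runs out."""
    history: List[Attempt] = []
    used = count_tokens(problem)
    for _ in range(max_rounds):
        # The model sees the problem plus all earlier proofs and critiques.
        proof, analysis = generate(problem, history)
        cost = count_tokens(proof) + count_tokens(analysis)
        if used + cost > context_budget:
            break  # this attempt would not fit back into the context
        history.append((proof, analysis))
        used += cost
    return history
```

The last element of the returned list is the most refined attempt that still fits in the context; a real system would replace `count_tokens` with the model's tokenizer.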

Automated Labeling and Scalable Verification

As the proof generator advances, it produces increasingly challenging proofs that are expensive to label manually. To maintain up-to-date training data, the team developed an automatic labeling pipeline based on scaled verification.

For each candidate proof, multiple independent verifier analyses are sampled and then evaluated by the meta verifier. If several high-quality analyses converge on the same critical issues, the proof is marked incorrect. Conversely, if no valid issues remain after meta verification, the proof is labeled correct. In later training stages, this automated labeling replaces human annotations, with spot checks confirming strong agreement with expert judgments.
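The labeling rule above can be sketched roughly as follows. The sample count, the threshold for "several", and the handling of in-between cases (returned as `None`, for example to route to human review) are assumptions of this sketch, and both callables are hypothetical stand-ins for the verifier and meta verifier. For brevity it counts confirmed critiques and does not check that they point at the same issue.

```python
from typing import Callable, Optional, Tuple

Analysis = Tuple[str, float]  # (critique text, proof score in {0, 0.5, 1})

def auto_label(problem: str,
               proof: str,
               sample_analysis: Callable[[str, str], Analysis],
               critique_is_faithful: Callable[[str, str, str], bool],
               n_samples: int = 8,
               min_confirmed: int = 2) -> Optional[str]:
    """Label a proof from sampled verifier analyses checked by a meta verifier."""
    confirmed = 0
    for _ in range(n_samples):
        critique, score = sample_analysis(problem, proof)
        # Only critiques that report an issue need meta verification.
        if score < 1.0 and critique_is_faithful(problem, proof, critique):
            confirmed += 1
    if confirmed >= min_confirmed:
        return "incorrect"  # several validated critiques found real issues
    if confirmed == 0:
        return "correct"    # no reported issue survived meta verification
    return None             # ambiguous: left undecided in this sketch
```

Spending more verifier samples per proof is what makes the label reliable enough to stand in for a human annotation.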

Benchmarking and Competitive Performance

DeepSeekMath-V2 was assessed across multiple challenging benchmarks:

On an internal dataset of 91 CNML-level problems spanning algebra, geometry, number theory, combinatorics, and inequalities, DeepSeekMath-V2 outperformed competitors such as Gemini 2.5 Pro and GPT-5 Thinking High in every category, as measured by its own verifier.

On the IMO Shortlist 2024, the sequential refinement process with self-verification enhanced both the “pass at 1” and “best of 32” quality metrics as the number of refinement iterations increased.

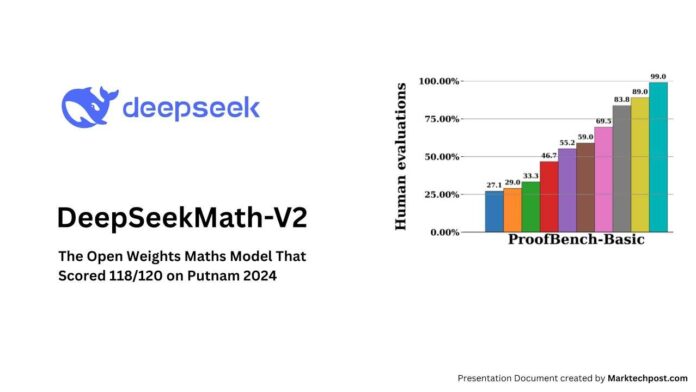

In the IMO ProofBench expert evaluation, DeepSeekMath-V2 surpassed Google DeepMind's Gemini Deep Think (IMO Gold) on the Basic subset and remained competitive on the Advanced subset, while clearly outperforming other large language models.

Competition highlights include:

- IMO 2025: Successfully solved 5 out of 6 problems, achieving gold medal caliber performance.

- CMO 2024: Fully solved 4 problems and earned partial credit on another, reaching gold medal standards.

- Putnam 2024: Completed 11 of 12 problems flawlessly and made minor errors on the last, scoring 118 out of 120 points, surpassing the top human score of 90.

Summary of Innovations and Impact

- DeepSeekMath-V2 is a 685-billion-parameter model built on DeepSeek-V3.2-Exp-Base, designed for natural language theorem proving with integrated self-verification, and released with open weights under the Apache 2.0 license.

- The key advancement lies in a verifier-first training framework utilizing GRPO-trained verifiers and meta verifiers that evaluate proofs based on logical rigor rather than solely on final answers, addressing the disconnect between correct results and valid reasoning.

- The proof generator is trained against these verifiers with a composite reward system that balances proof quality, self-evaluation consistency, and analysis authenticity, enhanced by sequential refinement within a 128K token context to iteratively improve proofs.

- With scaled computational resources during testing, DeepSeekMath-V2 attains gold-level achievements on IMO 2025 and CMO 2024, and an outstanding 118/120 score on Putnam 2024, exceeding the best human performance that year.