Cisco, in collaboration with Splunk, has released the Cisco Time Series Model, a univariate zero-shot foundation model for time series data that targets observability and security metrics. The model is available as an open-weight checkpoint on Hugging Face under the Apache 2.0 license and is designed to forecast without task-specific fine-tuning. It builds on TimesFM 2.0 and adds a multiresolution architecture that combines coarse and fine historical data in a single context window.

Understanding the Need for Multiresolution Context in Observability

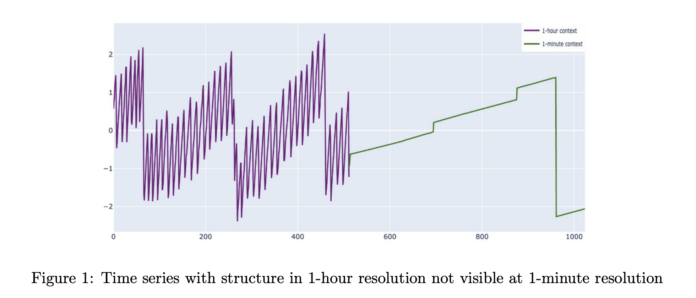

Time series data in production environments exhibit patterns across multiple temporal scales. Weekly cycles, long-term trends, and gradual saturation become apparent only at coarser resolutions, whereas traffic surges, saturation events, and incident dynamics show up at finer granularities, often at one- or five-minute intervals. Traditional time series foundation models typically operate on a single resolution, with context windows ranging from 512 to 4096 data points. Although TimesFM 2.5 extends this to 16,384 points, that still covers only about 11 days of one-minute data (16,384 points divided by 1,440 minutes per day).
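The coverage arithmetic behind these context lengths can be checked with a short sketch. The helper name `coverage_days` is hypothetical and exists only for illustration; the context lengths are the ones quoted above.

```python
MINUTES_PER_DAY = 24 * 60  # 1,440


def coverage_days(context_points: int, step_minutes: int = 1) -> float:
    """Wall-clock span, in days, covered by evenly spaced context points."""
    return context_points * step_minutes / MINUTES_PER_DAY


# Single-resolution contexts at one-minute sampling.
for points in (512, 4096, 16_384):
    print(f"{points:>6} one-minute points cover {coverage_days(points):.2f} days")

# For comparison: the same 512-point budget spent on hourly aggregates.
print(f"   512 hourly points cover {coverage_days(512, step_minutes=60):.2f} days")
```

A 512-point window reaches about 21 days of history when the points are hourly aggregates, compared with roughly 8.5 hours at one-minute sampling, which is the gap a multiresolution context is meant to close.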

This limitation poses challenges in observability scenarios, where data retention policies often aggregate older data, preserving only coarse summaries while discarding fine-grained samples. Typically, detailed data is retained for a short period before being rolled up into hourly aggregates. The Cisco Time Series Model is designed around this reality: it treats coarse historical data as a primary input and uses it to improve fine-resolution forecasts. Unlike models that assume a uniform temporal grid, it processes multiresolution contexts directly, matching the way observability data is actually stored.

Multiresolution Inputs and Forecasting Strategy

The Cisco Time Series Model ingests two distinct context sequences: a coarse context (xc) and a fine context (xf), each with a maximum length of 512 points. The temporal spacing of the coarse context is 60 times that of the fine context, which matches typical observability setups, such as 512 hours of hourly aggregates paired with 512 minutes of one-minute data. Both sequences end at the same forecast cutoff point. The model then forecasts 128 future points at the fine resolution, providing both mean predictions and quantile estimates from 0.1 to 0.9, which enables probabilistic forecasting.
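As an illustration of the input layout, the following minimal sketch derives both contexts from a single one-minute history. The function `build_contexts` is hypothetical, and mean aggregation is an assumption: the article does not say which rollup function produces the coarse series.

```python
from statistics import fmean

FINE_LEN = 512    # maximum fine-context length (from the article)
COARSE_LEN = 512  # maximum coarse-context length (from the article)
RATIO = 60        # one coarse step spans 60 fine steps (from the article)


def build_contexts(series, ratio=RATIO, fine_len=FINE_LEN, coarse_len=COARSE_LEN):
    """Return (coarse, fine) contexts that end at the same cutoff.

    Assumption: coarse points are means of consecutive fine points.
    """
    fine = list(series[-fine_len:])
    # Drop the oldest leftover points so the last coarse bucket ends at the cutoff.
    usable = len(series) - (len(series) % ratio)
    aligned = series[len(series) - usable:]
    coarse = [fmean(aligned[i:i + ratio]) for i in range(0, usable, ratio)]
    return coarse[-coarse_len:], fine


# Synthetic one-minute history with a daily sawtooth (illustrative data only).
history = [float(i % 1440) for i in range(40_000)]
coarse, fine = build_contexts(history)
print(len(coarse), len(fine))
```

In a real deployment the coarse series would more likely come straight from the stored hourly rollups rather than being recomputed, since the fine samples behind them have usually been discarded.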

Innovative Architecture: Enhancing TimesFM with Resolution Awareness

At its core, the Cisco Time Series Model leverages the TimesFM patch-based decoder architecture. Input sequences undergo normalization and are segmented into non-overlapping patches before passing through a residual embedding layer. The transformer backbone comprises 50 decoder-only layers, culminating in a residual block that projects tokens onto the forecast horizon. Notably, the model discards traditional positional embeddings, instead encoding sequence structure through patch ordering, multiresolution design, and novel resolution embeddings.

Two key architectural enhancements enable multiresolution processing. First, a special separator token (referred to as ST) is inserted between the coarse and fine token streams, marking the boundary within the sequence space. Second, resolution embeddings (RE) are introduced in the model space: one embedding vector is shared by all coarse tokens and a different one by all fine tokens. Ablation studies confirm that these components significantly boost forecasting performance, particularly for long context windows.
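A toy sketch of how the two components could fit together is shown below. It is not the model's implementation: the embedding width, the 32-point patch length, and the choice to leave the separator without a resolution embedding are all assumptions made for illustration, and every name is hypothetical.

```python
import random

D_MODEL = 8        # toy embedding width (hypothetical)
PATCH_LEN = 32     # assumed patch length; not stated in the article
CONTEXT_LEN = 512  # per-resolution context length (from the article)


def add(vec, emb):
    """Element-wise sum of a token vector and an embedding vector."""
    return [v + e for v, e in zip(vec, emb)]


def assemble_sequence(coarse_tokens, fine_tokens, separator, re_coarse, re_fine):
    """Lay out [coarse + RE_coarse ..., ST, fine + RE_fine ...]."""
    sequence = [add(tok, re_coarse) for tok in coarse_tokens]
    sequence.append(list(separator))  # assumption: ST gets no resolution embedding
    sequence.extend(add(tok, re_fine) for tok in fine_tokens)
    return sequence


rng = random.Random(0)


def rand_vec():
    return [rng.gauss(0.0, 0.02) for _ in range(D_MODEL)]


n_patches = CONTEXT_LEN // PATCH_LEN  # 16 patch tokens per resolution
coarse_tokens = [rand_vec() for _ in range(n_patches)]
fine_tokens = [rand_vec() for _ in range(n_patches)]
separator, re_coarse, re_fine = rand_vec(), rand_vec(), rand_vec()

sequence = assemble_sequence(coarse_tokens, fine_tokens, separator, re_coarse, re_fine)
print(len(sequence))  # 16 coarse + 1 separator + 16 fine tokens
```

Under these assumptions the transformer sees 33 tokens, and the only signals telling it which tokens are hourly and which are per-minute are the separator position and the two resolution vectors.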

The decoding process itself is multiresolution-aware. As the model generates predictions for the fine resolution horizon, newly forecasted fine points are appended to the fine context. Simultaneously, aggregated summaries of these predictions update the coarse context, creating an autoregressive feedback loop where both resolutions evolve in tandem during forecasting.
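The feedback loop described above can be sketched as follows. The function `toy_model` is a hypothetical stand-in for the real network, mean aggregation for the coarse update is an assumption, and the sketch also assumes the forecast cutoff falls on a coarse bucket boundary.

```python
from statistics import fmean

HORIZON, CONTEXT_LEN, RATIO = 128, 512, 60  # values from the article


def toy_model(coarse, fine, horizon=HORIZON):
    """Hypothetical stand-in: blend the last fine value with the coarse mean."""
    level = 0.5 * fine[-1] + 0.5 * fmean(coarse)
    return [level] * horizon


def multires_decode(coarse, fine, total_steps, model=toy_model):
    """Autoregressive decoding that updates both contexts after each step."""
    coarse, fine = list(coarse), list(fine)
    pending = []   # predicted fine points not yet rolled up into a coarse point
    forecast = []
    while len(forecast) < total_steps:
        step = model(coarse, fine)
        forecast.extend(step)
        # Fine context: append predictions, keep the most recent 512 points.
        fine = (fine + step)[-CONTEXT_LEN:]
        # Coarse context: every 60 predicted fine points become one coarse point.
        pending.extend(step)
        while len(pending) >= RATIO:
            coarse.append(fmean(pending[:RATIO]))
            pending = pending[RATIO:]
        coarse = coarse[-CONTEXT_LEN:]
    return forecast[:total_steps], coarse, fine


forecast, new_coarse, new_fine = multires_decode([1.0] * 512, [3.0] * 512, total_steps=256)
print(forecast[0], forecast[128])
```

Because 128 is not a multiple of 60, the sketch carries leftover fine predictions in `pending` until a full coarse bucket is available. How the actual model handles partial buckets is not covered in the article.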

Training Methodology and Dataset Composition

The Cisco Time Series Model is developed through continued pretraining atop the existing TimesFM weights, culminating in a model with approximately 500 million parameters. Training employs the AdamW optimizer for biases, normalization layers, and embeddings, while utilizing the Muon optimizer for hidden layers, all governed by cosine learning rate schedules. The loss function combines mean squared error on the mean forecast with quantile loss across quantiles from 0.1 to 0.9. The training regimen spans 20 epochs, with the optimal checkpoint selected based on validation loss.
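A minimal sketch of such a combined objective follows. The decile grid, the equal weighting of the two terms, and the averaging over quantiles are assumptions; the article only states that mean squared error and quantile loss are combined. All function names are hypothetical.

```python
QUANTILES = [round(0.1 * k, 1) for k in range(1, 10)]  # 0.1 ... 0.9, decile grid assumed


def mse(y_true, y_mean):
    """Mean squared error of the mean forecast."""
    return sum((y - m) ** 2 for y, m in zip(y_true, y_mean)) / len(y_true)


def pinball(y_true, y_pred, q):
    """Quantile (pinball) loss for a single quantile level q."""
    return sum(
        max(q * (y - p), (q - 1) * (y - p)) for y, p in zip(y_true, y_pred)
    ) / len(y_true)


def combined_loss(y_true, y_mean, y_quantiles, quantiles=QUANTILES):
    """MSE on the mean plus the average pinball loss (equal weights assumed)."""
    q_loss = sum(pinball(y_true, y_quantiles[q], q) for q in quantiles) / len(quantiles)
    return mse(y_true, y_mean) + q_loss


# Toy usage: a perfect mean forecast with every quantile forecast biased low by 0.5.
y_true = [1.0, 2.0, 3.0]
y_mean = [1.0, 2.0, 3.0]
y_quantiles = {q: [y - 0.5 for y in y_true] for q in QUANTILES}
print(combined_loss(y_true, y_mean, y_quantiles))
```

The pinball term penalizes under-prediction more heavily at high quantile levels and over-prediction more heavily at low ones, which is what pushes each output head toward its own quantile.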

The training corpus is extensive and heavily skewed towards observability data. Splunk contributed roughly 400 million metric time series from their Observability Cloud, sampled at one-minute intervals over 13 months and partially aggregated to five-minute intervals. Overall, the dataset encompasses over 300 billion unique data points, distributed as approximately 35% one-minute observability data, 16.5% five-minute observability data, 29.5% GIFT Eval pretraining data, 4.5% Chronos datasets, and 14.5% synthetic KernelSynth series.
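The stated mixture can be sanity-checked with a few lines of arithmetic. Since the article gives the total only as "over 300 billion", the per-source counts below are lower bounds, and the dictionary keys are just labels chosen for this sketch.

```python
TOTAL_POINTS = 300e9  # "over 300 billion", treated here as a lower bound

MIX_PERCENT = {
    "observability, 1-minute": 35.0,
    "observability, 5-minute": 16.5,
    "GIFT Eval pretraining": 29.5,
    "Chronos datasets": 4.5,
    "KernelSynth (synthetic)": 14.5,
}

total_percent = sum(MIX_PERCENT.values())
observability_percent = (
    MIX_PERCENT["observability, 1-minute"] + MIX_PERCENT["observability, 5-minute"]
)

for name, pct in MIX_PERCENT.items():
    billions = TOTAL_POINTS * pct / 100 / 1e9
    print(f"{name:<26} {pct:>5.1f}%  >= {billions:.1f}B points")

print(f"total: {total_percent:.1f}%, observability: {observability_percent:.1f}%")
```

The shares add up to 100%, and the two observability sources together account for 51.5% of the corpus, or at least about 154 billion points.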

Performance Evaluation on Observability and General Forecasting Benchmarks

The model’s efficacy was assessed on two primary benchmarks: an observability dataset derived from Splunk metrics at one- and five-minute resolutions, and a filtered version of the GIFT Eval benchmark, which excludes datasets overlapping with TimesFM 2.0 training data.

On the observability dataset at one-minute resolution with 512 fine steps, the Cisco Time Series Model achieved a mean absolute error (MAE) of 0.4788, significantly outperforming TimesFM 2.5 (0.6265) and TimesFM 2.0 (0.6315). Similar improvements were observed in mean absolute scaled error and continuous ranked probability score metrics, with comparable gains at the five-minute resolution. The model also surpassed other baselines such as Chronos 2, Chronos Bolt, Toto, and AutoARIMA under the normalized evaluation metrics.
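To put the MAE figures in relative terms, the short calculation below uses only the three values quoted above; the helper `relative_reduction` is a hypothetical name.

```python
MAE = {
    "Cisco Time Series Model": 0.4788,
    "TimesFM 2.5": 0.6265,
    "TimesFM 2.0": 0.6315,
}


def relative_reduction(baseline: float, candidate: float) -> float:
    """Fractional error reduction of candidate relative to baseline."""
    return (baseline - candidate) / baseline


ours = MAE["Cisco Time Series Model"]
for name in ("TimesFM 2.5", "TimesFM 2.0"):
    pct = 100 * relative_reduction(MAE[name], ours)
    print(f"MAE reduction vs {name}: {pct:.1f}%")
```

This works out to roughly 23.6% lower MAE than TimesFM 2.5 and 24.2% lower than TimesFM 2.0 on the one-minute observability benchmark.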

On the filtered GIFT Eval benchmark, the Cisco Time Series Model demonstrated performance on par with the base TimesFM 2.0 and remained competitive with TimesFM 2.5, Chronos 2, and Toto. The primary achievement lies in maintaining robust general forecasting capabilities while delivering substantial advantages in long-context and observability-specific scenarios.

Summary of Key Insights

- The Cisco Time Series Model is a univariate zero-shot foundation model that enhances the TimesFM 2.0 decoder backbone with a multiresolution architecture, specifically optimized for observability and security metric forecasting.

- It processes two contexts, one coarse and one fine, each up to 512 steps long, with the coarse resolution spaced 60 times wider than the fine, and forecasts 128 fine-resolution points with both mean and quantile outputs.

- The model has roughly 500 million parameters and was trained on more than 300 billion data points: about 51.5% Splunk observability metrics, with the remainder drawn from GIFT Eval pretraining data, Chronos datasets, and synthetic KernelSynth series.

- It delivers superior accuracy on observability benchmarks at one- and five-minute resolutions compared to TimesFM 2.0, Chronos, and other baselines, while preserving competitive performance on general-purpose forecasting tasks.