Cerebras has released MiniMax-M2-REAP-162B-A10B, a compressed Sparse Mixture-of-Experts (SMoE) causal language model derived from MiniMax-M2. The variant uses Router-weighted Expert Activation Pruning (REAP) to remove experts and shrink the memory footprint. It largely preserves the performance of the original MiniMax-M2, which has 230 billion total parameters and 10 billion active per token. It targets deployment scenarios such as coding assistants and tool-calling workflows.

Model Design and Technical Highlights

The MiniMax-M2-REAP-162B-A10B model features the following specifications:

- Foundation: Based on the MiniMax-M2 architecture

- Compression Approach: REAP (Router-weighted Expert Activation Pruning)

- Total Parameters: 162 billion

- Active Parameters per Token: 10 billion

- Transformer Layers: 62 blocks

- Attention Heads per Layer: 48

- Experts per MoE Layer: 180, pruned from the original 256

- Experts Activated per Token: 8

- Maximum Context Length: 196,608 tokens

- License: Modified MIT, inherited from MiniMaxAI's MiniMax-M2

This SMoE architecture stores 162 billion parameters in total. Each token is routed through only 8 of the 180 experts in each layer, so the per-token compute is comparable to that of a dense model with about 10 billion parameters. The original MiniMax-M2 was designed specifically for coding and agentic tasks and has 230 billion parameters with 10 billion active per token. Pruning removes whole experts without changing how many are activated per token, so the compressed version keeps the same 10 billion active parameters.
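To make "only a subset of experts" concrete, here is a toy sketch of generic top-k routing. It assumes a softmax over the selected router logits. MiniMax-M2's actual gating function may differ. The scalar experts and the helpers `top_k_route` and `moe_layer` are hypothetical illustrations, not Cerebras or MiniMax code.

```python
import math
import random


def top_k_route(logits: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their gates with a softmax.

    Assumption: softmax over the top-k logits; real SMoE routers vary.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    weights = [math.exp(logits[i] - m) for i in top]
    total = sum(weights)
    return [(i, w / total) for i, w in zip(top, weights)]


def moe_layer(x: float, experts, router_logits: list[float], k: int = 8) -> float:
    """Evaluate only the selected experts and sum their gate-weighted outputs."""
    return sum(gate * experts[i](x) for i, gate in top_k_route(router_logits, k))


random.seed(0)
num_experts = 180  # experts per layer after pruning
# Hypothetical scalar "experts": each one just scales its input.
scales = [random.uniform(-1.0, 1.0) for _ in range(num_experts)]
experts = [lambda x, s=s: s * x for s in scales]
logits = [random.gauss(0.0, 1.0) for _ in range(num_experts)]

routes = top_k_route(logits, k=8)
print(len(routes), round(sum(g for _, g in routes), 6))  # 8 experts; gates sum to 1
print(moe_layer(2.0, experts, logits))
```

In this sketch, 172 of the 180 experts are never called for the token. This is why the compute cost tracks the active parameter count rather than the total.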

REAP: A Novel Expert Pruning Strategy

MiniMax-M2-REAP-162B-A10B is produced by applying REAP uniformly across all mixture-of-experts blocks, pruning approximately 30% of the experts in each block (from 256 to 180). REAP computes a saliency score for each expert by combining two signals:

- Router Gate Values: Frequency and strength of expert selection by the router

- Expert Activation Norms: Magnitude of the expert’s output when active

The experts with the lowest combined scores are pruned. The surviving experts keep their original weights and router gates. The pruning is one-shot: no fine-tuning follows it.
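The scoring step can be sketched as follows. This assumes the saliency is the average, over calibration tokens routed to an expert, of the router gate value times the L2 norm of that expert's output. That is our reading of the REAP criterion, not Cerebras' released code. The record format and the helper names `reap_saliency` and `experts_to_keep` are hypothetical.

```python
import math
from collections import defaultdict


def reap_saliency(records, num_experts: int) -> list[float]:
    """Average gate * ||expert output||_2 over the tokens routed to each expert.

    records: iterable of (expert_id, gate_value, expert_output_vector) triples,
    one per (token, selected expert) pair collected on calibration data.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for expert_id, gate, output in records:
        norm = math.sqrt(sum(v * v for v in output))  # L2 norm of the expert's output
        totals[expert_id] += gate * norm
        counts[expert_id] += 1
    # Experts never selected on the calibration data get a score of 0.
    return [totals[j] / counts[j] if counts[j] else 0.0 for j in range(num_experts)]


def experts_to_keep(scores: list[float], n_keep: int) -> list[int]:
    """Indices of the n_keep highest-saliency experts, in their original order."""
    ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
    return sorted(ranked[:n_keep])


records = [
    (0, 0.6, [3.0, 4.0]),  # gate 0.6, ||output|| = 5 -> contributes 3.0
    (0, 0.2, [0.0, 5.0]),  # contributes 1.0
    (1, 0.3, [1.0, 0.0]),  # contributes 0.3
    (2, 0.9, [0.0, 2.0]),  # contributes 1.8
]
scores = reap_saliency(records, num_experts=4)
print(scores)  # [2.0, 0.3, 1.8, 0.0]
print(experts_to_keep(scores, n_keep=2))  # [0, 2]
```

For this model, the analogous step would keep 180 of 256 experts in every MoE layer. That prunes 76 experts, or about 29.7%, which matches the "approximately 30%" figure. The kept experts' weights are not modified.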

The REAP authors' theoretical analysis shows that merging experts, which sums their gates, leads to functional subspace collapse. The router can no longer control the merged experts independently based on the input, and this introduces an irreducible error. Pruning, by contrast, preserves independent routing over the surviving experts, and its error is proportional to the importance of the removed experts. This distinction underpins REAP's advantage.
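The following toy illustrates the argument; it is not the paper's proof. It makes several simplifying assumptions:

- There are two scalar linear experts, f1(x) = a1·x and f2(x) = a2·x.
- The gates are input-dependent values chosen by hand.
- Merging is done by simple averaging, which is only one possible merge rule.
- Pruning leaves the surviving gate unchanged.

All function names are hypothetical.

```python
def mixture(g1, g2, a1, a2, x):
    """Reference output of two gated linear experts: g1*a1*x + g2*a2*x."""
    return g1 * a1 * x + g2 * a2 * x


def merged_output(g1, g2, a_merged, x):
    """One merged expert that receives the summed gate (g1 + g2)."""
    return (g1 + g2) * a_merged * x


def pruned_output(g1, a1, x):
    """Expert 2 removed; expert 1 keeps its own weight and gate."""
    return g1 * a1 * x


def ideal_merged_weight(g1, g2, a1, a2):
    """The merged weight that would be exact for this particular input's gates."""
    return (g1 * a1 + g2 * a2) / (g1 + g2)


a1, a2, x = 1.0, 0.5, 1.0
inputs = {"A": (0.9, 0.1), "B": (0.5, 0.1)}  # router gates (g1, g2) on two inputs

for name, (g1, g2) in inputs.items():
    ref = mixture(g1, g2, a1, a2, x)
    merge_err = abs(ref - merged_output(g1, g2, (a1 + a2) / 2, x))
    prune_err = abs(ref - pruned_output(g1, a1, x))
    print(name, round(ideal_merged_weight(g1, g2, a1, a2), 4),
          round(merge_err, 4), round(prune_err, 4))
```

The exact merged weight would have to be 0.95 for input A but about 0.917 for input B. No single merged expert driven by one summed gate can reproduce both. The pruning error, in contrast, is exactly the removed expert's gated contribution g2·a2·x (0.05 here), which is small whenever that expert is weakly used.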

Evaluations across SMoE models ranging from 20 billion to 1 trillion parameters show that REAP consistently outperforms expert merging and other pruning methods. The gains hold on generative tasks such as code synthesis, mathematical problem solving, and tool invocation, including at compression rates as high as 50%.

Performance Retention at 30% Expert Pruning

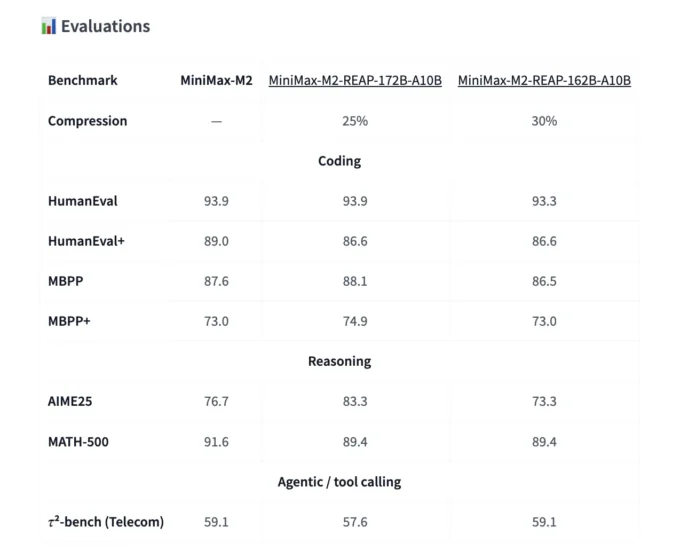

The benchmark comparison covers three checkpoints:

- The original MiniMax-M2 (230B parameters)

- A 172B parameter REAP variant with 25% pruning

- The 162B parameter REAP variant with 30% pruning

On coding benchmarks including HumanEval, HumanEval Plus, MBPP, and MBPP Plus, the 162B REAP model closely matches the base model’s performance, achieving around 90% on HumanEval and approximately 80% on MBPP. Both REAP variants track the original MiniMax-M2 within a narrow margin.

For reasoning tasks such as AIME 25 and MATH 500, minor fluctuations occur between models, but the 30% pruned checkpoint remains competitive without significant degradation.

In tool-calling and agentic evaluations, represented by the telecom domain of τ²-bench, the 162B REAP model matches the full MiniMax-M2. This indicates that pruning does not compromise practical utility.

These findings align with broader research indicating that REAP enables near-lossless compression for code generation and tool use across large-scale SMoE architectures.

Deployment Insights and Resource Efficiency

Cerebras provides a ready-to-use vLLM serving example, positioning MiniMax-M2-REAP-162B-A10B as a drop-in replacement for existing MiniMax-M2 deployments. The example enables tensor parallelism, expert parallelism, and automatic tool choice. It also selects MiniMax-M2-specific tool-call and reasoning parsers, which simplifies integration into production pipelines.

```bash
vllm serve cerebras/MiniMax-M2-REAP-162B-A10B \
    --tensor-parallel-size 8 \
    --tool-call-parser minimax_m2 \
    --reasoning-parser minimax_m2_append_think \
    --trust-remote-code \
    --enable_expert_parallel \
    --enable-auto-tool-choice
```
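Once the server is up, clients can talk to vLLM's OpenAI-compatible chat completions endpoint. The following is a minimal standard-library sketch. It assumes the server runs locally on vLLM's default port 8000 and that the served model name is the model path. The `run_tests` tool and the helper names are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"  # vLLM's default host/port; adjust for your server
MODEL = "cerebras/MiniMax-M2-REAP-162B-A10B"  # served model name defaults to the model path


def build_chat_request(prompt: str) -> dict:
    """OpenAI-style chat request that lets the model decide whether to call a tool."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "run_tests",  # hypothetical tool for illustration
                    "description": "Run the project's unit tests and report failures.",
                    "parameters": {
                        "type": "object",
                        "properties": {"path": {"type": "string"}},
                        "required": ["path"],
                    },
                },
            }
        ],
        "tool_choice": "auto",  # requires --enable-auto-tool-choice on the server
    }


def send(payload: dict) -> dict:
    """POST the payload to the chat completions endpoint and decode the JSON reply."""
    req = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)
```

Usage against a running server: `reply = send(build_chat_request("Run the tests in ./src and summarize failures."))`. Any tool invocation the parser extracts then appears under `reply["choices"][0]["message"].get("tool_calls")`.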

If GPU memory is tight, lower the --max-num-seqs parameter (for example, to 64). This caps the number of sequences vLLM batches concurrently and keeps the server stable.
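To put the memory savings in rough numbers, here is a back-of-the-envelope estimate of weight storage only. It ignores KV cache, activations, and framework overhead. The storage precision of the published checkpoint is not stated here, so both BF16 (2 bytes per parameter) and FP8 (1 byte) are shown as assumptions. The per-GPU figure assumes an even split across 8 ranks, which tensor and expert parallelism only approximate.

```python
def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes), ignoring KV cache and activations."""
    return params_billions * bytes_per_param


def per_gpu_gb(total_gb: float, num_gpus: int) -> float:
    """Weights per GPU if they are split evenly across devices."""
    return total_gb / num_gpus


for name, params in [("MiniMax-M2", 230), ("MiniMax-M2-REAP-162B-A10B", 162)]:
    for dtype, nbytes in [("BF16", 2), ("FP8", 1)]:
        total = weight_memory_gb(params, nbytes)
        print(f"{name:28s} {dtype}: {total:6.0f} GB total, "
              f"{per_gpu_gb(total, 8):5.1f} GB per GPU across 8 GPUs")
```

Under the BF16 assumption, weights shrink from about 460 GB to about 324 GB, roughly 40.5 GB per GPU across 8 GPUs. The roughly 136 GB saved can go to KV cache, which is what --max-num-seqs ultimately competes for.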

Summary of Key Advantages

- Efficient SMoE Architecture: The model balances a large total parameter count (162B) with a low active parameter count per token (10B), delivering high capacity at a computational cost similar to smaller dense models.

- Effective Expert Pruning via REAP: About 30% of experts are pruned based on router gate values and activation norms. The surviving experts keep their original weights and routing, so the model's behavior is largely preserved.

- Minimal Accuracy Loss: Across coding, reasoning, and tool invocation benchmarks, the pruned model performs nearly identically to the full 230B MiniMax-M2, demonstrating robust compression.

- Superior to Expert Merging: REAP avoids the pitfalls of functional subspace collapse inherent in expert merging, resulting in better generative performance across a wide range of large SMoE models.

Comparative Overview

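The following table summarizes the specifications of the MiniMax-M2 variants compared above, highlighting the trade-off between parameter count and pruning rate. Benchmark results appear in the figure above.

| Model | Total parameters | Active parameters per token | Experts pruned |
| --- | --- | --- | --- |
| MiniMax-M2 (original) | 230B | 10B | None (256 experts) |
| REAP variant, 172B | 172B | 10B | 25% |
| MiniMax-M2-REAP-162B-A10B | 162B | 10B | ~30% (256 → 180 experts) |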

Final Thoughts

The launch of MiniMax-M2-REAP-162B-A10B underscores the maturity of Router-weighted Expert Activation Pruning as a practical tool for deploying large-scale SMoE models. Cerebras prunes roughly 30% of experts with little measurable loss on coding, reasoning, and tool-calling benchmarks. This demonstrates a viable path toward more memory-efficient, scalable language models tailored for complex coding and agentic applications. It also marks a significant step in turning expert pruning from theoretical research into production-ready technology for next-generation AI systems.