Transforming Africa's Technology Workforce: A New Era Unfolds

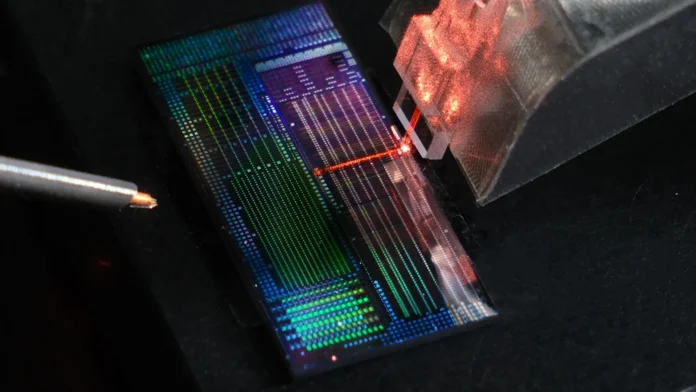

Africa's technology sector is at a pivotal crossroads. Artificial intelligence, cloud services, and digital work were once a distant vision. They are now an immediate reality, reshaping hiring strategies, government policy, and the career aspirations of the continent's young people....