Unveiling Privacy Risks: A Novel Approach to Detecting AI Training Data Exposure

Researchers have introduced a technique that exposes privacy weaknesses in AI models by determining whether specific data was used to train them.

Introducing CAMIA: A Context-Aware Membership Inference Attack

Developed by a team of researchers, the new method, named CAMIA (Context-Aware Membership Inference Attack), is reported to outperform earlier attempts to probe what AI models have memorized. Unlike traditional attacks, CAMIA is tailored to the generative nature of modern language models, which lets it detect training data leakage with greater precision.

Why Data Memorization in AI Models Raises Alarms

As AI models grow in complexity and scale, concerns about inadvertent data retention intensify. This phenomenon, known as “data memorization,” occurs when models unintentionally store sensitive information from their training datasets, which can later be extracted. For instance, in the medical field, AI trained on patient records might unintentionally reveal confidential health details. Similarly, corporate AI systems trained on proprietary communications risk exposing internal emails or strategic documents if exploited.

These privacy issues have gained heightened attention following announcements from major platforms like LinkedIn, which plans to utilize user data to enhance its generative AI capabilities. Such initiatives raise critical questions about the potential resurfacing of private content in AI-generated outputs.

Understanding Membership Inference Attacks (MIAs)

To evaluate whether AI models leak training data, security researchers use Membership Inference Attacks (MIAs). In essence, an MIA asks the model: “Was this particular data point part of your training set?” If an attacker can answer that question reliably, the model retains identifiable traces of its training data, which poses a significant privacy threat.

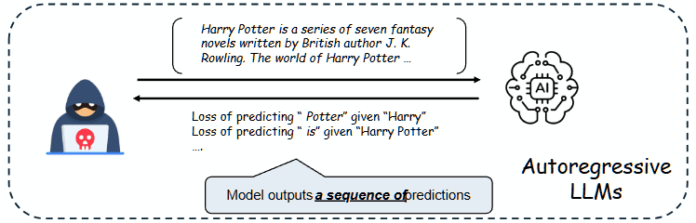

These attacks exploit the tendency of models to respond differently to familiar versus novel inputs. However, conventional MIAs have struggled against the sophisticated architectures of today’s generative AI, which produce text sequentially, token by token, rather than providing a single output per input.
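
The simplest form of this idea is a loss-threshold attack: score a text by the model's average negative log-likelihood and flag it as a likely training member when that loss is unusually low. The sketch below illustrates that classic baseline only, not CAMIA itself. The function names, the per-token probabilities, and the threshold are all hypothetical values chosen for the example.

```python
import math
from typing import List


def sequence_loss(token_probs: List[float]) -> float:
    """Average negative log-likelihood the model assigns to the observed tokens."""
    return -sum(math.log(p) for p in token_probs) / len(token_probs)


def predict_member(token_probs: List[float], threshold: float) -> bool:
    """Flag a text as a likely training member when its loss is below the threshold."""
    return sequence_loss(token_probs) < threshold


# Hypothetical probabilities a model assigned to each true next token.
familiar_text = [0.90, 0.80, 0.95]  # model is confident throughout
novel_text = [0.10, 0.20, 0.05]     # model is surprised throughout

THRESHOLD = 1.0  # assumed value; in practice it would be calibrated on known non-members

print(predict_member(familiar_text, THRESHOLD))
print(predict_member(novel_text, THRESHOLD))
```

A single number per text is all this baseline sees, which is why it suits classifiers better than token-by-token generators.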

Why Traditional MIAs Fall Short Against Generative AI

Earlier MIAs were designed for simpler classification models that output a single prediction per input. In contrast, large language models (LLMs) generate text one token at a time, with each token influenced by the preceding context. This dynamic process means that analyzing overall confidence scores for entire text blocks misses the nuanced, moment-to-moment shifts where data leakage can occur.
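
A small numeric sketch shows what a sequence-level score hides. The two hypothetical texts below receive the same average loss, yet one has a flat per-token profile while the other alternates between surprise and near-certainty. The probabilities are invented for illustration and do not come from any real model.

```python
import math
from typing import List


def per_token_losses(token_probs: List[float]) -> List[float]:
    """Negative log-likelihood of each observed token, in order."""
    return [-math.log(p) for p in token_probs]


def mean(values: List[float]) -> float:
    return sum(values) / len(values)


# Hypothetical probabilities assigned to the true next token at each position.
steady = [0.5, 0.5, 0.5, 0.5]    # uniformly moderate confidence
spiky = [0.25, 1.0, 0.25, 1.0]   # surprise alternating with certainty

steady_losses = per_token_losses(steady)
spiky_losses = per_token_losses(spiky)

# The averages match, so a sequence-level attack cannot tell the texts apart.
print(mean(steady_losses), mean(spiky_losses))
# The per-token profiles differ, which is what a token-level attack can use.
print(steady_losses)
print(spiky_losses)
```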

CAMIA’s Innovative Approach: Leveraging Contextual Uncertainty

The core breakthrough of CAMIA lies in recognizing that AI memorization is highly dependent on context. Specifically, models rely more heavily on memorized data when uncertain about the next token to generate.

For example, consider the phrase “Harry Potter is… written by… The world of Harry…” In this scenario, a model can confidently predict the next word “Potter” through general knowledge and contextual clues, without needing to recall specific training data. Conversely, if the prompt is simply “Harry,” predicting “Potter” becomes challenging unless the model has memorized exact sequences from its training set. A confident prediction in this ambiguous context strongly suggests memorization.
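
One way to put a number on "ambiguous context" is the Shannon entropy of the model's next-token distribution. The sketch below uses three invented distributions to mirror the Harry Potter example: a rich context, a bare prompt as a general-purpose model might see it, and the same bare prompt as a model that memorized the text might see it. All token names and probabilities are assumptions made for illustration, and entropy is only one possible uncertainty measure, not necessarily the one CAMIA uses.

```python
import math
from typing import Dict


def entropy_bits(dist: Dict[str, float]) -> float:
    """Shannon entropy (in bits) of a next-token probability distribution."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


# Rich context ("... The world of Harry"): the continuation is easy to infer.
rich_context = {"Potter": 0.97, "Styles": 0.02, "Truman": 0.01}

# Bare prompt ("Harry"), general model: many continuations are plausible.
bare_general = {"Potter": 0.25, "Styles": 0.25, "Truman": 0.25, "Houdini": 0.25}

# Bare prompt, model that has memorized the training text: still confident.
bare_memorized = {"Potter": 0.97, "Styles": 0.01, "Truman": 0.01, "Houdini": 0.01}

for name, dist in [("rich", rich_context),
                   ("bare/general", bare_general),
                   ("bare/memorized", bare_memorized)]:
    print(f"{name}: {entropy_bits(dist):.3f} bits")
```

Low entropy in the rich context is unremarkable. Low entropy on the bare prompt, where a general model would be uncertain, is the suspicious case.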

Token-Level Analysis: Tracking the Shift from Guesswork to Recall

CAMIA operates at the token level, monitoring how the model’s uncertainty changes throughout text generation. This fine-grained analysis distinguishes between low uncertainty caused by repetitive patterns and genuine memorization. By capturing these subtle transitions, CAMIA identifies privacy leaks that other methods overlook.
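
To make the idea of tracking uncertainty over a sequence concrete, the sketch below computes two illustrative signals from per-token losses: the least-squares slope of the loss across positions (a steep drop suggests a shift from guessing to recall) and a mask of tokens that have not appeared earlier in the text (so confidence explained by simple repetition can be set aside). These signals, the helper names, and the sample data are assumptions for illustration. The article does not specify CAMIA's actual features.

```python
from typing import List


def loss_slope(losses: List[float]) -> float:
    """Least-squares slope of per-token loss against token position."""
    n = len(losses)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(losses) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(losses))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


def first_occurrence_mask(tokens: List[str]) -> List[bool]:
    """True where a token has not appeared earlier in the sequence."""
    seen = set()
    mask = []
    for tok in tokens:
        mask.append(tok not in seen)
        seen.add(tok)
    return mask


# Hypothetical tokens and per-token losses for one text.
tokens = ["the", "cat", "sat", "the", "cat"]
losses = [3.0, 2.8, 0.4, 0.2, 0.1]

# Keep only losses on tokens that are new to the sequence, so that
# low loss caused by repetition does not count as evidence of recall.
novel_losses = [l for l, is_new in zip(losses, first_occurrence_mask(tokens)) if is_new]

print(loss_slope(losses))
print(novel_losses)
```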

Empirical Validation: Superior Performance on Benchmark Models

Tests on the MIMIR benchmark across several Pythia and GPT-Neo models demonstrated CAMIA's effectiveness. For instance, against a 2.8-billion-parameter Pythia model on the ArXiv dataset, CAMIA raised the true positive rate from 20.11% to 32.00%, a relative gain of roughly 59%, while holding the false positive rate at just 1%.
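
For readers unfamiliar with the metric, a true positive rate at a fixed false positive rate can be computed as in the sketch below. It picks the score threshold that lets through at most the allowed share of known non-members, then reports the share of true members above that threshold. The function name and the scores are hypothetical, and this is a generic way to compute the metric rather than the benchmark's own evaluation code.

```python
import math
from typing import Sequence


def tpr_at_fpr(member_scores: Sequence[float],
               nonmember_scores: Sequence[float],
               max_fpr: float) -> float:
    """True positive rate when at most `max_fpr` of non-members are flagged.

    Higher scores mean "more likely a training member".
    """
    ranked = sorted(nonmember_scores, reverse=True)
    # Number of non-members allowed above the threshold; the small epsilon
    # guards against floating-point rounding in max_fpr * len(ranked).
    allowed = math.floor(max_fpr * len(ranked) + 1e-9)
    threshold = ranked[allowed] if allowed < len(ranked) else -math.inf
    hits = sum(1 for s in member_scores if s > threshold)
    return hits / len(member_scores)


# Hypothetical attack scores.
nonmembers = list(range(100))               # scores 0..99
members = [99.5, 98.5, 50.0, 97.0, 120.0]

print(tpr_at_fpr(members, nonmembers, max_fpr=0.01))
```

Reporting the rate at a low false positive budget matters because an attack that flags everything would score a perfect true positive rate while being useless in practice.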

Moreover, CAMIA is computationally efficient, capable of processing 1,000 samples in roughly 38 minutes on a single NVIDIA A100 GPU, making it a practical tool for real-world model auditing.

Implications for AI Development and Privacy Protection

This research underscores the urgent need for the AI community to address privacy vulnerabilities inherent in training large-scale models on extensive, uncurated datasets. The authors hope their findings will inspire the creation of enhanced privacy-preserving mechanisms, striking a balance between AI utility and safeguarding user confidentiality.
