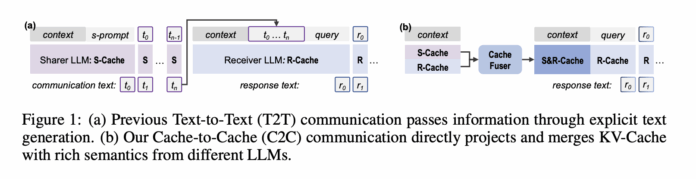

Is it possible for large language models (LLMs) to collaborate effectively without exchanging any textual tokens? A collaborative research effort from Tsinghua University, Infinigence AI, The Chinese University of Hong Kong, Shanghai AI Laboratory, and Shanghai Jiao Tong University introduces an innovative communication framework called Cache-to-Cache (C2C). This approach enables LLMs to share information directly through their key-value (KV) caches, bypassing the traditional reliance on generated text.

Limitations of Text-Based Communication in Multi-LLM Systems

Currently, most multi-LLM architectures depend on text as the medium for inter-model communication. One model generates a textual explanation or response, which another model then reads and interprets as context. While intuitive, this method introduces several inefficiencies:

- Semantic Compression: The rich internal representations held in the KV-Cache must be squeezed into a short natural-language message, so much of the semantic information never leaves the cache.

- Ambiguity of Natural Language: Even with structured protocols, nuances such as HTML tag roles or syntactic markers may be lost or misinterpreted when converted into text, leading to degraded communication fidelity.

- Latency from Token Decoding: Each communication step requires sequential token-by-token decoding, which substantially increases response times, especially during extended analytical interactions.

These challenges raise a fundamental question: can KV-Cache itself serve as a direct communication channel between LLMs?

Exploring KV-Cache as a Communication Medium: Oracle Experiments

To investigate the viability of KV-Cache for inter-model communication, the researchers conducted two oracle-style experiments designed to evaluate its effectiveness.

Cache Enrichment Oracle

This experiment compared three configurations on multiple-choice benchmarks:

- Direct: Prefilling the cache with the question only.

- Few-Shot: Prefilling with exemplars plus the question, resulting in a longer cache.

- Oracle: Prefilling with exemplars and question, then discarding the exemplar portion to keep the cache length equal to the Direct setup, focusing only on the question-aligned cache slice.

The Oracle configuration raised accuracy from 58.42% (Direct) to 62.34% without increasing cache length, while Few-Shot reached 63.39%. This indicates that enriching the question-aligned KV-Cache segment alone improves performance. Further layer-wise analysis revealed that selectively enriching certain layers outperforms enriching all layers, which motivated the gating mechanism used in C2C.
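To make the Oracle configuration concrete, here is a minimal sketch of the slicing step, assuming the cache is a plain array of shape `(num_layers, seq_len, hidden)` with exemplar positions first and question positions last. The function name `oracle_slice` and all sizes are hypothetical, random arrays stand in for real model caches, and positional-encoding details are ignored.

```python
import numpy as np

def oracle_slice(few_shot_cache: np.ndarray, question_len: int) -> np.ndarray:
    """Keep only the trailing, question-aligned positions of a prefilled cache.

    few_shot_cache has shape (num_layers, seq_len, hidden), where the sequence
    is laid out as [exemplar tokens ..., question tokens ...].
    """
    seq_len = few_shot_cache.shape[1]
    if not 0 < question_len <= seq_len:
        raise ValueError("question_len must be in 1..seq_len")
    return few_shot_cache[:, -question_len:, :]

# Hypothetical toy sizes; real caches come from a model's prefill pass.
num_layers, hidden = 4, 8
exemplar_len, question_len = 10, 3

rng = np.random.default_rng(0)
# Few-Shot: cache covers exemplars + question (longer cache).
few_shot_cache = rng.normal(size=(num_layers, exemplar_len + question_len, hidden))
# Direct: cache covers the question only.
direct_cache = rng.normal(size=(num_layers, question_len, hidden))

# Oracle: same length as Direct, but computed with the exemplars in context.
oracle_cache = oracle_slice(few_shot_cache, question_len)
print(oracle_cache.shape == direct_cache.shape)
```

The point of the sketch is only that the Oracle cache occupies the same number of positions as the Direct cache, so any accuracy gain cannot come from a longer context.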

Cache Transformation Oracle

The second experiment tested whether KV-Cache from one model could be transformed to fit the cache space of another. A three-layer multilayer perceptron (MLP) was trained to map KV-Cache from a Qwen3 4B model to a Qwen3 0.6B model. t-SNE visualizations confirmed that the transformed cache resides within the target model’s cache manifold, albeit in a subregion, validating the feasibility of cross-model cache translation.
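As an illustration of the shape of such a mapping, the sketch below runs a forward pass through an MLP with three linear layers that projects per-token cache vectors from a source width to a target width. It is not the researchers' implementation: the layer widths, the ReLU activation, and the helper names `init_mlp` and `mlp_forward` are assumptions, and the weights are random rather than trained.

```python
import numpy as np

def init_mlp(dims, rng):
    """Random weights for an MLP with layer widths dims[0] -> ... -> dims[-1]."""
    params = []
    for d_in, d_out in zip(dims[:-1], dims[1:]):
        w = rng.normal(scale=d_in ** -0.5, size=(d_in, d_out))
        params.append((w, np.zeros(d_out)))
    return params

def mlp_forward(x, params):
    """Apply each linear layer in turn, with ReLU on hidden layers only."""
    for i, (w, b) in enumerate(params):
        x = x @ w + b
        if i < len(params) - 1:
            x = np.maximum(x, 0.0)
    return x

# Hypothetical toy widths: larger source model -> smaller target model.
source_dim, hidden_dim, target_dim = 32, 64, 16
seq_len = 5

rng = np.random.default_rng(0)
mapper = init_mlp([source_dim, hidden_dim, hidden_dim, target_dim], rng)

source_cache = rng.normal(size=(seq_len, source_dim))   # one layer's K (or V)
mapped_cache = mlp_forward(source_cache, mapper)        # now target-sized
print(mapped_cache.shape)
```

In a trained setting the weights would be fitted so that the mapped vectors land inside the target model's cache distribution, which is what the t-SNE check described above is meant to verify.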

Cache-to-Cache (C2C): Direct Semantic Exchange via KV-Cache

Building on these findings, the team formalized the Cache-to-Cache communication protocol, involving a Sharer model and a Receiver model. Both models process the same input during the prefill phase, generating layer-wise KV-Caches. For each Receiver layer, C2C selects a corresponding Sharer layer and applies a specialized C2C Fuser to merge the caches. During decoding, the Receiver generates tokens conditioned on this fused cache rather than its original cache.

Architecture of the C2C Fuser

The C2C Fuser integrates the caches through a residual connection and consists of three key components:

- Projection Module: Concatenates the Sharer and Receiver KV-Cache vectors, followed by a projection layer and a feature fusion layer to blend information.

- Dynamic Weighting Module: Adjusts attention heads dynamically based on input, allowing some heads to prioritize Sharer information.

- Learnable Gate: Implements a per-layer gating mechanism that determines whether to incorporate Sharer context. This gate uses a Gumbel sigmoid during training and becomes binary during inference.
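The three components and the residual connection can be sketched for a single layer as follows. This is a simplified illustration under stated assumptions, not the published architecture: the parameter names (`w_proj`, `w_fuse`, `w_head`, `gate_logit`), the ReLU, the mean-pooled head weighting, and thresholding the gate logit at zero during inference are all hypothetical choices, and the weights are random.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gumbel_sigmoid(logit, temperature, rng):
    """Relaxed binary sample: sigmoid((logit + logistic noise) / temperature)."""
    u = rng.uniform(1e-6, 1.0 - 1e-6)
    noise = np.log(u) - np.log1p(-u)  # Logistic(0, 1) = difference of two Gumbels
    return sigmoid((logit + noise) / temperature)

def fuse_layer(receiver_kv, sharer_kv, params, training=False,
               rng=None, temperature=1.0):
    """Fuse one layer's K (or V) tensors.

    receiver_kv: (heads, seq, d_r); sharer_kv: (heads, seq, d_s).
    Both are assumed to be already token-aligned to the same seq length.
    """
    # 1) Projection: concatenate features, project, then fuse to Receiver width.
    x = np.concatenate([receiver_kv, sharer_kv], axis=-1)
    h = np.maximum(x @ params["w_proj"], 0.0)             # (heads, seq, d_h)
    fused = h @ params["w_fuse"]                          # (heads, seq, d_r)
    # 2) Dynamic weighting: one input-dependent weight in (0, 1) per head.
    head_w = sigmoid(h.mean(axis=1) @ params["w_head"])   # (heads, 1)
    fused = head_w[:, None, :] * fused
    # 3) Learnable gate: relaxed during training, hard 0/1 at inference.
    if training:
        rng = rng if rng is not None else np.random.default_rng()
        gate = gumbel_sigmoid(params["gate_logit"], temperature, rng)
    else:
        gate = float(params["gate_logit"] > 0.0)
    # Residual connection: the Receiver cache is kept and Sharer info is added.
    return receiver_kv + gate * fused

heads, seq, d_r, d_s, d_h = 2, 5, 8, 12, 16  # hypothetical toy sizes
rng = np.random.default_rng(0)
params = {
    "w_proj": rng.normal(scale=0.1, size=(d_r + d_s, d_h)),
    "w_fuse": rng.normal(scale=0.1, size=(d_h, d_r)),
    "w_head": rng.normal(scale=0.1, size=(d_h, 1)),
    "gate_logit": 2.0,
}
receiver_kv = rng.normal(size=(heads, seq, d_r))
sharer_kv = rng.normal(size=(heads, seq, d_s))
fused_kv = fuse_layer(receiver_kv, sharer_kv, params)
print(fused_kv.shape)
```

Because of the residual form, a closed gate leaves the Receiver cache untouched, which is one way a layer can opt out of Sharer context entirely.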

Handling Model Differences

Since Sharer and Receiver models may differ in architecture and size, C2C includes:

- Token Alignment: Receiver tokens are decoded to strings and re-encoded using the Sharer tokenizer, selecting Sharer tokens that maximize string coverage.

- Layer Alignment: A terminal pairing strategy matches top layers first and proceeds backward until the shallower model’s layers are fully aligned.
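Both alignment steps can be illustrated with small pure-Python helpers. This is one possible reading of the descriptions above rather than the released code: `terminal_layer_alignment` and `align_tokens` are hypothetical names, tokenizers are replaced by pre-split lists of strings, and "maximize string coverage" is interpreted as picking the Sharer token with the largest character overlap.

```python
def terminal_layer_alignment(num_receiver_layers, num_sharer_layers):
    """Pair top layers first, walking backward until the shallower model runs out.

    Returns {receiver_layer_index: sharer_layer_index}.
    """
    n = min(num_receiver_layers, num_sharer_layers)
    return {
        num_receiver_layers - 1 - i: num_sharer_layers - 1 - i
        for i in range(n)
    }

def spans(tokens):
    """Character span [start, end) of each token in the concatenated string."""
    out, pos = [], 0
    for tok in tokens:
        out.append((pos, pos + len(tok)))
        pos += len(tok)
    return out

def align_tokens(receiver_tokens, sharer_tokens):
    """For each Receiver token, the index of the Sharer token that covers
    the most of its characters (ties go to the earliest Sharer token)."""
    if "".join(receiver_tokens) != "".join(sharer_tokens):
        raise ValueError("both tokenizations must spell the same string")
    s_spans = spans(sharer_tokens)
    mapping = []
    for r_start, r_end in spans(receiver_tokens):
        overlaps = [
            max(0, min(r_end, s_end) - max(r_start, s_start))
            for s_start, s_end in s_spans
        ]
        mapping.append(max(range(len(overlaps)), key=overlaps.__getitem__))
    return mapping

# A 4-layer Receiver paired with a 6-layer Sharer (toy depths).
layer_map = terminal_layer_alignment(4, 6)

# Two toy tokenizations of the same string "cache-to-cache".
receiver_tokens = ["cache", "-to-", "cache"]
sharer_tokens = ["ca", "che-", "to", "-cache"]
token_map = align_tokens(receiver_tokens, sharer_tokens)

print(layer_map)
print(token_map)
```

With these toy inputs, the bottom two Sharer layers are left unpaired, and each Receiver token is mapped to exactly one Sharer position so the two caches can be fused position by position.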

During training, both LLMs remain frozen; only the C2C module is optimized using next-token prediction loss on Receiver outputs. The main C2C fusers were trained on the first 500,000 samples of the OpenHermes2.5 dataset and evaluated on benchmarks including OpenBookQA, ARC Challenge, MMLU Redux, and C-Eval.

Performance Gains: Accuracy and Latency Improvements

Testing across various Sharer-Receiver pairs from Qwen2.5, Qwen3, Llama3.2, and Gemma3 families demonstrated consistent benefits of C2C over traditional text-based communication:

- C2C improved average accuracy by approximately 8.5% to 10.5% compared to individual models.

- It outperformed text-based communication by 3% to 5% on average.

- C2C ran roughly twice as fast as text communication on average (about 50% lower latency), because it eliminates the token-by-token decoding of an intermediate message.

For instance, using Qwen3 0.6B as Receiver and Qwen2.5 0.5B as Sharer on the MMLU Redux benchmark, the Receiver alone scored 35.53%, text-to-text communication reached 41.03%, and C2C achieved 42.92%. The average query time for text communication was 1.52 units, whereas C2C maintained a low latency of 0.40 units, close to single-model inference speed. Similar trends were observed on OpenBookQA, ARC Challenge, and C-Eval.
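The deltas implied by this example can be checked with a few lines of arithmetic. The snippet uses only the figures quoted above; the variable names are illustrative, and latency is left in the same unspecified units as the text.

```python
# Figures quoted above for Qwen3 0.6B (Receiver) + Qwen2.5 0.5B (Sharer), MMLU Redux.
receiver_only, text_to_text, c2c = 35.53, 41.03, 42.92   # accuracy, %
text_latency, c2c_latency = 1.52, 0.40                   # average query time

gain_over_receiver = round(c2c - receiver_only, 2)   # percentage points
gain_over_text = round(c2c - text_to_text, 2)        # percentage points
speedup = round(text_latency / c2c_latency, 2)       # ratio of query times

print(f"C2C vs. Receiver alone: +{gain_over_receiver:.2f} points")
print(f"C2C vs. text-to-text:   +{gain_over_text:.2f} points")
print(f"Latency ratio:          {speedup:.2f}x")
```

For this particular pair, C2C gains 7.39 points over the Receiver alone and 1.89 points over text-to-text, with a latency ratio of about 3.8x. These differ from the averages listed above, which are aggregated over many model pairs and benchmarks, so an individual configuration can fall on either side of them.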

On the LongBenchV1 dataset, C2C consistently outperformed text communication across all sequence length ranges. For sequences between 0 and 4,000 tokens, text communication scored 29.47%, while C2C reached 36.64%. Performance gains persisted for longer sequences up to 8,000 tokens and beyond.

Summary of Key Insights

- Cache-to-Cache Communication: Enables direct information transfer between LLMs via KV-Cache, eliminating the need for intermediate text and reducing semantic loss and token bottlenecks.

- Oracle Studies: Demonstrated that enriching the question-aligned cache slice enhances accuracy without increasing cache length, and that KV-Cache can be mapped across models through learned transformations.

- C2C Fuser Design: Combines projection, dynamic attention weighting, and learnable gating in a residual framework, allowing selective integration of Sharer semantics without disrupting Receiver representations.

- Consistent Improvements: Across multiple model families, C2C delivers 8.5% to 10.5% higher average accuracy than single models, 3% to 5% gains over text communication, and roughly 2x faster inference than text communication.

Concluding Remarks

Cache-to-Cache communication reframes multi-LLM collaboration as a direct semantic fusion problem rather than a prompt engineering task. By projecting and fusing KV-Caches under a learnable gate, C2C draws on the specialized knowledge embedded in each model while avoiding the latency and information bottlenecks inherent in text generation. With the reported accuracy gains and latency reductions, C2C is a promising step toward native KV-Cache-based cooperation among large language models.