The Baidu AI Research team has unveiled ERNIE-4.5-21B-A3B-Thinking, a large language model engineered for efficient reasoning, long-context comprehension, and tool integration. As a member of the ERNIE-4.5 series, the model uses a Mixture-of-Experts (MoE) architecture with 21 billion total parameters, of which only about 3 billion are activated per token. Because roughly one-seventh of the weights take part in each forward pass for a given token, per-token compute is far lower than for a dense model of the same size, while the full parameter pool remains available for specialization. Released under the Apache-2.0 license, ERNIE-4.5-21B-A3B-Thinking is available for both academic research and commercial applications.

Innovative Architecture: The MoE Backbone

At the core of ERNIE-4.5-21B-A3B-Thinking lies a Mixture-of-Experts framework in which a routing mechanism activates only a subset of the 21 billion parameters, about 3 billion, for each token. This selective activation reduces computational demands while allowing specialized experts to focus on different aspects of the input. To keep expert utilization diverse and training stable, the model incorporates techniques such as a router orthogonalization loss and a token-balanced loss.
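To make the routing idea concrete, here is a minimal sketch of top-k expert selection for a single token. It is not Baidu's implementation: the expert count, the value of `top_k`, the toy experts, and all function names are assumptions chosen for illustration, and the auxiliary losses mentioned above are omitted.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_logits, top_k):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_layer(token, experts, router_logits, top_k):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    gates = route_token(router_logits, top_k)
    out = [0.0] * len(token)
    for idx, weight in gates.items():
        expert_out = experts[idx](token)
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out, gates

# Toy setup (hypothetical): 8 experts, each of which just scales its input.
NUM_EXPERTS, TOP_K = 8, 2
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(NUM_EXPERTS)]
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
output, gates = moe_layer([1.0, -2.0], experts, logits, TOP_K)
print("experts used:", sorted(gates), "output:", output)
```

Only the `top_k` selected experts are evaluated, which is where the compute saving comes from. In a real model the router logits come from a learned linear layer applied to the token's hidden state rather than from random numbers.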

This architecture strikes a balance between smaller dense models and extremely large-scale systems. The Baidu team hypothesizes that activating around 3 billion parameters per token represents an optimal trade-off between reasoning power and deployment efficiency, a sweet spot that maximizes performance without excessive resource consumption.
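The trade-off can be put in rough numbers. The sketch below uses the parameter counts from the article plus two assumptions that are not from the source: the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass, and 2 bytes per parameter (bf16) for weight storage.

```python
TOTAL_PARAMS = 21e9   # total parameters (from the article)
ACTIVE_PARAMS = 3e9   # parameters activated per token (from the article)

FLOPS_PER_PARAM = 2   # assumption: rule-of-thumb forward-pass cost per active parameter
BYTES_PER_PARAM = 2   # assumption: bf16 weights

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
compute_ratio = (FLOPS_PER_PARAM * TOTAL_PARAMS) / (FLOPS_PER_PARAM * ACTIVE_PARAMS)
weight_memory_gb = TOTAL_PARAMS * BYTES_PER_PARAM / 1e9

print(f"active fraction per token: {active_fraction:.1%}")                      # 14.3%
print(f"dense-21B vs. sparse per-token compute: {compute_ratio:.0f}x")          # 7x
print(f"weight memory, all experts resident: {weight_memory_gb:.0f} GB")        # 42 GB
```

Sparse activation reduces per-token compute but not weight memory: every expert still has to be loaded, because any of them may be selected for the next token.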

Mastering Long-Context Reasoning with 128K Tokens

One of the standout features of ERNIE-4.5-21B-A3B-Thinking is its 128K-token context window. This capacity lets the model analyze lengthy documents, carry out multi-step logical reasoning, and synthesize information from large, structured inputs such as long research articles or multi-file software projects.

This extended context handling is achieved through a novel approach of progressively scaling Rotary Position Embeddings (RoPE), where the frequency base is gradually increased from 10,000 to 500,000 during training. Complementary optimizations like FlashMask attention and memory-efficient scheduling further ensure that processing such long sequences remains computationally practical.
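The effect of raising the RoPE base can be illustrated with a short calculation. In the sketch below, the standard RoPE inverse-frequency formula is used; the head dimension of 128 and the geometric interpolation schedule are assumptions for illustration, since the article does not state either.

```python
import math

def rope_inv_freq(head_dim, base):
    """Standard RoPE inverse frequencies: base^(-2i/d) for i = 0 .. d/2 - 1."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]

def longest_wavelength(head_dim, base):
    """Token distance after which the slowest-rotating pair completes one full turn."""
    return 2 * math.pi / rope_inv_freq(head_dim, base)[-1]

def base_at(step, total_steps, start=10_000.0, end=500_000.0):
    """Hypothetical schedule: geometric interpolation of the base over training."""
    t = step / total_steps
    return start * (end / start) ** t

HEAD_DIM = 128          # assumption, not stated in the article
CONTEXT = 128 * 1024    # 128K tokens

for base in (10_000.0, 500_000.0):
    wavelength = longest_wavelength(HEAD_DIM, base)
    covers = wavelength > CONTEXT
    print(f"base={base:>9.0f}  longest wavelength ~{wavelength:,.0f} tokens  covers 128K: {covers}")
```

Under these assumptions, the slowest rotation with a base of 10,000 wraps around well before 128K tokens, so distant positions become ambiguous, whereas a base of 500,000 stretches the longest wavelength far beyond the context window.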

Comprehensive Training Pipeline for Enhanced Reasoning

ERNIE-4.5-21B-A3B-Thinking follows a structured multi-phase training regimen:

- Stage I – Text-Only Pretraining: Establishes the foundational language understanding, starting with an 8K token context and scaling up to 128K tokens.

- Stage II – Vision Training: Omitted in this text-centric variant.

- Stage III – Multimodal Training: Not applied, as this model focuses exclusively on textual data.

After pretraining, the model undergoes targeted fine-tuning on reasoning-centric tasks. This includes Supervised Fine-Tuning (SFT) across domains such as mathematics, logic, programming, and scientific inquiry. Subsequently, Progressive Reinforcement Learning (PRL) refines the model’s capabilities, starting with logical reasoning, then expanding to mathematical problem-solving and coding, and finally encompassing broader reasoning challenges. The process is further stabilized by Unified Preference Optimization (UPO), which combines preference learning with Proximal Policy Optimization (PPO) to enhance alignment and mitigate reward exploitation.
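The article does not describe how UPO combines preference learning with PPO, so the sketch below shows only the standard PPO clipped surrogate term for a single sample. The function name and the clip value of 0.2 are illustrative assumptions, not details from the source.

```python
import math

def ppo_clipped_term(logp_new, logp_old, advantage, clip_eps=0.2):
    """Standard PPO clipped surrogate for one sample (to be maximized)."""
    ratio = math.exp(logp_new - logp_old)              # pi_new(a|s) / pi_old(a|s)
    clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    # Taking the minimum makes the objective pessimistic: the policy gains nothing
    # extra by moving the ratio beyond the clip range in the rewarded direction.
    return min(ratio * advantage, clipped * advantage)

# The policy has raised the action's probability by 50% and the advantage is positive:
# the credited gain is capped at (1 + clip_eps) * advantage.
capped = ppo_clipped_term(math.log(1.5), 0.0, advantage=1.0)
print(round(capped, 6))
```

Capping the gain from any single update is one standard way to limit how aggressively a policy can chase a flawed reward signal, which is the kind of reward exploitation the training pipeline aims to mitigate.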

Empowering Reasoning Through Tool Integration

ERNIE-4.5-21B-A3B-Thinking supports structured tool and function calling, enabling it to interact with external computational resources or data retrieval systems. This feature is particularly useful for applications such as program synthesis, symbolic logic processing, and multi-agent coordination workflows. Developers can run the model with inference stacks such as vLLM, Transformers 4.54+, and FastDeploy to use these capabilities.
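On the application side, structured tool calling usually comes down to three steps: describe the tools to the model, parse the call it emits, and return the result as a message. The sketch below shows that loop with the standard library only. The JSON-schema layout, the `get_weather` stub, the message roles, and the shape of the model output are all assumptions for illustration; the exact format depends on the serving stack and the model's chat template.

```python
import json

def get_weather(city: str) -> dict:
    """Hypothetical tool: a stub standing in for a real weather API."""
    return {"city": city, "forecast": "sunny"}

# Registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}

# Tool description passed to the model (assumed JSON-schema style layout).
TOOL_SCHEMAS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Return a short weather forecast for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]

def dispatch(tool_call_json: str) -> str:
    """Parse one tool call emitted by the model and run the matching function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    args = call.get("arguments", {})
    if isinstance(args, str):          # some stacks send arguments as a JSON string
        args = json.loads(args)
    return json.dumps(TOOLS[name](**args))

# Assumed shape of a tool call produced by the model.
model_output = '{"name": "get_weather", "arguments": {"city": "Beijing"}}'
tool_message = {"role": "tool", "content": dispatch(model_output)}
print(tool_message)
```

The `tool_message` would then be appended to the conversation so the model can use the result in its next turn. Rejecting unknown tool names explicitly, rather than raising, lets the model see the error and retry.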

By combining long-context reasoning with real-time API calls, the model is well-suited for enterprise environments requiring complex, context-aware decision-making and external data access.

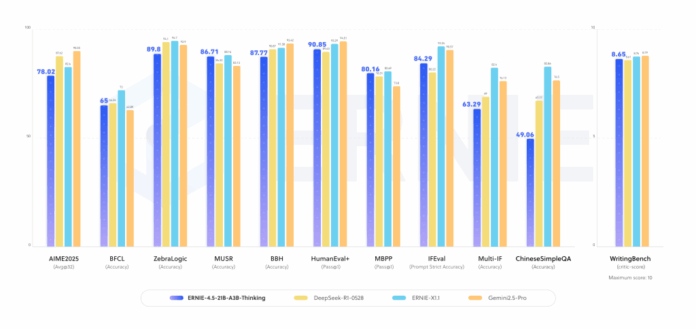

Benchmark Performance Highlights

ERNIE-4.5-21B-A3B-Thinking is reported to improve on several categories of reasoning benchmarks:

- Improved accuracy on multi-step reasoning datasets that demand extended chains of logical inference.

- Competitive results compared to larger dense models on STEM-related reasoning tasks, such as advanced mathematics and scientific question answering.

- Consistent and coherent text generation and academic synthesis, benefiting from its extensive context training.

These outcomes underscore the effectiveness of the MoE design in enhancing reasoning specialization without the need for trillion-parameter dense models.

Positioning Among Contemporary Reasoning LLMs

ERNIE-4.5-21B-A3B-Thinking enters a competitive field alongside models like OpenAI’s o3, Anthropic’s Claude 4, DeepSeek-R1, and Qwen3. While many rivals rely on dense architectures or activate more parameters per token, Baidu’s approach offers distinct advantages:

- Efficient Scalability: Sparse activation lowers computational costs while expanding expert capacity.

- Native Long-Context Support: The 128K token context window is integrated during training rather than added post hoc.

- Open Licensing: The Apache-2.0 license facilitates easier adoption in commercial settings.

Conclusion: Efficient Deep Reasoning for Practical Deployment

ERNIE-4.5-21B-A3B-Thinking exemplifies how sophisticated reasoning can be achieved without resorting to massive dense parameter counts. By leveraging an efficient MoE routing mechanism, training on ultra-long contexts, and enabling tool integration, Baidu’s model offers a compelling balance between cutting-edge research capabilities and real-world deployment feasibility.