Understanding Why Large Language Models Confidently Generate Incorrect Information

Advanced language models frequently produce incorrect answers with unwarranted confidence. For instance, when asked, “What is [name]’s birthday? If you know, please reply with DD-MM,” a model might respond with “03-07,” “15-06,” or “01-01” on different attempts. None of these dates is accurate, even though the prompt explicitly asks for an answer only if the model knows it.

Why Do AI Models Hallucinate? Unpacking the Root Causes

This tendency, commonly referred to as hallucination, poses a significant obstacle to building reliable AI systems. Even as these models become more sophisticated, they often generate convincing but false information instead of acknowledging uncertainty. Recent studies reveal that hallucinations are not random glitches but rather predictable consequences of the way language models are trained and assessed.

Much like students facing difficult exam questions, AI models learn that guessing confidently, even when unsure, can earn higher evaluation scores than admitting ignorance. This insight helps explain why hallucinations persist despite ongoing improvements in AI capabilities.

Examining the Incentive Structure: How Evaluation Methods Encourage Guessing

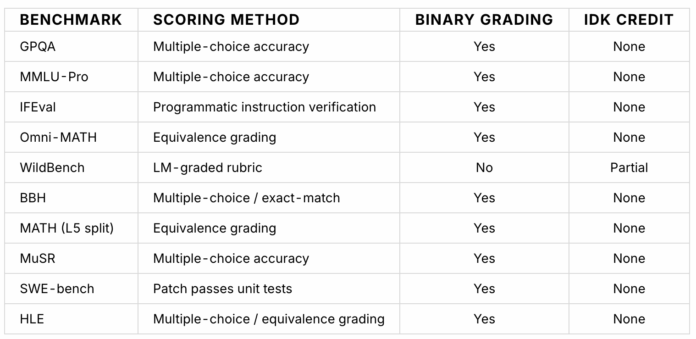

The analogy of students taking tests provides a clear framework for understanding AI behavior. When faced with difficult questions, students often prefer to guess answers rather than leave them blank, especially in scoring systems that award full points for correct responses but no partial credit for uncertainty or skipped questions.

Language models are subjected to similar evaluation criteria. Their performance is measured against benchmarks that reward accuracy and give no credit for hesitation or expressed uncertainty. Consequently, models that always provide a confident answer, even if it is sometimes wrong, tend to outscore those that express doubt or refrain from answering.
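The incentive can be made concrete with a small expected-score calculation. This is a minimal sketch, assuming a benchmark that awards 1 point for a correct answer and 0 for both wrong answers and abstentions; the function name, the confidence values, and the 0.5 cutoff used by the cautious policy are hypothetical and chosen only for illustration.

```python
def binary_grade_expectation(p_correct: float, answers: bool) -> float:
    """Expected points on one question under accuracy-only grading.

    A correct answer earns 1 point; a wrong answer and an abstention both earn 0.
    """
    if not 0.0 <= p_correct <= 1.0:
        raise ValueError("p_correct must be a probability between 0 and 1")
    return p_correct if answers else 0.0


# Hypothetical per-question chances of being right (illustrative values only).
confidences = [0.9, 0.6, 0.3, 0.1]

# Policy A: always answer, no matter how unsure.
always_guess = sum(binary_grade_expectation(p, True) for p in confidences)

# Policy B: answer only when at least 50% sure, otherwise abstain.
cautious = sum(binary_grade_expectation(p, p >= 0.5) for p in confidences)

print(f"always guess: {always_guess:.2f}")
print(f"cautious:     {cautious:.2f}")
```

Because a wrong answer costs nothing under this grading, every guess with a nonzero chance of being right adds to the expected total, so the always-guess policy can never score lower than the cautious one.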

Implications for AI Development and Trustworthiness

This dynamic highlights a fundamental challenge in AI design: balancing the drive for accuracy with the need for transparency about uncertainty. As of 2024, efforts to mitigate hallucinations include refining training datasets, incorporating uncertainty estimation techniques, and developing evaluation metrics that reward honesty over blind confidence.

For example, some emerging models integrate probabilistic reasoning to better gauge when to withhold answers, improving reliability in applications like medical diagnosis or legal advice where misinformation can have serious consequences.
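One simple way to picture withholding an answer is a confidence threshold. The sketch below assumes the system already has a probability estimate for each candidate answer; how such estimates are obtained from a real model is outside its scope. The function name, the 0.75 threshold, and the probabilities are all hypothetical.

```python
ABSTAIN = "I don't know"


def answer_or_abstain(candidates: dict[str, float], threshold: float = 0.75) -> str:
    """Return the most probable candidate, or abstain if it falls below the threshold."""
    if not candidates:
        return ABSTAIN
    best_answer, best_prob = max(candidates.items(), key=lambda item: item[1])
    return best_answer if best_prob >= threshold else ABSTAIN


# Hypothetical probabilities for the birthday example: no date stands out.
birthday_guesses = {"03-07": 0.40, "15-06": 0.35, "01-01": 0.25}
print(answer_or_abstain(birthday_guesses))

# Hypothetical case where one candidate clearly dominates.
print(answer_or_abstain({"Paris": 0.97, "Lyon": 0.03}))
```

In high-stakes settings the threshold would presumably be set higher, trading some coverage for fewer confidently stated errors.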

Moving Forward: Strategies to Reduce Hallucinations in Language Models

Addressing hallucination requires rethinking both training paradigms and evaluation frameworks. Introducing mechanisms that penalize overconfident errors and reward cautious responses can shift model behavior toward greater accuracy and trustworthiness.
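To illustrate how a penalty changes the incentive, here is a minimal sketch of one possible scoring rule: 1 point for a correct answer, a deduction for a wrong answer, and 0 for abstaining. The rule, the function names, the penalty of 1 point, and the confidence values are assumptions made for illustration, not a description of any specific benchmark.

```python
def expected_score(p_correct: float, answers: bool, wrong_penalty: float) -> float:
    """Expected points: +1 if right, -wrong_penalty if wrong, 0 when abstaining."""
    if not answers:
        return 0.0
    return p_correct - (1.0 - p_correct) * wrong_penalty


def break_even_confidence(wrong_penalty: float) -> float:
    """Confidence above which answering has a positive expected score.

    Solving p - (1 - p) * penalty > 0 for p gives p > penalty / (1 + penalty).
    """
    return wrong_penalty / (1.0 + wrong_penalty)


# Hypothetical per-question chances of being right (illustrative values only).
confidences = [0.9, 0.6, 0.3, 0.1]
penalty = 1.0  # assumed: a wrong answer costs as much as a right one earns
cutoff = break_even_confidence(penalty)

# Policy A: always answer. Policy B: answer only above the break-even confidence.
total_always = sum(expected_score(p, True, penalty) for p in confidences)
total_cautious = sum(expected_score(p, p > cutoff, penalty) for p in confidences)

print(f"break-even confidence: {cutoff:.2f}")
print(f"always guess: {total_always:.2f}")
print(f"cautious:     {total_cautious:.2f}")
```

Once wrong answers carry a cost, the policy that abstains below the break-even confidence comes out ahead, which is the shift in behavior the penalty is meant to produce. A larger penalty raises the break-even point; a penalty of 3, for instance, would require more than 75% confidence before answering pays off.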

Moreover, leveraging human-in-the-loop approaches, where AI outputs are reviewed and corrected by experts, can enhance model calibration and reduce the frequency of confidently stated falsehoods.

Ultimately, fostering AI systems that acknowledge their limitations will be crucial for building user confidence and ensuring responsible deployment across diverse domains.