Introducing Olmo 3: A Fully Transparent Open-Source LLM Family from the Allen Institute for AI

The Allen Institute for AI (AI2) has released Olmo 3, an open-source large language model (LLM) family that exposes its entire development pipeline. The release spans raw datasets, training code, intermediate checkpoints, and deployment-ready models, so researchers and developers can inspect and reproduce each stage.

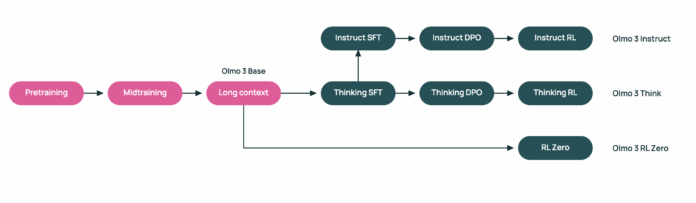

Olmo 3 Model Variants and Architecture

Olmo 3 comprises a suite of dense transformer models available in two sizes: 7 billion and 32 billion parameters. The lineup features four main variants: Olmo 3-Base, Olmo 3-Think, Olmo 3-Instruct, and Olmo 3-RL Zero. Both the 7B and 32B models support an extensive context window of 65,536 tokens and are trained using a consistent multi-stage curriculum, ensuring uniformity across the family.

Dolma 3: The Foundation Dataset for Olmo 3

Olmo 3 is trained on the Dolma 3 data suite, a curated collection divided into three subsets, one for each stage of training:

- Dolma 3 Mix: A massive 5.9 trillion token dataset combining diverse sources such as web text, scientific papers, and open-source code repositories.

- Dolma 3 Dolmino Mix: A refined 100 billion token subset emphasizing complex tasks like mathematics, coding, instruction following, and critical reasoning.

- Dolma 3 Longmino Mix: Focused on long-form content, this subset adds 50 billion tokens for the 7B model and 100 billion tokens for the 32B model, featuring extensive scientific documents processed through the olmOCR pipeline.

This staged data curriculum is what extends Olmo 3 to a 65,536-token context length while keeping training stable and performance intact.
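As a rough illustration of the token budget this curriculum implies, the sketch below adds up the three subset sizes quoted above for each model size. It assumes every subset is consumed exactly once in full, which the article does not state; `total_tokens` and `stage_shares` are hypothetical helper names introduced only for this example.

```python
# Subset sizes in tokens, as quoted in the article.
DOLMA3_MIX = 5_900_000_000_000        # Dolma 3 Mix (pretraining)
DOLMINO_MIX = 100_000_000_000         # Dolma 3 Dolmino Mix (mid-training)
LONGMINO_MIX = {                      # Dolma 3 Longmino Mix (long context)
    "7B": 50_000_000_000,
    "32B": 100_000_000_000,
}


def total_tokens(model_size: str) -> int:
    """Sum the three stages for one model size.

    Assumption: each subset is seen once, in full.
    """
    return DOLMA3_MIX + DOLMINO_MIX + LONGMINO_MIX[model_size]


def stage_shares(model_size: str) -> dict:
    """Return each stage's fraction of the total token budget."""
    total = total_tokens(model_size)
    return {
        "Dolma 3 Mix": DOLMA3_MIX / total,
        "Dolmino Mix": DOLMINO_MIX / total,
        "Longmino Mix": LONGMINO_MIX[model_size] / total,
    }


if __name__ == "__main__":
    for size in ("7B", "32B"):
        print(size, total_tokens(size), stage_shares(size))
```

Under that assumption, Dolma 3 Mix accounts for roughly 97% of the tokens for both model sizes, with the two later stages making up the remainder.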

High-Performance Training Infrastructure

Olmo 3 is trained on clusters of NVIDIA H100 GPUs. The 7B Olmo 3-Base model is pretrained on 1,024 H100 devices at a throughput of approximately 7,700 tokens per device per second. Later phases use 128 H100s for mid-stage Dolmino training and 256 H100s for the Longmino long-context extension.
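To put the throughput figure in perspective, here is a back-of-the-envelope estimate of aggregate cluster throughput and of the wall-clock time needed for the 5.9 trillion token Dolma 3 Mix. It assumes the quoted per-device rate is sustained for the whole run and that each token is processed once; neither is stated in the article, and real runs include restarts, evaluation, and checkpointing. The function names are hypothetical.

```python
DEVICES = 1_024                       # H100s used for 7B pretraining
TOKENS_PER_DEVICE_PER_SEC = 7_700     # approximate figure from the article
PRETRAIN_TOKENS = 5_900_000_000_000   # Dolma 3 Mix size
SECONDS_PER_DAY = 86_400


def cluster_throughput(devices: int, per_device: int) -> int:
    """Aggregate tokens per second across the cluster."""
    return devices * per_device


def days_to_process(tokens: int, devices: int, per_device: int) -> float:
    """Idealized wall-clock days, assuming constant throughput."""
    seconds = tokens / cluster_throughput(devices, per_device)
    return seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    rate = cluster_throughput(DEVICES, TOKENS_PER_DEVICE_PER_SEC)
    days = days_to_process(PRETRAIN_TOKENS, DEVICES, TOKENS_PER_DEVICE_PER_SEC)
    print(f"{rate:,} tokens/s, about {days:.1f} days")
```

With these inputs the cluster processes about 7.9 million tokens per second, and the idealized estimate comes out a little under nine days. Treat it as a lower bound on wall-clock time, not a reported figure.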

Benchmarking Olmo 3 Base Models

Olmo 3-Base 32B stands out as a top-tier open-source base model, demonstrating competitive or superior performance compared to other prominent open-weight models like Qwen 2.5 and Gemma 3. Across a broad spectrum of benchmarks, Olmo 3-Base 32B consistently ranks near or above its peers, all while maintaining full transparency of its data and training methodologies.

Olmo 3-Think: Advanced Reasoning Capabilities

Building on the base models, the Olmo 3-Think variants (7B and 32B) are optimized for reasoning tasks. They go through a three-phase post-training regimen: supervised fine-tuning (SFT), Direct Preference Optimization (DPO), and Reinforcement Learning with Verifiable Rewards (RLVR) within the OlmoRL framework. Notably, Olmo 3-Think 32B narrows the performance gap with Qwen 3 32B reasoning models while using approximately six times fewer training tokens.
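The article does not describe how OlmoRL scores completions. As a minimal sketch of what a "verifiable" reward means in RLVR, the example below gives a reward of 1.0 only when the model's final answer can be checked programmatically against a reference. It assumes math answers are wrapped in `\boxed{...}`, a common convention that is an assumption here; `extract_final_answer` and `verifiable_reward` are hypothetical names, not part of any Olmo tooling.

```python
import re
from typing import Optional

# Matches \boxed{...} with no nested braces inside (illustrative only).
_BOXED = re.compile(r"\\boxed\{([^{}]*)\}")


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the contents of the last boxed answer, or None if absent."""
    matches = _BOXED.findall(completion)
    return matches[-1].strip() if matches else None


def verifiable_reward(completion: str, reference: str) -> float:
    """Binary reward: 1.0 if the extracted answer equals the reference."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    return 1.0 if answer == reference.strip() else 0.0


if __name__ == "__main__":
    good = "2 + 40 = 42, so the answer is \\boxed{42}"
    bad = "I think it is \\boxed{41}"
    print(verifiable_reward(good, "42"), verifiable_reward(bad, "42"))
```

Because the reward comes from a deterministic check instead of a learned preference model, it can be audited and reproduced, which is the property that distinguishes this phase from the DPO step that precedes it.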

Olmo 3-Instruct: Tailored for Conversational AI and Tool Integration

Olmo 3-Instruct 7B is fine-tuned for fast instruction following, multi-turn dialogue, and tool use. Starting from Olmo 3-Base 7B, it is trained with the Dolci Instruct dataset and pipeline, which comprises supervised fine-tuning, DPO, and RLVR tailored for conversational and function-calling tasks. This variant reportedly matches or surpasses open models such as Qwen 2.5, Gemma 3, and Llama 3.1, and competes closely with Qwen 3 models on various instruction and reasoning benchmarks.

Olmo 3-RL Zero: A Clean Slate for Reinforcement Learning Research

Designed for researchers focused on reinforcement learning (RL) with language models, Olmo 3-RL Zero 7B offers a fully open RL training pathway. It is built atop Olmo 3-Base and utilizes Dolci RL Zero datasets that are carefully decontaminated to exclude overlap with Dolma 3 pretraining data. This ensures a clean separation between pretraining and RL data, facilitating rigorous RLVR research in domains like mathematics, coding, and instruction following.
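The article does not say how the decontamination is performed. One common approach is n-gram overlap filtering, sketched below as an assumption and not as AI2's actual method: any RL prompt that shares a word-level n-gram with the pretraining corpus is dropped. The names `ngrams` and `decontaminate` and the default `n=8` are illustrative choices.

```python
from typing import Iterable, List, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Lowercased, whitespace-tokenized word n-grams of a text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def decontaminate(
    rl_prompts: Iterable[str],
    pretraining_docs: Iterable[str],
    n: int = 8,
) -> List[str]:
    """Keep only prompts that share no n-gram with the pretraining docs.

    Prompts shorter than n tokens have no n-grams and are always kept.
    """
    seen: Set[Tuple[str, ...]] = set()
    for doc in pretraining_docs:
        seen |= ngrams(doc, n)
    return [p for p in rl_prompts if ngrams(p, n).isdisjoint(seen)]


if __name__ == "__main__":
    pretrain = ["what is the sum of two and three"]
    prompts = [
        "Compute: what is the sum of two and three",
        "Find the derivative of x squared plus one",
    ]
    print(decontaminate(prompts, pretrain, n=4))
```

At the scale of a multi-trillion-token corpus, holding every n-gram in an in-memory set is not practical; a real pipeline would need hashing or an index, which this sketch leaves out.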

Comparative Overview of Olmo 3 Variants

| Model Variant | Training Data | Main Application | Competitive Position |
|---|---|---|---|
| Olmo 3 Base 7B | Dolma 3 Mix, Dolma 3 Dolmino Mix, Dolma 3 Longmino Mix | General-purpose foundation model with long-context reasoning, coding, and math capabilities | Robust open 7B base, foundation for advanced variants, competitive with leading open 7B models |
| Olmo 3 Base 32B | Same as 7B with extended Longmino tokens | High-performance base for research, long-context tasks, and RL applications | Top open 32B base, rivals Qwen 2.5 32B and Gemma 3 27B, outperforms Marin, Apertus, LLM360 |
| Olmo 3 Think 7B | Olmo 3 Base 7B + Dolci Think SFT, DPO, RL | Reasoning-centric 7B model with internal thought tracing | Efficient open reasoning model enabling chain-of-thought and RL research on modest hardware |
| Olmo 3 Think 32B | Olmo 3 Base 32B + Dolci Think SFT, DPO, RL | Flagship reasoning model with extended thinking capabilities | Strongest open reasoning model, competitive with Qwen 3 32B using 6x fewer tokens |
| Olmo 3 Instruct 7B | Olmo 3 Base 7B + Dolci Instruct SFT, DPO, RL | Instruction following, conversational AI, function calling, tool integration | Outperforms Qwen 2.5, Gemma 3, Llama 3.1; narrows gap to Qwen 3 at similar scale |
| Olmo 3 RL Zero 7B | Olmo 3 Base 7B + Dolci RLZero datasets (decontaminated) | Clean RLVR research on math, code, instruction, and mixed tasks | Fully open RL pathway enabling rigorous benchmarking on clean data |

Essential Highlights of Olmo 3

- Complete Transparency: Olmo 3 offers an end-to-end open pipeline, from dataset creation with Dolma 3, through multi-stage pretraining and post-training with Dolci, to reinforcement learning and evaluation tools, fostering reproducible and debuggable LLM research.

- Large Context Windows: Both 7B and 32B models support a 65,536-token context length, enabled by the staged training curriculum.

- Competitive Open Models: Olmo 3 Base 32B ranks among the best open base models, while Olmo 3 Think 32B leads in open reasoning models, achieving high performance with significantly fewer training tokens.

- Specialized Variants for Diverse Tasks: Olmo 3 Instruct excels in conversational and tool-using scenarios, and Olmo 3 RL Zero provides a clean, open framework for reinforcement learning research.

Final Thoughts

Olmo 3 is a significant step in open-source LLM development because it applies transparency to every stage, from data curation and training to evaluation and reinforcement learning. This openness addresses common challenges related to data quality, long-context training, and reasoning-focused RL, and gives future research a reproducible foundation to build on. By raising the bar for reproducibility and clarity, Olmo 3 makes large language model research more accessible and easier to trust.