Alibaba Unveils Qwen3-ASR-Flash: A New Benchmark in AI Speech Transcription

Alibaba has introduced Qwen3-ASR-Flash, its latest AI speech recognition model. Built on the Qwen3-Omni architecture and trained on tens of millions of hours of diverse speech recordings, the model is designed to stay accurate even in challenging acoustic conditions and with complex linguistic nuances.

Outstanding Accuracy Across Languages and Dialects

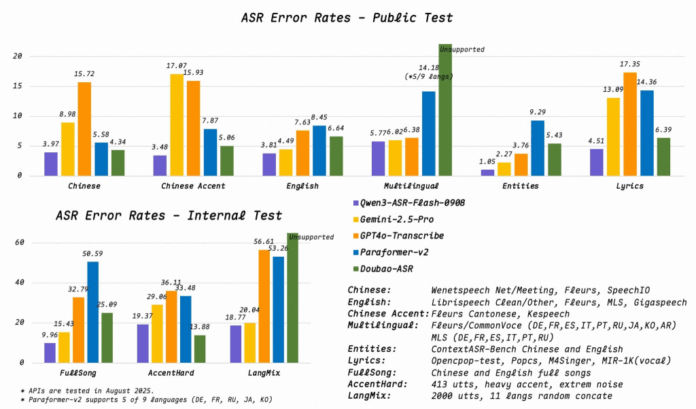

Evaluations conducted in August 2025 show Qwen3-ASR-Flash outperforming leading competitors. In a public benchmark test for standard Chinese, the model achieved a word error rate (WER) of 3.97%, well below Gemini-2.5-Pro’s 8.98% and GPT-4o-Transcribe’s 15.72%. This points to strong proficiency in Mandarin transcription.

The model also performs well on Chinese dialects, registering an error rate of just 3.48%. Its English transcription is similarly strong, with a WER of 3.81% compared with 7.63% for Gemini-2.5-Pro and 8.45% for GPT-4o-Transcribe. This accuracy across multiple languages and accents underscores its versatility and robustness.
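For readers unfamiliar with the metric, the following is a minimal sketch of how a word error rate is typically computed: the word-level edit distance between a reference transcript and the system output, divided by the number of reference words. The function names (`edit_distance`, `wer`, `relative_reduction`) are illustrative and are not taken from Alibaba's evaluation code, and simple whitespace tokenization is an assumption; Chinese benchmarks are commonly scored per character rather than per whitespace-separated word.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))  # distances for an empty reference prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]  # i deletions turn the first i reference tokens into nothing
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference token missing in hypothesis
                curr[j - 1] + 1,     # insertion: extra token in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1]


def wer(reference, hypothesis):
    """Word error rate using whitespace tokenization (an assumption)."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref, hyp) / len(ref)


def relative_reduction(baseline, improved):
    """Fraction by which `improved` lowers the `baseline` error rate."""
    return (baseline - improved) / baseline


if __name__ == "__main__":
    # One substitution (sat -> sit) and one deletion (the) over six reference words.
    print(wer("the cat sat on the mat", "the cat sit on mat"))
    # Reported Chinese figures: 8.98% for Gemini-2.5-Pro vs 3.97% for Qwen3-ASR-Flash.
    print(relative_reduction(8.98, 3.97))
```

Applied to the reported Chinese figures, going from 8.98% to 3.97% is a relative error reduction of roughly 56%, which is often a more informative comparison than the raw percentage-point gap.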

Revolutionizing Music Transcription

One of the most challenging tasks for speech recognition systems is accurately transcribing song lyrics. Qwen3-ASR-Flash achieved a 4.51% error rate on lyric recognition tests. In internal assessments on full-length songs, it recorded a WER of 9.96%, far lower than Gemini-2.5-Pro’s 32.79% and GPT-4o-Transcribe’s 58.59%. These results open new possibilities for applications in music analytics and entertainment technology.

Innovative Contextual Biasing for Enhanced Customization

Beyond raw accuracy, Qwen3-ASR-Flash introduces a novel approach to contextual biasing that simplifies user interaction. Unlike traditional systems that require meticulously formatted keyword lists, this model accepts background text in virtually any format, ranging from simple keyword lists to entire documents or even unstructured text. This flexibility allows users to tailor transcription outputs without complex preprocessing.

The model intelligently leverages contextual information to boost transcription precision, yet maintains stable performance even when provided with irrelevant or noisy context data. This adaptability marks a significant advancement in user-friendly AI transcription technology.

Comprehensive Multilingual and Dialect Support

Alibaba aims to position Qwen3-ASR-Flash as a universal speech transcription solution. The model supports 11 languages, encompassing a wide array of dialects and regional accents. Chinese language coverage is particularly extensive, including Mandarin and prominent dialects such as Cantonese, Sichuanese, Minnan (Hokkien), and Wu.

For English, the model accurately transcribes various accents including British, American, and other regional variants. Additional supported languages include French, German, Spanish, Italian, Portuguese, Russian, Japanese, Korean, and Arabic, making it a truly global tool.

Furthermore, Qwen3-ASR-Flash features automatic language identification, enabling it to detect which of the supported languages is being spoken. It also filters out non-speech elements such as silence and background noise, which Alibaba says yields cleaner and more reliable transcripts than earlier models.

Looking Ahead: The Future of AI Transcription

With its blend of high accuracy, flexible contextual integration, and broad linguistic coverage, Qwen3-ASR-Flash is poised to become a leading choice for enterprises and developers seeking advanced speech-to-text solutions. As AI transcription technology continues to evolve, models like this will play a crucial role in enhancing communication, accessibility, and content analysis across industries.