Alibaba’s Qwen team has introduced Qwen3-Max-Preview (Instruct), a large language model with over one trillion parameters and the largest model the team has developed so far. It is available through Qwen Chat, Alibaba Cloud’s API, and OpenRouter, and it is the default model in Hugging Face’s AnyCoder platform.

Positioning Qwen3-Max in the Current AI Model Ecosystem

While much of the AI industry increasingly favors compact, resource-efficient models, Alibaba’s launch of a trillion-parameter LLM is a deliberate bet on scale. The release highlights the company’s engineering capacity and its commitment to research at the trillion-parameter level, in contrast to the prevailing trend toward smaller models.

Specifications and Contextual Capacity of Qwen3-Max

- Model Size: Exceeds 1 trillion parameters, making it one of the largest LLMs available.

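- Context Window: Supports 262,144 tokens in total, with up to 258,048 tokens of input and up to 32,768 tokens of output. The two limits are separate caps rather than a split of the window (together they exceed 262,144), so a request using the full input allowance leaves 4,096 tokens for output. The window enables extensive document comprehension and multi-turn dialogue.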

- Performance Optimization: Incorporates a context caching mechanism designed to accelerate processing during extended conversational sessions.
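
The context limits above can be turned into a quick budget check. The following is a minimal sketch, not an official client: the three constants come from the figures quoted in this article, while the function names `fits_context` and `max_output_for` are hypothetical, and the assumption that input plus output must also fit inside the total window is ours rather than something the source states.

```python
# Limits as quoted in this article. How the service actually enforces
# them is an assumption made for illustration.
TOTAL_WINDOW = 262_144
MAX_INPUT = 258_048
MAX_OUTPUT = 32_768


def fits_context(input_tokens: int, output_tokens: int) -> bool:
    """Return True if a request respects all three quoted limits."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (
        input_tokens <= MAX_INPUT
        and output_tokens <= MAX_OUTPUT
        and input_tokens + output_tokens <= TOTAL_WINDOW
    )


def max_output_for(input_tokens: int) -> int:
    """Largest output budget left for a given input size (hypothetical helper)."""
    if input_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if input_tokens > MAX_INPUT:
        return 0  # the input alone already exceeds the input cap
    return min(MAX_OUTPUT, TOTAL_WINDOW - input_tokens)
```

Under these assumptions, a prompt that uses the full 258,048-token input allowance leaves room for a 4,096-token reply, while any prompt of 229,376 tokens or fewer can still receive the full 32,768-token output.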

Benchmarking Qwen3-Max Against Contemporary Models

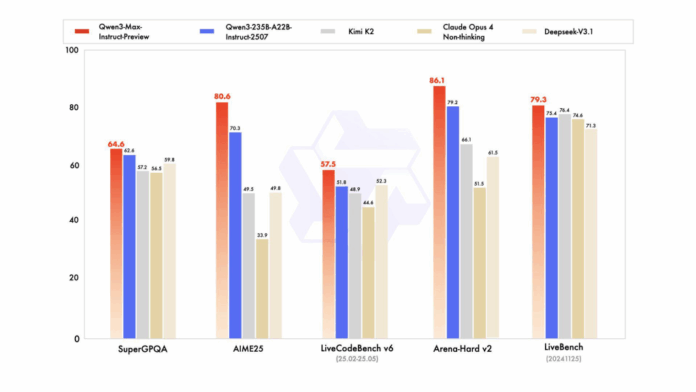

In the reported evaluations, Qwen3-Max outperforms its predecessor, Qwen3-235B-A22B-2507, and is competitive with prominent models such as Claude Opus 4, Kimi K2, and DeepSeek-V3.1. It performs strongly across SuperGPQA, AIME25, LiveCodeBench v6, Arena-Hard v2, and LiveBench, a set of benchmarks that spans reasoning, coding, and general language tasks.

Cost Structure and Usage Pricing

Alibaba Cloud employs a tiered pricing model based on token consumption:

- 0 to 32,000 tokens: $0.861 per million input tokens, $3.441 per million output tokens.

- 32,001 to 128,000 tokens: $1.434 per million input tokens, $5.735 per million output tokens.

- 128,001 to 252,000 tokens: $2.151 per million input tokens, $8.602 per million output tokens.

This pricing scheme makes Qwen3-Max cost-effective for smaller-scale applications but can become expensive for tasks requiring extensive context lengths.
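
To see how the tiers affect a single request, the arithmetic can be sketched as below. This is an illustrative estimate only: the rates are the ones listed above, the function name `estimate_cost` is hypothetical, and the code assumes that the tier is chosen by the number of input tokens in the request and that both input and output are then billed at that tier's rates. The article does not spell out the billing rule, so check the provider's pricing page before relying on it.

```python
# (upper bound on input tokens, input $ per million, output $ per million),
# taken from the tiers listed in this article.
TIERS = [
    (32_000, 0.861, 3.441),
    (128_000, 1.434, 5.735),
    (252_000, 2.151, 8.602),
]


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request.

    Assumption: the tier is selected by the request's input token count,
    and that tier's rates apply to both input and output tokens.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    for upper_bound, input_rate, output_rate in TIERS:
        if input_tokens <= upper_bound:
            total = input_tokens * input_rate + output_tokens * output_rate
            return total / 1_000_000
    raise ValueError("input exceeds the largest listed pricing tier")


if __name__ == "__main__":
    # Same 1,000-token reply, short prompt versus long prompt.
    print(f"{estimate_cost(10_000, 1_000):.6f}")
    print(f"{estimate_cost(200_000, 1_000):.6f}")
```

Under these assumptions, a 10,000-token prompt with a 1,000-token reply costs about $0.012, while a 200,000-token prompt with the same reply costs about $0.44. The per-token input rate is 2.5 times higher in the top tier than in the bottom one, which is why long-context workloads become expensive quickly.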

Implications of a Closed-Source Model Release

Unlike previous Qwen iterations, Qwen3-Max is not open-weight and is accessible exclusively through APIs and select partner platforms. This approach reflects Alibaba’s focus on commercial deployment and monetization but may limit adoption within academic research and open-source communities, where transparency and modifiability are highly valued.

Essential Highlights

- First Trillion-Parameter Qwen Model: Qwen3-Max represents Alibaba’s largest and most sophisticated language model to date.

- Exceptional Context Handling: With a 262K-token window and context caching, it supports processing of lengthy documents and extended conversations.

- Strong Competitive Edge: Surpasses earlier Qwen models and rivals leading LLMs like Claude Opus 4 and Kimi K2 in multiple benchmark tests.

- Emergent Reasoning Abilities: Although not explicitly designed as a reasoning engine, it exhibits promising structured reasoning on complex challenges.

- Closed-Source with Tiered Pricing: Available via API with a token-based cost model that favors smaller tasks but may restrict accessibility for large-scale use cases.

Conclusion

Qwen3-Max-Preview combines trillion-parameter scale with a very long context window and competitive benchmark results. Its closed-source nature and escalating costs for high-context applications may pose barriers to widespread adoption, but the model demonstrates Alibaba’s technical capability and its commitment to scaling up frontier models.