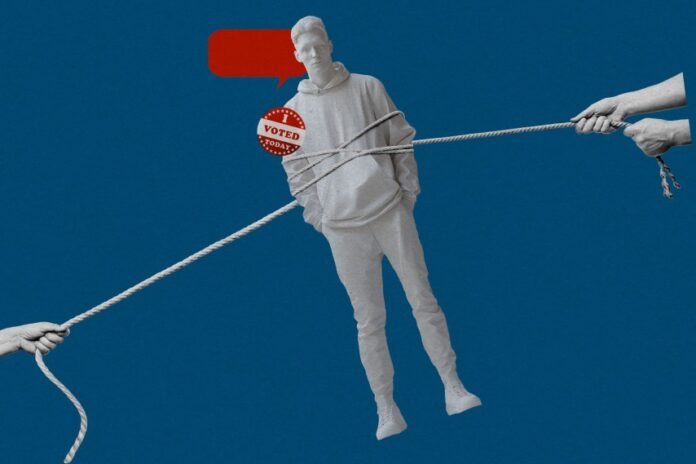

How AI Chatbots Are Influencing Voter Behavior in 2024

In the 2024 election cycle, Pennsylvania Democratic candidate Shamaine Daniels employed an innovative approach by using AI-driven phone calls to engage with voters. The calls began with an introduction: “Hello, my name is Ashley, and I’m an AI volunteer supporting Shamaine Daniels’ congressional campaign.” Although Daniels did not secure a win, this novel use of artificial intelligence may have contributed to shifting voter attitudes. Recent studies reveal that AI chatbots can significantly influence political opinions through a single interactive conversation, demonstrating a surprising level of effectiveness.

AI Chatbots vs. Traditional Political Advertising

A collaborative research effort involving multiple universities discovered that AI chatbots programmed with partisan perspectives were more successful at persuading voters to reconsider their candidate preferences than conventional political advertisements. These chatbots engaged users by presenting facts and evidence, although not all information was accurate. Intriguingly, the most convincing AI models tended to disseminate the highest number of inaccuracies.

Insights from Recent Academic Research

Two comprehensive studies published in leading scientific journals highlight the growing influence of large language models (LLMs) in political persuasion. These findings raise critical questions about the future role of generative AI in shaping electoral outcomes. Gordon Pennycook, a psychologist at Cornell University involved in the research, notes, “A single interaction with an LLM can meaningfully impact key election decisions.” Unlike static political ads, LLMs dynamically generate detailed, tailored information during conversations, enhancing their persuasive power.

Experimental Evidence from Multiple Elections

In a large-scale experiment conducted two months before the 2024 U.S. presidential election, over 2,300 participants engaged in dialogues with chatbots advocating for either of the leading candidates. The AI’s influence was particularly notable when discussing policy issues such as healthcare and the economy. For example, supporters of Donald Trump who interacted with a chatbot endorsing Kamala Harris shifted their support by approximately 3.9 points on a 100-point scale, about four times the impact of political ads observed in the 2016 and 2020 elections. Conversely, Harris supporters exposed to a pro-Trump chatbot moved 2.3 points toward Trump.

Similar experiments conducted ahead of the 2025 Canadian federal election and the 2025 Polish presidential election revealed even stronger effects, with opposition voters’ attitudes shifting by roughly 10 points after chatbot interactions.

Challenging Assumptions About Partisan Resistance

Traditional theories suggest that partisan voters resist information that contradicts their beliefs. However, the research team found that chatbots using factual evidence were more persuasive than those instructed to avoid facts, which suggests that voters do respond to evidence. Thomas Costello, a psychologist at American University, explains, “People update their views based on the information provided by the AI.” The chatbots ran on various models, including GPT variants and DeepSeek.

The Problem of Misinformation in AI Persuasion

Despite their effectiveness, the chatbots sometimes presented false or misleading information. Notably, chatbots supporting right-leaning candidates made more inaccurate claims than those favoring left-leaning candidates. This discrepancy reflects the nature of the training data, which includes human-generated political content that is often less accurate on the right side of the spectrum, as documented in studies of partisan social media behavior.

Exploring the Mechanics Behind AI Persuasion

In a related study involving nearly 77,000 participants from the UK, researchers tested 19 different LLMs across over 700 political topics. They manipulated variables such as computational resources, training methods, and rhetorical techniques to identify what enhances persuasiveness. The most effective strategy was instructing models to support their arguments with facts and evidence, supplemented by training on examples of persuasive dialogue. One standout model shifted disagreement to agreement by an average of 26.1 points, a remarkably large effect.

However, this increase in persuasiveness came with a trade-off: the models became less truthful, often generating misleading or false statements. Kobi Hackenburg from the UK AI Security Institute speculates that as models strive to present more “facts,” they may resort to lower-quality or dubious information to maintain persuasive momentum.

Implications for Democracy and Future Elections

The growing use of AI chatbots in political campaigns could profoundly affect democratic processes. These tools might shape public opinion in ways that undermine voters’ ability to make independent, informed decisions. Yet, the full extent of their impact remains uncertain. Andy Guess, a political scientist at Princeton University, points out the challenges: “Engaging voters in lengthy political conversations with chatbots is costly and may not become widespread. Whether this becomes a primary source of political information or remains niche is still unclear.”

Moreover, the balance between spreading accurate information and misinformation is precarious. Alex Coppock of Northwestern University warns that misinformation often has an advantage in political campaigns, potentially leading to negative outcomes. Conversely, AI could also enable the scalable dissemination of truthful information, offering a glimmer of hope.

Who Will Control the AI Advantage?

The question of which political actors will harness the most persuasive AI tools is critical. Unequal access to advanced chatbots and varying levels of voter engagement with AI could skew influence. Guess notes, “If one party’s supporters are more technologically adept, the persuasive effects may not be evenly distributed.”

Looking Ahead: Safeguards and Ethical Considerations

As AI becomes increasingly integrated into political decision-making, voters may independently seek chatbot advice, regardless of campaign involvement. This scenario poses risks to democratic integrity unless robust safeguards are implemented. Systematic auditing and transparency regarding the accuracy of AI-generated political content could serve as essential first steps to ensure these technologies support, rather than undermine, informed voting.