Decoding Visual Perception: Insights from AI and Human Brain Comparisons

Exploring the Intersection of Artificial and Biological Vision

One of neuroscience’s most captivating puzzles is how the human brain constructs internal representations of the visual environment. In recent years, advances in deep learning have transformed computer vision, producing neural networks that not only rival human accuracy in image recognition but also develop internal representations resembling the brain’s own. This convergence prompts a compelling question: can artificial intelligence models shed light on how the brain learns to interpret visual stimuli?

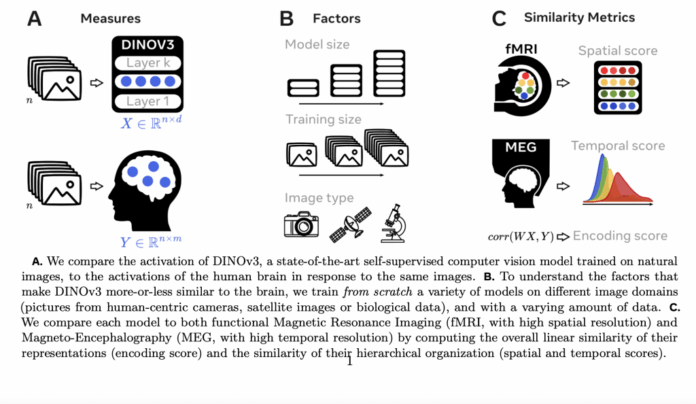

To investigate this, scientists from Meta AI and École Normale Supérieure examined DINOv3, a self-supervised vision transformer trained on billions of natural images. They compared DINOv3’s internal activations with human brain activity elicited by the same images, using two complementary neuroimaging methods. Functional Magnetic Resonance Imaging (fMRI) offered detailed spatial maps of cortical activation, while Magnetoencephalography (MEG) provided millisecond-level temporal resolution of brain responses. Together, the two methods show both where and when the model’s representations match those of the brain.

Investigating Factors Influencing Brain-AI Alignment

The research focused on three critical variables potentially affecting the similarity between brain activity and AI model responses: the scale of the model, the volume of training data, and the nature of the images used during training. By systematically varying these parameters across multiple DINOv3 versions, the team sought to unravel their individual and combined impacts on brain-model correspondence.

Correlations Between Neural Networks and Brain Activity

Findings revealed a significant convergence between DINOv3’s activations and human brain responses. The model’s internal signals predicted fMRI-measured activity in both primary visual areas and higher-order cortical regions, with correlations between predicted and measured activity peaking at R = 0.45 in the best-predicted voxels. MEG data indicated that this alignment began as early as 70 milliseconds post-stimulus and persisted for up to three seconds. Notably, early layers of DINOv3 corresponded with early visual areas such as V1 and V2, whereas deeper layers aligned with higher-order cortical areas, including parts of the prefrontal cortex.
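One common way to quantify this kind of correspondence is a linear encoding model: fit a regularized linear map from model activations to a voxel's response, then correlate its predictions with the measured response on held-out images. The sketch below illustrates the idea on synthetic data only. The feature matrix, the simulated voxel, the ridge penalty `lam`, and every function name are assumptions made for illustration, not the study's actual pipeline or data.

```python
import random

def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / (va * vb) ** 0.5

def solve(A, b):
    """Solve A w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):  # back-substitution
        tail = sum(M[i][j] * w[j] for j in range(i + 1, n))
        w[i] = (M[i][n] - tail) / M[i][i]
    return w

def ridge_fit(X, y, lam):
    """Closed-form ridge regression: w = (X^T X + lam * I)^-1 X^T y."""
    d = len(X[0])
    XtX = [[sum(row[i] * row[j] for row in X) + (lam if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    Xty = [sum(row[i] * t for row, t in zip(X, y)) for i in range(d)]
    return solve(XtX, Xty)

def predict(X, w):
    """Linear prediction for every row of X."""
    return [sum(x * wi for x, wi in zip(row, w)) for row in X]

# Synthetic stand-ins (assumptions): 200 "images", 5 "model features",
# and one simulated voxel that is a noisy linear readout of the features.
rng = random.Random(0)
n_images, n_features = 200, 5
X = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n_images)]
true_w = [1.0, -0.5, 0.25, 0.0, 0.75]
voxel = [signal + rng.gauss(0, 1) for signal in predict(X, true_w)]

# Fit on the first 150 images, score on the 50 held-out images.
split = 150
w = ridge_fit(X[:split], voxel[:split], lam=1.0)
encoding_score = pearson(predict(X[split:], w), voxel[split:])
print(f"held-out encoding score R = {encoding_score:.2f}")
```

Scoring on held-out images matters here: a correlation computed on the training images would overstate how well the features generalize. In a real analysis the same fit would be repeated for every voxel and for every model layer.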

Training Dynamics Reflect Developmental Patterns

Tracking the evolution of brain-model similarity throughout training revealed a developmental-like progression. Alignment with early visual areas emerged rapidly after limited training, while correspondence with higher-level cortical regions required exposure to billions of images. This trajectory mirrors human brain maturation, where sensory cortices develop earlier than associative regions. The three measures of similarity also emerged at different speeds: temporal alignment appeared first and spatial alignment last, with encoding similarity developing at an intermediate pace.
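One simple way to summarize how early a similarity score emerges is the point in training at which it first reaches half of its final value. The sketch below is a minimal version of that idea. The function name `half_time`, the linear interpolation between checkpoints, and both score curves are assumptions chosen for illustration; the numbers are invented and are not results from the study.

```python
def half_time(steps, scores):
    """Training step at which `scores` first reaches half its final value.

    Interpolates linearly between the two checkpoints that bracket the
    threshold. Assumes `steps` is increasing and the final score is positive.
    """
    target = scores[-1] / 2
    for i, s in enumerate(scores):
        if s >= target:
            if i == 0:
                return steps[0]
            s0, s1 = scores[i - 1], s
            t0, t1 = steps[i - 1], steps[i]
            return t0 + (target - s0) / (s1 - s0) * (t1 - t0)
    return steps[-1]

# Invented checkpoints, expressed as a fraction of the full training run.
steps = [0.0, 0.01, 0.02, 0.05, 0.1, 0.5, 1.0]

# Invented score curves: one rises quickly, the other slowly.
early_visual = [0.0, 0.10, 0.16, 0.19, 0.20, 0.20, 0.20]
prefrontal = [0.0, 0.01, 0.02, 0.04, 0.06, 0.10, 0.12]

print(half_time(steps, early_visual))  # about 0.01 of training
print(half_time(steps, prefrontal))    # about 0.1 of training
```

A smaller half-time means the region's alignment saturates earlier in training, which gives a single number per region that can be compared across the cortex.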

Impact of Model Size, Data Volume, and Image Content

Model scale played a pivotal role, with larger DINOv3 variants consistently achieving stronger alignment, especially in higher-order brain areas. Extended training durations enhanced similarity across all regions, with the most pronounced benefits observed in complex representations. The type of training images was equally influential: models trained on human-centric photographs exhibited the highest brain alignment, whereas those trained on satellite or microscopic cellular images showed limited convergence, primarily restricted to early visual areas. This highlights the importance of ecologically relevant data in capturing the full spectrum of human-like visual representations.

Connections to Cortical Structure and Function

The timing of DINOv3’s representational emergence correlated with anatomical and functional cortical features. Brain regions characterized by greater developmental expansion, increased cortical thickness, or slower intrinsic timescales aligned later during training. Conversely, highly myelinated areas, known for rapid information transmission, showed earlier alignment. These associations suggest that AI models can provide valuable insights into the biological principles governing cortical organization.
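A relationship of this kind can be checked by correlating, across brain regions, the point in training at which alignment emerges with a cortical property such as myelination or thickness. The sketch below uses a Spearman rank correlation, which is an assumption here since the statistic used in the study is not stated in this article. The per-region values are invented solely to exercise the functions and do not come from any dataset.

```python
def ranks(values):
    """1-based ranks; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / (va * vb) ** 0.5

def spearman(a, b):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(a), ranks(b))

# Invented values for five hypothetical regions, ordered from sensory
# to associative. None of these numbers are measurements.
emergence_time = [0.01, 0.02, 0.05, 0.10, 0.30]  # fraction of training
myelination = [0.9, 0.8, 0.6, 0.5, 0.3]          # arbitrary units
thickness = [1.8, 2.0, 2.4, 2.6, 3.0]            # arbitrary units

rho_myelin = spearman(emergence_time, myelination)
rho_thickness = spearman(emergence_time, thickness)
print(rho_myelin, rho_thickness)
```

With these toy values the signs match the pattern described above: a negative correlation for myelination (more myelinated regions align earlier) and a positive one for thickness (thicker regions align later).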

Balancing Innate Architecture and Experiential Learning

The study underscores a nuanced interplay between inherent structural design and experiential learning. While DINOv3’s hierarchical architecture predisposes it to process visual information in stages, achieving brain-like similarity necessitated extensive training on ecologically valid datasets. This dynamic reflects longstanding debates in cognitive science regarding the roles of nativism and empiricism in brain development.

Mirroring Human Brain Development

The parallels between DINOv3’s training progression and human cortical maturation are striking. Sensory regions in the brain mature rapidly, akin to early training stages where DINOv3 aligns with primary visual areas. In contrast, associative and prefrontal cortices develop more slowly, paralleling later training phases where deeper model layers correspond with these regions. This suggests that large-scale AI training trajectories may serve as computational analogues for the staged development of human cognitive functions.

Extending Beyond Visual Processing

Interestingly, DINOv3’s alignment extended beyond classical visual pathways, encompassing prefrontal and multimodal brain regions. This raises the possibility that such models capture higher-order cognitive features relevant to reasoning and decision-making. Although this investigation focused solely on DINOv3, it opens avenues for leveraging AI to probe broader aspects of brain organization and functional development.

Summary and Future Directions

This research demonstrates that self-supervised vision transformers like DINOv3 are more than advanced computer vision tools: their internal representations approximate key aspects of human visual processing. The study highlights how model size, training duration, and data relevance collectively shape the convergence between artificial and biological vision systems. By examining how AI models learn to “see,” researchers can gain insight into the developmental and organizational principles underlying human visual perception.