Building resilient AI agents differs from traditional software engineering because it means managing probabilistic model outputs rather than fixed, deterministic code paths. This article surveys strategies for building agents that are dependable, flexible, and safe, with a focus on defining clear operational boundaries, shaping effective behaviors, and ensuring secure user interactions.

Understanding Agentic AI Design

Agentic design involves building AI systems that can autonomously operate within specified limits. Unlike classic programming, which dictates precise responses to inputs, agentic AI requires designers to specify desired behavioral outcomes and rely on the model’s ability to interpret and execute these behaviors dynamically.

Embracing Response Variability in AI

Conventional software consistently produces the same output for identical inputs. In contrast, agentic AI systems, powered by probabilistic models, generate diverse yet contextually relevant responses each time they are queried. This inherent variability enhances the naturalness of interactions but also demands carefully crafted prompts and guidelines to maintain safety and coherence.

For instance, when a user asks, “Can you assist me with resetting my password?”, the AI might respond with “Sure! Could you provide your username?”, “Absolutely, let’s begin. What’s your registered email?”, or “I’m here to help. Do you recall your account ID?” This diversity mimics human conversational nuance and can improve user engagement, but it also calls for robust guardrails that keep replies consistent, secure, and policy-compliant.

The Importance of Precise Instruction

Language models interpret instructions as guidelines rather than executing them verbatim. Ambiguous directives like:

    await agent.create_guideline(
        condition="User shows frustration",
        action="Attempt to cheer them up"
    )

can result in unpredictable or unsafe outcomes, such as making unintended promises. Instead, instructions should be explicit and focused on safe, actionable steps:

    await agent.create_guideline(
        condition="User is upset due to delayed shipment",
        action="Acknowledge the delay, apologize sincerely, and provide an updated delivery status"
    )

This clarity ensures the AI’s behavior aligns with organizational standards and user expectations.

Implementing Multi-Layered Compliance Controls

While large language models cannot be fully controlled, their behavior can be effectively guided through layered constraints.

Layer 1: Behavioral Guidelines

Establish clear rules to shape typical agent responses.

    await agent.create_guideline(
        condition="User inquires about topics beyond the agent's scope",
        action="Politely decline and redirect to relevant resources"
    )

Layer 2: Predefined Responses

For sensitive or high-stakes scenarios, such as legal or medical inquiries, deploy pre-approved, canned replies to guarantee consistency and safety.

    await agent.create_canned_response(
        template="I can assist with account-related questions, but for detailed policy information, I will connect you with a specialist."
    )

This tiered approach reduces risk by preventing the agent from improvising in critical contexts.
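
The layering described above can be pictured as a simple dispatch. The following is a minimal sketch in plain Python, not the agent framework's own API: `CANNED_RESPONSES`, `SENSITIVE_KEYWORDS`, and `choose_response` are hypothetical names, and keyword matching stands in for whatever topic detection a real deployment would use.

```python
from typing import Callable

# Hypothetical pre-approved replies, keyed by sensitive topic.
CANNED_RESPONSES = {
    "legal": "I can't advise on legal matters, but I can connect you with a specialist.",
    "medical": "I can't give medical advice. Please consult a qualified professional.",
}

# Hypothetical keyword triggers; a real system would likely use a classifier.
SENSITIVE_KEYWORDS = {
    "legal": ("lawsuit", "sue", "contract"),
    "medical": ("diagnosis", "symptom", "medication"),
}


def choose_response(message: str, generate: Callable[[str], str]) -> str:
    """Return a canned reply for sensitive topics, else defer to the model."""
    words = [word.strip(".,!?") for word in message.lower().split()]
    for topic, keywords in SENSITIVE_KEYWORDS.items():
        if any(word in keywords for word in words):
            return CANNED_RESPONSES[topic]  # Layer 2: no improvisation
    return generate(message)  # Layer 1: guided free-form generation
```

Here `generate` is a placeholder for the guided model call. The point of the sketch is only the ordering: the fixed reply is checked first, so the model is never asked to improvise on a sensitive topic.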

Effective Tool Integration: Navigating Ambiguity

When AI agents interact with external tools like APIs or functions, the complexity extends beyond simple command execution. Consider a user request: “Schedule a meeting with Sarah next week.” The agent must resolve ambiguities such as which Sarah is meant, the exact date and time, and the calendar to use.

This exemplifies the Parameter Inference Challenge, where the agent must deduce missing details. To mitigate this, tools should be designed with clear descriptions, parameter hints, and contextual examples. Consistent naming conventions and standardized parameter types further assist the agent in accurately selecting and filling inputs. Such thoughtful tool design enhances precision, minimizes errors, and streamlines user-agent interactions.
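
One way to make those recommendations concrete is to describe each tool with typed, documented parameters and to check which required values are still unresolved before calling it. The sketch below is framework-agnostic and illustrative only: `ParamSpec`, `ToolSpec`, `missing_params`, and the `schedule_meeting` definition are hypothetical names, not part of any particular SDK.

```python
from dataclasses import dataclass, field


@dataclass
class ParamSpec:
    """Describes one tool parameter so the agent can fill it reliably."""
    name: str
    type_hint: str
    description: str
    example: str
    required: bool = True


@dataclass
class ToolSpec:
    """A tool description with a clear purpose and documented parameters."""
    name: str
    description: str
    params: list[ParamSpec] = field(default_factory=list)

    def missing_params(self, provided: dict[str, str]) -> list[str]:
        """Names of required parameters the agent still has to resolve."""
        return [p.name for p in self.params if p.required and p.name not in provided]


# Hypothetical tool for the "Schedule a meeting with Sarah" request.
schedule_meeting = ToolSpec(
    name="schedule_meeting",
    description="Create a calendar event with one attendee.",
    params=[
        ParamSpec("attendee_email", "str", "Email of the person to meet", "sarah.lee@example.com"),
        ParamSpec("start_time", "ISO 8601 datetime", "Meeting start", "2025-03-10T14:00:00"),
        ParamSpec("calendar_id", "str", "Calendar to book on", "work", required=False),
    ],
)

# The user only said "with Sarah next week", so nothing is resolved yet.
to_ask = schedule_meeting.missing_params({})
```

In this sketch `to_ask` lists the details the agent should ask about instead of guessing, while the optional `calendar_id` can fall back to a default.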

The Iterative Nature of Agent Development

Unlike static software, AI agent behavior evolves through continuous cycles of monitoring, assessment, and refinement. Development typically starts with common, straightforward user scenarios, often called “happy paths”, where expected responses are well understood and easy to validate.

After deployment in controlled environments, the agent’s outputs are scrutinized for anomalies, user confusion, or policy violations. Identified issues are addressed by adding targeted rules or adjusting existing logic. For example, if an agent persistently suggests an upsell despite repeated user refusals, a specific guideline can be introduced to suppress such offers within the same session. Through this iterative tuning, the agent matures into a sophisticated conversational partner that balances responsiveness, reliability, and compliance.
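
The upsell example can be sketched as a small piece of per-session state that a guideline's condition could consult. This is an illustrative plain-Python sketch under assumed names (`SessionState`, `record_refusal`, `may_offer_upsell`); the threshold of one refusal is likewise an assumption, not a recommendation from the source.

```python
from dataclasses import dataclass


@dataclass
class SessionState:
    """Per-session memory for the hypothetical upsell rule."""
    upsell_refusals: int = 0


def record_refusal(state: SessionState) -> None:
    """Call this whenever the user declines an upsell offer."""
    state.upsell_refusals += 1


def may_offer_upsell(state: SessionState, max_refusals: int = 1) -> bool:
    """Allow an upsell only while refusals stay below the threshold."""
    return state.upsell_refusals < max_refusals
```

Because the counter lives in the session object, the suppression lasts for the current session only and a new session starts with a clean slate.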

Crafting Clear and Actionable Guidelines

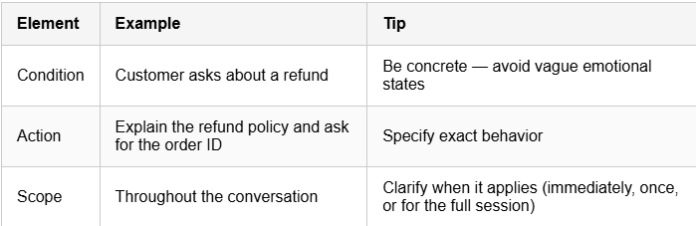

Each guideline should encompass three essential components:

- Condition: The specific scenario or trigger.

- Action: The precise response or behavior expected.

- Tools: Any external functions or APIs involved.

Example:

    await agent.create_guideline(
        condition="User requests an unavailable appointment time",
        action="Suggest the three nearest available alternatives",
        tools=[get_available_slots]
    )

Designing Structured Conversational Flows with Journeys

For intricate tasks like appointment scheduling, onboarding, or troubleshooting, simple guidelines may fall short. Structured conversational flows, known as Journeys, provide a framework to guide users through multi-step processes while preserving a natural dialogue.

For example, a booking journey might start by detecting when a user wants to schedule an appointment. The conversation then progresses through stages: identifying the service type, checking availability via a tool, and finally presenting available time slots. This method balances flexibility with control, enabling the agent to manage complex interactions efficiently without sacrificing conversational fluidity.

Example: Appointment Booking Journey

    booking_journey = await agent.create_journey(
        title="Appointment Scheduling",
        conditions=["User wants to book an appointment"],
        description="Assist user through the booking process"
    )

    t1 = await booking_journey.initial_state.transition_to(
        chat_state="Ask for the type of service needed"
    )

    t2 = await t1.target.transition_to(
        tool_state=check_service_availability
    )

    t3 = await t2.target.transition_to(
        chat_state="Present available time slots"
    )

Striking the Right Balance: Flexibility vs. Predictability

Designing AI agents requires a careful balance between natural conversational flexibility and operational predictability. Overly rigid instructions, such as “Say exactly: ‘Our premium plan costs $99 per month’”, can make interactions feel robotic. Conversely, vague directives like “Help the user understand pricing” may lead to inconsistent or unclear responses.

A balanced guideline might be: “Clearly explain pricing tiers, emphasize value, and inquire about the user’s needs to recommend the most suitable option.” This approach maintains reliability while fostering engaging, human-like conversations.

Adapting to Real-World Conversational Dynamics

Unlike linear web forms, real conversations are dynamic and non-linear. Users may change topics abruptly, skip steps, or introduce unexpected queries. To handle this complexity, AI agents should incorporate several key design principles:

- Context Retention: Maintain awareness of prior information to provide coherent responses.

- Progressive Disclosure: Introduce information or options gradually to avoid overwhelming users.

- Error Recovery: Gracefully manage misunderstandings by rephrasing or gently steering the conversation back on track.

These strategies help create interactions that feel intuitive, adaptable, and user-centric.
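
Two of the principles above, context retention and error recovery, can be illustrated with a slot-filling sketch. It is a simplified, hypothetical example in plain Python: `REQUIRED_SLOTS` and `next_prompt` are assumed names, and a real agent would extract slot values with the model rather than receive them as a ready-made dictionary.

```python
REQUIRED_SLOTS = ("service", "date", "time")  # hypothetical booking fields


def next_prompt(slots: dict[str, str], failed_attempts: int = 0) -> str:
    """Pick the next question from what is already known.

    Slots may be filled in any order, so skipped steps or topic changes
    do not force the user to start over (context retention).
    """
    for name in REQUIRED_SLOTS:
        if name not in slots:
            if failed_attempts > 0:
                # Error recovery: rephrase instead of repeating verbatim.
                return f"Sorry, I didn't catch that. Which {name} would you like?"
            return f"What {name} would you like?"
    return "Thanks, I have everything I need to book this."
```

A user who volunteers the date first is asked only for what is still missing, and one question per turn keeps the exchange in line with progressive disclosure.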

In summary, effective agentic AI design begins with focusing on core functionalities and common user scenarios, followed by vigilant monitoring and iterative refinement based on real-world usage. Clear, actionable guidelines establish safe boundaries while allowing conversational flexibility. For complex workflows, structured journeys guide multi-step interactions seamlessly. Transparency about the agent’s capabilities and limitations sets appropriate user expectations. This comprehensive approach fosters the development of reliable, engaging, and safe AI agents tailored for practical applications.