Summary: Researchers from Stanford University, SambaNova Systems, and UC Berkeley have introduced ACE (Agentic Context Engineering), an approach that improves large language model (LLM) performance by incrementally editing and expanding the input context rather than updating the model’s weights. ACE treats the context as an evolving “playbook” maintained by three roles (Generator, Reflector, and Curator) that produce and merge small delta items, which guards against brevity bias and context collapse. Reported gains include a 10.6% improvement on AppWorld agent tasks, an 8.6% improvement on financial reasoning tasks, and an average adaptation-latency reduction of nearly 87% compared with leading context-adaptation techniques. As of September 20, 2025, ReAct+ACE scored 59.4% on the AppWorld leaderboard, close to IBM’s GPT-4.1-based CUGA at 60.3%, while running on the smaller, open-source DeepSeek-V3.1 model.

Revolutionizing LLM Adaptation: From Weight Updates to Context Evolution

Traditional methods for improving LLM performance often rely on fine-tuning model weights, which is computationally expensive and inflexible. ACE instead makes context engineering the primary mechanism for adaptation. Rather than condensing instructions into brief prompts, it accumulates and organizes domain-specific strategies over time, so the context carries more task-relevant detail. This richer context is particularly beneficial for complex agentic tasks that involve tool usage, multi-turn interactions, and error handling.

The ACE Framework: Roles and Workflow

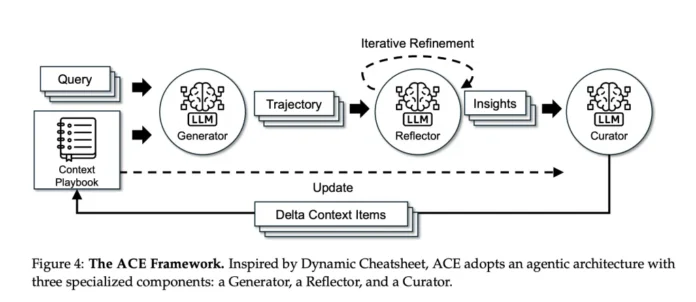

ACE’s architecture is built around a three-stage process that incrementally refines the input context:

- Generator: Executes tasks and generates detailed trajectories, including reasoning steps and tool invocations, highlighting both effective and ineffective actions.

- Reflector: Analyzes these trajectories to extract actionable insights and lessons learned.

- Curator: Converts these insights into structured delta items, each annotated with helpful or harmful indicators, and integrates them into the evolving playbook through deterministic merging, deduplication, and pruning to keep it relevant and focused.
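The three roles above can be read as a simple loop over tasks. The following is a minimal Python sketch of that loop, not the authors' implementation: the names `Trajectory`, `Insight`, and `adapt`, and the three `fake_*` functions, are hypothetical stand-ins so the example runs without a model. In a real system each role would be an LLM call.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Trajectory:
    task: str
    steps: List[str]      # reasoning steps and tool calls, in order
    succeeded: bool


@dataclass
class Insight:
    text: str
    helpful: bool         # True = tactic to repeat, False = pitfall to avoid


Playbook = List[Insight]


def adapt(
    tasks: List[str],
    playbook: Playbook,
    generate: Callable[[str, Playbook], Trajectory],
    reflect: Callable[[Trajectory], List[Insight]],
    curate: Callable[[Playbook, List[Insight]], Playbook],
) -> Playbook:
    """Run one Generator -> Reflector -> Curator pass per task."""
    for task in tasks:
        trajectory = generate(task, playbook)   # Generator: attempt the task
        insights = reflect(trajectory)          # Reflector: extract lessons
        playbook = curate(playbook, insights)   # Curator: fold lessons in
    return playbook


# Hypothetical stand-in roles (assumptions, not part of ACE itself).
def fake_generate(task: str, playbook: Playbook) -> Trajectory:
    knows_auth = any("authenticate" in i.text for i in playbook)
    steps = (["authenticate"] if knows_auth else []) + [f"call_api({task})"]
    return Trajectory(task, steps, succeeded=knows_auth)


def fake_reflect(trajectory: Trajectory) -> List[Insight]:
    if trajectory.succeeded:
        return []
    return [Insight("authenticate before calling any API", helpful=True)]


def fake_curate(playbook: Playbook, insights: List[Insight]) -> Playbook:
    known = {i.text for i in playbook}
    return playbook + [i for i in insights if i.text not in known]


final_playbook = adapt(
    ["list_files", "send_email"], [], fake_generate, fake_reflect, fake_curate
)
```

In this toy run the first task fails, the Reflector records one lesson, and the second task succeeds because the Generator now sees that lesson in its context. No weights change at any point.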

This incremental update strategy, combined with a “grow-and-refine” approach, preserves valuable historical context and avoids the pitfalls of wholesale context replacement, such as “context collapse.” To ensure that improvements stem solely from context modifications, the same base LLM (DeepSeek-V3.1) is consistently employed across all roles, isolating the effect of context engineering from model parameter changes.
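To make the incremental update concrete, here is a small Python sketch of a deterministic, non-LLM merge with deduplication and pruning. It is an illustration under stated assumptions rather than ACE's actual code: the `Bullet` and `Delta` types, the helpful/harmful counters, the normalized-text dedup key, and the `max_items` cap are all hypothetical choices. The source does not say how duplicates are detected, so exact matching on normalized text is used here as a simple stand-in.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Bullet:
    text: str
    helpful: int = 0   # times this entry was marked helpful
    harmful: int = 0   # times this entry was marked harmful


@dataclass
class Delta:
    text: str
    helpful: bool


def normalize(text: str) -> str:
    """Dedup key: case- and whitespace-insensitive text (an assumption)."""
    return " ".join(text.lower().split())


def merge(playbook: Dict[str, Bullet], deltas: List[Delta]) -> None:
    """Apply deltas in place: update counters on a match, else append."""
    for delta in deltas:
        key = normalize(delta.text)
        bullet = playbook.setdefault(key, Bullet(delta.text))
        if delta.helpful:
            bullet.helpful += 1
        else:
            bullet.harmful += 1


def prune(playbook: Dict[str, Bullet], max_items: int) -> None:
    """Drop net-harmful entries, then keep the max_items best-scoring ones."""
    for key in [k for k, b in playbook.items() if b.harmful > b.helpful]:
        del playbook[key]
    ranked = sorted(
        playbook,
        key=lambda k: playbook[k].helpful - playbook[k].harmful,
        reverse=True,
    )
    for key in ranked[max_items:]:
        del playbook[key]


playbook: Dict[str, Bullet] = {}
merge(playbook, [
    Delta("Check pagination on list endpoints", helpful=True),
    Delta("check  pagination on list endpoints", helpful=True),  # duplicate
    Delta("Retry immediately on HTTP 429", helpful=False),
])
prune(playbook, max_items=10)
```

Existing entries are only touched when a delta matches them, so earlier lessons survive each update instead of being rewritten wholesale. That is the property the "grow-and-refine" description relies on.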

Performance Highlights Across Diverse Benchmarks

AppWorld Agent Tasks

On the AppWorld benchmark, which evaluates agentic reasoning and tool use, ACE-enhanced ReAct outperforms several strong baselines, including In-Context Learning (ICL), GEPA, and Dynamic Cheatsheet. It achieves an average improvement of 10.6% over these methods and a 7.6% gain over Dynamic Cheatsheet in online adaptation scenarios. Notably, on the September 2025 leaderboard, ReAct+ACE scored 59.4%, nearly matching IBM’s GPT-4.1-based CUGA at 60.3%. ACE even surpasses CUGA on the more challenging test-challenge subset, despite relying on a smaller, open-source base model.

Financial Reasoning: FiNER and XBRL Formula Tasks

In the financial domain, ACE demonstrates robust improvements on token tagging (FiNER) and numerical reasoning with XBRL formulas. It delivers an average performance increase of 8.6% over baseline methods when ground-truth labels are available for offline adaptation. ACE also remains effective when only execution feedback is provided, although the quality of that feedback significantly influences the outcome.

Efficiency Gains: Reducing Latency and Computational Costs

One of ACE’s standout advantages is its ability to drastically cut adaptation overhead through non-LLM merges and localized context updates:

- Offline Adaptation (AppWorld): Achieves an 82.3% reduction in latency and a 75.1% decrease in rollout counts compared to GEPA.

- Online Adaptation (FiNER): Cuts latency by 91.5% and token usage costs by 83.6% relative to Dynamic Cheatsheet.

These efficiency improvements highlight ACE’s practical benefits over reflective-rewrite baselines, which often suffer from persistent memory overhead and complex prompt evolution strategies.
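Percentage reductions can be hard to compare at a glance, so the short Python sketch below converts them into speedup factors. The helper names and the 200-second and 50-second inputs are hypothetical illustration values, not measurements from the paper. Only the 82.3% and 91.5% figures come from the text above.

```python
def percent_reduction(baseline: float, adapted: float) -> float:
    """Relative reduction of `adapted` versus `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - adapted) / baseline


def speedup_from_reduction(reduction_pct: float) -> float:
    """Convert a percent reduction into a 'times faster' factor."""
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction must be in [0, 100)")
    return 1.0 / (1.0 - reduction_pct / 100.0)


# Hypothetical timings: 200 s for a baseline, 50 s after the change.
example = percent_reduction(200.0, 50.0)

# Reductions quoted in the text above.
offline_speedup = speedup_from_reduction(82.3)   # AppWorld, vs. GEPA
online_speedup = speedup_from_reduction(91.5)    # FiNER, vs. Dynamic Cheatsheet
```

Read this way, an 82.3% latency reduction corresponds to roughly a 5.6x speedup, and a 91.5% reduction to roughly 11.8x.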

Key Insights and Implications

- Context-First Adaptation: ACE pioneers a method where LLMs self-improve by continuously updating a curated, evolving playbook of task-specific tactics, rather than relying on costly parameter tuning.

- Demonstrated Effectiveness: The approach yields substantial accuracy gains across agentic and financial reasoning benchmarks, nearly matching a GPT-4.1-based agent on AppWorld while running on a smaller, open-source base model.

- Significant Cost Savings: By minimizing adaptation latency and token consumption, ACE offers a scalable solution for real-world applications that need frequent adaptation without retraining.

Final Thoughts: The Future of Self-Improving Language Models

ACE establishes context engineering as a compelling alternative to traditional fine-tuning, enabling LLMs to “self-tune” through an ever-growing, carefully managed repository of domain knowledge. This strategy not only delivers measurable performance enhancements but also dramatically reduces the computational burden associated with model adaptation. While the effectiveness of ACE depends on the quality of feedback and task complexity, its deterministic merging and long-context-aware serving mechanisms make it a practical and scalable solution. As this approach gains traction, future agent systems may increasingly rely on evolving contexts rather than frequent retraining, marking a significant shift in how AI models learn and adapt.