Contemporary large language models (LLMs) now do far more than generate text. Many applications require them to interact with external resources, such as APIs, databases, and software libraries, to solve complex problems. But how can we evaluate whether an AI agent can plan, reason, and orchestrate multiple tools as effectively as a human assistant? This is the challenge MCP-Bench addresses.

Limitations of Current Evaluation Methods

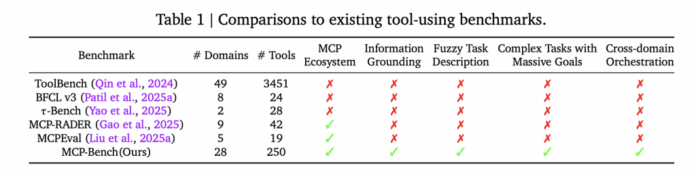

Existing benchmarks for tool-using LLMs often concentrate on isolated API invocations or narrowly defined, artificially constructed workflows. Even the more sophisticated tests seldom assess an agent’s ability to identify and chain together appropriate tools based on ambiguous, real-world instructions. Moreover, they rarely evaluate cross-domain coordination or the capacity to ground responses in verifiable evidence. Consequently, while many models excel in contrived scenarios, they frequently falter when confronted with the complexity and uncertainty inherent in practical applications.

Introducing MCP-Bench: A New Standard for Real-World AI Evaluation

Researchers at Accenture have developed MCP-Bench, a benchmark built on the Model Context Protocol (MCP) that tests LLM agents by connecting them to 28 real MCP servers. Together, these servers expose 250 tools spanning finance, healthcare, scientific research, travel, and other domains. Tasks require agents to execute workflows that combine sequential and parallel tool calls, often across several servers at once, which approximates the complexity of realistic tasks.
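
To make the difference between sequential and parallel tool usage concrete, here is a minimal Python sketch. The three tool functions (`geocode`, `forecast`, `park_alerts`) and their return values are hypothetical stand-ins invented for illustration; they are not part of MCP-Bench or of any MCP server. The forecast and alert lookups both depend on the geocoding result, so geocoding runs first and the other two calls then run concurrently.

```python
import asyncio

# Hypothetical stand-ins for tools hosted on different servers.
# A real agent would reach them through an MCP client instead.
async def geocode(place: str) -> dict:
    await asyncio.sleep(0)  # placeholder for network latency
    return {"place": place, "lat": 37.87, "lon": -119.54}

async def forecast(lat: float, lon: float) -> str:
    await asyncio.sleep(0)
    return f"clear skies at ({lat}, {lon})"

async def park_alerts(lat: float, lon: float) -> list:
    await asyncio.sleep(0)
    return ["fire restrictions in effect"]

async def plan(place: str) -> dict:
    # Sequential step: both later calls need the coordinates.
    loc = await geocode(place)
    # Parallel step: the two lookups are independent of each other.
    weather, alerts = await asyncio.gather(
        forecast(loc["lat"], loc["lon"]),
        park_alerts(loc["lat"], loc["lon"]),
    )
    return {"location": loc, "weather": weather, "alerts": alerts}

print(asyncio.run(plan("Yosemite")))
```

An agent that fails to notice the dependency would call the forecast tool without coordinates, and one that fails to notice the independence would spend extra rounds on calls that could have been issued together.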

Distinctive Characteristics of MCP-Bench

- Realistic Task Design: Tasks mirror genuine user scenarios, such as organizing a multi-destination hiking trip that integrates geospatial data, weather forecasts, and park regulations; performing biomedical data analysis; or converting units in advanced scientific computations.

- Ambiguous Instructions: Instead of explicit tool names or step-by-step guidance, tasks are presented in natural, sometimes imprecise language, compelling the agent to deduce the appropriate course of action much like a human assistant would.

- Wide Range of Tools: The benchmark encompasses a broad spectrum of utilities, from medical calculators and scientific libraries to financial modeling tools, icon repositories, and even esoteric services like I Ching divination.

- Robust Quality Assurance: Tasks are generated automatically and then filtered to ensure they are solvable and relevant. Each task is available in two formats: a detailed technical specification for evaluation purposes and a conversational, fuzzy version presented to the agent.

- Comprehensive Evaluation Framework: Assessment combines automated metrics (such as correct tool usage and parameter accuracy) with evaluations by LLM-based judges that appraise planning, reasoning, and evidence grounding.
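
The two task formats described above can be pictured as a single record with two views. The sketch below is only an illustration: the class `BenchmarkTask`, its field names, and the example strings are assumptions made for this article, not the benchmark's actual data format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BenchmarkTask:
    """Hypothetical task record; field names are illustrative only."""
    task_id: str
    detailed_spec: str          # explicit version, kept for evaluation
    fuzzy_prompt: str           # conversational version shown to the agent
    expected_tools: tuple = ()  # used by the evaluator, never by the agent

    def agent_view(self) -> str:
        # The agent only ever sees the fuzzy wording.
        return self.fuzzy_prompt

task = BenchmarkTask(
    task_id="demo-001",
    detailed_spec=(
        "Call a geocoding tool for Yosemite, then fetch a 3-day forecast "
        "and active park alerts."
    ),
    fuzzy_prompt=(
        "I'm thinking of camping in Yosemite soon. "
        "What should I know before I go?"
    ),
    expected_tools=("geocode", "forecast", "park_alerts"),
)

print(task.agent_view())
```

Keeping both versions in one record makes it straightforward to present the fuzzy prompt to the agent while scoring its behavior against the detailed specification.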

Evaluation Process for AI Agents

When an agent engages with MCP-Bench, it receives a task prompt, for example, “Plan a detailed camping trip to Yosemite, including logistics and weather updates.” The agent must then determine, stepwise, which tools to invoke, in what sequence, and how to interpret their outputs. These interactions may span multiple rounds, requiring the agent to integrate information into a coherent, evidence-supported final response.
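
The multi-round interaction described above can be sketched as a simple loop. This is not MCP-Bench's harness: `run_agent`, `scripted_decide`, and the toy `tools` registry are hypothetical names, and the scripted policy merely stands in for an LLM deciding which tools to call next.

```python
def run_agent(task, decide, tools, max_rounds=5):
    """Run a multi-round tool loop and return the call history.

    `decide` is a hypothetical policy: given the task and the history
    so far, it returns the next tool calls, or an empty list when done.
    """
    history = []
    for _ in range(max_rounds):
        calls = decide(task, history)
        if not calls:  # the policy signals it has enough evidence
            break
        for call in calls:
            output = tools[call["tool"]](**call["args"])
            history.append({"call": call, "output": output})
    return history

def scripted_decide(task, history):
    # Stand-in for an LLM: geocode first, then use that result.
    if not history:
        return [{"tool": "geocode", "args": {"place": "Yosemite"}}]
    if len(history) == 1:
        loc = history[0]["output"]
        return [{"tool": "forecast", "args": loc}]
    return []

# Toy tool registry with made-up return values.
tools = {
    "geocode": lambda place: {"lat": 37.87, "lon": -119.54},
    "forecast": lambda lat, lon: "clear",
}

history = run_agent("Plan a camping trip to Yosemite", scripted_decide, tools)
print([step["call"]["tool"] for step in history])
```

The recorded history is what an evaluator would later inspect: which tools were called, with which arguments, in which order, and what they returned.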

Agents are assessed on several critical aspects:

- Appropriateness of Tool Selection: Did the agent identify the correct tools for each component of the task?

- Accuracy of Input Parameters: Were the inputs to each tool complete and precise?

- Workflow Planning and Coordination: Did the agent effectively manage task dependencies and parallel operations?

- Evidence-Based Responses: Does the final output reference tool results directly, avoiding unsupported assertions?
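
The first two criteria lend themselves to automated, rule-based checks. The sketch below shows one simple way such checks could be computed from a log of tool calls. The function `score_calls`, the metric names, and the simplified schema format (a set of required parameter names per tool) are assumptions for illustration and do not reproduce MCP-Bench's actual scoring code.

```python
def score_calls(calls, required_params):
    """Compute two simple rule-based rates over a list of tool calls.

    `required_params` maps each known tool name to the set of parameter
    names it requires (a simplified, hypothetical schema format).
    """
    total = len(calls)
    if total == 0:
        return {"name_validity": 0.0, "param_completeness": 0.0}
    # A call has a valid name if the tool exists in the registry.
    known = [c for c in calls if c["tool"] in required_params]
    # A call is complete if every required parameter was supplied.
    complete = [
        c for c in known
        if required_params[c["tool"]] <= set(c["args"])
    ]
    return {
        "name_validity": len(known) / total,
        "param_completeness": len(complete) / total,
    }

required_params = {"geocode": {"place"}, "forecast": {"lat", "lon"}}
calls = [
    {"tool": "geocode", "args": {"place": "Yosemite"}},  # valid and complete
    {"tool": "forecast", "args": {"lat": 37.87}},        # missing "lon"
    {"tool": "weather_now", "args": {}},                 # unknown tool
]
print(score_calls(calls, required_params))
```

Checks like these cannot judge whether the plan was sensible or whether the final answer is grounded in tool outputs, which is why the benchmark pairs automated metrics with LLM-based judges.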

Insights from MCP-Bench Evaluations

Testing 20 leading LLMs across 104 diverse tasks revealed several key trends:

- Competent Basic Tool Usage: Most models successfully invoked tools and handled complex parameter schemas, even in specialized domains.

- Challenges in Complex Planning: Models struggled with extended, multi-step workflows that required nuanced decisions about sequencing, parallelism, and error handling.

- Performance Gap for Smaller Models: As task complexity increased, especially when spanning multiple servers, smaller models were prone to errors, redundant actions, or overlooked subtasks.

- Varied Efficiency: Some agents required significantly more tool calls and interaction rounds to complete tasks, indicating inefficiencies in their planning and execution strategies.

- Human Oversight Remains Vital: Although task generation is automated, human validation is still needed to confirm that tasks are realistic and solvable, underscoring the ongoing need for expert involvement in evaluation.

Significance of MCP-Bench in AI Development

MCP-Bench offers a pragmatic framework to measure how effectively AI agents can function as “digital assistants” in real-world environments, where instructions are often imprecise and solutions depend on synthesizing information from multiple sources. By highlighting current limitations in planning, cross-domain reasoning, and evidence-based synthesis, this benchmark provides valuable insights for advancing AI deployment in business, scientific research, and specialized professional fields.

Conclusion

As a comprehensive, large-scale evaluation platform, MCP-Bench challenges AI agents with authentic tools and tasks, avoiding artificial shortcuts. It reveals both the strengths and weaknesses of today’s models, serving as an essential reference point for developers and researchers aiming to build more capable and reliable AI assistants.