In this tutorial, we demonstrate how to build a multi-step, intelligent query-handling agent using LangGraph and Gemini 1.5 Flash. The core idea is to structure AI reasoning as a stateful workflow: an incoming query passes through a series of purposeful nodes for routing, analysis, research, response generation, and validation. Each node is a functional block with a well-defined role, so the agent's reasoning is broken into explicit, inspectable steps instead of a single prompt-and-reply. Using LangGraph's StateGraph, we orchestrate these nodes into a loop that re-analyzes and refines the output until a validator judges the response complete or a maximum number of iterations is reached.
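Before any LLM is involved, the control flow can be modeled in a few lines of plain Python. This is a minimal sketch, not LangGraph code: `walk_graph`, `needs_research`, and `is_complete` are hypothetical names, the two callables are stubs standing in for Gemini's decisions, and it assumes one iteration is counted per full analyzer-to-validator pass.

```python
from typing import Callable, List


def walk_graph(
    needs_research: Callable[[int], bool],
    is_complete: Callable[[int], bool],
    max_iterations: int = 3,
) -> List[str]:
    """Return the sequence of node names visited for one query (stubbed decisions)."""
    path = ["router"]  # the router runs once, at the entry point
    iteration = 0
    while True:
        path.append("analyzer")
        # Conditional edge: optionally detour through the researcher
        if needs_research(iteration):
            path.append("researcher")
        path.append("responder")
        path.append("validator")
        iteration += 1  # one full pass has finished
        # Stop on the iteration cap or when the (stubbed) validator is satisfied
        if iteration >= max_iterations or is_complete(iteration):
            return path
```

For example, `walk_graph(lambda i: i == 0, lambda i: i >= 2)` researches only on the first pass and stops after the second validation. The iteration cap is what guarantees termination even if the validator never reports completion.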

```
!pip install langgraph langchain-google-genai python-dotenv
```

First, the command `!pip install langgraph langchain-google-genai python-dotenv` installs three Python packages essential for building intelligent agent workflows. `langgraph` enables graph-based orchestration of AI agents, `langchain-google-genai` provides integration with Google's Gemini models, and `python-dotenv` loads environment variables from `.env` files so that secrets need not be hard-coded.

```python
import os
from dataclasses import dataclass
from typing import Any, Dict

# Message classes live in langchain_core, which langchain-google-genai installs
from langchain_core.messages import HumanMessage, SystemMessage
from langchain_google_genai import ChatGoogleGenerativeAI
from langgraph.graph import END, StateGraph

# Replace the placeholder with your own Gemini API key
os.environ["GOOGLE_API_KEY"] = "Use Your API Key Here"
```

We import the modules the agent needs: `ChatGoogleGenerativeAI` for calling Gemini models, `StateGraph` and `END` for defining the workflow graph and its shared state, and `SystemMessage`/`HumanMessage` for building prompts. The line `os.environ["GOOGLE_API_KEY"] = "Use Your API Key Here"` stores the API key in an environment variable, which the Gemini client reads to authenticate and generate responses.
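Hard-coding the key works in a throwaway notebook, but a small guard catches the common mistake of running with the placeholder still in place. The helper below is a hypothetical addition, not part of the tutorial: `get_google_api_key` reads `GOOGLE_API_KEY` and raises if it is missing or still equals the placeholder string.

```python
import os

# The placeholder string used in this tutorial's setup cell
PLACEHOLDER = "Use Your API Key Here"


def get_google_api_key(env_var: str = "GOOGLE_API_KEY") -> str:
    """Return the Gemini API key from the environment, failing loudly if unset."""
    # Strip stray whitespace that often sneaks in when pasting keys
    key = os.environ.get(env_var, "").strip()
    if not key or key == PLACEHOLDER:
        raise RuntimeError(
            f"{env_var} is not set; export it or load it from a .env file first."
        )
    return key
```

If you use python-dotenv, call `load_dotenv()` (from the `dotenv` package) before this helper so that values from `.env` are visible in `os.environ`.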

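```python
@dataclass
class AgentState:
    """State shared across all nodes in the graph"""

    query: str = ""          # the user's original question
    context: str = ""        # router output plus accumulated research/validation notes
    analysis: str = ""       # latest analyzer output
    response: str = ""       # latest generated answer
    next_action: str = ""    # "research" or "respond", set by the analyzer
    iteration: int = 0       # loop counter compared against max_iterations
    max_iterations: int = 3  # upper bound on refinement passes
```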

This AgentState dataclass defines the shared state that persists across different nodes in a LangGraph workflow. It tracks key fields, including the user’s query, retrieved context, any analysis performed, the generated response, and the recommended next action. It also includes an iteration counter and a max_iterations limit to control how many times the workflow can loop, enabling iterative reasoning or decision-making by the agent.
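LangGraph nodes do not return a whole new `AgentState`; they return a dict containing only the fields they changed, and by default each returned value overwrites the previous one. The sketch below imitates that merge with `dataclasses.replace` to illustrate the semantics; it is not LangGraph's actual implementation, and `apply_update` is a hypothetical helper.

```python
from dataclasses import dataclass, replace
from typing import Any, Dict


@dataclass
class AgentState:
    query: str = ""
    context: str = ""
    analysis: str = ""
    response: str = ""
    next_action: str = ""
    iteration: int = 0
    max_iterations: int = 3


def apply_update(state: AgentState, update: Dict[str, Any]) -> AgentState:
    """Overwrite only the fields a node returned (last write wins)."""
    return replace(state, **update)


# Simulate the router, then the analyzer, each returning a partial update
state = AgentState(query="Explain quantum computing")
state = apply_update(state, {"context": "factual question"})
state = apply_update(state, {"analysis": "needs research", "next_action": "research"})
```

Overwrite semantics are why the researcher node builds its new `context` from `state.context` plus the research text: returning only the research would discard what the router wrote.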


```python
class GraphAIAgent:
    def __init__(self, api_key: str = None):
        if api_key:
            os.environ["GOOGLE_API_KEY"] = api_key

        # Higher temperature for routing, research, and answer generation.
        # If this model name is no longer served, substitute a current Gemini model.
        self.llm = ChatGoogleGenerativeAI(
            model="gemini-1.5-flash",
            temperature=0.7,
            convert_system_message_to_human=True,
        )
        # Lower temperature for the more deterministic analysis and validation steps
        self.analyzer = ChatGoogleGenerativeAI(
            model="gemini-1.5-flash",
            temperature=0.3,
            convert_system_message_to_human=True,
        )
        self.graph = self._build_graph()

    def _build_graph(self):
        """Build and compile the LangGraph workflow."""
        workflow = StateGraph(AgentState)

        workflow.add_node("router", self._router_node)
        workflow.add_node("analyzer", self._analyzer_node)
        workflow.add_node("researcher", self._researcher_node)
        workflow.add_node("responder", self._responder_node)
        workflow.add_node("validator", self._validator_node)

        workflow.set_entry_point("router")
        workflow.add_edge("router", "analyzer")
        # The analyzer chooses between extra research and answering directly
        workflow.add_conditional_edges(
            "analyzer",
            self._decide_next_step,
            {"research": "researcher", "respond": "responder"},
        )
        workflow.add_edge("researcher", "responder")
        workflow.add_edge("responder", "validator")
        # The validator either loops back for another pass or ends the run
        workflow.add_conditional_edges(
            "validator",
            self._should_continue,
            {"continue": "analyzer", "end": END},
        )
        return workflow.compile()

    def _router_node(self, state: AgentState) -> Dict[str, Any]:
        """Route and categorize the incoming query."""
        system_msg = """You are a query router. Analyze the user's query and provide context.
Determine if this is a factual question, creative request, problem-solving task, or analysis."""
        messages = [
            SystemMessage(content=system_msg),
            HumanMessage(content=f"Query: {state.query}"),
        ]
        response = self.llm.invoke(messages)
        return {"context": response.content}

    def _analyzer_node(self, state: AgentState) -> Dict[str, Any]:
        """Analyze the query and determine the approach."""
        system_msg = """Analyze the query and context. Determine if additional research is needed
or if you can provide a direct response. Be thorough in your analysis."""
        messages = [
            SystemMessage(content=system_msg),
            HumanMessage(content=f"""
Query: {state.query}
Context: {state.context}
Previous Analysis: {state.analysis}
"""),
        ]
        response = self.analyzer.invoke(messages)
        analysis = response.content
        # Simple keyword heuristic: any mention of research or missing
        # information routes the query to the researcher node
        lowered = analysis.lower()
        if "research" in lowered or "more information" in lowered:
            next_action = "research"
        else:
            next_action = "respond"
        return {"analysis": analysis, "next_action": next_action}

    def _researcher_node(self, state: AgentState) -> Dict[str, Any]:
        """Conduct additional research or information gathering."""
        system_msg = """You are a research assistant. Based on the analysis, gather relevant
information and insights to help answer the query comprehensively."""
        messages = [
            SystemMessage(content=system_msg),
            HumanMessage(content=f"""
Query: {state.query}
Analysis: {state.analysis}
Research focus: Provide detailed information relevant to the query.
"""),
        ]
        response = self.llm.invoke(messages)
        # Append to the existing context so the router's output is preserved
        updated_context = f"{state.context}\n\nResearch: {response.content}"
        return {"context": updated_context}

    def _responder_node(self, state: AgentState) -> Dict[str, Any]:
        """Generate the final response."""
        system_msg = """You are a helpful AI assistant. Provide a comprehensive, accurate,
and well-structured response based on the analysis and context provided."""
        messages = [
            SystemMessage(content=system_msg),
            HumanMessage(content=f"""
Query: {state.query}
Context: {state.context}
Analysis: {state.analysis}
Provide a complete and helpful response.
"""),
        ]
        response = self.llm.invoke(messages)
        return {"response": response.content}

    def _validator_node(self, state: AgentState) -> Dict[str, Any]:
        """Validate the response quality and completeness."""
        system_msg = """Evaluate if the response adequately answers the query.
Return 'COMPLETE' if satisfactory, or 'NEEDS_IMPROVEMENT' if more work is needed."""
        messages = [
            SystemMessage(content=system_msg),
            HumanMessage(content=f"""
Original Query: {state.query}
Response: {state.response}
Is this response complete and satisfactory?
"""),
        ]
        response = self.analyzer.invoke(messages)
        validation = response.content
        return {
            "context": f"{state.context}\n\nValidation: {validation}",
            # Count one completed analyze/respond/validate pass here, since the
            # loop returns to the analyzer and never revisits the router
            "iteration": state.iteration + 1,
        }

    def _decide_next_step(self, state: AgentState) -> str:
        """Decide whether to research or respond directly."""
        return state.next_action

    def _should_continue(self, state: AgentState) -> str:
        """Decide whether to continue iterating or end."""
        if state.iteration >= state.max_iterations:
            return "end"
        # Inspect only the most recent verdict, not earlier ones kept in context
        latest = state.context.rsplit("Validation:", 1)[-1]
        if "NEEDS_IMPROVEMENT" in latest:
            return "continue"
        # 'COMPLETE' or an unrecognized verdict ends the run
        return "end"

    def run(self, query: str) -> str:
        """Run the agent with a query and return the final response."""
        # The compiled graph takes a dict of initial field values
        # and returns the final state as a dict
        result = self.graph.invoke({"query": query})
        return result["response"]
```

The GraphAIAgent class defines a LangGraph-based AI workflow using Gemini models to iteratively analyze, research, respond, and validate answers to user queries. It utilizes modular nodes, such as router, analyzer, researcher, responder, and validator, to reason through complex tasks, refining responses through controlled iterations.
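The routing decisions in `_analyzer_node` and `_should_continue` rely on substring checks, which are easy to trip: an analysis saying "no further research is needed" still contains "research". One hedged alternative, shown here as a stand-alone sketch, is to ask the model to end its reply with an explicit label line and parse only that. The `DECISION:` convention and the `parse_decision` helper are assumptions of this sketch, not part of the tutorial's prompts.

```python
import re
from typing import Iterable


def parse_decision(text: str, allowed: Iterable[str], default: str) -> str:
    """Return the label from the last 'DECISION: <label>' line, else `default`.

    Matching is case-insensitive; labels outside `allowed` are ignored.
    """
    allowed_set = {label.lower() for label in allowed}
    # Only whole lines of the form 'DECISION: WORD' count; \w also matches '_'
    matches = re.findall(
        r"^\s*DECISION:\s*(\w+)\s*$", text, flags=re.IGNORECASE | re.MULTILINE
    )
    # Prefer the last valid label in case the model restates its decision
    for label in reversed(matches):
        if label.lower() in allowed_set:
            return label.lower()
    return default
```

To use it, you would append an instruction such as "End with a line 'DECISION: RESEARCH' or 'DECISION: RESPOND'." to the analyzer's system prompt and replace the keyword check with `parse_decision(analysis, ["research", "respond"], "respond")`. The validator could be handled the same way with `COMPLETE` and `NEEDS_IMPROVEMENT`. Falling back to a default keeps the graph moving when the model ignores the format.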

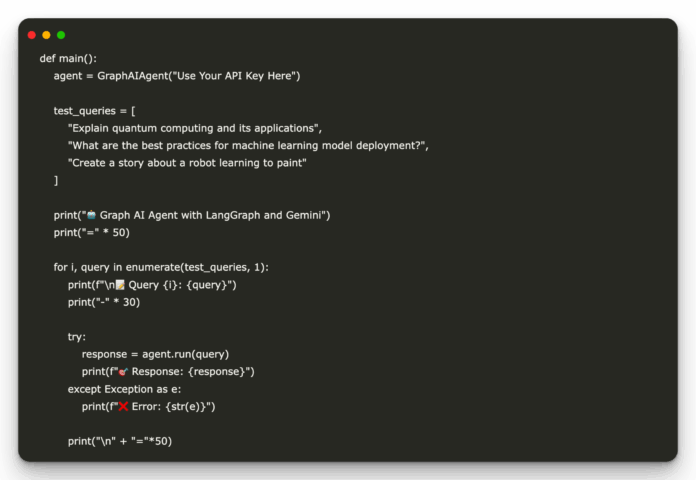

```python
def main():
    # Pass your Gemini API key here (or set GOOGLE_API_KEY and call GraphAIAgent())
    agent = GraphAIAgent("Use Your API Key Here")

    test_queries = [
        "Explain quantum computing and its applications",
        "What are the best practices for machine learning model deployment?",
        "Create a story about a robot learning to paint",
    ]

    print("Graph AI Agent with LangGraph and Gemini")
    print("=" * 50)

    for i, query in enumerate(test_queries, 1):
        print(f"\nQuery {i}: {query}")
        print("-" * 30)
        try:
            response = agent.run(query)
            print(f"Response: {response}")
        except Exception as e:
            # Report the failure and move on to the next query
            print(f"Error: {str(e)}")
        print("\n" + "=" * 50)


if __name__ == "__main__":
    main()
```

Finally, the main() function initializes the GraphAIAgent with a Gemini API key and runs it on a set of test queries covering technical, strategic, and creative tasks. It prints each query and the AI-generated response, showcasing how the LangGraph-driven agent processes diverse types of input using Gemini’s reasoning and generation capabilities.
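`main()` prints each answer as it arrives. If you want to compare runs or save them, a small variation can collect the responses instead. The sketch below is hypothetical: `run_batch` accepts any callable shaped like `agent.run` (a query string in, a response string out), so it can be exercised with a stub before spending API calls.

```python
from typing import Callable, Dict, List


def run_batch(run: Callable[[str], str], queries: List[str]) -> Dict[str, str]:
    """Run each query, recording either the response or an 'Error: ...' string."""
    results: Dict[str, str] = {}
    for query in queries:
        try:
            results[query] = run(query)
        except Exception as exc:  # keep going after a failure, as main() does
            results[query] = f"Error: {exc}"
    return results
```

With the agent from `main()`, this would be called as `results = run_batch(agent.run, test_queries)`; the returned dict preserves the query order.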

In conclusion, by combining LangGraph's structured state machine with Gemini's conversational abilities, this agent shows how to organize an LLM workflow as an explicit cycle of routing, analysis, research, response, and validation, loosely mirroring how a person investigates a question and then checks the answer. The tutorial provides a modular and extensible template for building AI agents that handle a range of tasks, from answering complex queries to generating creative content.
