Comprehensive Guide to Hierarchical Bayesian Regression Using NumPyro

This article presents a detailed walkthrough of hierarchical Bayesian regression implemented with NumPyro, illustrating the entire modeling pipeline from data creation to inference and validation. We begin by synthesizing multi-level data that reflects both overarching trends and subgroup-specific deviations. Next, we construct a probabilistic model that captures these hierarchical structures, followed by sampling from the posterior using the No-U-Turn Sampler (NUTS). Finally, we evaluate model performance through posterior summaries and predictive diagnostics, gaining insights into the model’s ability to represent complex data patterns.

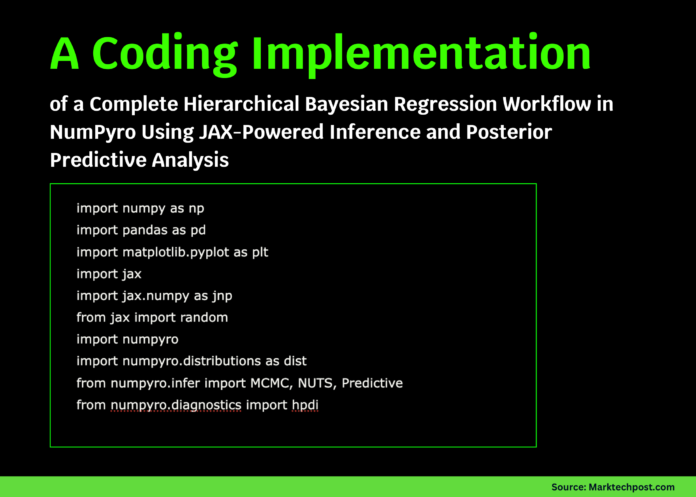

Setting Up the Environment for Bayesian Hierarchical Modeling

To start, we ensure that the necessary libraries are installed and imported. NumPyro, built on JAX, provides a powerful framework for scalable Bayesian inference, while supporting libraries like NumPy, Pandas, and Matplotlib facilitate data manipulation and visualization. This setup guarantees a smooth workflow for hierarchical modeling tasks.

try:

import numpyro

except ImportError:

!pip install -q "llvmlite>=0.45.1" "numpyro[cpu]" matplotlib pandas

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import jax

import jax.numpy as jnp

from jax import random

import numpyro

import numpyro.distributions as dist

from numpyro.infer import MCMC, NUTS, Predictive

from numpyro.diagnostics import hpdi

numpyro.set_host_device_count(1)

Simulating Hierarchical Data with Group-Level Variability

We generate synthetic data that mimics real-world scenarios where observations are nested within groups, each exhibiting unique intercepts and slopes. This approach models both the global regression parameters and the variability across groups, providing a realistic testbed for hierarchical inference.

def create_synthetic_data(key, num_groups=8, samples_per_group=40):

k1, k2, k3, k4 = random.split(key, 4)

base_intercept = 1.0

base_slope = 0.6

intercept_sd_group = 0.8

slope_sd_group = 0.5

noise_sd = 0.7

group_indices = np.repeat(np.arange(num_groups), samples_per_group)

total_samples = num_groups * samples_per_group

intercept_offsets = random.normal(k1, (num_groups,)) * intercept_sd_group

slope_offsets = random.normal(k2, (num_groups,)) * slope_sd_group

predictors = random.normal(k3, (total_samples,)) * 2.0

noise = random.normal(k4, (total_samples,)) * noise_sd

intercepts = base_intercept + intercept_offsets[group_indices]

slopes = base_slope + slope_offsets[group_indices]

responses = intercepts + slopes * predictors + noise

data_frame = pd.DataFrame({

"response": np.array(responses),

"predictor": np.array(predictors),

"group": group_indices

})

true_params = {

"base_intercept": base_intercept,

"base_slope": base_slope,

"intercept_sd_group": intercept_sd_group,

"slope_sd_group": slope_sd_group,

"noise_sd": noise_sd

}

return data_frame, true_params

key = random.PRNGKey(0)

data, true_values = create_synthetic_data(key)

x = jnp.array(data["predictor"].values)

y = jnp.array(data["response"].values)

group_ids = jnp.array(data["group"].values)

num_groups = int(data["group"].nunique())

Formulating the Hierarchical Regression Model

Our model incorporates global parameters for intercept and slope means, along with group-specific deviations modeled as random effects. We assign weakly informative priors to these parameters to allow the data to inform the posterior while maintaining regularization. The observation noise is modeled with an exponential prior to ensure positivity.

def hierarchical_bayesian_model(x, group_idx, n_groups, y=None):

mu_intercept = numpyro.sample("mu_intercept", dist.Normal(0.0, 5.0))

mu_slope = numpyro.sample("mu_slope", dist.Normal(0.0, 5.0))

sigma_intercept = numpyro.sample("sigma_intercept", dist.HalfCauchy(2.0))

sigma_slope = numpyro.sample("sigma_slope", dist.HalfCauchy(2.0))

with numpyro.plate("groups", n_groups):

intercept_group = numpyro.sample("intercept_group", dist.Normal(mu_intercept, sigma_intercept))

slope_group = numpyro.sample("slope_group", dist.Normal(mu_slope, sigma_slope))

sigma_noise = numpyro.sample("sigma_noise", dist.Exponential(1.0))

intercepts = intercept_group[group_idx]

slopes = slope_group[group_idx]

mean_response = intercepts + slopes * x

with numpyro.plate("observations", x.shape[0]):

numpyro.sample("obs", dist.Normal(mean_response, sigma_noise), obs=y)

nuts_kernel = NUTS(hierarchical_bayesian_model, target_accept_prob=0.9)

mcmc_sampler = MCMC(nuts_kernel, num_warmup=1000, num_samples=1000, num_chains=1, progress_bar=True)

mcmc_sampler.run(random.PRNGKey(1), x=x, group_idx=group_ids, n_groups=num_groups, y=y)

posterior_samples = mcmc_sampler.get_samples()

Summarizing Posterior Estimates and Conducting Predictive Checks

We extract key posterior summaries such as means and 90% highest posterior density intervals (HPDIs) to quantify uncertainty around parameter estimates. Posterior predictive checks are performed by simulating new data from the posterior predictive distribution, allowing us to visually assess how well the model reproduces observed patterns within a selected group.

def summarize_parameters(samples_array):

samples_array = np.asarray(samples_array)

mean_val = samples_array.mean()

lower, upper = hpdi(samples_array, prob=0.9)

return mean_val, float(lower), float(upper)

parameters_of_interest = ["mu_intercept", "mu_slope", "sigma_intercept", "sigma_slope", "sigma_noise"]

for param in parameters_of_interest:

mean_est, low_ci, high_ci = summarize_parameters(posterior_samples[param])

print(f"{param}: mean={mean_est:.3f}, 90% HPDI=[{low_ci:.3f}, {high_ci:.3f}]")

predictive_model = Predictive(hierarchical_bayesian_model, posterior_samples, return_sites=["obs"])

ppc_samples = predictive_model(random.PRNGKey(2), x=x, group_idx=group_ids, n_groups=num_groups)

y_simulated = np.asarray(ppc_samples["obs"])

selected_group = 0

group_mask = data["group"].values == selected_group

x_group = data.loc[group_mask, "predictor"].values

y_group = data.loc[group_mask, "response"].values

y_sim_group = y_simulated[:, group_mask]

sorted_indices = np.argsort(x_group)

x_sorted = x_group[sorted_indices]

y_sim_sorted = y_sim_group[:, sorted_indices]

median_pred = np.median(y_sim_sorted, axis=0)

lower_pred, upper_pred = np.percentile(y_sim_sorted, [5, 95], axis=0)

plt.figure(figsize=(8, 5))

plt.scatter(x_group, y_group, label="Observed Data")

plt.plot(x_sorted, median_pred, color="red", label="Median Prediction")

plt.fill_between(x_sorted, lower_pred, upper_pred, color="red", alpha=0.3, label="90% Predictive Interval")

plt.xlabel("Predictor")

plt.ylabel("Response")

plt.title(f"Posterior Predictive Check for Group {selected_group}")

plt.legend()

plt.show()

Visualizing Group-Level Parameter Estimates

To better understand how the model captures group-specific effects, we plot the posterior means of intercepts and slopes for each group alongside the true values used in data generation. This comparison highlights the model’s ability to recover underlying hierarchical structure and adapt to group heterogeneity.

mean_intercepts = np.asarray(posterior_samples["intercept_group"]).mean(axis=0)

mean_slopes = np.asarray(posterior_samples["slope_group"]).mean(axis=0)

fig, axs = plt.subplots(1, 2, figsize=(12, 4))

axs[0].bar(range(num_groups), mean_intercepts, color="skyblue")

axs[0].axhline(true_values["base_intercept"], color="black", linestyle="--", label="True Intercept")

axs[0].set_title("Estimated Group Intercepts")

axs[0].set_xlabel("Group")

axs[0].set_ylabel("Intercept")

axs[0].legend()

axs[1].bar(range(num_groups), mean_slopes, color="lightgreen")

axs[1].axhline(true_values["base_slope"], color="black", linestyle="--", label="True Slope")

axs[1].set_title("Estimated Group Slopes")

axs[1].set_xlabel("Group")

axs[1].set_ylabel("Slope")

axs[1].legend()

plt.tight_layout()

plt.show()

Final Thoughts on Hierarchical Bayesian Modeling with NumPyro

This tutorial demonstrated how NumPyro facilitates the construction and inference of hierarchical Bayesian regression models with clarity and computational efficiency. By leveraging JAX’s automatic differentiation and NumPyro’s flexible modeling syntax, we obtained posterior distributions that reveal both global trends and group-specific nuances. Posterior predictive checks confirmed the model’s capacity to replicate observed data patterns, reinforcing confidence in its applicability to complex, multilevel datasets. This framework empowers analysts and researchers to embrace Bayesian hierarchical modeling for richer, more nuanced data analysis.