Harnessing Self-Supervised Learning with SimCLR: A Comprehensive Guide

This tutorial explores self-supervised learning with the SimCLR framework. We first build a SimCLR model that learns image representations from unlabeled data. Next, we generate embeddings and visualize them with the dimensionality reduction techniques UMAP and t-SNE. We then use coreset selection to curate a subset for labeling, simulate an active learning scenario, and evaluate the learned representations (a form of transfer learning) with a linear probe. The walkthrough runs in Google Colab. There we train the model, visualize the embeddings, and compare coreset-based sampling against random selection. The comparison shows how self-supervised representations can improve data efficiency and accuracy when labels are scarce.

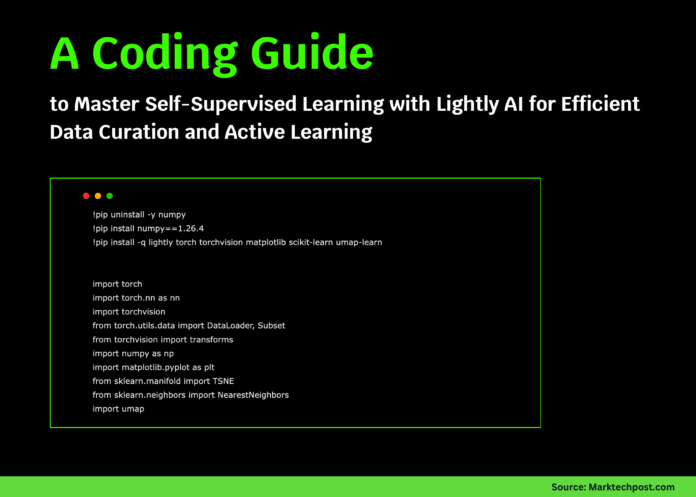

Environment Setup and Library Installation

We begin by configuring the environment. We pin NumPy to version 1.26.4 for compatibility and install Lightly, PyTorch, torchvision, matplotlib, scikit-learn, and umap-learn. We then import the modules needed for model construction, training, and visualization. Finally, we print the PyTorch version and whether CUDA is available for GPU acceleration.

!pip uninstall -y numpy
!pip install numpy==1.26.4
!pip install -q lightly torch torchvision matplotlib scikit-learn umap-learn
# In Colab, restart the runtime after these installs so the pinned NumPy version is the one imported

import torch
import torch.nn as nn
import torchvision
from torch.utils.data import DataLoader, Subset
from torchvision import transforms
import numpy as np
import matplotlib.pyplot as plt
from sklearn.manifold import TSNE
import umap
from lightly.loss import NTXentLoss
from lightly.models.modules import SimCLRProjectionHead
from lightly.transforms import SimCLRTransform
from lightly.data import LightlyDataset

print(f"PyTorch version: {torch.__version__}")
print(f"CUDA available: {torch.cuda.is_available()}")

Building the SimCLR Model Architecture

Our SimCLR model employs a ResNet backbone to extract visual features without supervision. We replace the original classification head with an identity layer and append a projection head that maps features into a contrastive embedding space. Additionally, the model includes a method to extract raw backbone features, which is useful for downstream tasks such as embedding visualization and linear evaluation.
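The split between backbone features and projections can be seen in a framework-free sketch. The NumPy forward pass below assumes a 512-dimensional backbone output, as produced by ResNet-18 once its fc layer is replaced by an identity. It also assumes a head of the form Linear, ReLU, Linear, which is how the original SimCLR paper describes the projection head. Lightly's SimCLRProjectionHead may differ in details such as batch normalization and initialization, so treat the layer layout and weights here as illustrative assumptions. `init_projection_head` and `project` are hypothetical helper names.

```python
import numpy as np

def init_projection_head(in_dim=512, hidden_dim=512, out_dim=128, seed=0):
    """Randomly initialize a two-layer MLP head (illustrative, not Lightly's initialization)."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(0.0, np.sqrt(2.0 / in_dim), size=(in_dim, hidden_dim)),
        "b1": np.zeros(hidden_dim),
        "W2": rng.normal(0.0, np.sqrt(2.0 / hidden_dim), size=(hidden_dim, out_dim)),
        "b2": np.zeros(out_dim),
    }

def project(features, params):
    """Map backbone features h to contrastive embeddings z = W2 * ReLU(W1 * h + b1) + b2."""
    hidden = np.maximum(features @ params["W1"] + params["b1"], 0.0)  # ReLU
    return hidden @ params["W2"] + params["b2"]

# Stand-in for 4 ResNet-18 feature vectors (what extract_features would return)
h = np.random.default_rng(1).normal(size=(4, 512))
z = project(h, init_projection_head())           # what forward would return
print(h.shape, "->", z.shape)  # (4, 512) -> (4, 128)
```

The split matters downstream. The tutorial's visualization and linear probe use the 512-dimensional backbone features, not the 128-dimensional projections that the contrastive loss sees.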

class SimCLRModel(nn.Module):
    """SimCLR architecture with ResNet backbone and projection head."""

    def __init__(self, backbone, hidden_dim=512, out_dim=128):
        super().__init__()
        self.backbone = backbone
        # Drop the classification layer so the backbone outputs its 512-d pooled features (ResNet-18)
        self.backbone.fc = nn.Identity()
        self.projection_head = SimCLRProjectionHead(
            input_dim=512, hidden_dim=hidden_dim, output_dim=out_dim
        )

    def forward(self, x):
        features = self.backbone(x).flatten(start_dim=1)
        projections = self.projection_head(features)
        return projections

    def extract_features(self, x):
        """Obtain backbone features without projection."""
        with torch.no_grad():
            return self.backbone(x).flatten(start_dim=1)

Loading and Preparing the CIFAR-10 Dataset

We load the CIFAR-10 dataset with two different transformations, one for self-supervised training and one for evaluation. For self-supervised learning, each image is turned into two randomly augmented views, which form the positive pair for contrastive learning. For evaluation, images are only converted to tensors and normalized with commonly used per-channel CIFAR-10 statistics, so downstream tasks see un-augmented inputs. The model must match the two views of each image despite the random augmentations, and so it learns representations that are invariant to those transformations.
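The key property of the SSL transform is that every call produces two independently augmented views of the same image. The NumPy sketch below illustrates that idea with a deliberately simplified pipeline: a padded random crop, a horizontal flip, and brightness jitter. It is a stand-in built on assumptions, not Lightly's SimCLRTransform, which applies stronger augmentations such as random resized crops, color jitter, random grayscale, and Gaussian blur. `augment` and `two_views` are hypothetical names.

```python
import numpy as np

def augment(img, rng, pad=4, max_brightness=0.2):
    """Return one randomly augmented copy of an HxWxC float image with values in [0, 1]."""
    h, w, _ = img.shape
    # Random crop after reflect-padding keeps the output size unchanged
    padded = np.pad(img, ((pad, pad), (pad, pad), (0, 0)), mode="reflect")
    top, left = rng.integers(0, 2 * pad + 1, size=2)
    out = padded[top:top + h, left:left + w]
    # Horizontal flip with probability 0.5
    if rng.random() < 0.5:
        out = out[:, ::-1]
    # Global brightness shift, clipped back to the valid range
    out = out + rng.uniform(-max_brightness, max_brightness)
    return np.clip(out, 0.0, 1.0)

def two_views(img, rng):
    """Two independent augmentations of the same image form a positive pair."""
    return augment(img, rng), augment(img, rng)

rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))      # stand-in for one CIFAR-10 image
v1, v2 = two_views(image, rng)
print(v1.shape, v2.shape)  # (32, 32, 3) (32, 32, 3)
```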

class SSLDataset(torch.utils.data.Dataset):
    """Wraps a dataset so each item is (list_of_augmented_views, label)."""

    # Defined at module level so DataLoader worker processes can pickle it on
    # platforms that use the 'spawn' start method (e.g. Windows, macOS)
    def __init__(self, dataset, transform):
        self.dataset = dataset
        self.transform = transform

    def __len__(self):
        return len(self.dataset)

    def __getitem__(self, idx):
        img, label = self.dataset[idx]
        return self.transform(img), label


def load_dataset(train=True):
    """Load CIFAR-10 with separate transforms for SSL and evaluation."""
    # SimCLRTransform returns two independently augmented views of each PIL image
    ssl_transform = SimCLRTransform(input_size=32, cj_prob=0.8)
    eval_transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
    ])
    # No transform here: SSLDataset applies ssl_transform to the raw PIL images
    base_dataset = torchvision.datasets.CIFAR10(
        root='./data', train=train, download=True
    )
    ssl_dataset = SSLDataset(base_dataset, ssl_transform)
    eval_dataset = torchvision.datasets.CIFAR10(
        root='./data', train=train, download=True, transform=eval_transform
    )
    return ssl_dataset, eval_dataset

Training the SimCLR Model with Contrastive Loss

We train the SimCLR model with the NT-Xent (normalized temperature-scaled cross-entropy) loss at a temperature of 0.5. The loss pulls the two augmented views of each image together in the embedding space and pushes apart views of different images in the same batch. The optimizer is stochastic gradient descent (SGD) with momentum 0.9 and weight decay 5e-4, a standard setup that helps keep training stable. The loss is printed every 50 batches and averaged at the end of each epoch to monitor progress.
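The loss can be made concrete with a minimal NumPy sketch of NT-Xent for a batch of N positive pairs. All 2N embeddings are L2-normalized and their cosine similarities are divided by the temperature. Each sample's similarity with itself is masked out. The loss is then the cross-entropy of picking the matching view among the remaining 2N - 1 candidates, averaged over all 2N anchors. This is the standard SimCLR formulation, written out to show the math. It is not the source of Lightly's NTXentLoss, and `nt_xent` is a hypothetical name.

```python
import numpy as np

def nt_xent(z1, z2, temperature=0.5):
    """NT-Xent loss for N positive pairs (z1[i], z2[i]); returns the mean over 2N anchors."""
    n = z1.shape[0]
    z = np.concatenate([z1, z2], axis=0)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)   # unit vectors, so dot product = cosine
    logits = z @ z.T / temperature                      # (2N, 2N) scaled similarities
    np.fill_diagonal(logits, -np.inf)                   # a sample is never its own candidate
    # The positive for anchor i is its other view: i + N in the first half, i - N in the second
    pos = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    # Numerically stable log-sum-exp over each row
    row_max = logits.max(axis=1, keepdims=True)
    log_denom = row_max[:, 0] + np.log(np.exp(logits - row_max).sum(axis=1))
    log_prob_pos = logits[np.arange(2 * n), pos] - log_denom
    return float(-log_prob_pos.mean())

# Two orthogonal images, each with identical views, T = 0.5: every anchor has its
# positive at logit 2 and two negatives at logit 0, so the loss is log(e^2 + 2) - 2
views = np.eye(2)
print(round(nt_xent(views, views), 4))  # 0.2395
```

Swapping the views so that each anchor's "positive" is the other image raises the loss to log(e^2 + 2), which is the behavior the training loop relies on.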

def train_ssl_model(model, dataloader, epochs=5, device='cuda'):
    """Train SimCLR model using NT-Xent contrastive loss."""
    model.to(device)
    criterion = NTXentLoss(temperature=0.5)
    optimizer = torch.optim.SGD(model.parameters(), lr=0.06, momentum=0.9, weight_decay=5e-4)
    print("=== Starting Self-Supervised Training ===")
    for epoch in range(epochs):
        model.train()
        total_loss = 0
        for batch_idx, batch in enumerate(dataloader):
            views = batch[0]
            view1, view2 = views[0].to(device), views[1].to(device)
            z1 = model(view1)
            z2 = model(view2)
            loss = criterion(z1, z2)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            total_loss += loss.item()
            if batch_idx % 50 == 0:
                print(f"Epoch {epoch+1}/{epochs} | Batch {batch_idx} | Loss: {loss.item():.4f}")
        avg_loss = total_loss / len(dataloader)
        print(f"Epoch {epoch+1} completed | Average Loss: {avg_loss:.4f}")
    return model

Embedding Generation and Visualization Techniques

After training, we extract backbone feature embeddings (taken before the projection head) for every sample in a dataset. We then project these embeddings to two dimensions with UMAP or t-SNE, using cosine distance, to check whether images of the same class cluster together. When more than 5,000 embeddings are available, we randomly subsample 5,000 points, which keeps the plot readable and the dimensionality reduction fast.
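UMAP and t-SNE are nonlinear and can be slow, so a quick linear projection can serve as a sanity check before running them. The NumPy sketch below mirrors the tutorial's subsampling step, drawing at most n_samples points without replacement. It then projects them to 2-D with PCA computed via SVD. PCA is a swapped-in technique that the tutorial itself does not use, and `subsample_and_project` is a hypothetical helper. Rows are unit-normalized first, so Euclidean geometry tracks the cosine metric used by the UMAP and t-SNE reducers.

```python
import numpy as np

def subsample_and_project(embeddings, labels, n_samples=5000, seed=0):
    """Randomly subsample (without replacement), then project to 2-D with PCA."""
    rng = np.random.default_rng(seed)
    if len(embeddings) > n_samples:
        idx = rng.choice(len(embeddings), n_samples, replace=False)
        embeddings, labels = embeddings[idx], labels[idx]
    # Unit-normalize rows so Euclidean distances track cosine similarity
    x = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    x = x - x.mean(axis=0)                           # PCA requires centered data
    _, _, vt = np.linalg.svd(x, full_matrices=False)
    return x @ vt[:2].T, labels                      # coordinates on the top-2 principal axes

emb = np.random.default_rng(1).normal(size=(6000, 512))   # stand-in for backbone embeddings
lab = np.arange(6000) % 10
coords, lab_sub = subsample_and_project(emb, lab)
print(coords.shape, lab_sub.shape)  # (5000, 2) (5000,)
```

The returned coordinates can be passed to plt.scatter in the same way as embeddings_2d in visualize_embeddings.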

def generate_embeddings(model, dataset, device='cuda', batch_size=256):
    """Extract embeddings for all samples in the dataset."""
    model.eval()
    model.to(device)
    dataloader = DataLoader(dataset, batch_size=batch_size, shuffle=False, num_workers=2)
    embeddings = []
    labels = []
    print("=== Generating Embeddings ===")
    with torch.no_grad():
        for images, targets in dataloader:
            images = images.to(device)
            features = model.extract_features(images)
            embeddings.append(features.cpu().numpy())
            labels.append(targets.numpy())
    embeddings = np.vstack(embeddings)
    labels = np.concatenate(labels)
    print(f"Generated {embeddings.shape[0]} embeddings with dimension {embeddings.shape[1]}")
    return embeddings, labels


def visualize_embeddings(embeddings, labels, method='umap', n_samples=5000):
    """Visualize embeddings using UMAP or t-SNE dimensionality reduction."""
    print(f"=== Visualizing Embeddings with {method.upper()} ===")
    if len(embeddings) > n_samples:
        indices = np.random.choice(len(embeddings), n_samples, replace=False)
        embeddings = embeddings[indices]
        labels = labels[indices]
    if method == 'umap':
        reducer = umap.UMAP(n_neighbors=15, min_dist=0.1, metric='cosine')
    else:
        reducer = TSNE(n_components=2, perplexity=30, metric='cosine')
    embeddings_2d = reducer.fit_transform(embeddings)
    plt.figure(figsize=(12, 10))
    scatter = plt.scatter(embeddings_2d[:, 0], embeddings_2d[:, 1],
                          c=labels, cmap='tab10', s=5, alpha=0.6)
    plt.colorbar(scatter)
    plt.title(f'CIFAR-10 Embeddings ({method.upper()})')
    plt.xlabel('Component 1')
    plt.ylabel('Component 2')
    plt.tight_layout()
    plt.savefig(f'embeddings_{method}.png', dpi=150)
    print(f"Saved visualization as embeddings_{method}.png")
    plt.show()

Intelligent Data Selection via Coreset Sampling

To make better use of a labeling budget, we implement coreset selection methods that prioritize diverse, representative samples. Two strategies are included. The first is a class-balanced approach that draws an equal number of samples from each class. It relies on the ground-truth labels, so it is a reference point rather than a realistic pre-labeling strategy. The second is a diversity-driven k-center greedy algorithm. It repeatedly picks the point farthest from everything selected so far, which spreads the selection across the embedding space. The goal of this targeted curation is to reduce labeling costs while maintaining model performance.
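"Coverage" has a precise meaning for k-center greedy: the algorithm tries to keep the coverage radius small, that is, the largest distance from any point to its nearest selected point. The sketch below assumes Euclidean distance, as in the tutorial's select_coreset, and computes that radius on a toy set of four tight, well-separated clusters. It compares a selection with one point per cluster (what farthest-point selection ends up choosing on this data) against a selection that lands in only two clusters. `coverage_radius` is a hypothetical helper name.

```python
import numpy as np

def coverage_radius(embeddings, selected):
    """Largest distance from any point to its nearest selected point (the k-center objective)."""
    centers = embeddings[np.asarray(selected)]
    # Builds an (N, k, d) difference array: fine for toy data, too memory-hungry for large N
    d = np.linalg.norm(embeddings[:, None, :] - centers[None, :, :], axis=2)
    return float(d.min(axis=1).max())

# Four tight clusters, three points each, at the corners of a 10 x 10 square
corners = np.array([[0, 0], [10, 0], [0, 10], [10, 10]], dtype=float)
offsets = np.array([[0, 0], [0.1, 0], [0, 0.1]])
points = (corners[:, None, :] + offsets[None, :, :]).reshape(-1, 2)  # 12 points, grouped by cluster

one_per_cluster = [0, 3, 6, 9]   # one point in each of the four clusters
clumped = [0, 1, 2, 3]           # three points in cluster 0, one in cluster 1
print(coverage_radius(points, one_per_cluster))   # about 0.1
print(coverage_radius(points, clumped))           # about 10.1
```

A random subset of an embedding space can behave like the clumped selection and leave whole regions uncovered. Farthest-point selection is designed to avoid this.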

def select_coreset(embeddings, labels, budget=1000, method='diversity'):
    """
    Select a representative subset of data points (coreset) using:
    - 'diversity': k-center greedy algorithm for maximum coverage
    - 'balanced': equal class representation (uses the labels)
    """
    print(f"=== Coreset Selection Method: {method} ===")
    if method == 'balanced':
        selected_indices = []
        classes = np.unique(labels)
        per_class = budget // len(classes)
        for cls in classes:
            cls_indices = np.where(labels == cls)[0]
            selected = np.random.choice(cls_indices, min(per_class, len(cls_indices)), replace=False)
            selected_indices.extend(selected)
        print(f"Selected {len(selected_indices)} samples for coreset")
        return np.array(selected_indices)
    elif method == 'diversity':
        budget = min(budget, len(embeddings))
        first_idx = np.random.randint(len(embeddings))
        selected_indices = [first_idx]
        # Distance from every point to its nearest selected point, updated incrementally.
        # This avoids building an (N, k, d) array at each step, which for N=10,000,
        # k close to 1,000 and d=512 would need around 18 GB in float32.
        min_dist = np.linalg.norm(embeddings - embeddings[first_idx], axis=1)
        for _ in range(budget - 1):
            # Farthest point from the current selection (selected points have distance 0)
            selected_idx = int(np.argmax(min_dist))
            selected_indices.append(selected_idx)
            new_dist = np.linalg.norm(embeddings - embeddings[selected_idx], axis=1)
            min_dist = np.minimum(min_dist, new_dist)
        print(f"Selected {len(selected_indices)} samples for coreset")
        return np.array(selected_indices)
    raise ValueError(f"Unknown coreset method: {method}")

Evaluating Learned Representations with a Linear Probe

To quantify the quality of the learned features, we freeze the backbone and train a simple linear classifier on top of the extracted embeddings. This linear probe is trained on a curated subset and evaluated on the test set, providing a direct measure of how well the self-supervised model has captured meaningful visual information.
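In a linear probe, only a weight matrix and a bias are fitted, and the features stay fixed. The NumPy sketch below shows this with full-batch gradient descent on softmax cross-entropy. It swaps in plain gradient descent for the tutorial's Adam optimizer. `fit_linear_probe` and `probe_accuracy` are hypothetical names, and the toy two-class features stand in for the 512-dimensional outputs of extract_features.

```python
import numpy as np

def fit_linear_probe(features, labels, n_classes, lr=0.5, steps=200):
    """Fit W, b for softmax regression on frozen features with full-batch gradient descent."""
    n, d = features.shape
    W, b = np.zeros((d, n_classes)), np.zeros(n_classes)
    onehot = np.eye(n_classes)[labels]
    for _ in range(steps):
        logits = features @ W + b
        logits -= logits.max(axis=1, keepdims=True)      # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n                       # gradient of mean cross-entropy w.r.t. logits
        W -= lr * features.T @ grad                       # only the classifier is updated
        b -= lr * grad.sum(axis=0)
    return W, b

def probe_accuracy(features, labels, W, b):
    """Percentage of samples whose arg-max logit matches the label."""
    return 100.0 * np.mean((features @ W + b).argmax(axis=1) == labels)

# Toy "frozen features": two classes separated along the first dimension
X = np.array([[-2.0, 0.3], [-1.0, -0.2], [1.0, 0.1], [2.0, -0.4]])
y = np.array([0, 0, 1, 1])
W, b = fit_linear_probe(X, y, n_classes=2)
print(probe_accuracy(X, y, W, b))  # 100.0
```

Because the features are never updated, the probe's accuracy reflects how linearly separable the frozen representation already is.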

def evaluate_linear_probe(model, train_subset, test_dataset, device='cuda'):
    """Train and evaluate a linear classifier on frozen backbone features."""
    model.eval()
    train_loader = DataLoader(train_subset, batch_size=128, shuffle=True, num_workers=2)
    test_loader = DataLoader(test_dataset, batch_size=256, shuffle=False, num_workers=2)
    classifier = nn.Linear(512, 10).to(device)
    criterion = nn.CrossEntropyLoss()
    optimizer = torch.optim.Adam(classifier.parameters(), lr=0.001)
    for epoch in range(10):
        classifier.train()
        for images, targets in train_loader:
            images, targets = images.to(device), targets.to(device)
            with torch.no_grad():
                features = model.extract_features(images)
            outputs = classifier(features)
            loss = criterion(outputs, targets)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
    classifier.eval()
    correct = 0
    total = 0
    with torch.no_grad():
        for images, targets in test_loader:
            images, targets = images.to(device), targets.to(device)
            features = model.extract_features(images)
            outputs = classifier(features)
            _, predicted = outputs.max(1)
            total += targets.size(0)
            correct += predicted.eq(targets).sum().item()
    accuracy = 100. * correct / total
    return accuracy

End-to-End Pipeline Execution

The main function runs the whole pipeline. It loads the datasets, trains the SimCLR model, generates and visualizes embeddings, and selects a coreset. Finally, it trains the linear probe on both the coreset and a random subset of the same size (1,000 samples). Comparing the two accuracies tests whether intelligent data selection beats random sampling for the same number of labeled samples.
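A single random subset is a noisy baseline, because a different draw of 1,000 images can shift linear-probe accuracy by itself. One way to make the coreset-versus-random comparison more informative is to repeat the random baseline over several seeds. The coreset's gap can then be reported in percentage points against the mean, alongside the spread. This is sketched below as an assumption rather than as part of the tutorial. `compare_to_random` is a hypothetical helper, and the accuracies in the example are placeholder numbers chosen only to illustrate the arithmetic, not results.

```python
from statistics import mean, stdev

def compare_to_random(coreset_acc, random_accs):
    """Gap (in percentage points) between coreset accuracy and a multi-seed random baseline."""
    baseline = mean(random_accs)
    spread = stdev(random_accs) if len(random_accs) > 1 else 0.0
    return {
        "random_mean": baseline,
        "random_std": spread,
        "gap_pp": coreset_acc - baseline,
    }

# Placeholder accuracies (not results): one coreset run vs. three random-subset runs
summary = compare_to_random(50.0, [48.0, 50.0, 52.0])
print(f"gap: {summary['gap_pp']:+.2f} pp (random std {summary['random_std']:.2f})")
# gap: +0.00 pp (random std 2.00)
```

In main(), the random baseline could be redrawn inside a loop over a few seeds, calling evaluate_linear_probe once per draw. A gap that is small relative to the spread suggests the difference may be noise.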

def main():
    device = 'cuda' if torch.cuda.is_available() else 'cpu'
    print(f"Using device: {device}")
    ssl_dataset, eval_dataset = load_dataset(train=True)
    _, test_dataset = load_dataset(train=False)
    # Use the first 10,000 training images to keep SSL training short
    ssl_subset = Subset(ssl_dataset, range(10000))
    ssl_loader = DataLoader(ssl_subset, batch_size=128, shuffle=True, num_workers=2, drop_last=True)
    # weights=None replaces the deprecated pretrained=False: train from random initialization
    backbone = torchvision.models.resnet18(weights=None)
    model = SimCLRModel(backbone)
    model = train_ssl_model(model, ssl_loader, epochs=5, device=device)
    # Same first 10,000 images, so embedding row i corresponds to eval_dataset[i]
    eval_subset = Subset(eval_dataset, range(10000))
    embeddings, labels = generate_embeddings(model, eval_subset, device=device)
    visualize_embeddings(embeddings, labels, method='umap')
    coreset_indices = select_coreset(embeddings, labels, budget=1000, method='diversity')
    coreset_subset = Subset(eval_dataset, coreset_indices)
    print("=== Active Learning Evaluation ===")
    coreset_acc = evaluate_linear_probe(model, coreset_subset, test_dataset, device=device)
    print(f"Coreset Accuracy (1000 samples): {coreset_acc:.2f}%")
    random_indices = np.random.choice(len(eval_subset), 1000, replace=False)
    random_subset = Subset(eval_dataset, random_indices)
    random_acc = evaluate_linear_probe(model, random_subset, test_dataset, device=device)
    print(f"Random Sampling Accuracy (1000 samples): {random_acc:.2f}%")
    # Signed difference in percentage points; it can be negative for a given run
    print(f"Coreset vs. random accuracy difference: {coreset_acc - random_acc:+.2f} percentage points")
    print("=== Tutorial Completed ===")
    print("Summary of insights:")
    print("1. Self-supervised learning captures useful image features without labels.")
    print("2. Embeddings reveal semantic relationships between images.")
    print("3. Coreset selection can outperform random sampling; compare the accuracies above for this run.")
    print("4. Active learning frameworks can reduce annotation costs while preserving accuracy.")


if __name__ == "__main__":
    main()

Conclusion: Advancing Efficient Representation Learning

This tutorial demonstrated how self-supervised frameworks like SimCLR can learn useful image representations without manual annotations. We added coreset-based data selection on top of those representations to choose which samples to label. A linear probe then checked whether that choice beats random sampling at the same labeling budget. The end-to-end process, from training and embedding visualization to intelligent sampling and linear evaluation, provides a practical blueprint for resource-efficient machine learning workflows. Combining learned representations with careful data curation is a promising route to models that perform well while keeping labeling costs down.