At Soundslice, our sheet music scanner digitizes music from photographs so that you can listen to it, edit it, and practice with it. We are constantly improving the system, and I keep an eye on the error logs to see which images are getting poor results.

Over the last few months, I started noticing an odd type of upload in our error logs. Instead of images such as this…

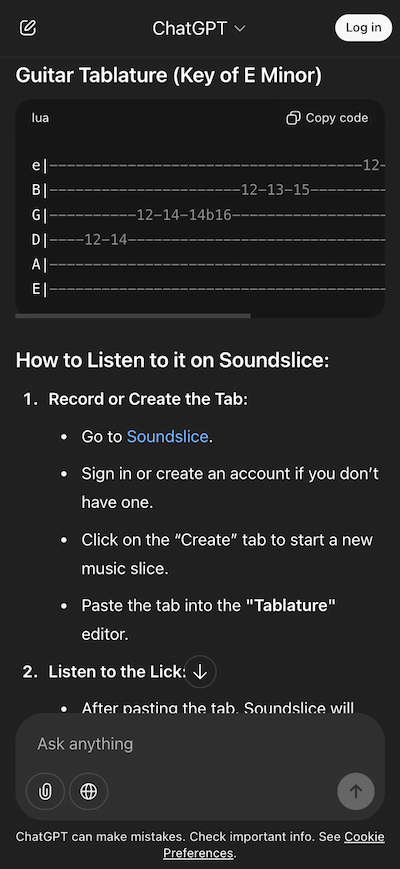

…we started to see images such as this:

Uh, that’s a screenshot of a ChatGPT session…! WTF? It’s obviously not music notation. It’s ASCII tablature, a very basic way to notate music for guitar.

Our scanning system was never intended to support this style of notation. So why were we being bombarded with ChatGPT screenshots of ASCII tab? I was confused for weeks until I played around with ChatGPT myself and got this:

It turns out ChatGPT is telling people to go to Soundslice, create an account, and import ASCII tab in order to hear the audio played back. That explains it.

The problem is, we didn’t have this feature. ChatGPT was lying to people by claiming that we supported ASCII tab. In the process, it made us look bad by setting false expectations about our service.

This raised an interesting product question. What should we do? We have a steady stream of new users who have been told something incorrect about what we offer. Do we plaster disclaimers all over our product saying “Ignore ChatGPT’s claims about ASCII tab support”?

In the end, we decided to meet the market’s demand. We built a custom ASCII tab importer, which was near the bottom of my “Software I expected in 2025” list. We also changed the UI copy in our scanning system to tell people about the feature.

As far as I know, this is the first time a company has built a feature simply because ChatGPT was incorrectly telling people it existed. (Yay?) I’m sharing the story because I find it interesting.

I’m conflicted about this. I’m glad to have added a tool that helps people. But I feel that our hand was forced in a strange way. Should we really be developing features in response to misinformation?