Music is always a challenge for me when I create audio or video content. I’m not talking about a theme song, just some basic intro or outro music to ease people into the beginning or end of a video or audio piece rather than starting to speak abruptly.

There are plenty of good music libraries, but I thought it would be fun to see if AI music tools could compose a musical motif better than I could.

To test it properly, I decided to quickly make a ‘podcast’ using Google’s NotebookLM, another AI tool that I had been playing with. Its Audio Overviews feature lets you turn documents, video transcriptions, and other sources of information into AI-generated podcasts between two AI hosts. I chose glassblowing as the subject on a whim because it is something that interests me.

I gave NotebookLM a few links and videos on the history and process of glassblowing, and soon two AI voices were discussing it for over 20 minutes. For this experiment, I needed only ten seconds.

Suno serves

I tried out a few different AI tools, such as Soundverse, Beatoven and Loudly. All had their moments, but most didn’t quite get it. I tried short prompts, longer prompts, and plain keywords. The results were mostly okay, but sometimes they were discordant or just uncomfortable to listen to.

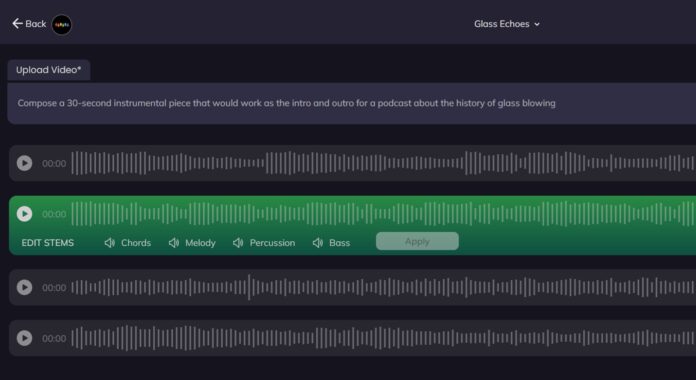

I spent way too much time trying out different edits and prompts before I decided to ask Suno to create an instrumental track to be used as the intro to a glassblowing podcast. It was that simple.

Suno created two tracks with the evocative title “Shaping Fire and Sand.” The first was alright, but I could imagine the second being played before a nerdy conversation about annealing or sand purity.

I stitched it all together and it worked, or at least it didn’t distract from the incoming speech. I showed it to a few friends, and they didn’t notice that the music was AI-generated. However, they did recognize the podcast voices as AI.

Below you can listen to how it went.

It wasn’t perfect. I had to set the fading manually, and if the performers were human musicians, one might think they were more enthusiastic than talented. Still, Suno did an excellent job for a tool that was free and didn’t require any complex production.

I wouldn’t choose it over a human-composed song, even one from a free song collection. AI customization can’t replace human creativity in many cases, as the AI podcast shows. As an experiment, though, Suno added a harmonious touch.

Eric Hal Schwartz has been a freelance writer at TechRadar for more than 15 years. He has covered the intersection of technology and the world. He was the head writer of Voicebot.ai for five years and was at the forefront of reporting on large language models and generative AI. Since then, he has become an expert in the products of generative AI, including OpenAI’s ChatGPT and Anthropic’s Claude. He also knows Google Gemini and all other synthetic media tools. His experience spans print, digital and broadcast media as well as live events. He’s now continuing to tell stories that people want to hear and need to know about the rapidly changing AI space and the impact it has on their lives. Eric is based out of New York City.
