Decoding What Word2Vec Learns and How It Does So

Understanding how word2vec works offers a window into the fundamentals of representation learning in a simple but informative language modeling setting. Although word2vec laid the groundwork for today’s advanced language models, a quantitative, predictive theory of its learning mechanism remained elusive for years. Recent work closes this gap by showing that, under practical conditions, the learning problem reduces to an unweighted least-squares matrix factorization. The resulting gradient flow dynamics can be solved exactly, and the final word embeddings turn out to be given by PCA.

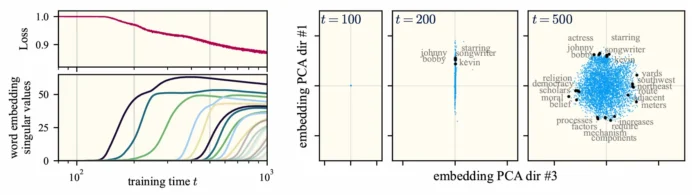

Word2Vec’s training from near-zero initialization unfolds in discrete, sequential phases. Left: stepwise rank increases in the weight matrix reduce loss incrementally. Right: snapshots of the embedding space show vectors expanding into higher-dimensional subspaces at each phase until full capacity is reached.

Why Study Word2Vec’s Learning Process?

Word2vec is a seminal algorithm for learning dense vector representations of words with a contrastive objective. These embeddings capture semantic relationships through the angles between vectors. Remarkably, the learned embeddings exhibit pronounced linear structure: specific linear subspaces correspond to meaningful linguistic features such as gender, verb tense, or regional dialect. This phenomenon, known as the linear representation hypothesis, has recently gained significant traction, underpinning advances in interpretability and transfer learning.

For example, the classic analogy “man is to woman as king is to queen” is elegantly solved by vector arithmetic within the embedding space, highlighting the power of these linear directions. This is not coincidental: word2vec essentially trains a shallow two-layer linear network on a text corpus, capturing statistical patterns through self-supervised gradient descent. As such, it represents a minimal neural language model, making its study crucial for grasping feature learning in more complex language models.
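To make the vector arithmetic concrete, here is a minimal sketch. The three-dimensional embeddings are hand-built for illustration and are not learned from any corpus; the helper names `cosine` and `solve_analogy` are our own. The analogy is answered by picking the word whose vector has the highest cosine similarity to `woman - man + king`.

```python
import numpy as np

# Hypothetical 3-d embeddings, hand-built for illustration only (not trained).
# Axis 0 loosely encodes "royalty", axis 1 "gender", axis 2 everything else.
embeddings = {
    "man":   np.array([0.0,  1.0, 0.1]),
    "woman": np.array([0.0, -1.0, 0.1]),
    "king":  np.array([1.0,  1.0, 0.0]),
    "queen": np.array([1.0, -1.0, 0.0]),
    "apple": np.array([0.1,  0.0, 1.0]),
}

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def solve_analogy(a, b, c, vectors):
    """Return the word d that best completes 'a is to b as c is to d'.

    The query words a, b, c are excluded from the candidates, as is
    common practice in analogy benchmarks.
    """
    target = vectors[b] - vectors[a] + vectors[c]
    candidates = {w: v for w, v in vectors.items() if w not in (a, b, c)}
    return max(candidates, key=lambda w: cosine(candidates[w], target))

answer = solve_analogy("man", "woman", "king", embeddings)
print(answer)
```

In this toy space the gender direction is literally one coordinate axis, so the arithmetic lands exactly on "queen". In real embeddings the direction is only approximately shared across word pairs, which is why nearest-neighbor search is used rather than exact equality.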

Key Findings: Sequential Concept Learning via PCA

Our analysis reveals that when embeddings are initialized close to zero, the model learns one “concept” at a time, each corresponding to an orthogonal linear subspace, through a series of discrete learning stages. This process is akin to gradually mastering a new mathematical domain, where initially confusing terms and ideas become distinct and meaningful over time.

Each newly acquired linear concept increases the effective rank of the embedding matrix, providing richer representational capacity for words to express nuanced meanings. Importantly, these subspaces remain fixed once learned, effectively serving as the model’s learned features. Our theory enables the explicit calculation of these features in closed form: they are the eigenvectors of a matrix constructed solely from corpus statistics and algorithmic parameters.
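The stepwise rank growth can be reproduced in a toy setting. The sketch below is a simplified stand-in rather than word2vec itself: it assumes a single embedding matrix `W`, a symmetric target `M` with three hand-picked eigenvalues, and plain gradient descent with a small step size on the unweighted least-squares loss. All names and constants are illustrative choices of ours.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 8, 3  # toy vocabulary size and embedding dimension (assumed values)

# Hypothetical target: symmetric matrix with three well-separated eigenvalues.
Q, _ = np.linalg.qr(rng.standard_normal((n, n)))
eigvals = np.array([4.0, 2.0, 1.0])
M = (Q[:, :3] * eigvals) @ Q[:, :3].T

W = 1e-6 * rng.standard_normal((n, d))  # near-zero initialization
lr, steps = 0.01, 4000                  # small steps approximate gradient flow

ranks = []
for _ in range(steps):
    # Gradient of (1/4) * ||W W^T - M||_F^2 with respect to W (M symmetric).
    grad = (W @ W.T - M) @ W
    W -= lr * grad
    # Effective rank: number of directions whose squared singular value
    # has grown past half of the smallest target eigenvalue.
    s = np.linalg.svd(W, compute_uv=False)
    ranks.append(int(np.sum(s**2 > 0.5 * eigvals.min())))

# Steps at which the effective rank changes.
jumps = [t for t in range(1, steps) if ranks[t] != ranks[t - 1]]
print("rank changes at steps:", jumps)
```

Because each direction grows roughly exponentially at a rate set by its eigenvalue, the direction with the largest eigenvalue leaves the neighborhood of zero first, and the others follow in order. A smaller initialization spreads these transitions further apart in time.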

Identifying the Learned Features

The latent features correspond to the leading eigenvectors of the matrix defined by:

$$M_{ij} = \frac{P(i,j)}{P(i)P(j)} - 1$$

Here, i and j index vocabulary words, P(i,j) denotes the joint co-occurrence probability of words i and j, and P(i) is the unigram probability of word i. When this matrix is computed using Wikipedia corpus statistics, the top eigenvectors align with interpretable semantic themes: the first might highlight celebrity-related terms, the second government and administration, the third geography, and so forth.
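As a small illustration of this construction, the sketch below builds M from a made-up co-occurrence count table and extracts its leading eigenvector. The four-word vocabulary and the counts are invented for the example, and P(i) is taken to be the marginal of the joint distribution, which is an assumption on our part.

```python
import numpy as np

vocab = ["king", "queen", "paris", "france"]

# Hypothetical symmetric co-occurrence counts (toy data, not from a corpus).
counts = np.array([
    [10.0,  8.0,  1.0,  1.0],
    [ 8.0, 10.0,  1.0,  1.0],
    [ 1.0,  1.0, 10.0,  8.0],
    [ 1.0,  1.0,  8.0, 10.0],
])

P_joint = counts / counts.sum()   # P(i, j)
P_uni = P_joint.sum(axis=1)       # P(i), assumed to be the marginal of P(i, j)
M = P_joint / np.outer(P_uni, P_uni) - 1.0

# eigh returns eigenvalues in ascending order; reorder to descending.
eigvals, eigvecs = np.linalg.eigh(M)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

top = eigvecs[:, 0]
for word, weight in zip(vocab, top):
    print(f"{word:>7s} {weight:+.2f}")
```

In this toy table the leading eigenvector assigns one sign to "king" and "queen" and the opposite sign to "paris" and "france", so the first feature separates the two topical clusters. The overall sign of an eigenvector is arbitrary, so only the relative signs carry meaning.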

In essence, word2vec training approximates a sequence of optimal low-rank factorizations of this matrix, effectively performing PCA on it.
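The link between low-rank factorization and PCA can be checked numerically. The sketch below assumes a symmetric factorization with a single embedding matrix `W`, so that `W @ W.T` approximates M; the function name `pca_embeddings` and the toy matrix are ours. For each rank k it builds embeddings from the top-k eigenpairs and measures the Frobenius error that remains.

```python
import numpy as np

def pca_embeddings(M, k):
    """Return W (n x k) built from the top-k eigenpairs of symmetric M.

    Negative eigenvalues are clipped to zero, since W @ W.T is positive
    semidefinite and cannot represent them.
    """
    eigvals, eigvecs = np.linalg.eigh(M)
    idx = np.argsort(eigvals)[::-1][:k]
    lam = np.clip(eigvals[idx], 0.0, None)
    return eigvecs[:, idx] * np.sqrt(lam)

# Toy symmetric matrix with eigenvalues 3.2, 0.4, 0.4 and 0 (illustrative).
M = np.array([
    [ 1.0,  0.6, -0.8, -0.8],
    [ 0.6,  1.0, -0.8, -0.8],
    [-0.8, -0.8,  1.0,  0.6],
    [-0.8, -0.8,  0.6,  1.0],
])

errors = []
for k in range(1, 4):
    W = pca_embeddings(M, k)
    errors.append(float(np.linalg.norm(M - W @ W.T)))  # Frobenius norm
    print(f"rank {k}: residual {errors[-1]:.4f}")
```

The residual at rank k equals the square root of the sum of squared discarded eigenvalues, which is the Eckart-Young optimum for this matrix. Each added dimension removes the next-largest eigenvalue from the residual, mirroring the one-concept-at-a-time picture.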

Comparison of learning dynamics reveals discrete, stepwise improvements. Left: loss decreases in jumps as embedding rank increases. Right: embedding space snapshots show expansion along new orthogonal directions at each step.

Understanding the Assumptions and Their Implications

The theoretical framework relies on several mild assumptions:

- A quartic approximation of the objective function near the origin.

- Specific constraints on hyperparameters governing the algorithm.

- Very small initial embedding weights.

- Infinitesimally small gradient descent steps.

These conditions closely mirror the original word2vec setup and, crucially, do not impose any assumptions on the data distribution itself. This distribution-agnostic nature is a major strength, enabling precise predictions of learned features based solely on corpus statistics and algorithmic settings. Such detailed, distribution-free descriptions of learning dynamics are rare in natural language processing.
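One way to see why a quartic approximation is natural near the origin is sketched below. It rests on an assumption the text above does not spell out: the objective is built from log-sigmoid terms evaluated at inner products of embeddings. Expanding the log-sigmoid to second order in its argument then gives a function that is fourth order in the embedding weights, because the argument is itself a product of two weight vectors.

```python
import numpy as np

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x)) = -log(1 + exp(-x))."""
    return -np.logaddexp(0.0, -x)

def log_sigmoid_taylor2(x):
    """Second-order Taylor expansion of log(sigmoid(x)) around x = 0."""
    return -np.log(2.0) + x / 2.0 - x**2 / 8.0

# Small inner products, as expected when embeddings start near zero.
x = np.linspace(-0.5, 0.5, 11)
max_err = float(np.max(np.abs(log_sigmoid(x) - log_sigmoid_taylor2(x))))
print(f"max abs error on [-0.5, 0.5]: {max_err:.2e}")
```

The leading neglected term is x^4 / 192, so the approximation is tight while inner products stay small and loosens as embeddings grow.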

Empirical validation shows that despite these approximations, the theory closely matches actual word2vec behavior. For instance, on a standard analogy completion benchmark, word2vec achieves 68% accuracy, our approximate model scores 66%, and a classical baseline (PPMI) only reaches 51%.

Insights into Abstract Linear Representations

Applying this theory further, we explore how word2vec develops abstract linear representations corresponding to binary semantic concepts like masculine/feminine or past/future tense. These representations emerge through a sequence of noisy learning phases, with their geometry well-modeled by spiked random matrix theory. Early training stages are dominated by clear semantic signals, but as training progresses, noise can overshadow these signals, potentially degrading the model’s ability to resolve these linear features.
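The signal-versus-noise picture can be illustrated with the simplest spiked model: a rank-one planted direction plus symmetric Gaussian noise. This is a generic textbook setup, not the specific model fitted in the analysis above, and the function name `top_overlap` and all constants are illustrative.

```python
import numpy as np

def top_overlap(signal_strength, n=400, seed=0):
    """Squared overlap between a planted unit vector v and the top
    eigenvector of signal_strength * v v^T + symmetric Gaussian noise."""
    rng = np.random.default_rng(seed)
    v = rng.standard_normal(n)
    v /= np.linalg.norm(v)
    G = rng.standard_normal((n, n))
    # Scaled so the noise eigenvalues fall roughly in [-2, 2] for large n.
    noise = (G + G.T) / np.sqrt(2 * n)
    A = signal_strength * np.outer(v, v) + noise
    _, eigvecs = np.linalg.eigh(A)       # eigenvalues ascending
    return float((eigvecs[:, -1] @ v) ** 2)

strong = top_overlap(3.0)   # spike well above the noise level
weak = top_overlap(0.3)     # spike buried inside the noise bulk
print(f"strong spike overlap: {strong:.2f}")
print(f"weak spike overlap:   {weak:.2f}")
```

With this scaling, a spike stronger than about 1 is recoverable from the top eigenvector, while a weaker one is not. Features associated with smaller eigenvalues sit closer to this threshold, which is consistent with later-learned directions being harder to resolve.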

Conclusion: A Milestone in Theoretical Understanding of Language Models

This work delivers one of the first comprehensive, closed-form theories describing feature learning in a minimal yet meaningful natural language task. By connecting word2vec training dynamics to PCA on a corpus-derived matrix, it provides a clear, interpretable framework for understanding how semantic features arise. This represents a significant advance toward realistic analytical models that explain the performance of practical machine learning algorithms in natural language processing.