Amazon Trainium3 UltraServer: A New Chapter in AI Infrastructure

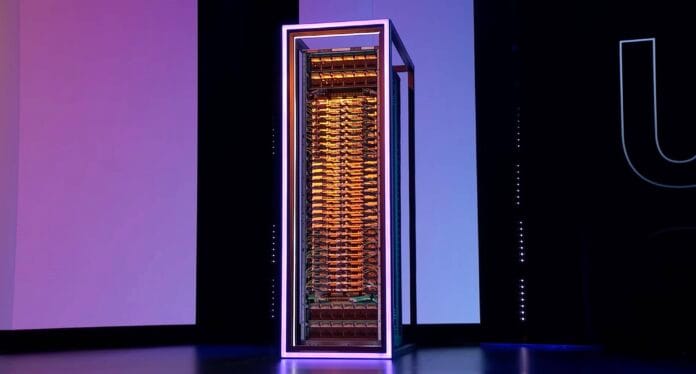

Amazon recently unveiled its Trainium3 UltraServer rack systems, marking a significant milestone in AI hardware development. At first glance, these racks bear a striking resemblance to Nvidia’s GB200 NVL72 systems, highlighting a growing trend of convergence in AI infrastructure design as the industry enters its fourth year of rapid expansion.

Converging Designs in AI Server Racks

The visual and architectural similarities between Amazon’s Trainium3 UltraServer and Nvidia’s GB200 NVL72 racks are more than coincidental. AWS has extensively deployed Nvidia’s GB200 and GB300 NVL72 racks, and it appears that Amazon’s new systems share many components with Nvidia’s designs. This alignment is further emphasized by Amazon’s announcement that its upcoming Trainium4 compute blades will be compatible with Nvidia’s MGX chassis, underscoring a strategic move toward modular, interoperable hardware.

Such standardization is a practical necessity for hyperscalers like Amazon, which operate at massive scale. Simplifying rack architectures reduces complexity and streamlines maintenance, enabling more efficient management of data center resources. This philosophy underpins industry consortia such as the Open Compute Project (OCP), which promotes open standards for data center hardware.

Industry Collaboration and Open Standards

In recent months, Nvidia contributed its MGX reference designs to the OCP, while AMD and Meta unveiled a double-width rack: AMD’s Helios system, built on a double-wide rack design that Meta contributed to the OCP in 2025. These developments reflect a broader industry trend toward shared hardware blueprints that foster interoperability and innovation.

Compute Blades: The Heart of AI Servers

Amazon’s Trainium3 compute blade pairs one Graviton CPU with four Trainium3 accelerators and two Nitro data processing units (DPUs), a departure from previous generations, which relied on Intel x86 CPUs. This configuration closely mirrors the compute blades in AMD’s and Nvidia’s AI racks. AMD’s Helios blade, for example, combines four MI400-series GPUs with a Venice CPU and dual smartNICs, while each Nvidia GB300 NVL72 compute tray pairs two Grace CPUs with four Blackwell Ultra GPUs.

Notably, AMD opts for a double-wide OpenRack design, whereas Amazon and Nvidia utilize MGX-style chassis, illustrating subtle variations within a converging architectural framework.

Switched Scale-Up Fabrics: The Backbone of High-Performance AI

The Trainium3 UltraServer hosts 36 compute blades distributed across two MGX-style racks, totaling 144 accelerators interconnected via Amazon’s proprietary NeuronSwitch fabric. Although AWS has yet to disclose the fabric’s detailed topology, the design emphasizes the low-latency, high-bandwidth communication essential for large-scale AI workloads.
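The UltraServer’s totals follow directly from the per-blade component counts. A minimal bookkeeping sketch, assuming (AWS has not said so) that the 36 blades are split evenly between the two racks; the `Blade` class is a hypothetical illustration, not an AWS data structure:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Blade:
    """Per-blade component counts for a Trainium3 compute blade."""
    graviton_cpus: int = 1
    trainium3_chips: int = 4
    nitro_dpus: int = 2


BLADES = 36
RACKS = 2  # assumption: blades are split evenly across the two racks

blade = Blade()
totals = {
    "graviton_cpus": BLADES * blade.graviton_cpus,
    "trainium3_chips": BLADES * blade.trainium3_chips,
    "nitro_dpus": BLADES * blade.nitro_dpus,
}
blades_per_rack = BLADES // RACKS

print(totals)           # {'graviton_cpus': 36, 'trainium3_chips': 144, 'nitro_dpus': 72}
print(blades_per_rack)  # 18
```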

Similarly, Nvidia’s GB200 and GB300 NVL72 racks employ 18 NVSwitch chips across nine switch sleds, while AMD’s Helios system uses 12 Ethernet switches, each rated at 102.4 Tbps, spread across six double-wide switch blades. These fabrics pool the compute and memory of every accelerator in the rack into what behaves like a single, rack-scale accelerator.
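Both designs package two switch chips per sled or blade, as the arithmetic below shows. The sketch also totals Helios’s raw switching capacity under the assumption that 102.4 Tbps is the rating of each switch rather than of the fabric as a whole; the constant names are illustrative:

```python
# Switch counts from the text. Reading 102.4 Tbps as a per-switch rating is an
# assumption; integers in Gbps avoid floating-point rounding.
NVL72_SWITCH_SLEDS = 9
NVL72_SWITCH_CHIPS = 18

HELIOS_SWITCH_BLADES = 6
HELIOS_SWITCH_CHIPS = 12
HELIOS_GBPS_PER_SWITCH = 102_400  # assumed per-switch figure (102.4 Tbps)

nvl72_chips_per_sled = NVL72_SWITCH_CHIPS // NVL72_SWITCH_SLEDS
helios_chips_per_blade = HELIOS_SWITCH_CHIPS // HELIOS_SWITCH_BLADES
helios_aggregate_gbps = HELIOS_SWITCH_CHIPS * HELIOS_GBPS_PER_SWITCH

print(nvl72_chips_per_sled)                        # 2
print(helios_chips_per_blade)                      # 2
print(f"{helios_aggregate_gbps / 1000:.1f} Tbps")  # 1228.8 Tbps
```

The aggregate is raw switch capacity summed over all switches, not the usable bandwidth between any two accelerators.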

Comparing Interconnect Protocols

While the overall architecture is comparable, the interconnect protocols differ: AWS uses its own NeuronSwitch, AMD tunnels the UALink protocol over Ethernet, and Nvidia relies on NVLink and NVSwitch. Interestingly, Amazon plans to move away from NeuronSwitch with its upcoming Trainium4 accelerators, which will support both UALink and NVLink Fusion, signaling a shift toward broader compatibility.

From Mesh to Switch: Evolution of AI Compute Fabrics

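Before adopting switched fabrics, Amazon’s Trainium2 accelerators were interconnected in 2D and 3D torus topologies, in which each chip links directly to its neighbors and traffic between distant chips is forwarded through the chips in between. These mesh networks work well in certain training scenarios, but because the number of hops grows with the size of the domain, they are harder to scale and add latency for inference workloads.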
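To make the hop-count argument concrete, the sketch below measures, by breadth-first search, how many chip-to-chip links separate chips in a torus and compares that with a single-tier switch, where any two chips are two links apart. The grid shapes are hypothetical examples rather than AWS’s disclosed layouts, and hop count is only a rough proxy for latency (it ignores link bandwidth, contention, and per-switch delay):

```python
from collections import deque


def torus_hop_stats(dims):
    """Return (diameter, mean hop count) for a torus with the given axis sizes.

    Every chip links to its two neighbours along each axis, with wraparound,
    and hops are counted as chip-to-chip links traversed.
    """
    source = (0,) * len(dims)  # all chips in a torus are equivalent
    dist = {source: 0}
    queue = deque([source])
    while queue:  # breadth-first search yields shortest hop counts
        node = queue.popleft()
        for axis, size in enumerate(dims):
            for step in (-1, 1):
                nbr = list(node)
                nbr[axis] = (nbr[axis] + step) % size
                nbr = tuple(nbr)
                if nbr not in dist:
                    dist[nbr] = dist[node] + 1
                    queue.append(nbr)
    others = [hops for chip, hops in dist.items() if chip != source]
    return max(others), sum(others) / len(others)


def single_tier_switch_hops():
    """Any two chips on one switch: chip -> switch -> chip, i.e. two links."""
    return 2


for dims in [(4, 4), (4, 4, 4), (8, 8)]:
    diameter, mean = torus_hop_stats(dims)
    print(f"{dims}: diameter {diameter}, mean {mean:.2f}")
print(f"single-tier switch: {single_tier_switch_hops()}")
# (4, 4): diameter 4, mean 2.13
# (4, 4, 4): diameter 6, mean 3.05
# (8, 8): diameter 8, mean 4.06
# single-tier switch: 2
```

The 4×4×4 and 8×8 shapes both hold 64 chips, which shows why a third dimension helps a torus: it shortens paths, yet hop counts still grow as the domain expands, while a single-tier switch keeps every pair two links apart.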

Nafea Bshara, co-founder of Annapurna Labs, the AWS chip design unit behind Trainium, explains that switched fabrics deliver better performance for token-by-token generation during inference because they allow wider parallelism at lower latency. Mesh topologies remain effective for some large-model training tasks, but switched fabrics are better at maximizing concurrency while keeping latency low, especially at higher batch sizes.

Challenges and Trade-offs in Fabric Design

Despite their benefits, switched fabrics add complexity because they require dedicated network switches, whereas mesh topologies rely solely on direct chip-to-chip links. Switches can reduce the number of hops between accelerators, potentially lowering latency, but extending a single switched domain beyond 144 accelerators remains a technical challenge.
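One reason a switched domain is hard to grow is switch radix. With a single switch tier, each switch plane needs a port for every accelerator; once the domain outgrows that, a second tier is required and the worst-case path doubles. The sketch below applies generic folded-Clos reasoning with hypothetical port counts (the radix of 144 is chosen only to line up with the UltraServer’s accelerator count) and does not describe NeuronSwitch, whose topology AWS has not disclosed:

```python
def worst_case_links(accelerators: int, switch_radix: int) -> int:
    """Worst-case links between two accelerators on one switch plane.

    Generic folded-Clos reasoning with hypothetical parameters, assuming every
    accelerator attaches one link to the plane.
    """
    if accelerators <= switch_radix:
        # Single tier: one switch has a port for every accelerator.
        return 2  # accelerator -> switch -> accelerator
    if accelerators <= switch_radix * switch_radix // 2:
        # Two tiers: each leaf uses half its ports for accelerators and half
        # for spines, so one plane supports at most radix**2 / 2 endpoints.
        return 4  # accelerator -> leaf -> spine -> leaf -> accelerator
    raise ValueError("domain needs three or more switch tiers")


print(worst_case_links(144, 144))  # 2
print(worst_case_links(145, 144))  # 4
```

A second tier also means more switch chips, cables, and power per accelerator, which is part of the complexity the paragraph above refers to.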

Google’s Unique Approach: Optical Mesh Networks

In contrast to Amazon, Nvidia, and AMD, Google builds its 7th-generation Ironwood TPU clusters on 2D and 3D torus topologies that can scale up to 9,216 TPUs in a single compute domain. This scale is made possible by optical interconnects: although optics draw more power than copper links, they eliminate the need for packet switches.

Google’s optical circuit switches function like automated patch panels, allowing dynamic reconfiguration of TPU pods to match workload demands. This approach also simplifies fault tolerance by enabling rapid replacement of failed TPUs without disrupting the entire cluster.
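The patch-panel analogy can be made concrete with a toy model. This is a hypothetical sketch, not Google’s control software, and every class and function name is illustrative: it assumes TPUs are grouped into blocks whose external links terminate on the optical circuit switch, collapses each block to a single in/out port pair on one ring, and shows a failed block being swapped for a spare by re-pointing only the two circuits that touched it:

```python
class OpticalCircuitSwitch:
    """Toy optical circuit switch: a programmable patch panel.

    Each circuit is a fixed point-to-point light path between two ports;
    reconfiguring means re-pointing circuits, with no packet forwarding.
    """

    def __init__(self):
        self.circuits = {}  # port -> peer port, stored in both directions

    def connect(self, a, b):
        for port in (a, b):
            self.disconnect(port)  # a port carries at most one circuit
        self.circuits[a] = b
        self.circuits[b] = a

    def disconnect(self, port):
        peer = self.circuits.pop(port, None)
        if peer is not None:
            self.circuits.pop(peer, None)


def build_ring(ocs, blocks):
    """Wire TPU blocks into a ring: each block's "out" port to the next block's "in"."""
    for i, block in enumerate(blocks):
        nxt = blocks[(i + 1) % len(blocks)]
        ocs.connect((block, "out"), (nxt, "in"))


def swap_block(ocs, blocks, failed, spare):
    """Replace a failed block with a spare, re-pointing only its two circuits."""
    idx = blocks.index(failed)
    ocs.disconnect((failed, "in"))
    ocs.disconnect((failed, "out"))
    blocks[idx] = spare
    prev_block = blocks[idx - 1]  # wraps to the last block when idx == 0
    next_block = blocks[(idx + 1) % len(blocks)]
    ocs.connect((prev_block, "out"), (spare, "in"))
    ocs.connect((spare, "out"), (next_block, "in"))


ocs = OpticalCircuitSwitch()
pod_slice = ["block0", "block1", "block2", "block3"]
build_ring(ocs, pod_slice)
swap_block(ocs, pod_slice, failed="block1", spare="spare0")
print(pod_slice)                        # ['block0', 'spare0', 'block2', 'block3']
print(ocs.circuits[("block0", "out")])  # ('spare0', 'in')
```

Because the switch only redirects light paths, the circuits between the healthy blocks never change during the swap, which is what lets the rest of the pod keep running.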

As Amazon and others move toward switched fabrics, Google remains one of the few major providers relying on torus topologies for AI inference and training, highlighting diverse strategies in the evolving AI hardware landscape.