Why Do MoE Models Run Faster Despite Having More Parameters Than Transformers?

Mixture of Experts (MoE) models often contain significantly more parameters than traditional Transformer architectures, yet they can achieve faster inference speeds. What enables this seemingly paradoxical efficiency?

Understanding the Core Architectural Differences: Transformers vs. Mixture of Experts

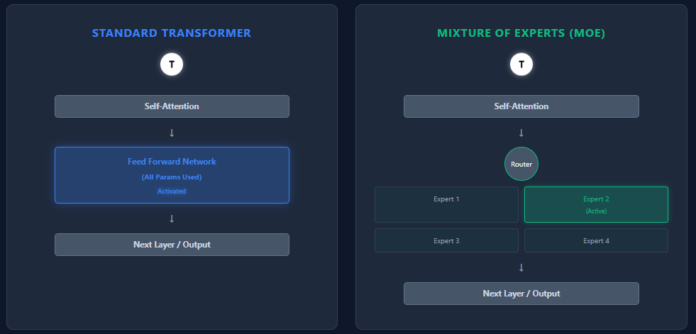

Both Transformers and MoE models are built upon a similar foundation-layers of self-attention followed by feed-forward networks (FFNs). However, their approach to parameter utilization and computation diverges sharply, leading to distinct performance characteristics.

Single Feed-Forward Network vs. Multiple Experts

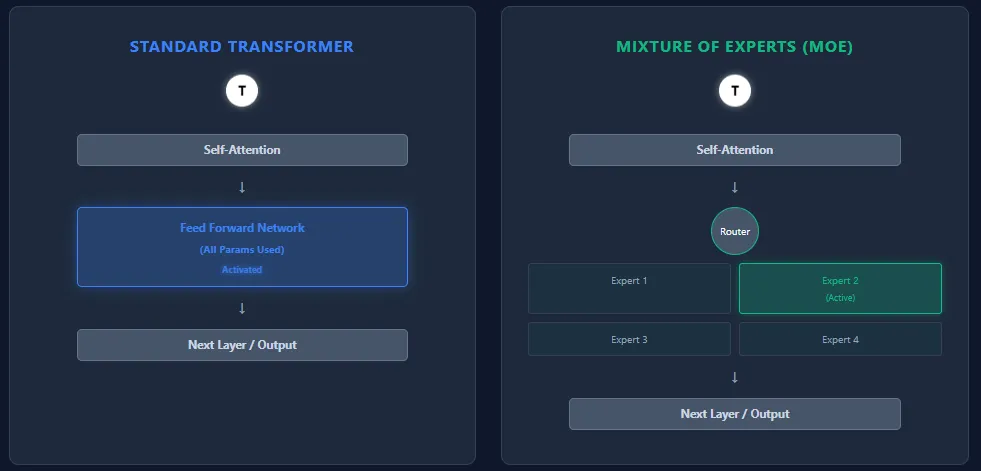

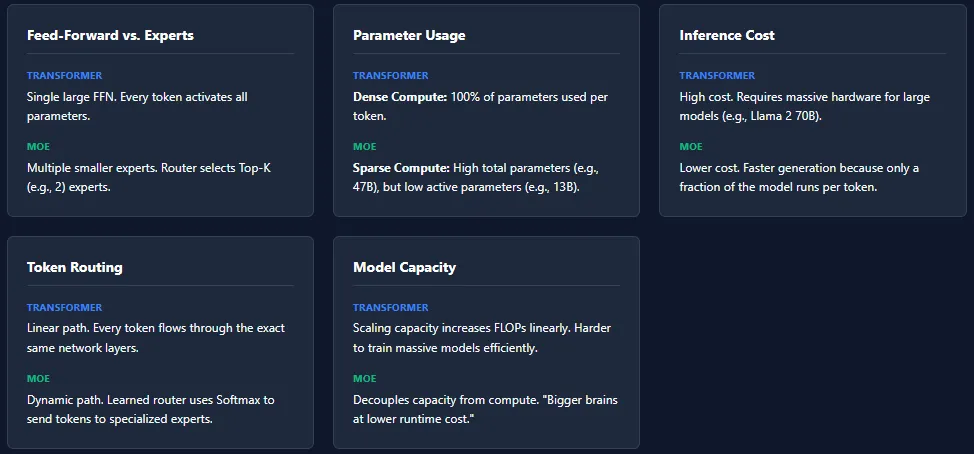

- Transformers: Each Transformer block contains one large feed-forward network. During inference, every token sequentially passes through this network, activating all its parameters.

- MoE Models: Instead of a single FFN, MoE replaces it with a collection of smaller specialized networks called experts. A routing mechanism dynamically selects a subset of these experts (commonly the top K) for each token, activating only a fraction of the total parameters.

Parameter Activation and Computational Efficiency

- Transformers: All parameters in every layer are engaged for each token, resulting in dense computation and higher resource consumption.

- MoE: Although MoE models have a much larger total parameter count, only a limited number of experts are activated per token, leading to sparse computation. For instance, the Mixtral 8×7B model boasts 46.7 billion parameters but activates roughly 13 billion per token during inference.

Inference Speed and Resource Requirements

- Transformers: The necessity to engage all parameters for every token results in substantial inference costs. Scaling to massive models like GPT-4 or LLaMA 2 70B demands high-end hardware and significant computational power.

- MoE: By activating only a handful of experts per layer, MoE models reduce inference costs dramatically. This selective activation enables faster and more cost-effective inference, especially beneficial for very large-scale models.

Dynamic Token Routing vs. Uniform Processing

- Transformers: Tokens follow a uniform path through all layers without differentiation.

- MoE: A learned routing network assigns tokens to specific experts based on softmax probabilities. Different tokens may activate different experts, and this routing can vary across layers, fostering expert specialization and enhancing overall model capacity.

Scaling Model Capacity Without Proportional Compute Increase

- Transformers: Increasing capacity typically involves adding more layers or expanding the FFN size, both of which significantly increase floating-point operations (FLOPs) and computational cost.

- MoE: MoE architectures can scale the total number of parameters extensively without a corresponding rise in per-token computation, effectively delivering “larger brains” at a fraction of the runtime expense.

Challenges in Training MoE Models

Despite their efficiency advantages, MoE models introduce unique training complexities. One major issue is expert collapse, where the routing mechanism disproportionately favors a small subset of experts, causing others to be underutilized and undertrained.

Another significant hurdle is load imbalance. Some experts may receive a disproportionately high number of tokens, leading to uneven learning and potential bottlenecks. To mitigate these problems, MoE models employ strategies such as noise injection into routing decisions, Top-K expert selection masking, and strict capacity limits per expert.

While these techniques help maintain balanced expert utilization and robust training, they also add layers of complexity to the training process compared to standard Transformer models.

Summary: Why MoE Models Are Both Larger and Faster

In essence, MoE models leverage a sparse activation strategy that allows them to house an enormous number of parameters while only engaging a small subset during inference. This selective activation reduces computational overhead, enabling faster processing times and lower costs compared to dense Transformer models of similar or smaller sizes. However, this efficiency comes with the trade-off of more intricate training dynamics, requiring sophisticated routing and balancing mechanisms to fully realize their potential.