Building an Intelligent Scientific Discovery System: A Step-by-Step Guide

This guide walks through building a small AI-driven scientific discovery assistant and shows how its modules fit together into a single research workflow. We start by loading a hand-picked set of five paper records, build a TF-IDF retrieval layer and a language model wrapper, then combine these into agents for literature search, hypothesis formulation, experimental design, and report generation. The sections below show how these pieces turn an initial research question into a written scientific report.

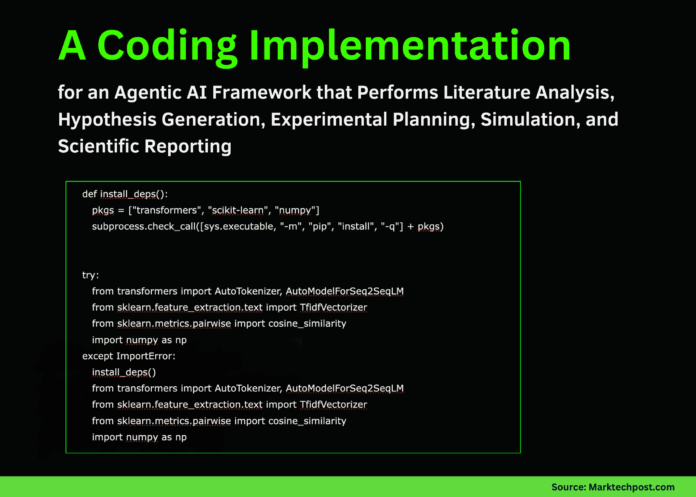

Setting Up the Foundation: Data Preparation and Model Initialization

Our first step is to import the required libraries, define the literature dataset, and initialize the language model. A TF-IDF vectorizer converts each paper's abstract and title into a sparse vector, which lets us retrieve relevant papers later in the process. The language model is google/flan-t5-small, loaded through the transformers library. Everything that follows builds on these three objects: the paper list, the fitted vectorizer, and the text generation function.

import subprocess
import sys


def installdependencies():
    """Install the third-party packages this tutorial relies on."""
    # torch is listed because the model below runs on the PyTorch backend (return_tensors="pt").
    packages = ["transformers", "torch", "scikit-learn", "numpy"]
    subprocess.check_call([sys.executable, "-m", "pip", "install", "-q"] + packages)


try:
    from transformers import AutoTokenizer, AutoModelForSeq2SeqLM
    from sklearn.feature_extraction.text import TfidfVectorizer
    from sklearn.metrics.pairwise import cosine_similarity
    import numpy as np
except ImportError:
    # Fresh environment: install the packages, then retry the same imports.
    installdependencies()
    from transformers import AutoTokenizer, AutoModelForSeq2SeqLM
    from sklearn.feature_extraction.text import TfidfVectorizer
    from sklearn.metrics.pairwise import cosine_similarity
    import numpy as np

from dataclasses import dataclass
from typing import List, Dict, Any

np.random.seed(42)  # makes the simulated experiment results reproducible

# Sample scientific literature dataset
LITERATURE = [
    {"id": "P1", "title": "Transformer-Based Protein Language Models for Structural Insights", "field": "computational biology",
     "abstract": "This study leverages transformer architectures trained on extensive protein sequences to generate residue embeddings that enhance secondary structure and stability predictions."},
    {"id": "P2", "title": "Deep Learning Approaches for CRISPR Off-Target Prediction", "field": "genome editing",
     "abstract": "We introduce a convolutional neural network designed to predict CRISPR-Cas9 off-target effects directly from DNA sequences, outperforming existing methods on GUIDE-seq benchmarks."},
    {"id": "P3", "title": "Symbolic Regression with Foundation Models for Equation Discovery", "field": "scientific machine learning",
     "abstract": "Combining large language models with symbolic regression techniques enables recovery of governing physical equations from noisy experimental data in physics and fluid mechanics."},
    {"id": "P4", "title": "Bayesian Optimization and Graph Neural Networks for Material Design", "field": "materials science",
     "abstract": "This work integrates Bayesian optimization with graph neural networks to actively select materials candidates that optimize target properties while minimizing experimental costs."},
    {"id": "P5", "title": "Graph-Based Cross-Domain Literature Retrieval in Scientific NLP", "field": "natural language processing",
     "abstract": "We develop a heterogeneous graph combining citations and concepts across domains, demonstrating improved retrieval performance for interdisciplinary literature reviews."},
]

# Prepare corpus for retrieval: one string per paper (abstract followed by title)
corpustexts = [paper["abstract"] + " " + paper["title"] for paper in LITERATURE]
vectorizer = TfidfVectorizer(stop_words="english")
corpusmatrix = vectorizer.fit_transform(corpustexts)

# Load pretrained language model
MODELNAME = "google/flan-t5-small"
tokenizer = AutoTokenizer.from_pretrained(MODELNAME)
model = AutoModelForSeq2SeqLM.from_pretrained(MODELNAME)


def generatetext(prompt: str, maxtokens: int = 256) -> str:
    """Generate a completion for the prompt using beam search."""
    # truncation=True cuts prompts that exceed the tokenizer's maximum input length.
    inputs = tokenizer(prompt, return_tensors="pt", truncation=True)
    # **inputs unpacks input_ids and attention_mask as keyword arguments.
    outputs = model.generate(**inputs, max_new_tokens=maxtokens, num_beams=4, early_stopping=True)
    return tokenizer.decode(outputs[0], skip_special_tokens=True)
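Because every later stage reads the id, title, field, and abstract keys of each paper record, it can be worth checking the dataset before fitting the vectorizer. The helper below is a minimal sketch of such a check; validate_records and REQUIRED_KEYS are hypothetical names introduced here for illustration and are not part of the tutorial's code.

```python
from typing import Any, Dict, List

# Keys that the retrieval, experiment, and report code read from each record.
REQUIRED_KEYS = ("id", "title", "field", "abstract")


def validate_records(papers: List[Dict[str, Any]]) -> List[str]:
    """Return human-readable problems; an empty list means the records look usable."""
    problems: List[str] = []
    seen_ids = set()
    for index, paper in enumerate(papers):
        for key in REQUIRED_KEYS:
            value = paper.get(key)
            if not isinstance(value, str) or not value.strip():
                problems.append(f"record {index}: missing or empty '{key}'")
        paper_id = paper.get("id")
        if paper_id in seen_ids:
            problems.append(f"record {index}: duplicate id '{paper_id}'")
        seen_ids.add(paper_id)
    return problems


if __name__ == "__main__":
    sample = [
        {"id": "P1", "title": "A", "field": "x", "abstract": "text"},
        {"id": "P1", "title": "B", "field": "y", "abstract": ""},
    ]
    for problem in validate_records(sample):
        print(problem)
```

Running the same check on the tutorial's paper list before building the corpus would surface an empty abstract or a repeated ID early, rather than as a confusing retrieval result later.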

Implementing Literature Retrieval: Connecting Queries to Relevant Research

To ground the system's reasoning in existing work, we build a literature search agent. It converts the user's query into a TF-IDF vector using the already-fitted vectorizer, computes the cosine similarity between that vector and every paper in the corpus, and returns the top-k papers together with their similarity scores. Later stages then work from the closest matching prior studies rather than from the question alone.

@dataclass
class PaperMatch:
    paper: Dict[str, Any]
    relevancescore: float


class LiteratureSearchAgent:
    def __init__(self, vectorizer, corpusmatrix, papers: List[Dict[str, Any]]):
        self.vectorizer = vectorizer
        self.corpusmatrix = corpusmatrix
        self.papers = papers

    def searchpapers(self, query: str, topk: int = 3) -> List[PaperMatch]:
        # Project the query into the TF-IDF space fitted on the corpus.
        queryvec = self.vectorizer.transform([query])
        similarityscores = cosine_similarity(queryvec, self.corpusmatrix)[0]
        # Negate the scores so argsort yields indices from most to least similar.
        topindices = np.argsort(-similarityscores)[:topk]
        results = [PaperMatch(self.papers[i], float(similarityscores[i])) for i in topindices]
        return results
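To make the ranking step less of a black box, here is a standard-library sketch of what TF-IDF plus cosine similarity computes. It assumes the smoothed IDF formula and L2 normalisation that scikit-learn's TfidfVectorizer applies by default, but it skips stop-word removal, so its scores will not match the tutorial's vectorizer exactly. All function names (tokenize, fit_idf, tfidf_vector, cosine, rank) are illustrative.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase and keep tokens of two or more word characters."""
    return re.findall(r"\b\w\w+\b", text.lower())


def fit_idf(documents: List[str]) -> Dict[str, float]:
    """Smoothed inverse document frequency: ln((1 + n) / (1 + df)) + 1."""
    n_docs = len(documents)
    doc_freq = Counter(term for doc in documents for term in set(tokenize(doc)))
    return {term: math.log((1 + n_docs) / (1 + df)) + 1.0 for term, df in doc_freq.items()}


def tfidf_vector(text: str, idf: Dict[str, float]) -> Dict[str, float]:
    """Term count times IDF, scaled to unit length; unseen terms are dropped."""
    counts = Counter(t for t in tokenize(text) if t in idf)
    weights = {term: count * idf[term] for term, count in counts.items()}
    norm = math.sqrt(sum(w * w for w in weights.values()))
    return {term: w / norm for term, w in weights.items()} if norm else {}


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """For unit-length vectors the dot product is the cosine similarity."""
    return sum(weight * b.get(term, 0.0) for term, weight in a.items())


def rank(query: str, documents: List[str], top_k: int = 3) -> List[Tuple[int, float]]:
    """Return (document index, score) pairs, most similar first."""
    idf = fit_idf(documents)
    doc_vectors = [tfidf_vector(doc, idf) for doc in documents]
    query_vector = tfidf_vector(query, idf)
    scores = [(i, cosine(query_vector, vec)) for i, vec in enumerate(doc_vectors)]
    return sorted(scores, key=lambda pair: -pair[1])[:top_k]


if __name__ == "__main__":
    docs = [
        "protein language models predict structure",
        "CRISPR off-target prediction with neural networks",
        "Bayesian optimization for materials design",
    ]
    for index, score in rank("CRISPR off-target effects", docs, top_k=2):
        print(index, round(score, 3))
```

Query terms that never appeared in the corpus (here, "effects") contribute nothing to the score, which is also why a query with no overlapping vocabulary scores zero against every paper.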

From Hypothesis to Experiment: Designing and Simulating Scientific Tests

Building on the retrieved literature and the generated hypothesis, the experiment agent drafts a protocol and simulates an outcome. It fills in a fixed set of parameters, lists the protocol steps, and draws synthetic AUROC scores from a random distribution: a baseline around 0.78 and a positive gain around 0.05. These numbers stand in for a real evaluation so that the rest of the pipeline has results to report.

@dataclass
class ExperimentDesign:
    systemdescription: str
    hypothesisstatement: str
    parameters: Dict[str, Any]
    steps: List[str]


@dataclass
class ExperimentOutcome:
    design: ExperimentDesign
    results: Dict[str, float]


class ExperimentationAgent:
    def designexperiment(self, researchquestion: str, hypothesis: str, papermatches: List[PaperMatch]) -> ExperimentDesign:
        # Use the field of the best-matching paper, with a generic fallback.
        primaryfield = papermatches[0].paper["field"] if papermatches else "computational science"
        steps = [
            f"Compile dataset integrating concepts from papers: {', '.join(p.paper['id'] for p in papermatches)}.",
            "Partition dataset into training, validation, and testing subsets.",
            "Evaluate baseline model against enhanced model incorporating the hypothesis.",
            "Analyze results using relevant metrics and conduct ablation studies.",
        ]
        # Fixed illustrative parameters; they do not depend on the question or hypothesis.
        parameters = {
            "baselinemodel": "sequence-based CNN",
            "enhancedmodel": "protein language model combined with CNN",
            "trainingsamples": 5000,
            "validationsamples": 1000,
            "evaluationmetric": "AUROC",
        }
        systemdescription = f"{primaryfield} experimental setup addressing: {researchquestion}"
        return ExperimentDesign(systemdescription, hypothesis, parameters, steps)

    def simulateexperiment(self, design: ExperimentDesign) -> ExperimentOutcome:
        # Synthetic scores: baseline near 0.78, non-negative gain near 0.05.
        # float() keeps plain Python floats so the values print cleanly in the report prompt.
        baselinescore = float(0.78 + 0.02 * np.random.randn())
        improvement = float(abs(0.05 + 0.01 * np.random.randn()))
        results = {
            "baselineAUROC": round(baselinescore, 3),
            "enhancedAUROC": round(baselinescore + improvement, 3),
            "performancegain": round(improvement, 3),
        }
        return ExperimentOutcome(design, results)
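The simulation above amounts to two Gaussian draws. The sketch below reproduces that noise model with the standard library only, under two assumptions that differ from the tutorial's code: it uses a local random.Random instance instead of a global seed, and it clamps scores to the valid AUROC range of 0 to 1. The name simulate_auroc is hypothetical.

```python
import random
from statistics import mean
from typing import Dict


def simulate_auroc(rng: random.Random) -> Dict[str, float]:
    """Draw one synthetic baseline/enhanced AUROC pair."""
    baseline = 0.78 + 0.02 * rng.gauss(0.0, 1.0)
    gain = abs(0.05 + 0.01 * rng.gauss(0.0, 1.0))
    # AUROC lies in [0, 1]; clamp so an unlucky draw cannot leave that range.
    baseline = min(max(baseline, 0.0), 1.0)
    enhanced = min(baseline + gain, 1.0)
    return {
        "baselineAUROC": round(baseline, 3),
        "enhancedAUROC": round(enhanced, 3),
        "performancegain": round(enhanced - baseline, 3),
    }


if __name__ == "__main__":
    rng = random.Random(42)  # local generator: reproducible without global state
    runs = [simulate_auroc(rng) for _ in range(1000)]
    print("mean baseline:", round(mean(r["baselineAUROC"] for r in runs), 3))
    print("mean gain:", round(mean(r["performancegain"] for r in runs), 3))
```

Averaging over many draws makes it clear that the reported improvement is a property of the noise model, not of the hypothesis being tested.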

Crafting Scientific Reports: Transforming Data into Clear Communication

To close the research cycle, the report agent assembles the hypothesis, experimental design, simulated results, and related literature into a single prompt. It then asks the language model for a structured report with five sections: Introduction, Methodology, Experimental Setup, Results and Analysis, and Limitations and Future Work.

class ReportGeneratorAgent:
    def createreport(self, researchquestion: str, papermatches: List[PaperMatch],
                     design: ExperimentDesign, outcome: ExperimentOutcome) -> str:
        # Join list items with real newlines so each one sits on its own prompt line.
        relatedpapers = "\n".join(f"- {p.paper['title']} ({p.paper['field']})" for p in papermatches)
        protocolsteps = "\n".join(f"- {step}" for step in design.steps)
        # The prompt body is flush-left so no code indentation leaks into the model input.
        prompt = f"""
You are an AI research assistant tasked with composing a concise scientific report.

Research Question:
{researchquestion}

Hypothesis:
{design.hypothesisstatement}

Relevant Literature:
{relatedpapers}

Experimental Design:
System Description: {design.systemdescription}
Parameters: {design.parameters}

Protocol Steps:
{protocolsteps}

Simulated Results:
{outcome.results}

Please write a clear report including the following sections:
- Introduction
- Methodology
- Experimental Setup
- Results and Analysis
- Limitations and Future Work
"""
        return generatetext(prompt.strip(), maxtokens=320)
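The report prompt interpolates Python dictionaries directly, so the model sees braces and quotes from their repr. One alternative, sketched below, is to render metrics as plain lines and assemble the prompt from titled sections. The helpers format_results, build_section, and build_prompt are hypothetical and not part of the tutorial's code; whether this helps a small model is an assumption to verify on your own prompts.

```python
from typing import Dict, List


def format_results(results: Dict[str, float]) -> str:
    """Render metrics as '- name: value' lines with three decimals."""
    return "\n".join(f"- {name}: {float(value):.3f}" for name, value in results.items())


def build_section(title: str, body: str) -> str:
    """One titled block of the prompt."""
    return f"{title}:\n{body.strip()}"


def build_prompt(sections: List[str], instruction: str) -> str:
    """Join sections with blank lines and end with the writing instruction."""
    return "\n\n".join(sections + [instruction])


if __name__ == "__main__":
    results = {"baselineAUROC": 0.79, "enhancedAUROC": 0.84, "performancegain": 0.05}
    prompt = build_prompt(
        [
            build_section("Research Question", "Does X improve Y?"),
            build_section("Simulated Results", format_results(results)),
        ],
        "Please write a clear report with Introduction and Results sections.",
    )
    print(prompt)
```

Keeping each section in its own function also makes it easier to shorten or drop a section when the prompt approaches the tokenizer's input limit.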

Integrating Components: The Complete Scientific Discovery Agent

We now combine the modules into one agent that runs the whole process, from literature search and hypothesis generation to experiment simulation and report writing. The agent also owns the hypothesis step: it passes the research question and the retrieved abstracts to the language model and asks for a short, testable hypothesis. The entry point at the bottom runs the pipeline on a sample question about protein language models and CRISPR off-target prediction.

class ScientificDiscoveryAgent:
    def __init__(self):
        self.literatureagent = LiteratureSearchAgent(vectorizer, corpusmatrix, LITERATURE)
        self.experimentagent = ExperimentationAgent()
        self.reportagent = ReportGeneratorAgent()

    def formulatehypothesis(self, researchquestion: str, papermatches: List[PaperMatch]) -> str:
        # Concatenate the retrieved abstracts as context for the model.
        abstracts = " ".join(p.paper["abstract"] for p in papermatches)
        prompt = f"""
You are an AI scientist. Based on the research question and related abstracts,
formulate a concise, testable hypothesis in 2-3 sentences.

Research Question:
{researchquestion}

Related Abstracts:
{abstracts}
"""
        return generatetext(prompt.strip(), maxtokens=96)

    def executeresearchpipeline(self, researchquestion: str) -> str:
        # Each stage consumes the output of the previous one.
        papermatches = self.literatureagent.searchpapers(researchquestion, topk=3)
        hypothesis = self.formulatehypothesis(researchquestion, papermatches)
        design = self.experimentagent.designexperiment(researchquestion, hypothesis, papermatches)
        outcome = self.experimentagent.simulateexperiment(design)
        report = self.reportagent.createreport(researchquestion, papermatches, design, outcome)
        return report


if __name__ == "__main__":
    question = (
        "In what ways can embeddings from protein language models enhance CRISPR off-target "
        "prediction accuracy compared to traditional sequence-based CNN models?"
    )
    agent = ScientificDiscoveryAgent()
    final_report = agent.executeresearchpipeline(question)
    print(final_report)
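The pipeline returns only the final report, and every run needs the language model. For debugging the orchestration itself, one option is to pass the generator and retriever in as functions and keep each stage's output. The sketch below illustrates that idea with stubs; PipelineTrace, run_pipeline, stub_generate, and stub_retrieve are hypothetical names, and the two-stage flow is a simplification of the tutorial's five stages.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PipelineTrace:
    """Intermediate artifacts of one run, kept for inspection."""
    question: str
    stages: Dict[str, str] = field(default_factory=dict)


def run_pipeline(
    question: str,
    generate: Callable[[str], str],
    retrieve: Callable[[str], List[str]],
) -> PipelineTrace:
    """Run retrieval, hypothesis, and report stages, recording each output."""
    trace = PipelineTrace(question)
    trace.stages["literature"] = "; ".join(retrieve(question))
    trace.stages["hypothesis"] = generate(
        f"Hypothesis for: {question}\nContext: {trace.stages['literature']}"
    )
    trace.stages["report"] = generate(
        f"Report on: {question}\nHypothesis: {trace.stages['hypothesis']}"
    )
    return trace


def stub_generate(prompt: str) -> str:
    """Stand-in for the language model: echoes the first prompt line."""
    return "[stub] " + prompt.splitlines()[0]


def stub_retrieve(question: str) -> List[str]:
    """Stand-in for the search agent: returns fixed titles."""
    return ["Paper A", "Paper B"]


if __name__ == "__main__":
    trace = run_pipeline("Does X improve Y?", stub_generate, stub_retrieve)
    for stage, output in trace.stages.items():
        print(f"{stage}: {output}")
```

With this shape, the real text generation function and search agent can be swapped in for the stubs, and a failing stage can be identified from the trace rather than from the final report alone.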

Summary and Future Directions

This tutorial shows how a compact codebase can chain literature retrieval, hypothesis generation, experimental planning, simulation, and report writing into a single pipeline. Each component is deliberately simple: retrieval runs over five papers, the experiment parameters are fixed, and the results are simulated rather than measured. The framework can be extended with larger and more diverse literature collections, stronger language models, and more realistic experimental simulations, moving it closer to a practical AI-assisted research tool.