Introducing HunyuanOCR: Tencent’s Specialized 1B-Parameter Vision-Language Model for OCR and Document Analysis

Tencent Hunyuan has released HunyuanOCR, a vision-language model (VLM) with 1 billion parameters built specifically for optical character recognition (OCR) and document understanding. Based on Hunyuan’s proprietary multimodal framework, the model handles text spotting, document parsing, information extraction, visual question answering (VQA), and text image translation within a single end-to-end pipeline.

Efficient and Focused: A Lightweight Alternative to Large-Scale VLMs

While large general-purpose models such as Gemini 2.5 and Qwen3 VL dominate the broader VLM landscape, HunyuanOCR is a compact model tailored to OCR-centric applications. It targets production scenarios such as parsing complex documents, extracting fields from cards and receipts, capturing subtitles from videos, and translating multilingual documents. Despite its small size, it matches or surpasses considerably larger models on several of the OCR benchmarks reported below.

Innovative Architecture: Native Resolution Vision Transformer Meets Adaptive Language Modeling

HunyuanOCR’s architecture is composed of three integral components:

- Native Resolution Visual Encoder (Hunyuan ViT): Based on the SigLIP-v2-400M backbone, this encoder accepts arbitrary input resolutions through adaptive patching that preserves the original aspect ratio. Global attention runs over patches taken at the image’s native proportions, which helps with long text lines, long documents, and low-quality scans.

- Adaptive MLP Connector: This module performs learnable pooling across spatial dimensions, condensing dense visual tokens into a shorter sequence while preserving critical information from text-rich regions. This compression reduces the computational load on the language model without sacrificing OCR-relevant details.

- Lightweight Language Model: Built on the dense Hunyuan 0.5B model, it uses XD-RoPE, which splits the rotary position embedding into four subspaces (text, height, width, and time). This lets the model align one-dimensional token sequences with two-dimensional page layouts and three-dimensional spatiotemporal structure, so multi-column pages, cross-page flows, and sequences of video frames are all handled by the same architecture.
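The sketch below illustrates, under stated assumptions, how an image could become a compact sequence of visual tokens with four-axis positions. The patch size (16), the 2x2 merge, the 4,096-patch budget, and the position-assignment rule (modeled on M-RoPE-style schemes) are hypothetical choices, not HunyuanOCR's published configuration. The connector's learnable pooling appears here only through its effect on token count, and the code produces position indices rather than the rotary embeddings themselves.

```python
import math
from typing import List, Tuple

PATCH = 16          # assumed ViT patch size in pixels (hypothetical)
MERGE = 2           # assumed k x k spatial pooling performed by the connector
MAX_PATCHES = 4096  # assumed budget of raw encoder patches per image


def patch_grid(width: int, height: int) -> Tuple[int, int]:
    """Return (rows, cols) of encoder patches, keeping the aspect ratio up to rounding.

    Each side is floored to a multiple of PATCH * MERGE so the connector can
    pool whole k x k blocks; large images are first downscaled uniformly
    toward the patch budget.
    """
    unit = PATCH * MERGE
    scale = 1.0
    n_native = (width / PATCH) * (height / PATCH)
    if n_native > MAX_PATCHES:
        scale = math.sqrt(MAX_PATCHES / n_native)
    cols = max(1, math.floor(width * scale / unit)) * MERGE
    rows = max(1, math.floor(height * scale / unit)) * MERGE
    return rows, cols


def xd_positions(n_text_before: int, rows: int, cols: int,
                 n_text_after: int = 0, frame: int = 0) -> List[Tuple[int, int, int, int]]:
    """Assign (text, height, width, time) indices for text, one image, then text.

    Assumed rule: text tokens use the same index on all four axes; visual
    tokens share the index where the image starts on the text axis and spread
    along the height and width axes by their grid coordinates.
    """
    pos: List[Tuple[int, int, int, int]] = []
    for i in range(n_text_before):
        pos.append((i, i, i, i))
    t0 = n_text_before
    for r in range(rows):
        for c in range(cols):
            pos.append((t0, t0 + r, t0 + c, t0 + frame))
    nxt = t0 + max(rows, cols)  # resume text just past the image's largest extent
    for j in range(n_text_after):
        k = nxt + j
        pos.append((k, k, k, k))
    return pos


# A wide, short crop such as a single receipt line.
rows, cols = patch_grid(1000, 200)
m_rows, m_cols = rows // MERGE, cols // MERGE
print(f"{rows * cols} encoder patches -> {m_rows * m_cols} tokens for the LLM")
# 744 encoder patches -> 186 tokens for the LLM
positions = xd_positions(3, m_rows, m_cols, n_text_after=2)
print(positions[3], positions[-1])
# (3, 3, 3, 3) (35, 35, 35, 35)
```

The receipt-line crop roughly keeps its 5:1 shape instead of being squashed to a square, and the 2x2 merge shortens the sequence the language model sees by a factor of four. Text after the image resumes past the image's largest grid extent, so its positions do not collide with those of the visual tokens.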

Training and inference are fully end-to-end, with no external layout-analysis or post-processing modules. Every task is expressed as a natural-language prompt and handled by the single model in one pass, which limits error propagation between pipeline stages and simplifies deployment.

Comprehensive Multilingual Dataset and Robust Pretraining Strategy

The training data comprises over 200 million image-text pairs drawn from nine real-world scenarios: street scenes, official documents, advertisements, handwritten notes, screenshots, identity cards and invoices, gaming interfaces, video frames, and artistic typography. The corpus covers more than 130 languages.

To supplement real data, synthetic samples are generated with a multilingual rendering engine that supports right-to-left scripts and paragraph-level layouts. The pipeline varies fonts, languages, rotations, and color channels, and applies realistic distortions such as warping, blurring, and localized lighting changes to mimic difficult capture conditions like mobile photography.
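A synthetic pipeline of this kind has to keep its labels consistent with every geometric change it makes. The sketch below samples a hypothetical set of augmentation parameters and rotates a text box's corner points about a center point to match; the `AugParams` fields, value ranges, and font names are illustrative assumptions, and the actual image warping, blurring, and relighting are left to an imaging library.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]

FONTS = ["serif", "sans", "handwriting-like"]  # placeholder font families


@dataclass
class AugParams:
    """Hypothetical augmentation settings for one synthetic sample."""
    font: str
    rotation_deg: float
    warp_strength: float
    blur_sigma: float
    light_gain: float  # multiplicative brightness change for a local region


def sample_params(rng: random.Random) -> AugParams:
    """Draw one illustrative set of distortion parameters."""
    return AugParams(
        font=rng.choice(FONTS),
        rotation_deg=rng.uniform(-15.0, 15.0),
        warp_strength=rng.uniform(0.0, 0.3),
        blur_sigma=rng.uniform(0.0, 1.5),
        light_gain=rng.uniform(0.6, 1.4),
    )


def rotate_quad(quad: List[Point], deg: float, center: Point) -> List[Point]:
    """Rotate a text box's corners by `deg` around `center`.

    This is the standard rotation matrix; with image coordinates (y pointing
    down) a positive angle appears clockwise on screen, so use the same
    convention as the routine that rotates the image itself.
    """
    theta = math.radians(deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    cx, cy = center
    out = []
    for x, y in quad:
        dx, dy = x - cx, y - cy
        out.append((cx + dx * cos_t - dy * sin_t, cy + dx * sin_t + dy * cos_t))
    return out


rng = random.Random(0)
params = sample_params(rng)
box = [(10.0, 10.0), (110.0, 10.0), (110.0, 40.0), (10.0, 40.0)]
print(params.font, rotate_quad(box, params.rotation_deg, center=(60.0, 25.0)))
```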

Before reinforcement learning, HunyuanOCR is trained in four progressive stages:

- Vision-Language Alignment: Trained on 50 billion tokens with an 8,000-token context window, this stage aligns the vision side with the language model using a mix of pure text, synthetic parsing and recognition data, and general caption data.

- Multimodal Pretraining: Conducted on 300 billion tokens, this stage blends pure text with synthetic samples for spotting, parsing, translation, and VQA tasks.

- Extended Context Training: Expanding the context length to 32,000 tokens, this phase focuses on long documents and extended text sequences using 80 billion tokens.

- Application-Specific Fine-Tuning: Supervised fine-tuning on 24 billion tokens of human-annotated data and hard negative samples, keeping the 32,000-token context and using unified instruction templates.
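Written as data, the schedule is easier to compare. The sketch below encodes the four stages as hypothetical config objects using the token budgets and context lengths given above; the stage-2 context length is not stated, so it is assumed to stay at 8,000 tokens. Summing the budgets gives about 454 billion tokens, of which stage 2 accounts for roughly two thirds.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    tokens_b: int     # training tokens, in billions
    context_len: int  # maximum sequence length in tokens


STAGES: List[Stage] = [
    Stage("vision-language alignment", 50, 8_000),
    Stage("multimodal pretraining", 300, 8_000),  # context length assumed, not stated
    Stage("long-context training", 80, 32_000),
    Stage("application-specific SFT", 24, 32_000),
]


def check_schedule(stages: List[Stage]) -> None:
    """Sanity-check that the context window never shrinks between stages."""
    for prev, cur in zip(stages, stages[1:]):
        if cur.context_len < prev.context_len:
            raise ValueError(f"{cur.name} shrinks context from {prev.context_len}")


def total_tokens_b(stages: List[Stage]) -> int:
    """Total training tokens across all stages, in billions."""
    return sum(s.tokens_b for s in stages)


check_schedule(STAGES)
total = total_tokens_b(STAGES)
for s in STAGES:
    share = 100 * s.tokens_b / total
    print(f"{s.name:<28} {s.tokens_b:>4}B tokens  ctx {s.context_len:>6}  ({share:.1f}%)")
print(f"total: {total}B tokens")  # total: 454B tokens
```

Keeping the schedule as immutable data makes it easy to check invariants, such as the context window only growing, before launching a run.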

Advanced Reinforcement Learning with Verifiable Reward Mechanisms

Beyond supervised learning, HunyuanOCR is further refined with reinforcement learning (RL) using Group Relative Policy Optimization (GRPO) and verifiable reward functions designed for structured tasks:

- Text Spotting: Rewards are calculated based on the intersection-over-union (IoU) of detected bounding boxes and normalized edit distance between predicted and ground truth text.

- Document Parsing: The reward metric relies on normalized edit distance comparing generated document structures against reference annotations.

- Visual Question Answering and Translation: An LLM-based judge scores the outputs, giving a binary semantic-match reward for VQA and a COMET-style score normalized to the range 0 to 1 for translation.

Across all tasks, training enforces strict output-length and format constraints: invalid or malformed outputs receive zero reward. This stabilizes optimization and pushes the model toward well-formed JSON and other structured responses.
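The reward logic above can be sketched in a few functions. This is an illustrative reconstruction, not Tencent's code: the JSON output schema for spotting, the IoU threshold of 0.5, the way IoU and edit distance are combined, and the character limits are all assumptions. The final function computes GRPO-style group-relative advantages by standardizing each sampled output's reward against the mean and standard deviation of its group.

```python
import json
import statistics
from typing import Any, List, Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), axis-aligned


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance using two rolling rows (quadratic time)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (ca != cb)))  # substitution
        prev = cur
    return prev[-1]


def norm_edit_distance(a: str, b: str) -> float:
    """Edit distance divided by the longer length, so it lies in [0, 1]."""
    return edit_distance(a, b) / max(len(a), len(b), 1)


def iou(p: Box, g: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(p[2], g[2]) - max(p[0], g[0]))
    iy = max(0.0, min(p[3], g[3]) - max(p[1], g[1]))
    inter = ix * iy
    union = (p[2] - p[0]) * (p[3] - p[1]) + (g[2] - g[0]) * (g[3] - g[1]) - inter
    return inter / union if union > 0 else 0.0


def _is_item(it: Any) -> bool:
    """Assumed schema: {"text": str, "box": [x1, y1, x2, y2]}."""
    return (isinstance(it, dict) and isinstance(it.get("text"), str)
            and isinstance(it.get("box"), list) and len(it["box"]) == 4
            and all(isinstance(v, (int, float)) for v in it["box"]))


def parse_items(output: str, max_chars: int) -> Optional[List[dict]]:
    """Length and format gate; None means the output earns zero reward."""
    if len(output) > max_chars:
        return None
    try:
        items = json.loads(output)
    except json.JSONDecodeError:
        return None
    if not isinstance(items, list) or not all(_is_item(it) for it in items):
        return None
    return items


def spotting_reward(output: str, gt: Sequence[Tuple[Box, str]],
                    max_chars: int = 4096, iou_thr: float = 0.5) -> float:
    """Mean over ground-truth lines of (1 - NED), counted only when IoU >= iou_thr."""
    preds = parse_items(output, max_chars)
    if preds is None or not gt:
        return 0.0
    total = 0.0
    for g_box, g_text in gt:
        scored = [(iou(tuple(p["box"]), g_box), p["text"]) for p in preds]
        best_iou, best_text = max(scored, default=(0.0, ""))
        if best_iou >= iou_thr:
            total += 1.0 - norm_edit_distance(best_text, g_text)
    return total / len(gt)


def parsing_reward(output: str, reference: str, max_chars: int = 32_000) -> float:
    """Document parsing: 1 - NED against the reference markup, gated on length."""
    if len(output) > max_chars:
        return 0.0
    return 1.0 - norm_edit_distance(output, reference)


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages: rewards standardized within one sampled group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


gt = [((0, 0, 100, 20), "TOTAL 12.50")]
group = [
    '[{"text": "TOTAL 12.50", "box": [0, 0, 100, 20]}]',  # exact match
    '[{"text": "TOTAL 12.5O", "box": [2, 0, 100, 22]}]',  # one wrong character
    "TOTAL 12.50",                                         # not JSON, so zero reward
]
rewards = [spotting_reward(o, gt) for o in group]
print(rewards, group_advantages(rewards))
```

In a GRPO step, several outputs are sampled for the same image, each is scored with the matching reward, and `group_advantages` converts the scores into relative advantages. A malformed answer scores zero and so receives a below-average advantage whenever other samples in its group scored higher.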

Benchmark Performance: A Compact Model Outperforming Larger Competitors

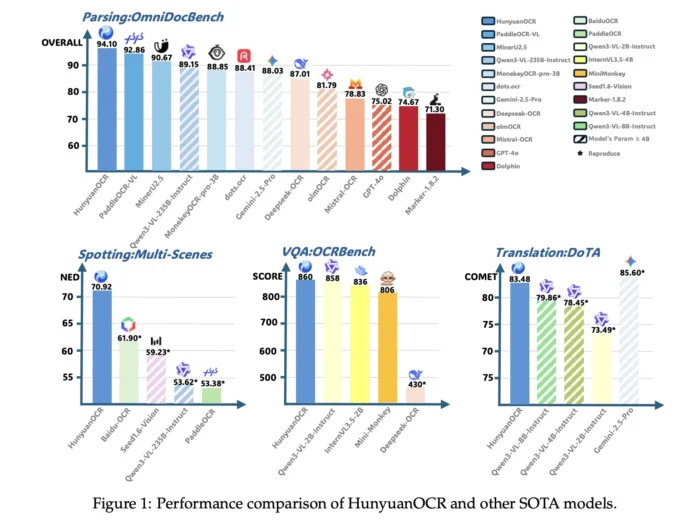

On an internal text spotting benchmark of 900 images across nine categories, HunyuanOCR achieves an overall score of 70.92, surpassing traditional OCR pipelines such as PaddleOCR and BaiduOCR as well as larger general-purpose VLMs such as Gemini 2.5 Pro, Qwen3 VL 2B, and Seed 1.6 Vision.

On OmniDocBench, it reaches an overall score of 94.10, including 94.73 on formula recognition and 91.81 on table parsing. On the harder Wild OmniDocBench, which simulates document folds and lighting variations, it scores 85.21. On DocML, a multilingual parsing benchmark covering 14 languages other than Chinese and English, HunyuanOCR sets a new state of the art with a score of 91.03.

For information extraction and VQA tasks, the model records accuracies of 92.29% on cards, 92.53% on receipts, and 92.87% on video subtitle extraction. On OCRBench, it achieves a score of 860, outperforming similarly sized models like DeepSeek OCR and rivaling larger VLMs such as Qwen3 VL 2B Instruct and Gemini 2.5 Pro.

In text image translation, HunyuanOCR excels on the DoTA benchmark for English-to-Chinese document translation, securing a top COMET score and winning first place in the Track 2.2 OCR-Free Small Model category at the ICDAR 2025 DIMT competition.

Summary of Key Innovations and Advantages

- Compact, End-to-End OCR VLM: Integrates a 0.4B native resolution Vision Transformer with a 0.5B language model via an adaptive MLP connector, enabling unified execution of spotting, parsing, information extraction, VQA, and translation without external layout or detection modules.

- Extensive Multilingual and Multimodal Support: Trained on over 200 million image-text pairs across nine diverse scenarios, covering 130+ languages during training and supporting 100+ languages in deployment.

- Robust Training Pipeline with Reinforcement Learning: Uses a four-stage schedule of pretraining and supervised fine-tuning, followed by reinforcement learning with verifiable rewards, to improve performance on structured OCR tasks.

- State-of-the-Art Performance Among Sub-3B Parameter Models: Achieves leading results among models under 3B parameters on multiple OCR and document understanding benchmarks, and on several of them outperforms larger commercial and open-source models.

Conclusion: A Milestone in Practical OCR Vision-Language Models

HunyuanOCR marks a notable step for OCR-specialized vision-language models, moving them from benchmark experiments toward production-grade use. Tencent’s combination of a native-resolution Vision Transformer, an adaptive MLP connector, and reinforcement learning with verifiable rewards yields a single instruction-driven model that covers a wide range of OCR tasks across many languages. Its compact size and strong benchmark results make it a promising candidate for real-world document analysis, where both accuracy and efficiency matter.