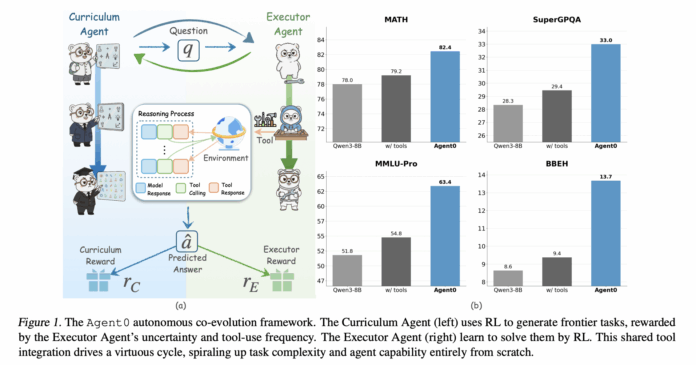

Large language models traditionally depend on large amounts of human-curated training data. But what if a model could design its own learning curriculum and learn to use external tools on its own? Researchers from UNC-Chapel Hill, Salesforce Research, and Stanford University introduce Agent0, a fully autonomous framework that evolves high-performing agents without relying on any external datasets. It does so through multi-step co-evolution of two agents combined with integrated tool use.

Introducing Agent0: Self-Directed Learning Through Co-Evolution

Agent0 is designed to enhance both mathematical and general reasoning capabilities. By generating carefully crafted tasks and incorporating tool-assisted problem-solving, it pushes a foundational language model beyond its initial limits. The framework was tested across ten diverse benchmarks, demonstrating significant performance improvements.

Dual-Agent Architecture from a Single Base Model

Starting with a base policy, such as Qwen3 4B Base or Qwen3 8B Base, Agent0 creates two specialized agents:

- Curriculum Agent (πθ): Responsible for generating new tasks.

- Executor Agent (πϕ): Tasked with solving these problems using a Python-based tool.

The training unfolds iteratively in two main phases per cycle:

- Curriculum Evolution: The curriculum agent produces a batch of tasks. For each task, the executor generates multiple solutions. A composite reward evaluates the executor’s uncertainty, frequency of tool usage, and task diversity. The curriculum agent is then updated using Group Relative Policy Optimization (GRPO) based on this reward.

- Executor Evolution: With the curriculum agent fixed, it generates a large set of tasks. Agent0 filters these to retain only those near the executor’s current skill frontier. The executor is then trained on this refined set using an ambiguity-aware reinforcement learning method called Ambiguity Dynamic Policy Optimization (ADPO).

This cyclical feedback loop ensures that as the executor improves, especially through better tool use, the curriculum agent must generate more challenging, tool-dependent tasks to keep its own reward high.
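The two-phase cycle can be sketched as a plain control loop. This is only a schematic under stated assumptions: the five callables (`propose`, `self_consistency`, `curriculum_reward`, `update_curriculum`, `update_executor`) are hypothetical stand-ins for the model sampling and RL updates, and the batch sizes are illustrative rather than values from the paper.

```python
from typing import Callable, List, Tuple


def co_evolve(
    propose: Callable[[int], List[str]],            # hypothetical: curriculum agent samples n tasks
    self_consistency: Callable[[str], float],       # hypothetical: executor agreement on a task
    curriculum_reward: Callable[[str], float],      # hypothetical: composite reward for a task
    update_curriculum: Callable[[List[Tuple[str, float]]], None],
    update_executor: Callable[[List[str]], None],
    iterations: int = 3,
    batch_size: int = 4,      # illustrative value
    pool_size: int = 16,      # illustrative value
    low: float = 0.3,
    high: float = 0.8,
) -> List[int]:
    """Run the two-phase cycle and return the frontier-set size per iteration."""
    frontier_sizes = []
    for _ in range(iterations):
        # Phase 1: curriculum evolution. Score a batch of proposed tasks
        # and update the curriculum agent on those rewards.
        batch = propose(batch_size)
        scored = [(task, curriculum_reward(task)) for task in batch]
        update_curriculum(scored)

        # Phase 2: executor evolution. The curriculum agent is held fixed;
        # keep only tasks near the executor's frontier and train on them.
        pool = propose(pool_size)
        frontier = [t for t in pool if low <= self_consistency(t) <= high]
        update_executor(frontier)
        frontier_sizes.append(len(frontier))
    return frontier_sizes
```

Passing the model-facing pieces in as callables keeps the sketch independent of any particular training framework.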

Evaluating Task Difficulty: How the Curriculum Agent Measures Success

The curriculum agent’s reward system integrates three key components:

- Uncertainty Reward: For each task, the executor produces multiple answers. The fraction of responses agreeing with the majority vote, termed self-consistency (p̂(x)), is calculated. Tasks with p̂ near 0.5 receive the highest reward, encouraging challenges that are neither too simple nor impossible.

- Tool Usage Reward: The executor can invoke a sandboxed Python interpreter during problem-solving. The number of tool calls is tracked and rewarded up to a cap (set to 4 in experiments), incentivizing tasks that require active tool engagement rather than mere mental calculation.

- Repetition Penalty: To avoid generating redundant tasks, Agent0 measures similarity between tasks using a BLEU-based metric. Larger clusters of similar tasks incur penalties, promoting diversity within each batch.

The final composite reward combines these factors, adjusted by a format validation check, and guides the curriculum agent’s updates via GRPO.
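To make the three components concrete, here is a minimal sketch of one way they could be combined. The exact functional forms are assumptions, not taken from the article: the uncertainty term is modeled as a linear peak at p̂ = 0.5, the tool term as a weighted capped count, and the repetition penalty as a function of the size of the similarity cluster a task falls into. The weights and the `cluster_size` input (which would come from the BLEU-based clustering) are hypothetical.

```python
from collections import Counter
from typing import List


def self_consistency(answers: List[str]) -> float:
    """Fraction of sampled answers that agree with the majority answer."""
    counts = Counter(answers)
    return counts.most_common(1)[0][1] / len(answers)


def uncertainty_reward(p_hat: float) -> float:
    """Assumed form: 1.0 at p_hat == 0.5, falling linearly to 0.0 at 0 or 1."""
    return 1.0 - 2.0 * abs(p_hat - 0.5)


def tool_reward(num_tool_calls: int, cap: int = 4, weight: float = 0.1) -> float:
    """Reward tool calls up to a cap (4 in the experiments); weight is assumed."""
    return weight * min(num_tool_calls, cap)


def repetition_penalty(cluster_size: int, batch_size: int, weight: float = 1.0) -> float:
    """Assumed form: grows with the number of near-duplicates in the batch."""
    return weight * (cluster_size - 1) / batch_size


def curriculum_reward(
    answers: List[str],
    num_tool_calls: int,
    cluster_size: int,
    batch_size: int,
    format_ok: bool,
) -> float:
    """Composite reward, gated by the format check and floored at zero."""
    if not format_ok:
        return 0.0
    raw = (
        uncertainty_reward(self_consistency(answers))
        + tool_reward(num_tool_calls)
        - repetition_penalty(cluster_size, batch_size)
    )
    return max(0.0, raw)
```

Under these assumptions, a task on which the executor splits evenly and calls the tool several times scores highest, while a task the executor always answers identically earns no uncertainty reward at all.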

Executor Training: Learning from Ambiguous Self-Generated Labels

The executor agent is trained with a GRPO-style objective on multi-turn trajectories that interleave natural language reasoning, Python code execution, and tool feedback. Because no ground-truth answers are available, the reward relies on pseudo labels derived from majority voting.

Constructing the Frontier Dataset

After each curriculum phase, the frozen curriculum agent generates a large candidate task pool. Agent0 filters this pool to retain tasks where the executor’s self-consistency score falls within an informative range (e.g., 0.3 to 0.8), focusing training on problems that are challenging yet solvable.
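The filtering step might look like the following sketch. It assumes the executor's sampled answers for each candidate task are already available as strings; the function name `frontier_filter` and the input layout are hypothetical, while the 0.3 to 0.8 band is the example range mentioned above.

```python
from collections import Counter
from typing import Dict, List, Tuple


def frontier_filter(
    sampled_answers: Dict[str, List[str]],
    low: float = 0.3,
    high: float = 0.8,
) -> List[Tuple[str, str, float]]:
    """Keep tasks whose self-consistency lies in [low, high].

    Returns (task, majority_answer, self_consistency) triples, so the
    majority answer can serve as the pseudo label during training.
    """
    kept = []
    for task, answers in sampled_answers.items():
        majority, count = Counter(answers).most_common(1)[0]
        p_hat = count / len(answers)
        if low <= p_hat <= high:
            kept.append((task, majority, p_hat))
    return kept
```

Tasks the executor always agrees on (p̂ = 1.0) are dropped as too easy, and tasks where almost no two samples agree are dropped as too noisy to label.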

Multi-Turn, Tool-Integrated Reasoning

For each frontier task, the executor produces a reasoning trajectory that interleaves:

- Natural language explanations,

- Python code snippets,

- Outputs from the sandboxed interpreter.

When the executor issues a tool call, generation pauses while the code runs in a sandboxed environment powered by VeRL Tool, then resumes conditioned on the output. The trajectory ends when the model emits a final answer wrapped in \boxed{...}. Majority voting across multiple trajectories establishes the pseudo label, which in turn determines each trajectory's terminal reward.
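The rollout and voting logic can be illustrated with a small sketch. Everything model- or sandbox-specific is injected: `generate` and `run_code` are hypothetical callables, and the code-tag markup used to delimit a tool call is invented for this example, not the format Agent0 actually uses. Only the \boxed{...} final-answer convention comes from the description above.

```python
import re
from collections import Counter
from typing import Callable, List, Optional, Tuple

# Hypothetical tool-call markup, chosen only for this illustration.
CODE_RE = re.compile(r"<code>(.*?)</code>", re.DOTALL)
# Final answer wrapped in \boxed{...} (nested braces are not handled here).
BOXED_RE = re.compile(r"\\boxed\{([^{}]*)\}")


def rollout(
    prompt: str,
    generate: Callable[[str], str],   # hypothetical: model continues the context
    run_code: Callable[[str], str],   # hypothetical: sandbox returns stdout text
    max_turns: int = 4,
) -> Optional[str]:
    """Interleave generation and tool calls until a boxed answer appears."""
    context = prompt
    for _ in range(max_turns):
        segment = generate(context)
        context += segment
        boxed = BOXED_RE.search(segment)
        if boxed:
            return boxed.group(1).strip()
        code = CODE_RE.search(segment)
        if code is None:
            break  # neither a tool call nor a final answer
        # Pause generation, run the code, and feed the result back in.
        context += "\n[tool output]\n" + run_code(code.group(1)) + "\n"
    return None


def majority_vote(answers: List[Optional[str]]) -> Tuple[Optional[str], float]:
    """Return the pseudo label and the fraction of rollouts that agree with it."""
    valid = [a for a in answers if a is not None]
    if not valid:
        return None, 0.0
    label, count = Counter(valid).most_common(1)[0]
    return label, count / len(answers)


def terminal_reward(answer: Optional[str], pseudo_label: Optional[str]) -> float:
    """1.0 if the trajectory's answer matches the pseudo label, else 0.0."""
    return 1.0 if answer is not None and answer == pseudo_label else 0.0
```

Rollouts that never produce a boxed answer count against the agreement fraction here, which is one possible design choice among several.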

Ambiguity Dynamic Policy Optimization (ADPO)

Standard GRPO treats all samples equally, which can destabilize training when labels are noisy or ambiguous. ADPO addresses this by:

- Scaling the advantage function based on self-consistency, reducing the impact of uncertain tasks.

- Dynamically adjusting the upper clipping bound of the importance ratio according to task ambiguity, enhancing exploration on uncertain problems.

This adaptive approach stabilizes reinforcement learning updates when relying on pseudo labels derived from majority votes.
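A rough sketch of these two modifications, written against a standard clipped surrogate objective, is shown below. The specific choices are assumptions made for illustration and are not confirmed by the article: the advantage is scaled linearly by the self-consistency p̂, and the upper clipping bound widens linearly as p̂ drops. The parameter names and default values are hypothetical.

```python
from statistics import mean, pstdev
from typing import List


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Group-relative advantages: rewards standardized within one task's group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def upper_clip(p_hat: float, base: float = 0.2, extra: float = 0.2) -> float:
    """Assumed rule: a looser upper bound for more ambiguous (low p_hat) tasks."""
    return base + extra * (1.0 - p_hat)


def adpo_surrogate(
    ratios: List[float],     # importance ratios pi_new / pi_old per sample
    rewards: List[float],    # terminal rewards for the same samples
    p_hat: float,            # self-consistency of the task
    eps_low: float = 0.2,
) -> float:
    """Clipped surrogate with ambiguity-scaled advantages and dynamic upper clip."""
    eps_high = upper_clip(p_hat)
    terms = []
    for ratio, adv in zip(ratios, group_advantages(rewards)):
        adv *= p_hat  # assumed scaling: down-weight low-confidence pseudo labels
        clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
        terms.append(min(ratio * adv, clipped * adv))
    return mean(terms)
```

With these defaults, a task with p̂ = 1.0 is clipped at 1.2 as in a fixed-bound setup, while a task with p̂ = 0.5 contributes half-size advantages but lets the ratio rise to 1.3 before clipping.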

Performance Highlights on Mathematical and General Reasoning Benchmarks

Agent0 was implemented atop the VeRL framework and tested using Qwen3 4B Base and Qwen3 8B Base models, with a sandboxed Python interpreter as the sole external tool.

The evaluation spanned ten benchmarks, including:

- Mathematical Reasoning: AMC, Minerva, MATH, GSM8K, Olympiad Bench, AIME24, AIME25.

- General Reasoning: SuperGPQA, MMLU Pro, BBEH.

Metrics reported include pass@1 for most datasets and mean@32 for AMC and AIME tasks.
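For readers unfamiliar with the two metrics, the sketch below shows how they are commonly computed; it assumes mean@32 means accuracy averaged over 32 sampled answers per problem and then over problems, which is the usual reading but is not spelled out in the article. The function names are illustrative.

```python
from typing import List


def pass_at_1(first_sample_correct: List[bool]) -> float:
    """Fraction of problems solved by a single sampled answer."""
    return sum(first_sample_correct) / len(first_sample_correct)


def mean_at_k(per_problem_correct: List[List[bool]]) -> float:
    """Average per-problem accuracy, each computed over k sampled answers."""
    per_problem = [sum(samples) / len(samples) for samples in per_problem_correct]
    return sum(per_problem) / len(per_problem)
```

Averaging over many samples reduces variance on small test sets such as AMC and AIME, where a single problem can shift the score by several points.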

On the Qwen3 8B Base model, Agent0 achieved:

- An average math score of 58.2, up from 49.2 for the base model.

- An overall general reasoning average of 42.1, compared to 34.5 for the base.

Agent0 also outperformed strong zero-data baselines such as R-Zero, Absolute Zero, SPIRAL, and Socratic-Zero, both with and without tool integration. Notably, it exceeded Socratic-Zero, which depends on external OpenAI APIs.

Over three co-evolution iterations, math performance on Qwen3 8B rose steadily from 55.1 to 58.2, with general reasoning improving in tandem. The trend indicates consistent self-improvement rather than performance degradation across iterations.

Qualitative analyses reveal that curriculum tasks evolve from straightforward geometry problems to intricate constraint satisfaction challenges, while executor trajectories skillfully combine reasoning narratives with Python code execution to arrive at correct solutions.

Summary of Core Contributions

- Data-Free Co-Evolution: Agent0 operates without any external datasets or human annotations, evolving two agents from a single base LLM through reinforcement learning and tool use.

- Frontier Curriculum via Self-Assessment: The curriculum agent leverages the executor’s self-consistency and tool engagement to generate tasks that are optimally challenging and tool-dependent.

- Robust RL with ADPO: The executor’s training incorporates Ambiguity Dynamic Policy Optimization, which mitigates instability from ambiguous pseudo labels by weighting tasks according to confidence and adapting clipping bounds.

- Significant Performance Gains: On Qwen3 8B Base, Agent0 improves math benchmark scores by approximately 18% and general reasoning by 24%, both measured relative to the base model's scores.

- Surpassing Previous Zero-Data Methods: Across multiple benchmarks, Agent0 outperforms prior self-evolving frameworks, including those utilizing external APIs or tools, highlighting the effectiveness of its co-evolution and tool integration strategy.

Concluding Remarks

Agent0 is a step toward practical, data-independent reinforcement learning for tool-augmented reasoning. By having a single base language model act as both curriculum designer and executor, and by combining GRPO and ADPO with the VeRL Tool sandbox, it achieves stable, continued self-improvement from noisy pseudo labels. The approach surpasses existing zero-data frameworks and positions self-evolving, tool-integrated LLM agents as a promising direction for future training methods.