Transforming Business Through Actionable AI Predictions

Developing an AI model capable of forecasting equipment malfunctions is a notable technical feat. However, the real business impact emerges only when those predictions translate into decisive action: when the AI system correctly identifies a failing machine and triggers a timely intervention. The first achievement may never leave a proof-of-concept demonstration; the second drives tangible improvements in operational efficiency and profitability.

Unlocking AI’s Potential: The Power of Inference

Craig Partridge, Senior Director of Digital Next Advisory at HPE, emphasizes that “the real value of AI is realized through inference.” Inference is the stage at which a trained model is put to work within everyday workflows, so that its outputs directly influence decisions and outcomes. Partridge describes this as “trusted AI inferencing at scale and in production,” and calls it the primary source of return on AI investments.
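To make the link between inference and action concrete, the Python sketch below revisits the equipment-failure example from the opening. It assumes a model has already been trained and outputs a failure probability for each machine. The `Prediction` type, the `decide_actions` function, the 0.8 threshold, and the work-order strings are hypothetical illustrations, not part of any HPE product or API.

```python
from dataclasses import dataclass

# Hypothetical cut-off: predictions at or above this probability trigger action.
FAILURE_THRESHOLD = 0.8


@dataclass
class Prediction:
    machine_id: str
    failure_probability: float  # assumed output of an already-trained model


def decide_actions(predictions: list[Prediction],
                   threshold: float = FAILURE_THRESHOLD) -> list[str]:
    """Turn model outputs (inference) into operational steps (hypothetical work orders)."""
    actions = []
    for p in predictions:
        if p.failure_probability >= threshold:
            actions.append(f"schedule_maintenance:{p.machine_id}")
    return actions


# Usage: one batch of predictions from a monitoring run (illustrative values).
batch = [
    Prediction("pump-07", 0.92),
    Prediction("fan-12", 0.15),
    Prediction("press-03", 0.81),
]
print(decide_actions(batch))
```

The point of the sketch is that a prediction creates value only once a rule like this connects it to an operational step, such as opening a maintenance ticket for the machines most likely to fail.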

Bridging the Gap: From Experimentation to Operational AI

Despite growing enthusiasm, many organizations struggle to move beyond pilot projects. According to Christian Reichenbach, Worldwide Digital Advisor at HPE, a recent survey of 1,775 IT leaders found that only 22% of companies have fully operationalized AI (up from 15% the previous year), while the majority remain in experimental phases.

Advancing to widespread AI adoption demands a strategic framework centered on three pillars: embedding trust as a foundational principle, adopting a data-centric approach, and empowering IT leadership to scale AI initiatives effectively.

Building Trust: The Cornerstone of Reliable AI Systems

For AI to be dependable, users must have confidence in the system’s outputs. That trust matters even for routine applications such as automated content creation or customer-support chatbots, and it is absolutely critical in high-risk settings such as robotic-assisted surgery or autonomous vehicle navigation.

Ensuring trustworthy inference begins with uncompromising data quality. Partridge’s guiding principle is clear: “Poor data input inevitably leads to flawed AI outputs.”

Reichenbach points to the proliferation of AI hallucinations, plausible-sounding but incorrect outputs, as a cautionary example. Such inaccuracies force employees into extensive fact-checking, eroding trust and undermining productivity gains. Conversely, when trust is embedded in AI inference, teams become more efficient. For instance, a network operations group equipped with a reliable AI assistant can receive precise, tailored troubleshooting advice around the clock, effectively gaining a new, tireless team member.

Embracing Data-Centric AI and the Emergence of the AI Factory

The initial AI surge focused heavily on recruiting data scientists and developing massive, complex models. Today, as organizations seek measurable business outcomes, the emphasis has shifted toward robust data engineering and architecture.

“The past five years have seen a critical shift toward dismantling data silos, integrating diverse data streams, and rapidly extracting actionable insights,” explains Reichenbach. This evolution coincides with the rise of the AI factory: a continuous production system in which data flows through pipelines and feedback loops to generate ongoing intelligence.

This transition from model-centric to data-centric AI raises strategic questions: How much of the AI model’s intelligence is proprietary? How unique and valuable is the input data derived from your customers, operations, or market?

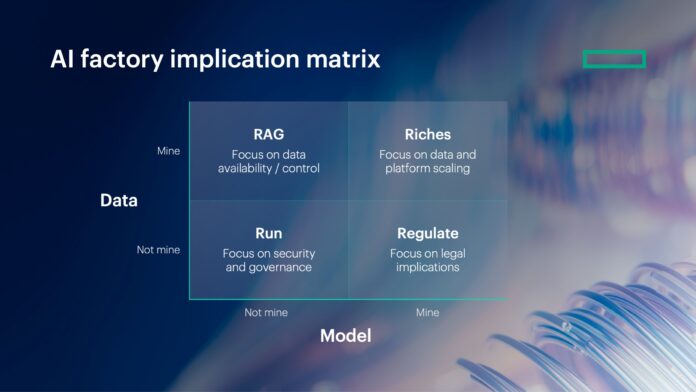

These considerations influence platform strategies, operational frameworks, engineering roles, and governance policies. To assist organizations in navigating these complexities, Partridge introduces HPE’s four-quadrant AI factory framework:

- Run: Utilizing externally pretrained models via APIs without owning the model or data. This approach demands stringent security, governance, and a dedicated center of excellence to oversee AI usage.

- Retrieval Augmented Generation (RAG): Combining external pretrained models with proprietary data to generate unique insights. Implementation focuses on seamless integration of data streams with inferencing capabilities for rapid, comprehensive AI solutions.

- Riches: Developing custom models trained on internal enterprise data to unlock distinct competitive advantages. This requires scalable, energy-efficient infrastructure and often high-performance computing resources.

- Regulate: Employing custom models trained on external data. This requires infrastructure similar to Riches, but with heightened emphasis on legal compliance and careful handling of sensitive data the organization does not own.
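One way to read the four quadrants is as a two-by-two grid along two axes: whether the model is externally pretrained or custom-built, and whether the data it relies on is proprietary or external. The Python sketch below encodes that reading. The enum names, the `ai_factory_quadrant` function, and the example use cases are illustrative assumptions, not part of HPE's published framework.

```python
from enum import Enum


class ModelSource(Enum):
    EXTERNAL_PRETRAINED = "external pretrained"  # e.g. consumed through an API
    CUSTOM = "custom"                            # trained by the organization


class DataSource(Enum):
    EXTERNAL = "external"        # data the organization does not own
    PROPRIETARY = "proprietary"  # internal enterprise data


def ai_factory_quadrant(model: ModelSource, data: DataSource) -> str:
    """Map model and data ownership onto one of the four quadrants (illustrative reading)."""
    if model is ModelSource.EXTERNAL_PRETRAINED:
        # External model: plain consumption (Run) or grounded in own data (RAG).
        return "RAG" if data is DataSource.PROPRIETARY else "Run"
    # Custom model: trained on own data (Riches) or on external data (Regulate).
    return "Riches" if data is DataSource.PROPRIETARY else "Regulate"


# Hypothetical portfolio: a single organization can occupy several quadrants at once.
use_cases = {
    "chatbot via vendor API": (ModelSource.EXTERNAL_PRETRAINED, DataSource.EXTERNAL),
    "assistant grounded in internal docs": (ModelSource.EXTERNAL_PRETRAINED, DataSource.PROPRIETARY),
    "network-operations model": (ModelSource.CUSTOM, DataSource.PROPRIETARY),
    "model trained on licensed third-party data": (ModelSource.CUSTOM, DataSource.EXTERNAL),
}

for name, (model, data) in use_cases.items():
    print(f"{name}: {ai_factory_quadrant(model, data)}")
```

Treating the quadrants as a function of two ownership questions makes it easy to tag each use case in an AI portfolio and see which governance and infrastructure requirements apply to it.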

These quadrants are complementary rather than exclusive. Partridge notes that many organizations, including HPE, operate across multiple quadrants simultaneously. For example, HPE builds proprietary models to analyze network operations and then integrates that intelligence into products that customers can deploy under the Run quadrant, gaining AI capabilities without owning the underlying data or models.

Scaling AI: IT’s Crucial Role in Enterprise-Wide Adoption

The phrase “at scale” in Partridge’s description of AI inferencing highlights a key challenge: solutions that succeed in isolated cases often falter when expanded across an entire organization.

“Experimentation is valuable for innovation,” Partridge acknowledges, “but to truly harness AI’s benefits, it must be accessible and effective across diverse use cases and teams.”

IT departments are uniquely positioned to lead this transformation. Their expertise in operationalizing technology at scale makes them essential players in moving AI from niche pilots to enterprise-wide systems. “IT’s role is to apply discipline and governance to scale AI responsibly,” Partridge asserts.

Ignoring this opportunity risks repeating past mistakes seen during the cloud migration era, when many IT teams ceded control to business units. This led to fragmented cloud environments, duplicated costs, and security vulnerabilities that took years to resolve.

A similar scenario is unfolding with AI, as “shadow AI” initiatives (uncoordinated experiments outside IT oversight) proliferate. Partridge observes that many organizations already experience this dynamic, with employees adopting AI tools regardless of formal authorization.

Rather than stifle innovation, IT must channel it by establishing structured governance, unified data platforms, and standardized infrastructure. Done well, this safeguards data integrity, protects brand reputation, and preserves the agility AI demands. These elements are essential to achieving the ultimate goal: AI fully integrated into production environments.

Strategic Clarity: Navigating the AI Factory Quadrants for Success

Reichenbach summarizes the path forward: “Success depends on understanding when to Run external models efficiently, when to enhance them with RAG, where to invest in Riches by leveraging proprietary data, and when to Regulate sensitive or external data responsibly.”

Organizations that clearly define their position across these quadrants and align technological ambitions with governance and value creation will emerge as leaders in the AI-driven future.