Revolutionizing AI Reasoning Through Video Generation

For years, advancements in artificial intelligence have focused on enhancing reasoning capabilities. Initially, chain-of-thought prompting enabled language models to articulate their logic stepwise rather than jumping directly to conclusions. Subsequently, vision-language models integrated visual data, anchoring reasoning in images. Both strategies yielded measurable improvements in AI performance.

However, a critical limitation remains largely unaddressed: neither approach can represent how things change over time.

The Limitations of Text and Static Images in AI Reasoning

Text-based reasoning is good at laying out a process step by step, but it remains inherently abstract. For spatial problems, such as geometric puzzles or checking how mechanical parts fit and move, expressing the relevant concepts purely in language becomes cumbersome, and verbal descriptions of spatial relationships often lack precision and clarity.

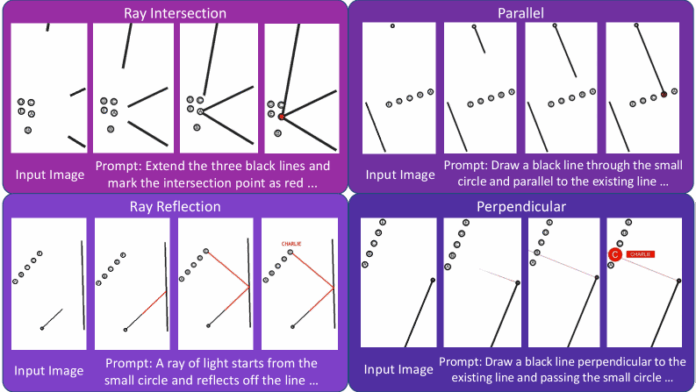

Images, on the other hand, capture only static snapshots. A single photo of a glass half full of water shows one moment, but it cannot show the act of pouring. Many reasoning problems are intrinsically temporal or spatial and need motion to capture the transitions involved: sketching shapes to validate a geometric construction, animating mechanical parts to test whether they work, or simulating stepwise transformations to detect a pattern. Static visuals cannot show how one state evolves into the next.

The Disconnect Between Visual and Textual Reasoning

Another fundamental issue is the architectural separation of text and vision in current AI systems. Today's models typically either reason about images through textual descriptions or ground text in visual cues, so they operate as two loosely connected systems rather than one integrated whole. This division prevents visual and textual reasoning from interacting seamlessly.

Introducing Video as a Medium for AI Thought

What if an AI could use video generation not just to present answers but to actively reason through problems? The idea is that models externalize their reasoning by generating motion sequences, in effect “thinking in video.”

Instead of silently computing solutions and outputting text, the AI animates its reasoning step-by-step. For instance, when solving a puzzle, it produces a video illustrating each stage of the solution. When identifying patterns, it generates frames that reveal the gradual transformation. This dynamic approach offers a richer, more intuitive representation of complex reasoning tasks.
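
As a toy analogy only (this is not how a video model works internally), the sketch below solves a hypothetical grid maze with breadth-first search and renders every step of the solution as one ASCII "frame". The names `MAZE`, `solve` and `render_frames` are invented for illustration. Played in order, the frames form a crude flipbook of the reasoning rather than a single final answer.

```python
from collections import deque

# Hypothetical toy maze: '#' is a wall, 'S' the start, 'G' the goal.
MAZE = [
    "S.#",
    "..#",
    "#.G",
]

def solve(maze):
    """Breadth-first search; returns the shortest path as a list of (row, col) cells."""
    rows, cols = len(maze), len(maze[0])
    start = next((r, c) for r in range(rows) for c in range(cols) if maze[r][c] == "S")
    goal = next((r, c) for r in range(rows) for c in range(cols) if maze[r][c] == "G")
    parent = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            break
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and maze[nr][nc] != "#" and (nr, nc) not in parent):
                parent[(nr, nc)] = cell
                queue.append((nr, nc))
    if goal not in parent:
        return []  # goal unreachable
    # Walk back from the goal to the start, then reverse.
    path, cell = [], goal
    while cell is not None:
        path.append(cell)
        cell = parent[cell]
    return path[::-1]

def render_frames(maze, path):
    """Render one ASCII frame per step, marking the agent's position with '@'."""
    frames = []
    for r0, c0 in path:
        grid = [list(row) for row in maze]
        grid[r0][c0] = "@"
        frames.append("\n".join("".join(row) for row in grid))
    return frames

if __name__ == "__main__":
    for i, frame in enumerate(render_frames(MAZE, solve(MAZE))):
        print(f"frame {i}:\n{frame}\n")
```

Each intermediate state is made explicit, so a reader (or a checker) can inspect the path one step at a time instead of trusting only the endpoint.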

Why Video Generation Enhances Logical Consistency

Modern video generation models, such as Sora-2, are trained to produce coherent sequences of frames that broadly respect physical laws and logical progression. Because each frame must plausibly follow from the previous one, this requirement acts as a built-in consistency check on the reasoning process. Text-based reasoning has no comparable temporal-coherence constraint; video generation, by contrast, demands that the model's thought process stay continuous and plausible over time.
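
The frame-to-frame constraint can be illustrated with an explicit checker. A video model learns coherence implicitly; the hypothetical `is_consistent` and `first_violation` functions below merely encode two toy rules for a grid world (walls never change, and the agent `@` moves at most one orthogonal cell per frame). The point of the sketch is that a sequence of frames exposes an implausible jump that a final answer alone would hide.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical frame format: a tuple of equal-length strings,
# where '#' is a wall and '@' is the agent.
Frame = Tuple[str, ...]

def agent_position(frame: Frame) -> Optional[Tuple[int, int]]:
    """Return (row, col) of the single '@', or None if it is missing or duplicated."""
    hits = [(r, c) for r, row in enumerate(frame) for c, ch in enumerate(row) if ch == "@"]
    return hits[0] if len(hits) == 1 else None

def is_consistent(prev: Frame, curr: Frame) -> bool:
    """Toy plausibility rule: same shape, walls unchanged, agent moves at most one cell."""
    if len(prev) != len(curr) or any(len(a) != len(b) for a, b in zip(prev, curr)):
        return False
    p, q = agent_position(prev), agent_position(curr)
    if p is None or q is None:
        return False
    # A wall must never appear, vanish, or be overwritten by the agent.
    for a, b in zip(prev, curr):
        for x, y in zip(a, b):
            if (x == "#") != (y == "#"):
                return False
    # Manhattan distance of at most 1: stay put or take one orthogonal step.
    return abs(p[0] - q[0]) + abs(p[1] - q[1]) <= 1

def first_violation(frames: Sequence[Frame]) -> Optional[int]:
    """Index of the first frame that does not follow from its predecessor, or None."""
    for i in range(1, len(frames)):
        if not is_consistent(frames[i - 1], frames[i]):
            return i
    return None
```

A sequence in which the agent jumps two cells between frames, or walks through a wall, is flagged at the index of the offending frame, while a step-by-step walk passes with `None`.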

Expanding the Horizons of Multimodal AI Reasoning

By enabling AI to “think” through video, we unlock a new dimension of multimodal reasoning that bridges the gap between visual and textual cognition. This approach not only enriches the AI’s problem-solving toolkit but also paves the way for more natural, integrated understanding of complex, dynamic phenomena.

Research into video-based reasoning is gaining momentum, and early experiments suggest gains on tasks that involve spatial-temporal understanding, such as robotics simulation and interactive design. This shift suggests that video might be the missing link to achieving truly unified, multimodal AI intelligence.