Researchers are increasingly focused on developing neural networks that enhance the transparency, debuggability, and governance of AI systems. Sparse neural networks, in particular, offer businesses clearer insights into the decision-making processes of AI models, fostering greater confidence in their outputs.

For organizations relying on AI-generated insights, comprehending the rationale behind model responses is crucial. This understanding builds trust and ensures that AI-driven decisions align with organizational goals and ethical standards.

Instead of solely evaluating models based on their performance after training, OpenAI researchers have adopted a novel approach that emphasizes interpretability through the use of sparse circuits. This method aims to reveal the internal workings of AI models by simplifying their complex architectures.

OpenAI highlights that much of the difficulty in interpreting AI models arises from their dense and intricate design. To overcome this, they propose innovative strategies that make the models’ internal processes more accessible and understandable.

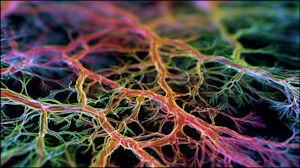

“Modern AI systems are powered by neural networks that, despite their capabilities, remain largely inscrutable,” OpenAI explained. “These models do not operate based on explicit instructions; instead, they learn by fine-tuning billions of internal parameters until they excel at a task. While we control the training framework, the specific behaviors that emerge are not directly programmed, resulting in a dense network of connections that defy straightforward human interpretation.”

Advancing Transparency in AI Models

Gaining insight into how AI models generate their outputs is essential, given the significant real-world consequences of their decisions. OpenAI defines interpretability as the set of techniques that clarify why a model produces a particular result.

There are multiple interpretability approaches, including chain-of-thought interpretability, which examines the step-by-step reasoning a model writes out on the way to an answer, and mechanistic interpretability, which involves dissecting the model’s mathematical framework to understand its inner mechanics.

OpenAI has concentrated efforts on mechanistic interpretability, acknowledging that while it has been less immediately practical, it holds the promise of delivering a more comprehensive explanation of model behavior. This approach seeks to explain AI decisions at the most fundamental level, reducing assumptions and increasing confidence in the model’s outputs. However, translating low-level details into explanations of complex behaviors remains a challenging and lengthy process.

Improved interpretability not only enhances oversight but also gives early warning when a model's behavior deviates from what is expected or from policy, which is critical for maintaining trust and safety in AI applications.

Although OpenAI admits that advancing mechanistic interpretability is an ambitious goal, recent progress with sparse neural networks has made this pursuit more feasible.

Decoding Neural Networks Through Sparsity

To simplify the tangled web of connections within neural networks, OpenAI’s team began by drastically reducing the number of active connections. Transformer models like GPT-2 typically contain thousands of interlinked pathways, so the researchers “zeroed out” many of these, allowing only a limited subset to remain active. This pruning results in a more organized and interpretable network structure.
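As a rough illustration of what "zeroing out" connections means, the sketch below keeps only the largest-magnitude entries of a small weight matrix and sets the rest to zero. The article does not say how OpenAI decides which connections stay active, so the magnitude rule, the `sparsify` helper, and the `keep_fraction` parameter are all assumptions made for this example.

```python
def sparsify(weights, keep_fraction):
    """Zero out all but the largest-magnitude entries of a weight matrix.

    Assumption for illustration: connections are ranked by absolute value.
    Ties at the cutoff are all kept, so slightly more than the requested
    fraction may survive.
    """
    magnitudes = sorted((abs(w) for row in weights for w in row), reverse=True)
    keep = max(1, int(len(magnitudes) * keep_fraction))
    threshold = magnitudes[keep - 1]
    return [[w if abs(w) >= threshold else 0.0 for w in row] for row in weights]


def count_nonzero(weights):
    """Count the connections that remain active."""
    return sum(1 for row in weights for w in row if w != 0.0)


# Toy 3x4 weight matrix: three strong connections, nine weak ones.
dense = [
    [0.90, -0.02, 0.10, 0.03],
    [0.01, -0.75, 0.04, 0.20],
    [-0.05, 0.06, 0.60, -0.01],
]

sparse = sparsify(dense, keep_fraction=0.25)
print(count_nonzero(dense), "->", count_nonzero(sparse))
```

With most entries set to zero, each output depends on only a handful of inputs, which is the property that makes the remaining pathways easier to follow by hand.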

Following this, the team employed “circuit tracing” techniques to identify clusters of interpretable circuits associated with specific tasks. The final step involved pruning the model further to isolate the smallest circuit that still achieves a target loss (set at 0.15), thereby pinpointing the precise nodes and weights responsible for particular behaviors.
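The idea of pruning down to a small circuit that still meets a loss target can be sketched on a toy linear model. The code below is a simple greedy stand-in, not OpenAI's procedure: it repeatedly removes whichever connection hurts the loss least and stops when any further removal would push the loss above 0.15. The names `mse` and `prune_to_circuit`, the toy weights, and the dataset are hypothetical, and a greedy search is not guaranteed to find the true minimum circuit.

```python
TARGET_LOSS = 0.15  # threshold quoted in the article


def mse(weights, mask, data):
    """Mean squared error of a linear model restricted to unmasked weights."""
    total = 0.0
    for x, y in data:
        pred = sum(w * m * xi for w, m, xi in zip(weights, mask, x))
        total += (pred - y) ** 2
    return total / len(data)


def prune_to_circuit(weights, data, target=TARGET_LOSS):
    """Greedily drop connections while the loss stays at or below the target."""
    mask = [1] * len(weights)
    while True:
        best_i, best_loss = None, None
        for i, active in enumerate(mask):
            if not active:
                continue
            trial = mask[:i] + [0] + mask[i + 1:]
            loss = mse(weights, trial, data)
            if loss <= target and (best_loss is None or loss < best_loss):
                best_i, best_loss = i, loss
        if best_i is None:
            return mask  # no remaining connection can be removed safely
        mask[best_i] = 0


# Toy task: y = 2*x0 - x2. Weights 1 and 3 are small and unnecessary.
weights = [2.0, 0.05, -1.0, 0.1]
data = [
    ((1, 1, 0, 1), 2.0),
    ((0, 1, 1, 0), -1.0),
    ((1, 0, 1, 1), 1.0),
    ((1, 1, 1, 0), 1.0),
]

circuit = prune_to_circuit(weights, data)
print("active connections:", [i for i, m in enumerate(circuit) if m])
```

In this toy case only the two connections that actually compute the target survive, which mirrors the goal of pinpointing the nodes and weights responsible for a behavior.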

“Our experiments demonstrate that pruning weight-sparse models produces circuits approximately 16 times smaller than those derived from dense models with comparable pretraining loss,” the researchers reported. “Moreover, we can construct highly accurate circuits by allowing more connections, indicating that simple behaviors are significantly more disentangled and localized in sparse models compared to dense ones.”

Implications for Model Training and Enterprise Use

While these sparse models are more interpretable, they currently remain smaller than the large-scale foundation models commonly deployed in enterprise environments. Nonetheless, as AI systems continue to evolve, even cutting-edge models are expected to benefit from enhanced interpretability techniques.

Other AI developers are also prioritizing transparency. For example, Anthropic has recently unveiled new interpretability tools, which have garnered attention from industry leaders. Similarly, companies like Cohere are actively researching methods to better understand the reasoning processes of their models.

As AI becomes increasingly integral to critical business decisions and customer interactions, ongoing research into model interpretability will be vital. Providing organizations with clear explanations of AI behavior will help build the trust necessary for widespread adoption and responsible use.