Maya Research has introduced Maya1, a text-to-speech (TTS) model with 3 billion parameters. It turns written text plus a brief voice description into expressive speech and runs in real time on a single GPU.

Understanding Maya1’s Capabilities

Maya1 generates lifelike, emotionally expressive speech. It is designed to reproduce the nuances of human voices and lets users customize the voice directly through a text description.

The model requires two primary inputs:

- A descriptive voice prompt in natural language, such as “Young female with a Southern American accent, cheerful and articulate” or “Villain character, deep male voice, rough texture, deliberate pacing.”

- The actual text content to be vocalized.

By integrating these inputs, Maya1 produces audio that aligns with both the message and the specified vocal style. Additionally, users can embed over 20 emotion tags within the text (examples include <laugh>, <sigh>, <whisper>, <angry>, and <cry>) to further refine expressiveness.

The output is high-quality 24 kHz mono audio with real-time streaming capabilities, making it ideal for applications such as virtual assistants, interactive gaming, podcasting, and live broadcasts.

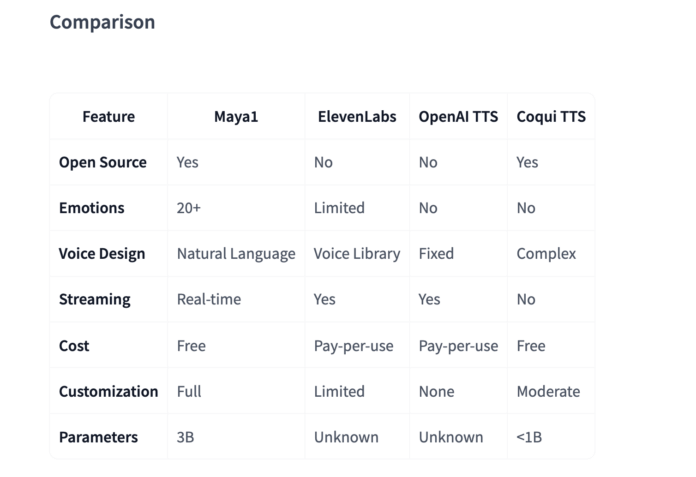

According to Maya Research, Maya1 surpasses many leading proprietary TTS systems while remaining fully open source under the Apache 2.0 license.

Technical Framework and the SNAC Codec

Maya1 is built as a 3-billion-parameter, decoder-only transformer model inspired by the LLaMA architecture. Instead of generating raw audio waveforms directly, it predicts discrete tokens from a neural audio codec called SNAC.

The audio generation pipeline follows this sequence:

Text → Tokenization → SNAC token generation (7 tokens per audio frame) → Decoding → 24 kHz audio output

SNAC employs a hierarchical, multi-scale structure operating at approximately 12 Hz, 23 Hz, and 47 Hz. This design compresses the autoregressive sequence length while maintaining fine audio detail. The codec is optimized for real-time streaming at a low bitrate of around 0.98 kbps.
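
The bitrate figure can be sanity-checked with a little arithmetic. The sketch below relies on details that are not stated above and should be read as assumptions: a finest-level rate of 24000 / 512 = 46.875 tokens per second, two coarser levels at one half and one quarter of that rate, and a 4096-entry codebook (12 bits per token) at each level.

```python
import math

SAMPLE_RATE_HZ = 24_000
FINEST_HOP = 512           # assumed: audio samples per finest-level token
LEVEL_STRIDES = (4, 2, 1)  # assumed: coarse, mid, fine strides relative to the finest level
CODEBOOK_SIZE = 4096       # assumed: entries per codebook

finest_rate = SAMPLE_RATE_HZ / FINEST_HOP                # 46.875 Hz
level_rates = [finest_rate / s for s in LEVEL_STRIDES]   # about 12, 23, and 47 Hz
tokens_per_second = sum(level_rates)
bits_per_token = math.log2(CODEBOOK_SIZE)
bitrate_kbps = tokens_per_second * bits_per_token / 1000

# One coarse token spans two mid tokens and four fine tokens: 1 + 2 + 4.
tokens_per_frame = sum(max(LEVEL_STRIDES) // s for s in LEVEL_STRIDES)

print(f"{tokens_per_second:.2f} tokens/s, {bitrate_kbps:.3f} kbps, "
      f"{tokens_per_frame} tokens per frame")
```

Under these assumptions the three streams add up to about 82 tokens per second of audio, which is also the rate the transformer must sustain to keep up with playback.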

Crucially, the transformer works on discrete codec tokens rather than continuous waveform samples. A separate SNAC decoder (e.g., hubertsiuzdak/snac_24khz) reconstructs the final audio waveform. This modular approach enhances generation efficiency and scalability compared to direct waveform prediction methods.
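
To make the hand-off between the transformer and the codec concrete, here is a minimal sketch of splitting a flat stream of 7-token frames into the three code lists a hierarchical decoder would consume. The slot order inside each frame and the function names `unpack_frames` and `pack_frames` are assumptions for illustration; the released code may order the slots differently and may also subtract a vocabulary offset, which is omitted here.

```python
from typing import List, Tuple

FRAME_SIZE = 7  # tokens per audio frame, as stated in the pipeline above


def unpack_frames(tokens: List[int]) -> Tuple[List[int], List[int], List[int]]:
    """Split flat 7-token frames into (coarse, mid, fine) code lists.

    Assumed slot layout per frame: [c, m0, f0, f1, m1, f2, f3].
    """
    usable = len(tokens) - len(tokens) % FRAME_SIZE  # drop a trailing partial frame
    coarse, mid, fine = [], [], []
    for i in range(0, usable, FRAME_SIZE):
        c, m0, f0, f1, m1, f2, f3 = tokens[i:i + FRAME_SIZE]
        coarse.append(c)
        mid.extend([m0, m1])
        fine.extend([f0, f1, f2, f3])
    return coarse, mid, fine


def pack_frames(coarse: List[int], mid: List[int], fine: List[int]) -> List[int]:
    """Inverse of unpack_frames under the same assumed layout."""
    tokens: List[int] = []
    for i, c in enumerate(coarse):
        m0, m1 = mid[2 * i:2 * i + 2]
        f0, f1, f2, f3 = fine[4 * i:4 * i + 4]
        tokens.extend([c, m0, f0, f1, m1, f2, f3])
    return tokens
```

Each frame contributes one coarse, two mid, and four fine codes, which matches the 1:2:4 ratio between the three SNAC rates.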

Data Preparation and Voice Conditioning Strategies

Maya1’s training began with large-scale pretraining on diverse English speech datasets to capture wide acoustic variability and natural speech flow. It was subsequently fine-tuned on a carefully curated proprietary collection of studio-quality recordings. These recordings carry human-verified voice descriptions and per-sample emotion tags covering more than 20 emotions, and they span multiple English dialects and various character roles.

The data processing pipeline involves:

- Resampling audio to 24 kHz mono with loudness normalized to approximately -23 LUFS

- Voice activity detection to trim silence, with clip durations bounded to between 1 and 14 seconds

- Forced alignment using Montreal Forced Aligner to identify phrase boundaries

- Text deduplication via MinHash Locality Sensitive Hashing (LSH)

- Audio deduplication using Chromaprint fingerprinting

- Encoding audio into SNAC tokens with 7-token frame packing
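
As an illustration of the text deduplication step, the sketch below computes MinHash signatures and uses them to estimate how similar two transcripts are. It is a standard-library approximation, not Maya Research's pipeline: the shingle size, signature length, and all function names are illustrative choices, and the LSH banding step that avoids comparing every pair is left out.

```python
import hashlib
from typing import List, Set

NUM_HASHES = 128   # signature length (illustrative choice)
SHINGLE_WORDS = 3  # words per shingle (illustrative choice)


def shingles(text: str, k: int = SHINGLE_WORDS) -> Set[str]:
    """Return the set of overlapping k-word shingles in a transcript."""
    words = text.lower().split()
    if len(words) < k:
        return {" ".join(words)}
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def _hash(seed: int, shingle: str) -> int:
    """Seeded 64-bit hash; each seed acts as one independent hash function."""
    data = f"{seed}:{shingle}".encode("utf-8")
    return int.from_bytes(hashlib.blake2b(data, digest_size=8).digest(), "big")


def minhash_signature(text: str, num_hashes: int = NUM_HASHES) -> List[int]:
    """Keep the minimum hash value per seed over all shingles."""
    items = shingles(text)
    return [min(_hash(seed, s) for s in items) for seed in range(num_hashes)]


def estimated_jaccard(sig_a: List[int], sig_b: List[int]) -> float:
    """Fraction of matching positions approximates Jaccard similarity."""
    matches = sum(a == b for a, b in zip(sig_a, sig_b))
    return matches / len(sig_a)
```

Transcripts whose estimated similarity exceeds a chosen threshold would be treated as near-duplicates, with only one copy kept.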

For voice conditioning, the team experimented with various input formats. Simple colon-separated or key-value tags either caused the model to read the description verbatim or failed to generalize well. The most effective method uses an XML-style attribute wrapper that naturally encodes voice descriptions alongside the text, ensuring robustness and flexibility.

This approach allows developers to specify voice characteristics in free-form language, similar to briefing a voice actor, without needing to learn complex parameter schemas.
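
As a rough illustration of the XML-style wrapper, the sketch below builds a prompt from a free-form description and tagged text. The exact template (a `description` attribute followed by the text), the helper name `build_prompt`, and the tag allow-list (limited to the tags named in this article) are assumptions; the model card is the authority on the real format and the full tag set.

```python
import re

# Only the tags named in this article; the full set is said to exceed 20.
KNOWN_TAGS = {"laugh", "sigh", "whisper", "angry", "cry", "gasp"}
TAG_PATTERN = re.compile(r"<([a-z_]+)>")


def build_prompt(description: str, text: str) -> str:
    """Wrap a voice description and tagged text in an assumed XML-style template."""
    unknown = set(TAG_PATTERN.findall(text)) - KNOWN_TAGS
    if unknown:
        raise ValueError(f"Unrecognized emotion tags: {sorted(unknown)}")
    # A double quote would end the attribute early, so replace it.
    safe_description = description.replace('"', "'").strip()
    return f'<description="{safe_description}"> {text.strip()}'


print(build_prompt(
    "Villain character, deep male voice, rough texture, deliberate pacing",
    "You came back. <laugh> How brave.",
))
```

Validating tags before generation is a design choice made here to catch typos early; a misspelled tag might otherwise be read aloud or ignored.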

Efficient Inference and Single-GPU Deployment

The official Python example on Hugging Face demonstrates loading Maya1 with:

AutoModelForCausalLM.from_pretrained("maya-research/maya1", torch_dtype=torch.bfloat16, device_map="auto")

and the SNAC decoder via:

SNAC.from_pretrained("hubertsiuzdak/snac_24khz")
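
Put together, the two calls might be wrapped as in the sketch below. It assumes the `torch`, `transformers`, and `snac` packages are installed; loading a tokenizer with `AutoTokenizer` and the helper name `load_maya1` are additions made here for illustration rather than part of the quoted example.

```python
MODEL_ID = "maya-research/maya1"
CODEC_ID = "hubertsiuzdak/snac_24khz"


def load_maya1(model_id: str = MODEL_ID, codec_id: str = CODEC_ID):
    """Return (model, tokenizer, codec). Downloads weights on first call."""
    # Imported lazily so the heavy dependencies are only needed when loading.
    import torch
    from snac import SNAC
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model = AutoModelForCausalLM.from_pretrained(
        model_id, torch_dtype=torch.bfloat16, device_map="auto"
    )
    tokenizer = AutoTokenizer.from_pretrained(model_id)  # assumed companion tokenizer
    codec = SNAC.from_pretrained(codec_id).eval()  # decoder is used for inference only
    return model, tokenizer, codec
```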

Recommended hardware includes a single GPU with at least 16 GB of VRAM, such as NVIDIA A100, H100, or consumer-grade RTX 4090 cards.
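
A back-of-the-envelope estimate shows why 16 GB leaves room to spare. The sketch assumes exactly 3 billion parameters stored in bfloat16 and counts weights only; the KV cache, activations, and the SNAC decoder add to the total, and 16 GB is treated as 16 GiB for simplicity.

```python
PARAMS = 3_000_000_000  # nominal parameter count from the article
BYTES_PER_PARAM = 2     # bfloat16 stores each weight in 16 bits
GIB = 1024 ** 3

weights_gib = PARAMS * BYTES_PER_PARAM / GIB
headroom_gib = 16 - weights_gib  # left for KV cache, activations, and the codec

print(f"weights: {weights_gib:.2f} GiB, headroom on a 16 GiB card: {headroom_gib:.2f} GiB")
```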

For production environments, Maya Research offers a vllm_streaming_inference.py script that integrates with the vLLM framework. This setup supports features like Automatic Prefix Caching for repeated voice prompts, a WebAudio ring buffer for smooth playback, multi-GPU scaling, and latency under 100 milliseconds, enabling real-time applications.

Additional resources provided include:

- An interactive Hugging Face Space demo where users can input text and voice descriptions to hear generated speech

- GGUF quantized versions of Maya1 optimized for lightweight deployments using llama.cpp

- A ComfyUI node that encapsulates Maya1 with built-in emotion tag support and SNAC codec integration

All these tools utilize the official model weights and interfaces, ensuring consistency across implementations.

Summary of Key Features

- Maya1 is a 3-billion-parameter, decoder-only LLaMA-style TTS model that predicts SNAC codec tokens instead of raw audio, delivering 24 kHz mono output with streaming support.

- It accepts two inputs: a natural language voice description and the text to be spoken, with support for over 20 inline emotion tags like <laugh>, <cry>, <whisper>, and <gasp> to finely control expressiveness.

- The training pipeline combines large-scale English speech pretraining with studio-quality fine-tuning, including loudness normalization, voice activity detection, forced alignment, and deduplication of text and audio.

- The model runs efficiently on a single GPU with 16+ GB VRAM using bfloat16 precision, integrates with a SNAC decoder, and supports low-latency streaming via vLLM with Automatic Prefix Caching.

- Released under the Apache 2.0 license, Maya1 comes with official weights, a Hugging Face demo, quantized variants, and ComfyUI integration, making expressive, controllable TTS accessible for both commercial and local use.

Final Thoughts

Maya1 marks a significant advancement in open-source text-to-speech technology, entering a domain traditionally dominated by closed-source commercial APIs. Its 3-billion-parameter LLaMA-style decoder, combined with SNAC codec token prediction, real-time single-GPU operation, and rich emotional control, provides a versatile foundation for real-time conversational agents, gaming, and creative tools. Maya1 demonstrates that high-quality, expressive, and controllable TTS can be both open and production-ready, empowering developers and creators worldwide.