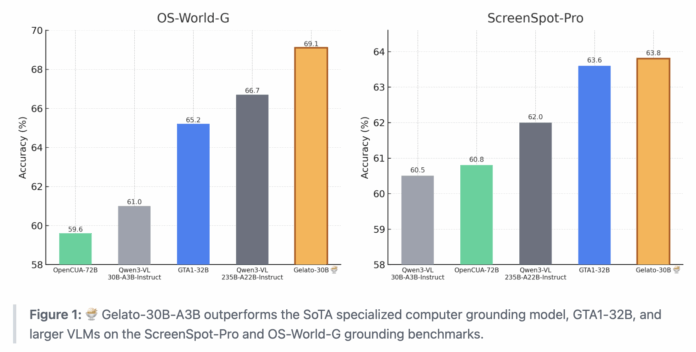

How can an AI agent reliably click the exact on-screen element that a plain-language instruction refers to? Researchers from ML Foundations have released Gelato-30B-A3B, a grounding model for graphical user interfaces (GUIs). It is designed to plug into computer-use agents and translate natural language commands into exact click coordinates. Trained on the Click 100k dataset, Gelato-30B-A3B reaches 63.88% accuracy on ScreenSpot Pro, 69.15% on OS-World-G, and 74.65% on OS-World-G Refined. It outperforms previous leading models such as GTA1-32B, and even much larger vision-language models such as Qwen3-VL-235B-A22B-Instruct.

Gelato-30B-A3B: Revolutionizing GUI Interaction in AI Agents

Gelato-30B-A3B is a 31-billion-parameter mixture-of-experts (MoE) model fine-tuned from Qwen3-VL-30B-A3B-Instruct. It takes a screenshot and a textual instruction as input and outputs a single click location on the screen.

Gelato-30B-A3B acts as a modular grounding component. A high-level planner (GPT-5 in the Gelato experiments) decides the next action and delegates the job of locating the exact click point to Gelato. This separation of planning from grounding matters for agents that must work across many operating systems and applications, each with its own interface layout.
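To make the planner/grounder split concrete, here is a minimal Python sketch of the contract a grounding model like Gelato fills. The `Grounder` protocol, the `Click` type, and `execute_click_step` are hypothetical names for illustration, not Gelato's actual API. A real grounder would run the model on the screenshot.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Protocol


@dataclass(frozen=True)
class Click:
    x: int  # pixel column on the screenshot
    y: int  # pixel row on the screenshot


class Grounder(Protocol):
    """Hypothetical grounding interface: (screenshot, instruction) -> one click, or None."""

    def ground(self, screenshot: bytes, instruction: str) -> Optional[Click]: ...


def execute_click_step(
    instruction: str,
    screenshot: bytes,
    grounder: Grounder,
    click: Callable[[Click], None],
) -> bool:
    """Run one planner-issued click step.

    The planner says *what* to click, in natural language. The grounder decides
    *where*. Returns False if the grounder could not locate the element.
    """
    target = grounder.ground(screenshot, instruction)
    if target is None:
        return False
    click(target)
    return True
```

Because the planner only ever passes text, the grounder can be swapped out, for example GTA1-32B for Gelato, without changing the planner.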

Click 100k: A Comprehensive Dataset for GUI Grounding

Gelato is built on the Click 100k dataset. Each entry pairs a computer screenshot with a natural language instruction, a bounding box for the target element, and normalized coordinates. Entries are low-level commands, for example “tap the element located between Background and Notifications”, each paired with the exact clickable region.
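A Click 100k record can be pictured as the small data type below. This sketch rests on several assumptions. The field names are hypothetical, boxes are taken to be pixel `(x_min, y_min, x_max, y_max)` tuples, and "normalized coordinates" is assumed to mean values rescaled to [0, 1] by the screenshot's width and height.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ClickSample:
    """One hypothetical Click 100k-style record (field names are illustrative)."""

    image_path: str
    instruction: str
    bbox: Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels
    image_size: Tuple[int, int]  # (width, height) in pixels

    def normalized_bbox(self) -> Tuple[float, float, float, float]:
        """Bounding box rescaled to [0, 1] relative to the screenshot size."""
        w, h = self.image_size
        x0, y0, x1, y1 = self.bbox
        return (x0 / w, y0 / h, x1 / w, y1 / h)

    def normalized_center(self) -> Tuple[float, float]:
        """Center of the target region in normalized coordinates."""
        x0, y0, x1, y1 = self.normalized_bbox()
        return ((x0 + x1) / 2, (y0 + y1) / 2)


# Illustrative record: the instruction is the one quoted above; the box and size are made up.
sample = ClickSample(
    image_path="settings.png",
    instruction="tap the element located between Background and Notifications",
    bbox=(100, 50, 300, 150),
    image_size=(1000, 500),
)
```

Normalizing by screen size lets screenshots of different resolutions share one coordinate system.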

Click 100k aggregates and cleans data from multiple public sources: ShowUI, AutoGUI, PC Agent E, WaveUI, OS Atlas, UGround, PixMo Points, SeeClick, UI VISION, and a JEDI subset focused on spreadsheet and text cell manipulation. It also adds annotations from more than 80 professional tutorial videos. These annotations were generated with Claude-4-Sonnet and then manually verified. Each source contributes at most 50,000 samples, and all samples are converted to a single schema of images, instructions, and bounding boxes.
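Merging heterogeneous sources under a per-source cap could look roughly like the sketch below. The adapter functions, the uniform random subsampling used to enforce the 50,000-sample cap, and the shared field names are all assumptions for illustration. The text only states the cap and that every source is mapped to one schema of images, instructions, and bounding boxes.

```python
import random
from typing import Callable, Dict, List

MAX_PER_SOURCE = 50_000  # per-source cap stated for Click 100k

# An adapter maps one raw record from a source into the shared schema:
# {"image": ..., "instruction": ..., "bbox": (x0, y0, x1, y1)}.
Adapter = Callable[[dict], dict]


def merge_sources(
    sources: Dict[str, List[dict]],
    adapters: Dict[str, Adapter],
    cap: int = MAX_PER_SOURCE,
    seed: int = 0,
) -> List[dict]:
    """Cap each source, convert it to the shared schema, and tag its origin."""
    rng = random.Random(seed)  # fixed seed so the subsample is reproducible
    merged: List[dict] = []
    for name in sorted(sources):
        records = sources[name]
        if len(records) > cap:
            records = rng.sample(records, cap)  # uniform subsample (an assumption)
        to_shared = adapters[name]
        for record in records:
            unified = to_shared(record)
            unified["source"] = name
            merged.append(unified)
    return merged
```

Keeping source-specific parsing in per-source adapters leaves the merge loop generic. The `source` tag also makes it possible to balance or audit the mix later.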

To ensure data quality, the team applies a three-stage filtering pipeline. First, OmniParser removes samples whose click falls outside every detected interface element. Next, Qwen2.5-VL-7B and SE-GUI-3B remove trivial samples, such as simple hyperlink clicks. Finally, GTA1-7B-2507 and UI-Venus-7B discard samples whose instruction does not match the click target. Training a Qwen2.5-VL-7B baseline on a balanced 10k-sample subset of the filtered data improves ScreenSpot Pro accuracy by 9 percentage points compared with training on unfiltered data.
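The three filtering stages can be sketched as composable keep/drop predicates. In this sketch, plain callables stand in for the models, and each stage's rule is an assumed reading of the description:

- The target box's center must lie on a detected element.
- A sample is "trivial" if every small model already clicks inside its box.
- A pair is "mismatched" if no strong grounder clicks inside its box.

All function names are hypothetical.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1)
Point = Tuple[float, float]
Predictor = Callable[[dict], Point]  # stand-in for a call to a grounding model
KeepFn = Callable[[dict], bool]  # True means "keep this sample"


def inside(p: Point, b: Box) -> bool:
    return b[0] <= p[0] <= b[2] and b[1] <= p[1] <= b[3]


def center(b: Box) -> Point:
    return ((b[0] + b[2]) / 2, (b[1] + b[3]) / 2)


def on_detected_element(detect: Callable[[dict], Sequence[Box]]) -> KeepFn:
    # Parser stage (OmniParser's role): the target must sit on some detected UI element.
    return lambda s: any(inside(center(s["bbox"]), d) for d in detect(s))


def not_trivial(small_models: Sequence[Predictor]) -> KeepFn:
    # Difficulty stage: drop samples that every small model already gets right.
    return lambda s: not all(inside(m(s), s["bbox"]) for m in small_models)


def not_mismatched(strong_models: Sequence[Predictor]) -> KeepFn:
    # Consistency stage: if no strong grounder lands in the box, the
    # instruction and box probably disagree, so the sample is dropped.
    return lambda s: any(inside(m(s), s["bbox"]) for m in strong_models)


def run_filters(samples: List[dict], filters: Dict[str, KeepFn]) -> Tuple[List[dict], Dict[str, int]]:
    """Apply filters in order and report how many samples each one removed."""
    kept: List[dict] = samples
    dropped: Dict[str, int] = {}
    for name, keep in filters.items():
        survivors = [s for s in kept if keep(s)]
        dropped[name] = len(kept) - len(survivors)
        kept = survivors
    return kept, dropped
```

Returning per-stage drop counts makes it easy to see how much data each filter removes before any training run.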

Advanced Training with GRPO Reinforcement Learning

Gelato-30B-A3B is trained with GRPO (Group Relative Policy Optimization), the policy-gradient reinforcement learning algorithm introduced in DeepSeekMath. Following the DAPO recipe, training drops the KL divergence penalty, raises the upper clipping threshold to 0.28, and skips rollouts with zero advantage. The reward is sparse: as in GTA1 training, the model is rewarded only when its predicted click falls inside the target bounding box.
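The pieces of this recipe fit in a few functions. The sketch below is a simplified, framework-free illustration, not Gelato's training code. It computes four things:

- the sparse in-box reward;
- GRPO's group-normalized advantages;
- a token-averaged clipped surrogate loss with no KL term;
- a skip rule for groups whose rewards are all equal.

The upper clip bound of 0.28 comes from the text. The lower bound of 0.2 is an assumption taken from DAPO's defaults, and all function names are hypothetical.

```python
from statistics import mean, pstdev
from typing import List, Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1)

EPS_LOW = 0.2    # lower clip bound: DAPO's default, assumed here
EPS_HIGH = 0.28  # upper clip bound ("clip-higher"), the 0.28 reported for Gelato


def click_reward(pred: Tuple[float, float], bbox: Box) -> float:
    """Sparse reward: 1 if the predicted click lands inside the target box, else 0."""
    x, y = pred
    x0, y0, x1, y1 = bbox
    return 1.0 if (x0 <= x <= x1 and y0 <= y <= y1) else 0.0


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """GRPO advantage: each rollout's reward normalized against its own group."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def clipped_term(ratio: float, advantage: float) -> float:
    """PPO-style clipped surrogate with asymmetric (DAPO-style) clip bounds."""
    clipped = min(max(ratio, 1.0 - EPS_LOW), 1.0 + EPS_HIGH)
    return min(ratio * advantage, clipped * advantage)


def grpo_group_loss(
    token_ratios: Sequence[Sequence[float]], rewards: Sequence[float]
) -> Optional[float]:
    """Loss for one group of rollouts sampled for the same (screenshot, instruction).

    token_ratios[i][t] is pi_new / pi_old for token t of rollout i.
    Returns None when the group is skipped.
    """
    # With 0/1 rewards, every advantage is zero exactly when all rewards are
    # equal, so such groups carry no learning signal and are dropped.
    if max(rewards) == min(rewards):
        return None
    advantages = group_advantages(rewards)
    # Token-level average over the whole group; note there is no KL penalty term.
    terms = [clipped_term(r, a) for ratios, a in zip(token_ratios, advantages) for r in ratios]
    return -sum(terms) / len(terms)
```

The asymmetric clip allows a larger step up for low-probability tokens with positive advantage than the symmetric PPO clip would.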

Training starts from Qwen3-VL-30B-A3B-Instruct and runs for 100 reinforcement learning steps on 32 A100 GPUs with 40 GB of memory each. The best checkpoint, at step 84, is chosen by its average performance across ScreenSpot Pro, OS-World-G, and OS-World-G Refined. At that step, the model reaches 63.88% on ScreenSpot Pro, 67.19% on OS-World-G, and 73.40% on OS-World-G Refined. Adding a refusal prompt, which instructs the model to refuse when the target element cannot be found, raises the OS-World-G and OS-World-G Refined scores to 69.15% and 74.65%, respectively.
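Checkpoint selection and refusal handling are both simple to express. In the sketch below, `select_checkpoint` picks the step with the best mean score over the three benchmarks, and `parse_answer` distinguishes a click from an explicit refusal. The refusal wording, the `(x, y)` output format, and all names are hypothetical. Gelato's actual prompt and output format may differ.

```python
import re
from statistics import mean
from typing import Dict, Tuple, Union

BENCHMARKS = ("ScreenSpot Pro", "OS-World-G", "OS-World-G Refined")


def select_checkpoint(scores: Dict[int, Dict[str, float]]) -> int:
    """Return the training step with the highest mean accuracy over BENCHMARKS."""
    return max(scores, key=lambda step: mean(scores[step][b] for b in BENCHMARKS))


# Hypothetical refusal instruction appended to the grounding prompt.
REFUSAL_HINT = (
    "If the described element is not visible on the screen, "
    "reply with REFUSE instead of coordinates."
)
REFUSED = "REFUSED"  # sentinel returned when the model declines to click

# Matches an "(x, y)" pair of integers or decimals anywhere in the output.
_COORDS = re.compile(r"\(\s*(-?\d+(?:\.\d+)?)\s*,\s*(-?\d+(?:\.\d+)?)\s*\)")


def parse_answer(text: str) -> Union[Tuple[float, float], str, None]:
    """Map raw model output to a click, an explicit refusal, or None if unparsable."""
    if "REFUSE" in text.upper():
        return REFUSED
    match = _COORDS.search(text)
    if match is None:
        return None
    return (float(match.group(1)), float(match.group(2)))
```

For step 84, the three scores reported above average (63.88 + 67.19 + 73.40) / 3 ≈ 68.16%. Keeping refusals separate from unparsable output lets an evaluator credit a refusal when the target really is absent.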

Real-World Agent Performance in OS World Environment

To evaluate Gelato-30B-A3B beyond static benchmarks, the researchers integrated it into a full agent framework operating within the OS World environment. Here, GPT-5 serves as the planner, while Gelato handles grounding. The agent is allowed up to 50 steps per task, with a 3-second pause between actions to simulate realistic interaction speeds.
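The evaluation loop can be pictured as the sketch below. It assumes a hypothetical action vocabulary with only `click` and `done`. The callables `observe`, `plan`, `ground`, and `execute` are injected and stand in for the OS World environment, GPT-5, and Gelato. Only the 50-step budget and the 3-second pause come from the text.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

MAX_STEPS = 50       # step budget per task, as in the evaluation
PAUSE_SECONDS = 3.0  # pause between actions, as in the evaluation

Point = Tuple[int, int]


@dataclass
class Action:
    kind: str         # "click" or "done" in this sketch (hypothetical vocabulary)
    target: str = ""  # natural-language description of the element to click


def run_episode(
    observe: Callable[[], bytes],
    plan: Callable[[bytes], Action],
    ground: Callable[[bytes, str], Optional[Point]],
    execute: Callable[[Point], None],
    max_steps: int = MAX_STEPS,
    pause: float = PAUSE_SECONDS,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Planner/grounder loop; returns True if the planner declares the task done.

    Whether the task actually succeeded is decided afterwards by OS World's checker.
    """
    for _ in range(max_steps):
        screenshot = observe()
        action = plan(screenshot)  # planner (GPT-5 in the paper) picks the next step
        if action.kind == "done":
            return True
        if action.kind == "click":
            point = ground(screenshot, action.target)  # grounder localizes the target
            if point is not None:
                execute(point)
        sleep(pause)  # wait between actions
    return False  # step budget exhausted
```

Passing `sleep` as a parameter lets tests run the loop without actually waiting.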

Each model was run three times on a fixed OS World snapshot. Gelato-30B-A3B reached an automated success rate of 58.71%, compared with 56.97% for GTA1-32B under identical conditions, a margin of 1.74 percentage points. Because automated evaluation can miss valid solutions, the team also ran a human evaluation on 20 challenging tasks. With human judgment included, Gelato's success rate rises to 61.85% versus 59.47% for GTA1-32B, a margin of 2.38 percentage points, so Gelato keeps its lead under both evaluation methods.
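Scoring repeated runs and folding in human verdicts might be done as in the sketch below. This is an assumed procedure, not the authors' evaluation code. Per-task scores in [0, 1] are averaged into a success rate, and human judgments replace the automated score for the tasks they cover. The per-run rates are then summarized by their mean and sample standard deviation. Function names are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List, Optional, Tuple


def success_rate(
    outcomes: Dict[str, float], human_overrides: Optional[Dict[str, float]] = None
) -> float:
    """Percentage success over tasks; per-task scores are in [0, 1].

    human_overrides replaces the automated score for the tasks a human re-judged.
    """
    merged = dict(outcomes)
    for task, score in (human_overrides or {}).items():
        if task in merged:  # ignore verdicts for tasks not in this run
            merged[task] = score
    return 100.0 * sum(merged.values()) / len(merged)


def summarize_runs(rates: List[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of per-run success rates."""
    return mean(rates), stdev(rates)
```

With only three runs per model, the standard deviation is a rough indicator of run-to-run noise, not a confidence interval.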

Summary of Key Insights

- Gelato-30B-A3B, a mixture-of-experts model fine-tuned from Qwen3-VL-30B-A3B-Instruct, outperforms both GTA1-32B and the much larger Qwen3-VL-235B-A22B-Instruct on the ScreenSpot Pro and OS-World-G grounding benchmarks.

- The model is trained on Click 100k, a filtered dataset that merges multiple public GUI datasets with annotated professional tutorial videos, pairing real screenshots with natural language commands and exact click coordinates.

- Training uses GRPO reinforcement learning with sparse rewards, granted only when the predicted click lands inside the target box. The best checkpoint comes after just 84 steps.

- Deployed in an agent framework with GPT-5 as the planner, Gelato-30B-A3B achieves higher success rates than GTA1-32B on OS World computer-use tasks. This suggests that better grounding translates into better end-to-end agent performance.

Final Thoughts

Gelato-30B-A3B is a significant step forward for grounded AI on computer interfaces. It combines a Qwen3-VL-based mixture-of-experts model with the rigorously filtered Click 100k dataset. With this combination, it surpasses prior state-of-the-art models such as GTA1-32B, and even larger vision-language models, on key GUI grounding benchmarks. The model is available on Hugging Face, which makes it a strong open baseline for further research on computer grounding.