For large language models (LLMs), the primary bottleneck has shifted from training to serving tokens efficiently under real-world traffic. Serving performance hinges on three implementation details: how the runtime batches requests, how it overlaps the prefill and decode phases, and how it stores and reuses the key-value (KV) cache. Inference engines make different choices on each of these, and those choices directly affect throughput (tokens per second), latency percentiles (P50/P99), and GPU memory consumption.
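To make the three metrics concrete, here is a minimal sketch of how they might be computed from per-request timestamps. The field names (`start`, `first_token`, `end`, `output_tokens`) are hypothetical, and the nearest-rank percentile is only one of several common definitions.

```python
import math

def percentile(values, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]

def summarize(requests):
    """requests: list of dicts with hypothetical keys
    'start', 'first_token', 'end' (seconds) and 'output_tokens'."""
    ttft = [r["first_token"] - r["start"] for r in requests]
    latency = [r["end"] - r["start"] for r in requests]
    # Wall-clock span of the whole run, used for aggregate throughput.
    wall = max(r["end"] for r in requests) - min(r["start"] for r in requests)
    total_tokens = sum(r["output_tokens"] for r in requests)
    return {
        "tokens_per_second": total_tokens / wall,
        "ttft_p50": percentile(ttft, 50),
        "latency_p50": percentile(latency, 50),
        "latency_p99": percentile(latency, 99),
    }
```

Throughput is measured over the whole run, while TTFT and latency are per-request distributions, which is why a configuration can improve one and hurt the other.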

Top Six LLM Inference Runtimes in 2025: An In-Depth Analysis

This review examines six prominent inference runtimes frequently deployed in production environments:

- vLLM

- TensorRT LLM

- Hugging Face Text Generation Inference (TGI v3)

- LMDeploy

- SGLang

- DeepSpeed Inference / ZeRO Inference

vLLM: Efficient KV Management with PagedAttention

Architecture Overview

vLLM introduces PagedAttention, a novel approach that segments the KV cache into fixed-size blocks rather than allocating a large contiguous buffer per sequence. This design employs an indirection layer, allowing each sequence to reference a list of KV blocks.
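The indirection layer can be pictured as a per-sequence block table. The following is a toy sketch of that idea, not vLLM's actual allocator; the class and method names are made up for illustration.

```python
class PagedKVAllocator:
    """Toy block allocator: each sequence maps to a list of physical block ids."""

    def __init__(self, num_blocks, block_size=16):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))
        self.block_tables = {}   # seq_id -> list of physical block ids
        self.seq_lens = {}       # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve a slot for one more token; returns (physical_block, offset)."""
        length = self.seq_lens.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length % self.block_size == 0:   # last block is full, or no block yet
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1
        return table[length // self.block_size], length % self.block_size

    def free(self, seq_id):
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)
```

Because blocks are allocated on demand, the only unused slots in this sketch are those in the last, partially filled block of each sequence.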

Advantages

- Reduces KV cache waste to under 4%, compared with the 60-80% of KV cache memory that contiguous per-sequence allocation can lose to fragmentation and over-reservation.

- Enables high GPU utilization through continuous batching.

- Supports prefix sharing and KV reuse at the block level, enhancing efficiency for repeated contexts.
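Continuous batching, mentioned in the list above, can be illustrated with a small simulation. This is a simplified sketch that assumes every running request emits exactly one token per step; it is not vLLM's scheduler, and `continuous_batching` is a hypothetical helper.

```python
from collections import deque

def continuous_batching(requests, max_batch):
    """requests: list of (request_id, tokens_to_generate).
    Returns the batch composition at each decode step."""
    waiting = deque(requests)
    running = {}          # request_id -> tokens still to generate
    steps = []
    while waiting or running:
        # Admit new requests as soon as a slot is free (iteration-level scheduling).
        while waiting and len(running) < max_batch:
            rid, n = waiting.popleft()
            running[rid] = n
        steps.append(sorted(running))
        # One decode step: every running request emits one token.
        for rid in list(running):
            running[rid] -= 1
            if running[rid] <= 0:
                del running[rid]   # finished requests leave immediately
    return steps
```

With requests `[("a", 1), ("b", 3), ("c", 2)]` and `max_batch=2`, request `c` takes over the slot of `a` after the first step, so the run finishes in 3 steps. A static batch of `a` and `b` followed by `c` would need 5.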

Recent updates incorporate FP8 KV quantization and integrate FlashAttention-style kernels, further boosting performance.

Performance Highlights

Benchmarks reveal that vLLM delivers 14 to 24 times greater throughput compared to Hugging Face Transformers and outperforms early versions of TGI by 2.2 to 3.5 times on LLaMA models running on NVIDIA GPUs.

Memory and KV Cache Behavior

- The paged KV layout is optimized for GPU memory access patterns and reduces fragmentation.

- FP8 KV quantization halves KV cache size relative to FP16, which improves decode throughput when decoding is limited by memory bandwidth rather than compute.
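The effect of KV precision on capacity is simple arithmetic. The sketch below uses a hypothetical model shape chosen only for illustration and ignores the small overhead of quantization scale factors.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_elem):
    # Factor 2 covers one key and one value vector per layer and KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem

def max_cached_tokens(budget_bytes, bytes_per_token):
    return budget_bytes // bytes_per_token

# Hypothetical model shape: 32 layers, 8 KV heads, head dimension 128.
fp16 = kv_bytes_per_token(32, 8, 128, bytes_per_elem=2)   # 131072 bytes = 128 KiB
fp8 = kv_bytes_per_token(32, 8, 128, bytes_per_elem=1)    # 65536 bytes = 64 KiB

budget = 8 * 1024**3  # assume 8 GiB of GPU memory reserved for KV cache
tokens_fp16 = max_cached_tokens(budget, fp16)   # 65536 tokens
tokens_fp8 = max_cached_tokens(budget, fp8)     # 131072 tokens
```

Under these assumptions, the same memory budget holds twice as many cached tokens at FP8, which translates into larger batches or longer contexts.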

Ideal Use Cases

vLLM is well-suited as a versatile, high-performance backend for general-purpose LLM serving, offering balanced throughput, time-to-first-token (TTFT), and compatibility across diverse hardware.

TensorRT LLM: NVIDIA-Optimized Compilation and KV Reuse

Design Principles

Built atop NVIDIA’s TensorRT, TensorRT LLM compiles model-specific fused kernels tailored to exact model architectures and input shapes. It exposes an executor API utilized by platforms like Triton.

KV Cache Innovations

- Supports paged KV caches for efficient memory management.

- Offers quantized KV caches in INT8 and FP8 formats, with ongoing enhancements.

- Implements circular buffer KV caches for continuous token streaming.

- Enables KV cache reuse, including offloading KV data to CPU memory, to reduce TTFT.

NVIDIA reports that offloading KV cache to CPU memory for reuse can reduce TTFT by up to 14 times on H100 GPUs, with even greater improvements on GH200 hardware under specific workloads.
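The reuse-with-offload idea can be sketched as a two-tier cache in which blocks evicted from the GPU tier move to host memory instead of being discarded. This is a conceptual toy, not the TensorRT LLM API; `TwoTierKVCache` and its methods are hypothetical names.

```python
from collections import OrderedDict

class TwoTierKVCache:
    """Toy model: 'gpu' holds a bounded number of blocks in LRU order;
    evicted blocks move to 'host' instead of being dropped."""

    def __init__(self, gpu_capacity):
        self.gpu_capacity = gpu_capacity
        self.gpu = OrderedDict()   # key -> KV payload, least recently used first
        self.host = {}             # key -> KV payload offloaded from the GPU tier

    def put(self, key, kv):
        self.gpu[key] = kv
        self.gpu.move_to_end(key)
        while len(self.gpu) > self.gpu_capacity:
            old_key, old_kv = self.gpu.popitem(last=False)
            self.host[old_key] = old_kv      # offload rather than discard

    def get(self, key):
        """Returns (kv, source) where source is 'gpu', 'host', or 'miss'."""
        if key in self.gpu:
            self.gpu.move_to_end(key)
            return self.gpu[key], "gpu"
        if key in self.host:
            kv = self.host.pop(key)
            self.put(key, kv)                # copy back instead of recomputing prefill
            return kv, "host"
        return None, "miss"
```

A `"host"` hit still pays for a transfer, but it avoids rerunning prefill for that prefix, which is where the TTFT savings would come from.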

Performance Characteristics

- Exhibits extremely low latency for single requests when engines are finely tuned for the target model.

- Offers tunable trade-offs between low TTFT and high throughput at moderate concurrency levels.

- At very high concurrency, throughput-optimized configurations may increase P99 latency due to aggressive batching.

Memory and KV Management

- Combines paged and quantized KV caches to optimize memory footprint and bandwidth.

- Provides APIs for cache-aware routing, enabling sophisticated application-level memory management.

Best Fit Scenarios

TensorRT LLM excels in latency-sensitive applications within NVIDIA-exclusive environments where teams can dedicate resources to engine compilation and model-specific tuning.

Hugging Face TGI v3: Robust Long-Context Handling for Chat Workloads

System Architecture

Text Generation Inference (TGI) is a server-oriented stack featuring:

- A Rust-based HTTP and gRPC server.

- Continuous batching, streaming capabilities, and safety hooks.

- Backends supporting PyTorch and TensorRT, with seamless Hugging Face Hub integration.

TGI v3 introduces a specialized long-context pipeline with:

- Chunked prefill to efficiently process lengthy inputs.

- Prefix KV caching to avoid recomputing extensive conversation histories.
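Chunked prefill can be sketched as splitting a long prompt across several steps under a per-step token budget, so that requests already decoding keep making progress. The function below is a generic illustration with hypothetical names and is not TGI's scheduler.

```python
def plan_prefill_chunks(prompt_len, num_decoding, token_budget):
    """Split one long prompt across steps, reserving one token per step
    for each request that is already decoding. Returns prefill chunk sizes."""
    room = token_budget - num_decoding
    if room <= 0:
        raise ValueError("token budget leaves no room for prefill")
    chunks = []
    remaining = prompt_len
    while remaining > 0:
        take = min(room, remaining)
        chunks.append(take)
        remaining -= take
    return chunks
```

For example, a 1000-token prompt with 56 active decodes and a 512-token budget is processed as chunks of 456, 456, and 88 tokens rather than one large step that stalls every other request.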

Performance Insights

- For standard prompts at high concurrency, vLLM often edges out TGI in raw tokens per second, but the margin is modest.

- For extended prompts with long histories, TGI v3 is reported to handle approximately 3 times more tokens and respond up to 13 times faster than vLLM under a specific benchmark setup, thanks to prefix caching.

Latency Profile

- P50 latency for short to medium prompts is comparable between TGI v3 and vLLM when both utilize continuous batching.

- For long chat sessions, TGI v3’s prefix reuse significantly reduces TTFT and median latency.
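One common way to implement prefix reuse is to key each KV block by a hash chained over all tokens before it, so a new turn can look up how much of its history is already cached. The sketch below shows that general technique under assumed names and a tiny block size; it is not a description of TGI's internals.

```python
import hashlib

BLOCK = 4  # tokens per block; tiny so the example is easy to follow

def block_keys(tokens):
    """Chain hashes so a block's key depends on every token before it.
    Only full blocks get a key."""
    keys, prev = [], b""
    full = len(tokens) - len(tokens) % BLOCK
    for i in range(0, full, BLOCK):
        data = prev + repr(tokens[i:i + BLOCK]).encode()
        prev = hashlib.sha256(data).digest()
        keys.append(prev)
    return keys

def cached_prefix_len(tokens, cache):
    """Number of leading tokens whose KV blocks are already in `cache` (a set of keys)."""
    hit = 0
    for key in block_keys(tokens):
        if key not in cache:
            break
        hit += BLOCK
    return hit
```

If the first turn of a chat has 10 tokens, its two full blocks are cached. A follow-up turn that appends new tokens to the same history then only needs prefill for the tokens after position 8.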

KV Cache and Memory Management

- Employs paged attention kernels and chunked prefill to minimize memory usage.

- Supports quantization techniques such as bitsandbytes and GPTQ, compatible with multiple hardware backends.

Recommended Use Cases

Ideal for production environments already integrated with Hugging Face, especially for conversational AI applications requiring efficient handling of long dialogue histories.

LMDeploy: High Throughput with TurboMind and Quantized KV

Framework Overview

LMDeploy, part of the InternLM ecosystem, offers two inference engines:

- TurboMind: High-performance CUDA kernels optimized for NVIDIA GPUs.

- PyTorch engine: A flexible fallback option.

Key Runtime Features

- Persistent, continuous batching to maximize GPU utilization.

- Blocked KV cache with dynamic allocation and reuse management.

- Dynamic splitting and fusion of attention blocks.

- Tensor parallelism support.

- Weight-only and KV quantization, including AWQ and online INT8/INT4 KV quantization.
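As an illustration of what INT8 KV quantization involves, here is a minimal symmetric absmax scheme in plain Python. It is a generic textbook sketch and an assumption on our part; LMDeploy's kernels may use a different granularity or an asymmetric scheme.

```python
def quantize_int8(vec):
    """Symmetric absmax quantization of one vector to int8 values plus a float scale."""
    amax = max(abs(x) for x in vec)
    scale = amax / 127 if amax > 0 else 1.0
    q = [max(-127, min(127, round(x / scale))) for x in vec]
    return q, scale

def dequantize_int8(q, scale):
    """Recover approximate float values from int8 codes."""
    return [v * scale for v in q]
```

Each stored element shrinks from 2 bytes (FP16) to 1 byte plus a shared scale, at the cost of a rounding error of at most half a quantization step per element.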

Performance Metrics

- Vendor benchmarks indicate LMDeploy can achieve up to 1.8 times the throughput of vLLM, particularly for 4-bit LLaMA-style models on NVIDIA A100 GPUs.

- 4-bit inference runs approximately 2.4 times faster than FP16 for supported models.

Latency Considerations

- Time-to-first-token (TTFT) for single requests is competitive with other optimized GPU runtimes when batch sizes are moderate.

- Under heavy load, persistent batching combined with blocked KV caching sustains high throughput without a collapse in TTFT.

KV Cache and Memory Strategy

- Blocked KV cache divides KV storage into manageable chunks, akin to vLLM’s PagedAttention but with a distinct internal structure.

- Extensive support for weight and KV quantization enables deployment of large models on GPUs with limited memory.

Optimal Deployment Scenarios

Best suited for NVIDIA-centric environments prioritizing maximum throughput and willing to adopt LMDeploy’s specialized tooling and TurboMind kernels.

SGLang: Structured LLM Programs with RadixAttention

Conceptual Framework

SGLang serves dual roles:

- A domain-specific language (DSL) for constructing structured LLM workflows such as agents, retrieval-augmented generation (RAG), and tool pipelines.

- A runtime implementing RadixAttention, a KV reuse mechanism that organizes KV caches in a radix tree keyed by token prefixes rather than simple block hashes.
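The prefix-tree idea behind RadixAttention can be sketched with a per-token trie. This is a simplification: a real radix tree merges single-child chains into one edge, and SGLang's runtime also handles eviction and scheduling, none of which is shown. All names here are illustrative.

```python
class PrefixTreeNode:
    def __init__(self):
        self.children = {}   # token id -> PrefixTreeNode
        self.kv = None       # placeholder for this token's KV entry

class PrefixTree:
    """Per-token trie over token ids; shared prefixes share nodes."""

    def __init__(self):
        self.root = PrefixTreeNode()

    def insert(self, tokens):
        node = self.root
        for pos, tok in enumerate(tokens):
            node = node.children.setdefault(tok, PrefixTreeNode())
            if node.kv is None:
                node.kv = ("kv", pos, tok)   # stand-in for real key/value tensors

    def match_prefix(self, tokens):
        """Length of the longest cached prefix of `tokens`."""
        node, matched = self.root, 0
        for tok in tokens:
            node = node.children.get(tok)
            if node is None:
                break
            matched += 1
        return matched
```

A new request only needs prefill for the tokens after `match_prefix(...)`, and two requests that share a system prompt or few-shot examples store that prefix once.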

RadixAttention Benefits

- Efficiently stores KV caches for multiple requests sharing common prefixes.

- Achieves high KV cache hit rates in scenarios with repeated context, such as multi-turn conversations or few-shot prompting.

Performance Gains

- Demonstrates up to 6.4 times higher throughput and 3.7 times lower latency compared to baseline systems like vLLM and LMQL on structured workloads.

- Cache hit rates range from approximately 50% to 99%, with cache-aware schedulers approaching optimal reuse.

KV and Memory Handling

- Builds on paged attention kernels but emphasizes KV reuse over mere allocation.

- Integrates with hierarchical context caching systems that transfer KV data between GPU and CPU for long sequences, often implemented as separate modules.

Use Case Recommendations

Ideal for agent-based systems, complex tool chains, and RAG applications where extensive prefix sharing and KV reuse at the application level are critical.

DeepSpeed Inference and ZeRO Inference: Scaling Large Models Beyond GPU Memory

Core Components

- DeepSpeed Inference: Provides optimized transformer kernels with tensor and pipeline parallelism.

- ZeRO Inference / ZeRO Offload: Techniques to offload model weights and sometimes KV cache to CPU or NVMe storage, enabling inference of very large models on GPUs with limited memory.

ZeRO Inference Focus

- Minimizes GPU-resident model weights.

- Streams tensors dynamically from CPU or NVMe as needed.

- Prioritizes throughput and model size over low latency.
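The streaming pattern described above can be sketched as a loop that fetches one layer at a time and applies it to the whole batch before fetching the next. This is a conceptual toy in plain Python, not the DeepSpeed API; `load` stands in for a CPU or NVMe to GPU copy.

```python
def stream_layers(layer_keys, batch, load):
    """layer_keys: identifiers for weights living on CPU or NVMe.
    load(key) returns a callable layer; it stands in for a host-to-GPU copy."""
    loads = 0
    for key in layer_keys:
        layer = load(key)                   # fetch this layer's weights on demand
        loads += 1
        batch = [layer(x) for x in batch]   # reuse the fetched weights for the whole batch
        del layer                           # drop the GPU copy before the next fetch
    return batch, loads
```

The number of fetches depends on the number of layers, not on the batch size, which is why this style of inference favors large batches and throughput over per-request latency.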

Performance Overview

For example, running the OPT 30B model on a single NVIDIA V100 32GB GPU:

- Full CPU offload achieves approximately 43 tokens per second.

- Full NVMe offload reaches about 30 tokens per second.

- Both outperform partial offload setups by 1.3 to 2.4 times, because moving all weights off the GPU frees memory for larger batch sizes.
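A back-of-the-envelope model shows why batch size dominates offloaded throughput. The sketch assumes every decode step streams all weights once and that compute is fully hidden behind the transfer. The bandwidth figure is hypothetical, and the model is not meant to reproduce the measured numbers above.

```python
def offload_tokens_per_second(weight_bytes, bandwidth_bytes_per_s, batch_size):
    """Upper bound when each decode step must stream all weights once
    and produces one token per sequence in the batch."""
    seconds_per_step = weight_bytes / bandwidth_bytes_per_s
    return batch_size / seconds_per_step

weights = 60e9     # about 30B parameters at 2 bytes each (FP16)
bandwidth = 12e9   # hypothetical effective host-to-GPU bandwidth in bytes/s

small_batch = offload_tokens_per_second(weights, bandwidth, batch_size=8)    # 1.6 tokens/s
large_batch = offload_tokens_per_second(weights, bandwidth, batch_size=64)   # 12.8 tokens/s
```

In this model the per-step cost is fixed by the transfer, so throughput scales linearly with batch size until GPU memory or compute becomes the limit.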

While these speeds are modest compared to GPU-resident runtimes on A100 or H100 GPUs, they enable inference on models that exceed native GPU memory capacity.

Recent I/O profiling studies highlight that offload-based systems are bottlenecked by frequent small (128 KiB) reads, making I/O throughput a critical factor.

KV and Memory Management

- Model weights and occasionally KV cache blocks are offloaded to CPU or SSD storage.

- TTFT and P99 latency are higher relative to fully GPU-resident engines, but this tradeoff allows running extremely large models.

Appropriate Use Cases

Best suited for offline or batch inference, or for low-QPS (queries per second) services where fitting a very large model matters more than latency or GPU count.

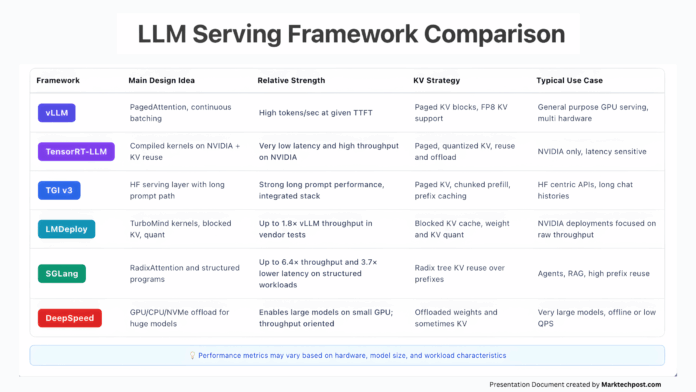

Summary Table: Key Features and Use Cases of Leading LLM Runtimes

| Runtime | Core Design | Strengths | KV Cache Strategy | Typical Applications |
|---|---|---|---|---|
| vLLM | PagedAttention with continuous batching | High throughput with balanced TTFT | Paged KV blocks, FP8 quantization | General GPU serving, multi-hardware support |
| TensorRT LLM | Compiled fused kernels on NVIDIA GPUs | Extremely low latency, high throughput | Paged, quantized KV with reuse and offload | NVIDIA-only, latency-critical workloads |
| Hugging Face TGI v3 | Server stack with long prompt optimization | Superior long-context performance | Paged KV, chunked prefill, prefix caching | Hugging Face ecosystem, chat applications |
| LMDeploy | TurboMind kernels, blocked KV cache | Up to 1.8× vLLM throughput in tests | Blocked KV, weight and KV quantization | NVIDIA-focused, throughput-optimized deployments |
| SGLang | RadixAttention and structured LLM programs | Up to 6.4× throughput, 3.7× lower latency on structured tasks | Radix tree KV reuse over prefixes | Agentic systems, RAG, tool pipelines |
| DeepSpeed Inference | GPU/CPU/NVMe offload for massive models | Enables very large models on limited GPUs | Offloaded weights and KV cache | Offline/batch inference, low QPS, large models |

Practical Guidance for Selecting an LLM Inference Runtime

When choosing an inference runtime for production, consider the following common scenarios:

- Seeking a robust, out-of-the-box engine: vLLM offers solid throughput, reasonable TTFT, and flexible hardware compatibility with minimal customization.

- Operating exclusively on NVIDIA hardware with latency sensitivity: TensorRT LLM provides fine-grained control over latency and KV cache, though it requires model-specific tuning and engine compilation.

- Already integrated with Hugging Face and handling long conversational contexts: TGI v3 excels with its long prompt pipeline and prefix caching.

- Maximizing throughput on NVIDIA GPUs with quantized models: LMDeploy with TurboMind and blocked KV caching is a strong candidate.

- Developing agentic workflows, tool chains, or RAG systems with heavy prefix reuse: SGLang leverages RadixAttention for efficient KV reuse.

- Running extremely large models on limited GPU memory: DeepSpeed Inference / ZeRO Inference enables this at the cost of higher latency, using CPU and NVMe offloading.

Ultimately, the consensus across these runtimes is clear: the KV cache is the critical bottleneck resource. The most effective engines treat the KV cache as a sophisticated data structure (paged, quantized, reused, and offloaded) rather than a monolithic tensor simply loaded into GPU memory.