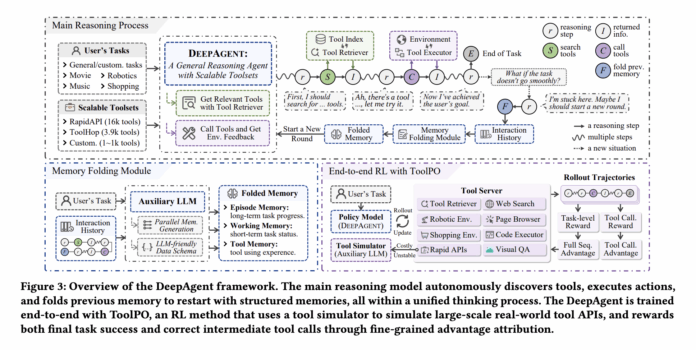

Traditional agent frameworks typically run a fixed Reason, Act, Observe cycle, which restricts them to the tools predefined in their prompts. This approach suffices for straightforward tasks but struggles with large toolsets, long-running tasks, or scenarios that require a change of strategy mid-reasoning. To address these challenges, researchers from Renmin University of China and Xiaohongshu introduce DeepAgent, a deep reasoning agent that brings autonomous thinking, tool discovery, and action execution into a single reasoning process.

Dynamic Tool Discovery Through Integrated Reasoning

DeepAgent lets the model generate four distinct action types as text: internal reflection, tool search, tool invocation, and memory consolidation. When it chooses to search, the agent queries a dense index built from the descriptions in large tool repositories, with over 16,000 tools from RapidAPI and nearly 4,000 from ToolHop. Retrieval returns only the highest-ranked tools for the current context, so the agent can access tools on demand instead of relying on a static, preloaded list. This flexibility matches real-world environments, where the set of available tools changes frequently.
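
The search step described above can be illustrated with a minimal sketch. It is not DeepAgent's retriever: the tool names and descriptions are hypothetical, and a bag-of-words count vector stands in for a learned dense encoder so that the example runs with the standard library alone. Only the shape of the operation (embed the query, rank tool descriptions by similarity, return the top few) follows the text.

```python
import math
from collections import Counter

# Hypothetical tool descriptions; a real index would hold thousands of entries.
TOOLS = {
    "weather_lookup": "current weather forecast for a city",
    "movie_search": "search movie by title and return cast and rating",
    "playlist_create": "create music playlist and add songs",
}

def embed(text: str) -> Counter:
    """Toy stand-in for a dense encoder: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Build the index once, ahead of time.
INDEX = {name: embed(desc) for name, desc in TOOLS.items()}

def search_tools(query: str, top_k: int = 2) -> list[str]:
    """Return the names of the top_k tools most similar to the query."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda name: cosine(q, INDEX[name]), reverse=True)
    return ranked[:top_k]

print(search_tools("movie cast and rating", top_k=1))
```

Because only the top-ranked names come back, the prompt grows with `top_k` rather than with the size of the registry, which is the property the article attributes to on-demand discovery.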

Efficient Memory Management for Extended Reasoning

Long sequences of tool interactions, web data, and code outputs can overflow the agent’s context window. DeepAgent tackles this with an autonomous memory folding mechanism. When the agent emits a special fold token, an auxiliary large language model condenses the entire interaction history into three structured memory types: Episodic Memory, which captures task events; Working Memory, which tracks current subgoals and recent challenges; and Tool Memory, which documents tool usage details such as names, parameters, and results. These compressed memories are fed back as structured text, so the agent keeps a rich yet compact state for ongoing reasoning.
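
One way to picture the folded state is as a small data structure that renders to text. The sketch below is an assumption-laden illustration: the `<fold>` marker, the field layout, the section labels, and the `stub_summarize` function (which stands in for the auxiliary language model) are all hypothetical and not taken from the paper.

```python
from dataclasses import dataclass, field

FOLD_TOKEN = "<fold>"  # hypothetical marker; the actual token is not given in the article

@dataclass
class FoldedMemory:
    episodic: list[str] = field(default_factory=list)  # key task events
    working: list[str] = field(default_factory=list)   # current subgoals, recent challenges
    tool: list[dict] = field(default_factory=list)     # tool name, parameters, result

    def render(self) -> str:
        """Serialize the three memory types as structured text."""
        lines = ["[Episodic Memory]"]
        lines += [f"- {e}" for e in self.episodic]
        lines.append("[Working Memory]")
        lines += [f"- {w}" for w in self.working]
        lines.append("[Tool Memory]")
        lines += [f"- {t['name']}({t['params']}) -> {t['result']}" for t in self.tool]
        return "\n".join(lines)

def maybe_fold(history: list[str], summarize) -> list[str]:
    """If the latest step is the fold token, replace the history with compact memory."""
    if history and history[-1] == FOLD_TOKEN:
        memory = summarize(history[:-1])
        return [memory.render()]
    return history

def stub_summarize(history: list[str]) -> FoldedMemory:
    """Stand-in for the auxiliary LLM; returns fixed, illustrative content."""
    return FoldedMemory(
        episodic=[f"{len(history)} earlier steps completed"],
        working=["next: compare results"],
        tool=[{"name": "movie_search", "params": "title='Heat'", "result": "rating found"}],
    )

folded = maybe_fold(["step one", "step two", FOLD_TOKEN], stub_summarize)
print(folded[0])
```

After a fold, the context holds a single structured entry instead of the full transcript, and reasoning continues from there.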

Tool Policy Optimization: Reinforcement Learning for Precise Tool Utilization

Supervised learning alone falls short in teaching robust tool usage, because correct tool calls make up only a small fraction of generated tokens. To overcome this, the team developed Tool Policy Optimization (ToolPO), a reinforcement learning approach that performs rollouts on APIs simulated by large language models, which keeps training stable and inexpensive. ToolPO also attributes rewards directly to the specific tokens responsible for tool calls, a technique known as tool call advantage attribution. It trains the agent not only to execute tool calls accurately but also to decide when to search for tools or fold memory.
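
The idea of token-level attribution can be sketched with a mask over the generated sequence. This is a simplified illustration, not the ToolPO objective: the additive combination of a task-level term and a tool-call term, the `<call>` markers, and the numeric values are all assumptions made for the example.

```python
def attribute_advantages(tool_call_mask: list[bool],
                         global_adv: float,
                         tool_adv: float) -> list[float]:
    """Per-token advantages under an assumed additive scheme:
    every token receives the task-level term, and only tokens inside
    a tool call additionally receive the tool-call term."""
    return [global_adv + (tool_adv if is_call else 0.0) for is_call in tool_call_mask]

# Hypothetical token sequence; True marks tokens that belong to a tool call.
tokens = ["I", "need", "weather", "<call>", "weather_lookup", "</call>", "done"]
mask = [False, False, False, True, True, True, False]

advantages = attribute_advantages(mask, global_adv=0.25, tool_adv=0.5)
for tok, adv in zip(tokens, advantages):
    print(f"{tok:>15}  {adv:.2f}")
```

Under this scheme the learning signal for a good or bad tool call lands on the few tokens that produced it, rather than being averaged over the whole trajectory.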

Comprehensive Benchmarking: Labeled Tools Versus Open-Set Retrieval

The researchers evaluated DeepAgent on five general tool usage benchmarks (ToolBench, API Bank, TMDB, Spotify, and ToolHop) and four complex downstream tasks (ALFWorld, WebShop, GAIA, and HLE). In the labeled tool setting, where agents are given the exact tools each task requires, DeepAgent with a 32-billion-parameter backbone scored 69.0 on ToolBench, 75.3 on API Bank, 89.0 on TMDB, 75.4 on Spotify, and 51.3 on ToolHop. These are the strongest reported results at this scale on all five datasets. Workflow-based methods such as ReAct and CodeAct do well on individual datasets (ReAct, for example, performs well on TMDB and Spotify), but none stays consistently high across all five, which highlights DeepAgent’s uniformity.

In the more realistic open-set retrieval setting, where the agent must first discover tools and then use them, DeepAgent still leads, scoring 64.0 on ToolBench and 40.6 on ToolHop. Competing workflow baselines lag behind at 55.0 and 36.2 respectively. The study also shows that autonomous tool retrieval improves the performance of workflow agents, but DeepAgent benefits even more, which points to the synergy between its architecture and a training method tailored to large-scale toolsets.
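
The reported figures can be compared directly. The short calculation below uses only the scores quoted in this article for ToolBench and ToolHop; the variable names are illustrative, and the baseline numbers are the workflow-baseline scores cited above.

```python
# Scores quoted in the article for DeepAgent (32B) and the workflow baselines.
labeled = {"ToolBench": 69.0, "ToolHop": 51.3}        # tools given up front
open_set = {"ToolBench": 64.0, "ToolHop": 40.6}       # tools must be discovered
baseline_open = {"ToolBench": 55.0, "ToolHop": 36.2}  # workflow baselines, open-set

# Lead over the workflow baselines in the open-set setting.
margin = {k: round(open_set[k] - baseline_open[k], 1) for k in open_set}

# Cost of having to discover tools instead of being handed them.
drop = {k: round(labeled[k] - open_set[k], 1) for k in open_set}

print("open-set lead over baselines:", margin)
print("labeled to open-set drop:", drop)
```

On these numbers the open-set lead is 9.0 points on ToolBench and 4.4 on ToolHop, while moving from labeled to open-set tools costs DeepAgent 5.0 and 10.7 points respectively.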

Performance in Complex Real-World Environments

On the downstream environments, using a 32-billion-parameter reasoning model, DeepAgent achieved a 91.8% success rate on ALFWorld, a 34.4% success rate and an average score of 56.3 on WebShop, and 53.3 on GAIA, and it outperformed workflow agents on HLE. These tasks involve longer, noisier interactions, where the combination of memory folding and ToolPO likely accounts for much of DeepAgent’s advantage.

Summary of Innovations and Advantages

- DeepAgent integrates the entire agent loop into a single reasoning stream, enabling seamless transitions between thinking, tool searching, tool invocation, and memory management without being confined to fixed workflows like ReAct.

- It uses dense retrieval over extensive tool registries (over 16,000 RapidAPI tools and nearly 4,000 ToolHop tools), allowing on-demand tool discovery rather than relying on static prompt lists.

- The autonomous memory folding mechanism compresses lengthy interaction histories into episodic, working, and tool memories, preventing context overflow and supporting stable long-term reasoning.

- Tool Policy Optimization (ToolPO) employs reinforcement learning with simulated APIs and token-level reward attribution, training the agent to execute precise tool calls and make strategic decisions about searching and memory folding.

- Across multiple benchmarks and downstream tasks, DeepAgent at 32B scale demonstrates more consistent and superior performance than workflow-based baselines, especially in scenarios where tool discovery is critical.
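
The single reasoning stream summarized in the list above can be sketched as one loop that dispatches on the action type the model emits. Everything here is hypothetical scaffolding: the action names, the scripted steps that stand in for model output, and the `search`, `call`, and `fold` callbacks are assumptions used to show the control flow, not DeepAgent's interface.

```python
from typing import Callable, Iterable

def run_agent(model_steps: Iterable[tuple[str, str]],
              search: Callable[[str], list[str]],
              call: Callable[[str], str],
              fold: Callable[[list[str]], str]) -> list[str]:
    """Consume (action_type, payload) pairs and maintain the running context."""
    context: list[str] = []
    for kind, payload in model_steps:
        if kind == "think":
            context.append(f"thought: {payload}")
        elif kind == "search":
            context.append(f"tools found: {search(payload)}")
        elif kind == "call":
            context.append(f"result: {call(payload)}")
        elif kind == "fold":
            # Replace the whole history with a compact memory entry.
            context = [fold(context)]
        else:
            raise ValueError(f"unknown action type: {kind}")
    return context

# Scripted steps standing in for what a model might emit.
steps = [
    ("think", "I need a weather tool"),
    ("search", "weather forecast"),
    ("call", "weather_lookup(city='Paris')"),
    ("fold", ""),
    ("think", "answer the user"),
]

final_context = run_agent(
    steps,
    search=lambda query: ["weather_lookup"],
    call=lambda request: "sunny",
    fold=lambda ctx: f"memory: {len(ctx)} steps folded",
)
print(final_context)
```

Unlike a fixed Reason, Act, Observe cycle, nothing in this loop forces an order on the actions; the sequence is whatever the model chooses to emit.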

Concluding Insights

DeepAgent represents a significant advance in agent design: it removes the dependence on fixed tool prompts and unifies autonomous reasoning, dense tool retrieval, structured tool invocation, and memory management within a single loop. The use of LLM-simulated APIs in ToolPO addresses earlier problems with latency and instability when training tool-using agents. Evaluations show consistent improvements at the 32-billion-parameter scale in both labeled and open-set tool scenarios, which makes large-scale tool ecosystems practically accessible to language model agents. This work strengthens the case for end-to-end tool agents, enhanced by memory mechanisms and reinforcement learning, as a leading direction for AI agent architectures.